Carlos Santiago

How to rewrite the stars: Mapping your orchard over time through constellations of fruits

Feb 04, 2026

Abstract:Following crop growth through the vegetative cycle allows farmers to predict fruit setting and yield at early stages, but it is a laborious and non-scalable task when performed by a human who must manually measure fruit sizes with calipers or dendrometers. In recent years, computer vision has been used to automate several tasks in precision agriculture, such as detecting and counting fruits and estimating their size. However, the fundamental problem of matching the exact same fruits from one video, collected on a given date, to the fruits visible in another video, collected on a later date, which is needed to track fruits' growth through time, remains to be solved. A few attempts have been made, but they either assume that the camera always starts from the same known position and that there are sufficiently distinct features to match, or they rely on other sources of data such as GPS. Here we propose a new paradigm to tackle this problem, based on constellations of 3D centroids, and introduce a descriptor for very sparse 3D point clouds that can be used to match fruits across videos. Matching constellations instead of individual fruits is key to dealing with non-rigidity, occlusions and challenging imagery with few distinct visual features to track. The results show that the proposed method can be successfully used to match fruits across videos and through time, and also to build an orchard map and later use it to estimate the 6DoF camera pose, thus providing a method for autonomous navigation of robots in the orchard and for selective fruit picking, for example.
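
To make the idea of matching constellations rather than individual fruits concrete, here is a minimal sketch. It is not the descriptor proposed in the paper, which the abstract does not specify. It assumes a much simpler stand-in: each 3D centroid is described by the sorted distances to its `k` nearest neighbours, which are unchanged by camera rotation and translation. The function names and the toy coordinates are hypothetical.

```python
import math

def descriptor(points, index, k=3):
    """Sorted distances from points[index] to its k nearest neighbours.

    Assumption: a stand-in descriptor for illustration only. Distances are
    invariant to rigid motion, so the same fruit seen from a different
    camera pose gets the same descriptor.
    """
    p = points[index]
    dists = sorted(math.dist(p, q) for j, q in enumerate(points) if j != index)
    return dists[:k]

def match_constellations(points_a, points_b, k=3):
    """For each point in A, return the index of the point in B whose
    descriptor is closest in Euclidean distance."""
    desc_b = [descriptor(points_b, j, k) for j in range(len(points_b))]
    matches = []
    for i in range(len(points_a)):
        d_a = descriptor(points_a, i, k)
        best = min(range(len(desc_b)), key=lambda j: math.dist(d_a, desc_b[j]))
        matches.append(best)
    return matches

def rotate_z_and_shift(p, angle, shift):
    """Apply a rotation about the z axis followed by a translation."""
    x, y, z = p
    c, s = math.cos(angle), math.sin(angle)
    return (c * x - s * y + shift[0], s * x + c * y + shift[1], z + shift[2])

# Toy fruit centroids from a first video (hypothetical values, in metres).
fruits_day1 = [(0.0, 0.0, 0.0), (1.0, 0.2, 0.1), (0.3, 1.5, 0.4),
               (2.0, 1.0, 1.2), (0.5, 0.4, 2.0)]

# Second video: same fruits, different camera frame, detected in another order.
moved = [rotate_z_and_shift(p, 0.7, (3.0, -1.0, 0.5)) for p in fruits_day1]
order = [3, 0, 4, 1, 2]
fruits_day2 = [moved[i] for i in order]

matches = match_constellations(fruits_day1, fruits_day2)
print(matches)  # matches[i] is the index in fruits_day2 of fruit i from day 1
```

This toy setup assumes a perfectly rigid scene with no missing or extra detections. Real orchards violate both assumptions (growth, wind, occlusions), which is the difficulty the abstract highlights.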

Benchmarking 3D Human Pose Estimation Models Under Occlusions

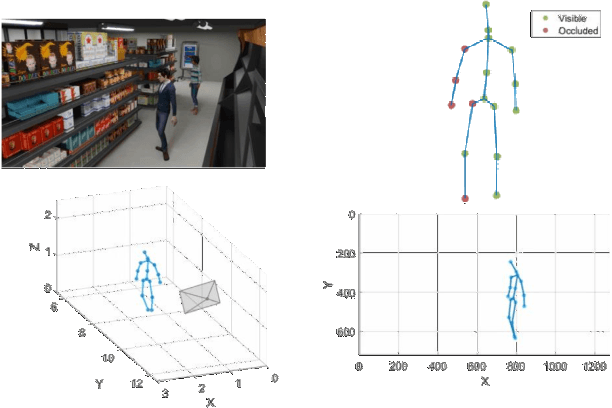

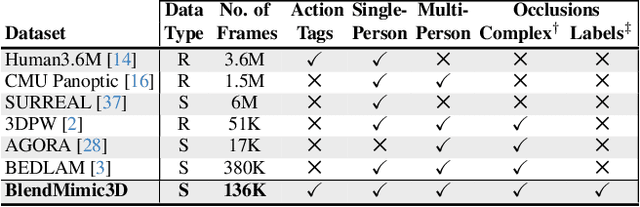

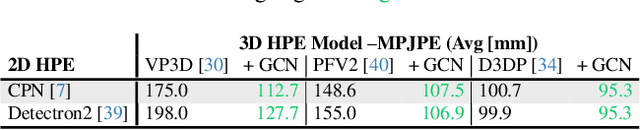

Apr 14, 2025

Abstract:This paper addresses critical challenges in 3D Human Pose Estimation (HPE) by analyzing the robustness and sensitivity of existing models to occlusions, camera position, and action variability. Using a novel synthetic dataset, BlendMimic3D, which includes diverse scenarios with multi-camera setups and several occlusion types, we conduct specific tests on several state-of-the-art models. Our study focuses on the discrepancy in keypoint formats between common 3D datasets such as Human3.6M and 2D datasets such as COCO, which are commonly used to train 2D detection models whose outputs frequently serve as input to 3D HPE models. Our work explores the impact of occlusions on model performance and the generality of models trained exclusively under standard conditions. The findings suggest significant sensitivity to occlusions and camera settings, revealing a need for models that better adapt to real-world variability and occlusion scenarios. This research contributes to ongoing efforts to improve the fidelity and applicability of 3D HPE systems in complex environments.

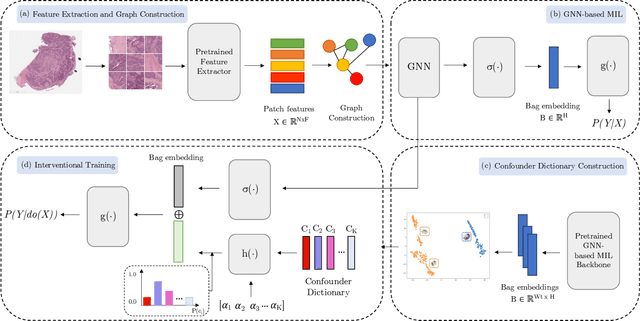

The Role of Graph-based MIL and Interventional Training in the Generalization of WSI Classifiers

Jan 31, 2025

Abstract:Whole Slide Imaging (WSI), which involves high-resolution digital scans of pathology slides, has become the gold standard for cancer diagnosis, but its gigapixel resolution and the scarcity of annotated datasets present challenges for deep learning models. Multiple Instance Learning (MIL), a widely-used weakly supervised approach, bypasses the need for patch-level annotations. However, conventional MIL methods overlook the spatial relationships between patches, which are crucial for tasks such as cancer grading and diagnosis. To address this, graph-based approaches have gained prominence by incorporating spatial information through node connections. Despite their potential, both MIL and graph-based models are vulnerable to learning spurious associations, like color variations in WSIs, affecting their robustness. In this dissertation, we conduct an extensive comparison of multiple graph construction techniques, MIL models, graph-MIL approaches, and interventional training, introducing a new framework, Graph-based Multiple Instance Learning with Interventional Training (GMIL-IT), for WSI classification. We evaluate their impact on model generalization through domain shift analysis and demonstrate that graph-based models alone achieve the generalization initially anticipated from interventional training. Our code is available here: github.com/ritamartinspereira/GMIL-IT

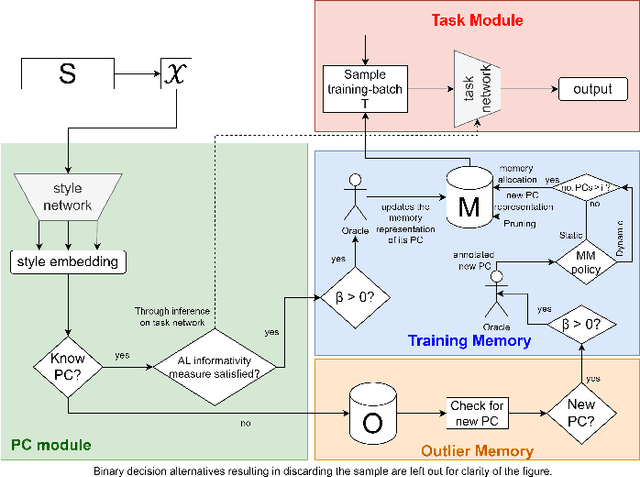

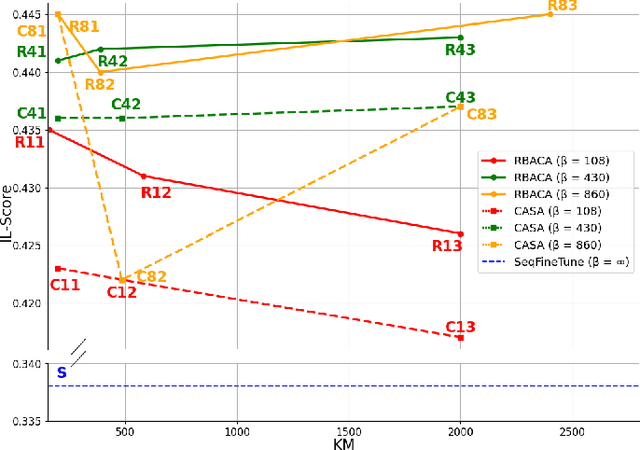

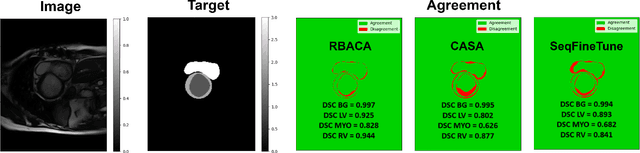

Continual Deep Active Learning for Medical Imaging: Replay-Base Architecture for Context Adaptation

Jan 14, 2025

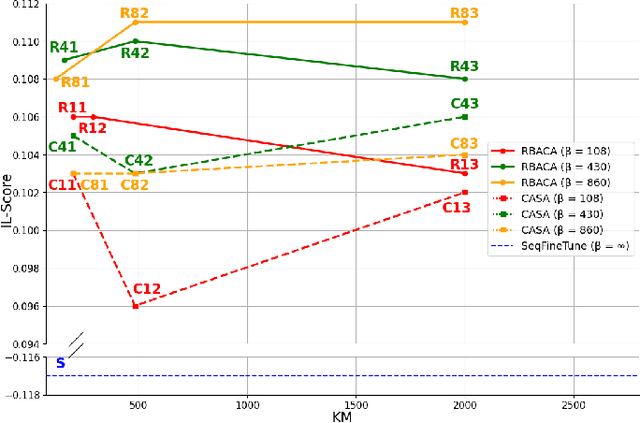

Abstract:Deep Learning for medical imaging faces challenges in adapting and generalizing to new contexts. Additionally, it often lacks sufficient labeled data for specific tasks, which then require significant annotation effort. Continual Learning (CL) tackles adaptability and generalizability by enabling lifelong learning from a data stream while mitigating forgetting of previously learned knowledge. Active Learning (AL) reduces the number of required annotations for effective training. This work explores the combination of both approaches, continual active learning (CAL), to develop a novel framework for robust medical image analysis. Based on the automatic recognition of shifts in image characteristics, Replay-Base Architecture for Context Adaptation (RBACA) employs a CL rehearsal method to continually learn from diverse contexts, and an AL component to select the most informative instances for annotation. A novel approach to evaluating CAL methods is established using a metric called IL-Score, which allows for the simultaneous assessment of transfer learning, forgetting, and final model performance. We show that RBACA works in domain- and class-incremental learning scenarios by assessing its IL-Score on the segmentation and diagnosis of cardiac images. The results show that RBACA outperforms a baseline framework without CAL and a state-of-the-art CAL method across various memory sizes and annotation budgets. Our code is available at https://github.com/RuiDaniel/RBACA.

MMIST-ccRCC: A Real World Medical Dataset for the Development of Multi-Modal Systems

May 02, 2024

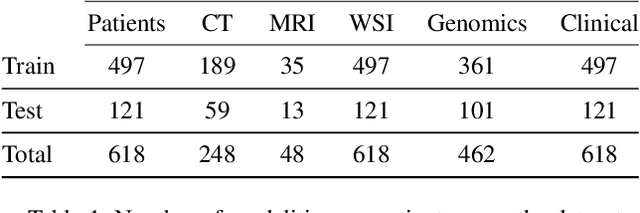

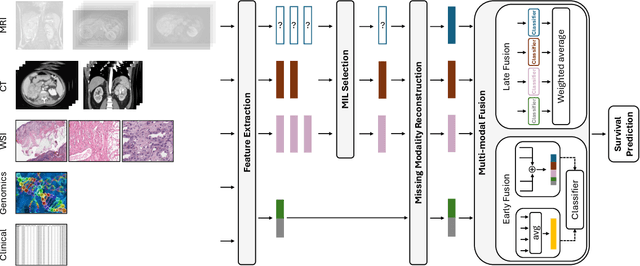

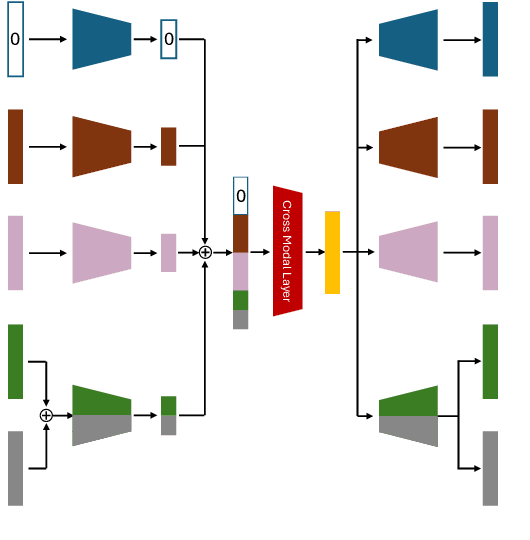

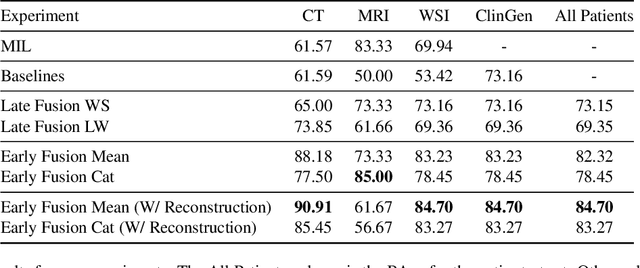

Abstract:The acquisition of different data modalities can enhance our knowledge and understanding of various diseases, paving the way for more personalized healthcare. Thus, medicine is progressively moving towards the generation of massive amounts of multi-modal data (e.g., molecular, radiology, and histopathology). While this may seem like an ideal environment in which to capitalize on data-centric machine learning approaches, most methods still focus on exploring a single modality or a pair of modalities for a variety of reasons: i) lack of ready-to-use curated datasets; ii) difficulty in identifying the best multi-modal fusion strategy; and iii) missing modalities across patients. In this paper we introduce a real-world multi-modal dataset called MMIST-CCRCC that comprises 2 radiology modalities (CT and MRI), histopathology, genomics, and clinical data from 618 patients with clear cell renal cell carcinoma (ccRCC). We provide single and multi-modal (early and late fusion) benchmarks in the task of 12-month survival prediction in the challenging scenario of one or more missing modalities for each patient, with missing rates that range from 26% for genomics data to more than 90% for MRI. We show that even with such severe missing rates the fusion of modalities leads to improvements in survival forecasting. Additionally, incorporating a strategy to generate the latent representations of the missing modalities given the available ones further improves the performance, highlighting a potential complementarity across modalities. Our dataset and code are available here: https://multi-modal-ist.github.io/datasets/ccRCC
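
As an illustration of late fusion when some modalities are missing, the sketch below averages the per-modality survival probabilities that are actually available for a patient and skips the rest. This is an assumed, simplified fusion rule for exposition, not the benchmark implementation from the paper. The function name, the modality keys and the probabilities are hypothetical.

```python
def late_fusion(predictions):
    """Average the per-modality probabilities that are present.

    `predictions` maps a modality name to a probability in [0, 1], or to
    None when that modality was not acquired for the patient.
    Assumption: unweighted averaging; other fusion rules are possible.
    """
    available = [p for p in predictions.values() if p is not None]
    if not available:
        raise ValueError("at least one modality is required")
    return sum(available) / len(available)

# Hypothetical patient with no MRI and no genomics data.
patient = {
    "ct": 0.7,
    "mri": None,
    "histopathology": 0.5,
    "genomics": None,
    "clinical": 0.6,
}

fused = late_fusion(patient)
print(round(fused, 3))
```

Skipping absent modalities is the simplest way to cope with missing data. The abstract reports that generating latent representations for the missing modalities from the available ones improves on this kind of baseline.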

Key Patches Are All You Need: A Multiple Instance Learning Framework For Robust Medical Diagnosis

May 02, 2024

Abstract:Deep learning models have revolutionized the field of medical image analysis, due to their outstanding performances. However, they are sensitive to spurious correlations, often taking advantage of dataset bias to improve results for in-domain data, but jeopardizing their generalization capabilities. In this paper, we propose to limit the amount of information these models use to reach the final classification, by using a multiple instance learning (MIL) framework. MIL forces the model to use only a (small) subset of patches in the image, identifying discriminative regions. This mimics the clinical procedures, where medical decisions are based on localized findings. We evaluate our framework on two medical applications: skin cancer diagnosis using dermoscopy and breast cancer diagnosis using mammography. Our results show that using only a subset of the patches does not compromise diagnostic performance for in-domain data, compared to the baseline approaches. However, our approach is more robust to shifts in patient demographics, while also providing more detailed explanations about which regions contributed to the decision. Code is available at: https://github.com/diogojpa99/MedicalMultiple-Instance-Learning.
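
The idea of classifying an image from only a small subset of its patches can be sketched with top-k pooling, one common MIL pooling choice. The abstract does not state which pooling the paper uses, so treat this as an assumption. The function name and the patch scores are hypothetical.

```python
def topk_mil_score(patch_scores, k):
    """Image-level score from the k highest-scoring patches.

    Returns the mean of the top-k patch scores and the indices of the
    selected "key" patches, which double as an explanation of which
    regions drove the decision.
    """
    if not 1 <= k <= len(patch_scores):
        raise ValueError("k must be between 1 and the number of patches")
    ranked = sorted(range(len(patch_scores)),
                    key=lambda i: patch_scores[i], reverse=True)
    key_patches = sorted(ranked[:k])
    image_score = sum(patch_scores[i] for i in key_patches) / k
    return image_score, key_patches

# Hypothetical per-patch malignancy scores for one image split into 6 patches.
scores = [0.05, 0.10, 0.90, 0.20, 0.80, 0.15]
image_score, key_patches = topk_mil_score(scores, k=2)
print(image_score, key_patches)
```

Because only the selected patches contribute to the output, background regions cannot influence the prediction, which is the mechanism the abstract credits for the improved robustness.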

3D Human Pose Estimation with Occlusions: Introducing BlendMimic3D Dataset and GCN Refinement

Apr 24, 2024

Abstract:In the field of 3D Human Pose Estimation (HPE), accurately estimating human pose, especially in scenarios with occlusions, is a significant challenge. This work identifies and addresses a gap in the current state of the art in 3D HPE concerning the scarcity of data and strategies for handling occlusions. We introduce our novel BlendMimic3D dataset, designed to mimic real-world situations where occlusions occur for seamless integration in 3D HPE algorithms. Additionally, we propose a 3D pose refinement block, employing a Graph Convolutional Network (GCN) to enhance pose representation through a graph model. This GCN block acts as a plug-and-play solution, adaptable to various 3D HPE frameworks without requiring retraining them. By training the GCN with occluded data from BlendMimic3D, we demonstrate significant improvements in resolving occluded poses, with comparable results for non-occluded ones. Project web page is available at https://blendmimic3d.github.io/BlendMimic3D/.

XAI for Skin Cancer Detection with Prototypes and Non-Expert Supervision

Feb 02, 2024

Abstract:Skin cancer detection through dermoscopy image analysis is a critical task. However, existing models used for this purpose often lack interpretability and reliability, raising the concern of physicians due to their black-box nature. In this paper, we propose a novel approach for the diagnosis of melanoma using an interpretable prototypical-part model. We introduce a guided supervision based on non-expert feedback through the incorporation of: 1) binary masks, obtained automatically using a segmentation network; and 2) user-refined prototypes. These two distinct information pathways aim to ensure that the learned prototypes correspond to relevant areas within the skin lesion, excluding confounding factors beyond its boundaries. Experimental results demonstrate that, even without expert supervision, our approach achieves superior performance and generalization compared to non-interpretable models.

Unsupervised Vehicle Counting via Multiple Camera Domain Adaptation

Apr 20, 2020

Abstract:Monitoring vehicle flow in cities is crucial to improving the urban environment and the quality of life of citizens. Images are the best sensing modality to perceive and assess the flow of vehicles in large areas. Current technologies for vehicle counting in images hinge on large quantities of annotated data, which prevents them from scaling to city level as new cameras are added to the system. This is a recurrent problem when dealing with physical systems and a key research area in Machine Learning and AI. We propose and discuss a new methodology to design image-based vehicle density estimators with little labeled data via multiple camera domain adaptations.
