Bowei Li

Linda

NeSyPack: A Neuro-Symbolic Framework for Bimanual Logistics Packing

Jun 06, 2025Abstract:This paper presents NeSyPack, a neuro-symbolic framework for bimanual logistics packing. NeSyPack combines data-driven models and symbolic reasoning to build an explainable hierarchical system that is generalizable, data-efficient, and reliable. It decomposes a task into subtasks via hierarchical reasoning, and further into atomic skills managed by a symbolic skill graph. The graph selects skill parameters, robot configurations, and task-specific control strategies for execution. This modular design enables robustness, adaptability, and efficient reuse - outperforming end-to-end models that require large-scale retraining. Using NeSyPack, our team won the First Prize in the What Bimanuals Can Do (WBCD) competition at the 2025 IEEE International Conference on Robotics and Automation.

SPARK: A Modular Benchmark for Humanoid Robot Safety

Feb 05, 2025Abstract:This paper introduces the Safe Protective and Assistive Robot Kit (SPARK), a comprehensive benchmark designed to ensure safety in humanoid autonomy and teleoperation. Humanoid robots pose significant safety risks due to their physical capabilities of interacting with complex environments. The physical structures of humanoid robots further add complexity to the design of general safety solutions. To facilitate the safe deployment of complex robot systems, SPARK can be used as a toolbox that comes with state-of-the-art safe control algorithms in a modular and composable robot control framework. Users can easily configure safety criteria and sensitivity levels to optimize the balance between safety and performance. To accelerate humanoid safety research and development, SPARK provides a simulation benchmark that compares safety approaches in a variety of environments, tasks, and robot models. Furthermore, SPARK allows quick deployment of synthesized safe controllers on real robots. For hardware deployment, SPARK supports Apple Vision Pro (AVP) or a Motion Capture System as external sensors, while also offering interfaces for seamless integration with alternative hardware setups. This paper demonstrates SPARK's capability with both simulation experiments and case studies with a Unitree G1 humanoid robot. Leveraging these advantages of SPARK, users and researchers can significantly improve the safety of their humanoid systems as well as accelerate relevant research. The open-source code is available at https://github.com/intelligent-control-lab/spark.

Maximizing User Connectivity in AI-Enabled Multi-UAV Networks: A Distributed Strategy Generalized to Arbitrary User Distributions

Nov 07, 2024

Abstract:Deep reinforcement learning (DRL) has been extensively applied to Multi-Unmanned Aerial Vehicle (UAV) network (MUN) to effectively enable real-time adaptation to complex, time-varying environments. Nevertheless, most of the existing works assume a stationary user distribution (UD) or a dynamic one with predicted patterns. Such considerations may make the UD-specific strategies insufficient when a MUN is deployed in unknown environments. To this end, this paper investigates distributed user connectivity maximization problem in a MUN with generalization to arbitrary UDs. Specifically, the problem is first formulated into a time-coupled combinatorial nonlinear non-convex optimization with arbitrary underlying UDs. To make the optimization tractable, a multi-agent CNN-enhanced deep Q learning (MA-CDQL) algorithm is proposed. The algorithm integrates a ResNet-based CNN to the policy network to analyze the input UD in real time and obtain optimal decisions based on the extracted high-level UD features. To improve the learning efficiency and avoid local optimums, a heatmap algorithm is developed to transform the raw UD to a continuous density map. The map will be part of the true input to the policy network. Simulations are conducted to demonstrate the efficacy of UD heatmaps and the proposed algorithm in maximizing user connectivity as compared to K-means methods.

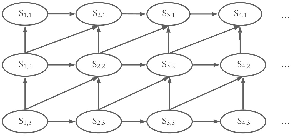

Learning with Dynamics: Autonomous Regulation of UAV Based Communication Networks with Dynamic UAV Crew

Sep 25, 2024Abstract:Unmanned Aerial Vehicle (UAV) based communication networks (UCNs) are a key component in future mobile networking. To handle the dynamic environments in UCNs, reinforcement learning (RL) has been a promising solution attributed to its strong capability of adaptive decision-making free of the environment models. However, most existing RL-based research focus on control strategy design assuming a fixed set of UAVs. Few works have investigated how UCNs should be adaptively regulated when the serving UAVs change dynamically. This article discusses RL-based strategy design for adaptive UCN regulation given a dynamic UAV set, addressing both reactive strategies in general UCNs and proactive strategies in solar-powered UCNs. An overview of the UCN and the RL framework is first provided. Potential research directions with key challenges and possible solutions are then elaborated. Some of our recent works are presented as case studies to inspire innovative ways to handle dynamic UAV crew with different RL algorithms.

Ensemble Learning For Mega Man Level Generation

Jul 27, 2021

Abstract:Procedural content generation via machine learning (PCGML) is the process of procedurally generating game content using models trained on existing game content. PCGML methods can struggle to capture the true variance present in underlying data with a single model. In this paper, we investigated the use of ensembles of Markov chains for procedurally generating \emph{Mega Man} levels. We conduct an initial investigation of our approach and evaluate it on measures of playability and stylistic similarity in comparison to a non-ensemble, existing Markov chain approach.

* 9 pages, 7 figures, Workshop on Procedural Content Generation

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge