Bei Hui

Historically Relevant Event Structuring for Temporal Knowledge Graph Reasoning

May 17, 2024

Abstract:Temporal Knowledge Graph (TKG) reasoning focuses on predicting events through historical information within snapshots distributed on a timeline. Existing studies mainly concentrate on two perspectives of leveraging the history of TKGs, including capturing evolution of each recent snapshot or correlations among global historical facts. Despite the achieved significant accomplishments, these models still fall short of (1) investigating the influences of multi-granularity interactions across recent snapshots and (2) harnessing the expressive semantics of significant links accorded with queries throughout the entire history, especially events exerting a profound impact on the future. These inadequacies restrict representation ability to reflect historical dependencies and future trends thoroughly. To overcome these drawbacks, we propose an innovative TKG reasoning approach towards \textbf{His}torically \textbf{R}elevant \textbf{E}vents \textbf{S}tructuring ($\mathsf{HisRES}$). Concretely, $\mathsf{HisRES}$ comprises two distinctive modules excelling in structuring historically relevant events within TKGs, including a multi-granularity evolutionary encoder that captures structural and temporal dependencies of the most recent snapshots, and a global relevance encoder that concentrates on crucial correlations among events relevant to queries from the entire history. Furthermore, $\mathsf{HisRES}$ incorporates a self-gating mechanism for adaptively merging multi-granularity recent and historically relevant structuring representations. Extensive experiments on four event-based benchmarks demonstrate the state-of-the-art performance of $\mathsf{HisRES}$ and indicate the superiority and effectiveness of structuring historical relevance for TKG reasoning.

FCDS: Fusing Constituency and Dependency Syntax into Document-Level Relation Extraction

Mar 04, 2024

Abstract:Document-level Relation Extraction (DocRE) aims to identify relation labels between entities within a single document. It requires handling several sentences and reasoning over them. State-of-the-art DocRE methods use a graph structure to connect entities across the document to capture dependency syntax information. However, this is insufficient to fully exploit the rich syntax information in the document. In this work, we propose to fuse constituency and dependency syntax into DocRE. It uses constituency syntax to aggregate the whole sentence information and select the instructive sentences for the pairs of targets. It exploits the dependency syntax in a graph structure with constituency syntax enhancement and chooses the path between entity pairs based on the dependency graph. The experimental results on datasets from various domains demonstrate the effectiveness of the proposed method. The code is publicly available at this url.

Learning Multi-graph Structure for Temporal Knowledge Graph Reasoning

Dec 04, 2023

Abstract:Temporal Knowledge Graph (TKG) reasoning that forecasts future events based on historical snapshots distributed over timestamps is denoted as extrapolation and has gained significant attention. Owing to its extreme versatility and variation in spatial and temporal correlations, TKG reasoning presents a challenging task, demanding efficient capture of concurrent structures and evolutional interactions among facts. While existing methods have made strides in this direction, they still fall short of harnessing the diverse forms of intrinsic expressive semantics of TKGs, which encompass entity correlations across multiple timestamps and periodicity of temporal information. This limitation constrains their ability to thoroughly reflect historical dependencies and future trends. In response to these drawbacks, this paper proposes an innovative reasoning approach that focuses on Learning Multi-graph Structure (LMS). Concretely, it comprises three distinct modules concentrating on multiple aspects of graph structure knowledge within TKGs, including concurrent and evolutional patterns along timestamps, query-specific correlations across timestamps, and semantic dependencies of timestamps, which capture TKG features from various perspectives. Besides, LMS incorporates an adaptive gate for merging entity representations both along and across timestamps effectively. Moreover, it integrates timestamp semantics into graph attention calculations and time-aware decoders, in order to impose temporal constraints on events and narrow down prediction scopes with historical statistics. Extensive experimental results on five event-based benchmark datasets demonstrate that LMS outperforms state-of-the-art extrapolation models, indicating the superiority of modeling a multi-graph perspective for TKG reasoning.

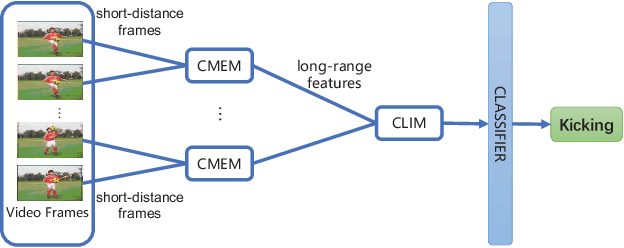

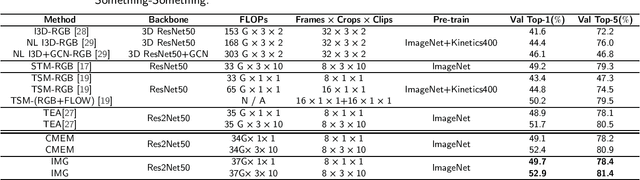

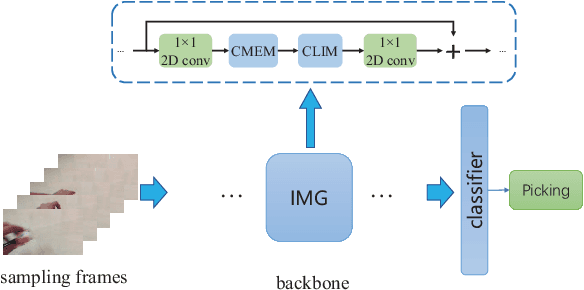

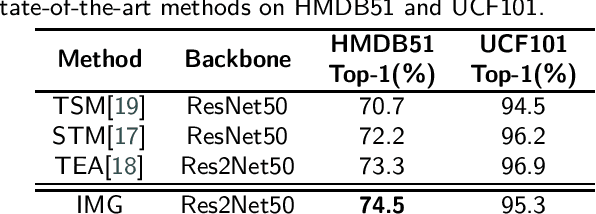

Behavior Recognition Based on the Integration of Multigranular Motion Features

Mar 07, 2022

Abstract:The recognition of behaviors in videos usually requires a combinatorial analysis of the spatial information about objects and their dynamic action information in the temporal dimension. Specifically, behavior recognition may even rely more on the modeling of temporal information containing short-range and long-range motions; this contrasts with computer vision tasks involving images that focus on the understanding of spatial information. However, current solutions fail to jointly and comprehensively analyze short-range motion between adjacent frames and long-range temporal aggregations at large scales in videos. In this paper, we propose a novel behavior recognition method based on the integration of multigranular (IMG) motion features. In particular, we achieve reliable motion information modeling through the synergy of a channel attention-based short-term motion feature enhancement module (CMEM) and a cascaded long-term motion feature integration module (CLIM). We evaluate our model on several action recognition benchmarks such as HMDB51, Something-Something and UCF101. The experimental results demonstrate that our approach outperforms the previous state-of-the-art methods, which confirms its effectiveness and efficiency.

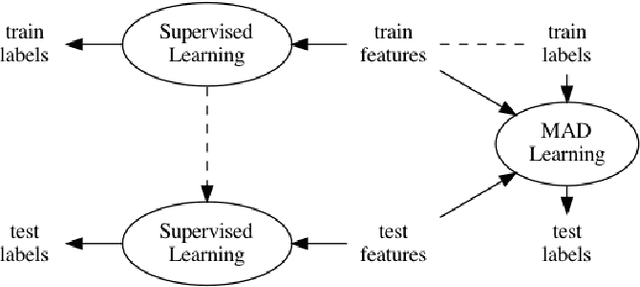

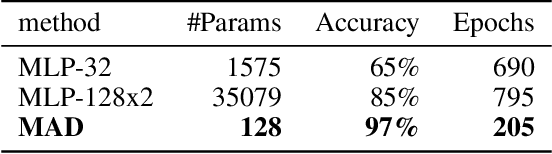

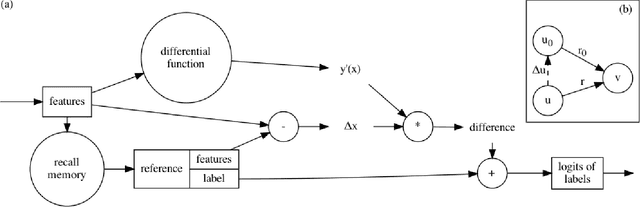

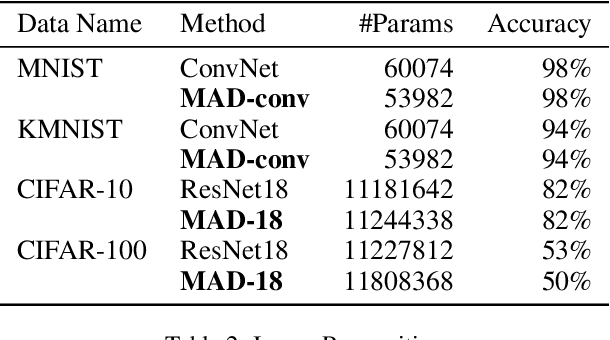

Memory-Associated Differential Learning

Feb 10, 2021

Abstract:Conventional Supervised Learning approaches focus on the mapping from input features to output labels. After training, the learnt models alone are adapted onto testing features to predict testing labels in isolation, with training data wasted and their associations ignored. To take full advantage of the vast number of training data and their associations, we propose a novel learning paradigm called Memory-Associated Differential (MAD) Learning. We first introduce an additional component called Memory to memorize all the training data. Then we learn the differences of labels as well as the associations of features in the combination of a differential equation and some sampling methods. Finally, in the evaluating phase, we predict unknown labels by inferencing from the memorized facts plus the learnt differences and associations in a geometrically meaningful manner. We gently build this theory in unary situations and apply it on Image Recognition, then extend it into Link Prediction as a binary situation, in which our method outperforms strong state-of-the-art baselines on three citation networks and ogbl-ddi dataset.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge