Art B. Owen

Model free Shapley values for high dimensional data

Nov 15, 2022

Abstract: A model-agnostic variable importance method can be used with arbitrary prediction functions. Here we present some model-free methods that do not require access to the prediction function. This is useful when that function is proprietary and not available, or just extremely expensive. It is also useful when studying residuals from a model. The cohort Shapley (CS) method is model-free but has exponential cost in the dimension of the input space. A supervised on-manifold Shapley method from Frye et al. (2020) is also model-free but requires as input a second black box model that has to be trained for the Shapley value problem. We introduce an integrated gradient version of cohort Shapley, called IGCS, with cost $\mathcal{O}(nd)$. We show that over the vast majority of the relevant unit cube the IGCS value function is close to a multilinear function for which IGCS matches CS. We use some area under the curve (AUC) measures to quantify the performance of IGCS. On a problem from high energy physics we verify that IGCS has nearly the same AUCs as CS. We also use it on a problem from computational chemistry in 1024 variables. We see there that IGCS attains much higher AUCs than we get from Monte Carlo sampling. The code is publicly available at https://github.com/cohortshapley/cohortintgrad.

Variable importance without impossible data

May 31, 2022

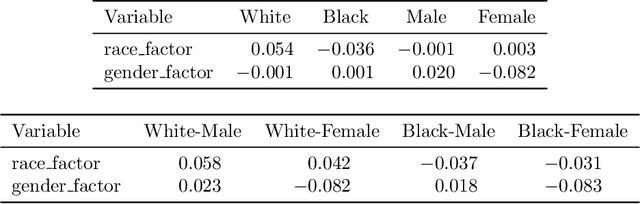

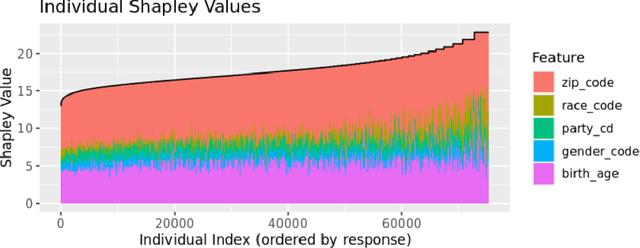

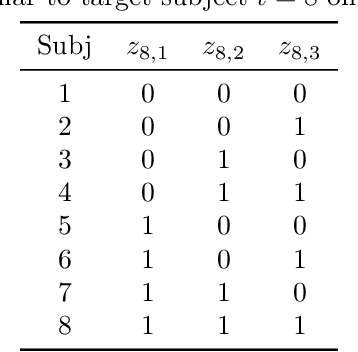

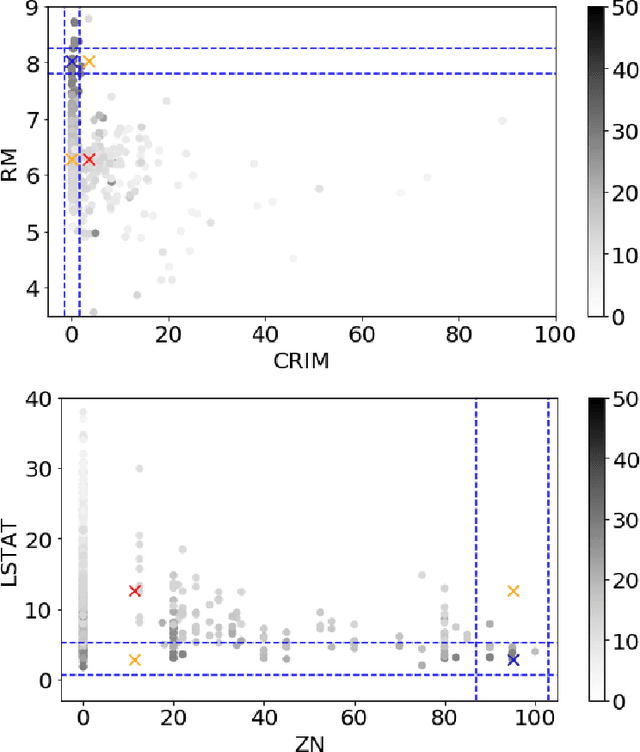

Abstract: The most popular methods for measuring importance of the variables in a black box prediction algorithm make use of synthetic inputs that combine predictor variables from multiple subjects. These inputs can be unlikely, physically impossible, or even logically impossible. As a result, the predictions for such cases can be based on data very unlike any the black box was trained on. We think that users cannot trust an explanation of the decision of a prediction algorithm when the explanation uses such values. Instead we advocate a method called Cohort Shapley that is grounded in economic game theory and that, unlike most other game theoretic methods, uses only actually observed data to quantify variable importance. Cohort Shapley works by narrowing the cohort of subjects judged to be similar to a target subject on one or more features. A feature is important if using it to narrow the cohort makes a large difference to the cohort mean. We illustrate it on an algorithmic fairness problem where it is essential to attribute importance to protected variables that the model was not trained on. For every subject and every predictor variable, we can compute the importance of that predictor to the subject's predicted response or to their actual response. These values can be aggregated, for example over all Black subjects, and we propose a Bayesian bootstrap to quantify uncertainty in both individual and aggregate Shapley values.
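The cohort-narrowing idea in this abstract can be illustrated with a minimal sketch. It assumes that "similar" means exact equality on a feature (the method allows other similarity rules), enumerates all subsets (so it is only practical for small $d$), and uses the hypothetical function names `cohort_mean` and `cohort_shapley`, which are not taken from the authors' code.

```python
from itertools import combinations
from math import factorial

def cohort_mean(X, y, t, subset):
    """Mean response over subjects that match target t on every feature in subset.

    Assumption: similarity is exact equality. The cohort always contains t itself,
    so it is never empty.
    """
    members = [i for i, row in enumerate(X)
               if all(row[j] == X[t][j] for j in subset)]
    return sum(y[i] for i in members) / len(members)

def cohort_shapley(X, y, t):
    """Exact Shapley values of the cohort-mean game for target subject t."""
    d = len(X[0])
    phi = [0.0] * d
    for j in range(d):
        others = [k for k in range(d) if k != j]
        for size in range(d):
            # Standard Shapley weight for a coalition of this size.
            w = factorial(size) * factorial(d - size - 1) / factorial(d)
            for S in combinations(others, size):
                gain = cohort_mean(X, y, t, S + (j,)) - cohort_mean(X, y, t, S)
                phi[j] += w * gain
    return phi

# Toy data: y = 2*x0 + x1 on a 2x2 grid; explain the subject with x = (1, 1).
X = [(0, 0), (0, 1), (1, 0), (1, 1)]
y = [0.0, 1.0, 2.0, 3.0]
phi = cohort_shapley(X, y, t=3)
```

In this toy example the values sum to the gap between the fully refined cohort mean and the overall mean, and only observed rows of `X` are ever used.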

Deletion and Insertion Tests in Regression Models

May 25, 2022

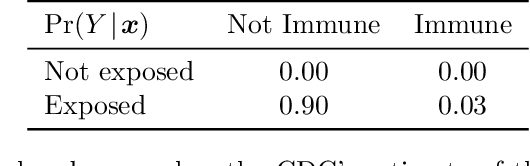

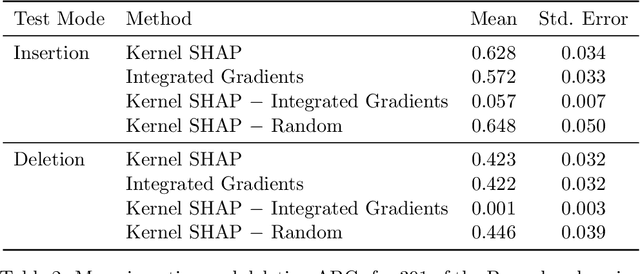

Abstract: A basic task in explainable AI (XAI) is to identify the most important features behind a prediction made by a black box function $f$. The insertion and deletion tests of Petsiuk et al. (2018) are used to judge the quality of algorithms that rank pixels from most to least important for a classification. Motivated by regression problems we establish a formula for their area under the curve (AUC) criteria in terms of certain main effects and interactions in an anchored decomposition of $f$. We find an expression for the expected value of the AUC under a random ordering of inputs to $f$ and propose an alternative area above a straight line for the regression setting. We use this criterion to compare feature importances computed by integrated gradients (IG) to those computed by Kernel SHAP (KS). Exact computation of KS grows exponentially with dimension, while that of IG grows linearly with dimension. In two data sets including binary variables we find that KS is superior to IG in insertion and deletion tests, but only by a very small amount. Our comparison problems include some binary inputs that pose a challenge to IG because it must use values between the possible variable levels. We show that IG will match KS when $f$ is an additive function plus a multilinear function of the variables. This includes a multilinear interpolation over the binary variables that would cause IG to have exponential cost in a naive implementation.
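The claim that IG agrees with a Shapley attribution for an additive plus multilinear $f$ can be checked numerically on a toy function. The sketch below assumes a single all-zeros baseline and compares IG with exact baseline Shapley values (used here as a stand-in for what an exact Kernel SHAP computation would target with one reference point). The function `f` and both helper names are illustrative, not from the paper.

```python
from itertools import combinations
from math import factorial

def f(x):
    # Additive term (2*x2) plus a multilinear term (x0*x1): a toy example.
    return x[0] * x[1] + 2.0 * x[2]

def integrated_gradients(f, x, baseline, steps=50, h=1e-4):
    """IG along the straight path from baseline to x (midpoint rule,
    central-difference gradients)."""
    d = len(x)
    ig = [0.0] * d
    for m in range(steps):
        a = (m + 0.5) / steps
        p = [b + a * (xi - b) for xi, b in zip(x, baseline)]
        for j in range(d):
            up, dn = p[:], p[:]
            up[j] += h
            dn[j] -= h
            grad_j = (f(up) - f(dn)) / (2.0 * h)
            ig[j] += (x[j] - baseline[j]) * grad_j / steps
    return ig

def baseline_shapley(f, x, baseline):
    """Exact Shapley values where v(S) sets features in S to x, others to baseline."""
    d = len(x)

    def v(S):
        return f([x[k] if k in S else baseline[k] for k in range(d)])

    phi = [0.0] * d
    for j in range(d):
        others = [k for k in range(d) if k != j]
        for size in range(d):
            w = factorial(size) * factorial(d - size - 1) / factorial(d)
            for S in combinations(others, size):
                phi[j] += w * (v(set(S) | {j}) - v(set(S)))
    return phi

x, baseline = [1.0, 2.0, 3.0], [0.0, 0.0, 0.0]
ig = integrated_gradients(f, x, baseline)
sv = baseline_shapley(f, x, baseline)
```

The IG loop costs a number of function evaluations linear in the dimension per path step, while the exact Shapley loop visits every subset, which mirrors the linear versus exponential cost contrast in the abstract.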

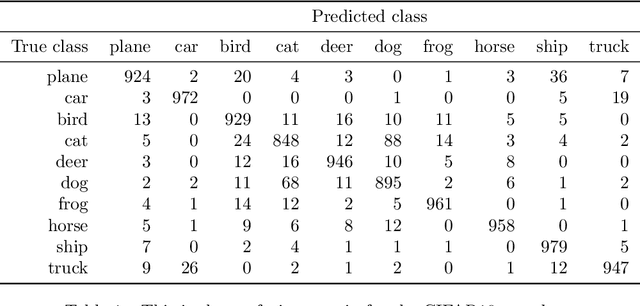

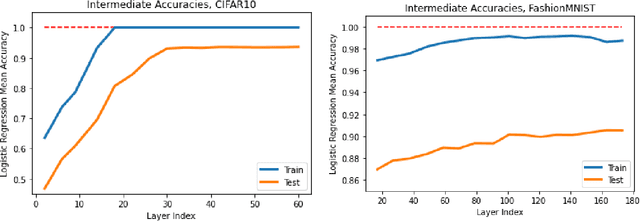

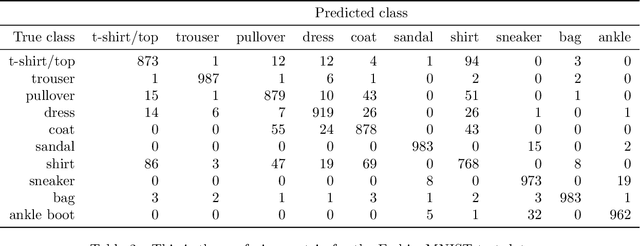

Probing neural networks with t-SNE, class-specific projections and a guided tour

Jul 27, 2021

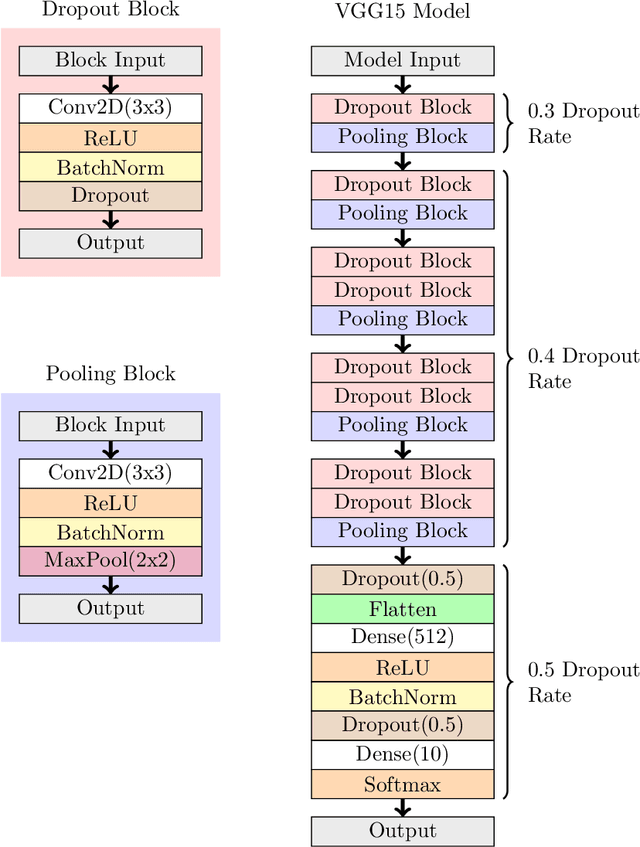

Abstract: We use graphical methods to probe neural nets that classify images. Plots of t-SNE outputs at successive layers in a network reveal increasingly organized arrangement of the data points. They can also reveal how a network can diminish or even forget about within-class structure as the data proceeds through layers. We use class-specific analogues of principal components to visualize how succeeding layers separate the classes. These allow us to sort images from a given class from most typical to least typical (in the data) and they also serve as very useful projection coordinates for data visualization. We find them especially useful when defining versions of guided tours for animated data visualization.

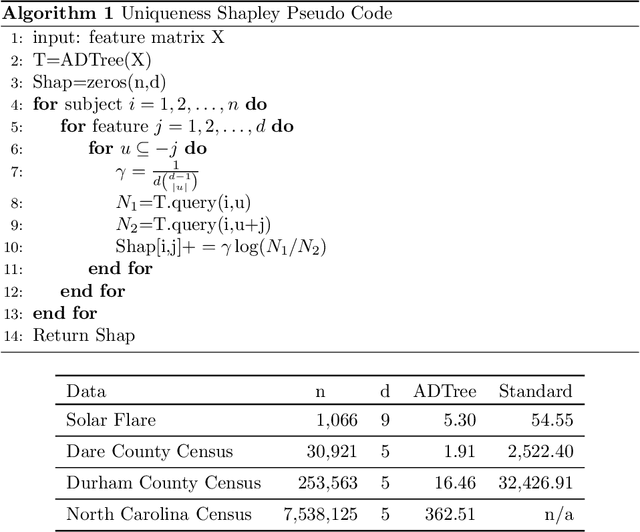

What makes you unique?

May 17, 2021

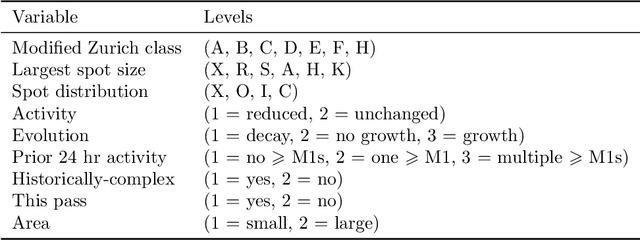

Abstract: This paper proposes a uniqueness Shapley measure to compare the extent to which different variables are able to identify a subject. Revealing the value of a variable on subject $t$ shrinks the set of possible subjects that $t$ could be. The extent of the shrinkage depends on which other variables have also been revealed. We use the Shapley value to combine all of the reductions in log cardinality due to revealing a variable after some subset of the other variables has been revealed. This uniqueness Shapley measure can be aggregated over subjects where it becomes a weighted sum of conditional entropies. Aggregation over subsets of subjects can address questions like how identifying is age for people of a given zip code. Such aggregates have a corresponding expression in terms of cross entropies. We use uniqueness Shapley to investigate the differential effects of revealing variables from the North Carolina voter registration rolls and in identifying anomalous solar flares. An enormous speedup (approaching 2000-fold in one example) is obtained by using the all-dimension trees of Moore and Lee (1998) to store the cardinalities we need.
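The "reduction in log cardinality" game described in this abstract can be sketched by brute force for a handful of variables. The sketch assumes base-2 logarithms and exact matching on revealed values; the name `uniqueness_shapley` is hypothetical, and the all-dimension tree speedup from the paper is not implemented here.

```python
from itertools import combinations
from math import factorial, log2

def uniqueness_shapley(X, t):
    """Shapley values of v(S) = log2(n) - log2(#subjects matching t on S)."""
    n, d = len(X), len(X[0])

    def v(S):
        # Number of subjects still indistinguishable from t after revealing S.
        card = sum(1 for row in X if all(row[j] == X[t][j] for j in S))
        return log2(n) - log2(card)

    phi = [0.0] * d
    for j in range(d):
        others = [k for k in range(d) if k != j]
        for size in range(d):
            w = factorial(size) * factorial(d - size - 1) / factorial(d)
            for S in combinations(others, size):
                phi[j] += w * (v(S + (j,)) - v(S))
    return phi

# Toy data: subject 3 is the only one with x0 == 1, so x0 identifies it alone.
X = [(0, 0), (0, 0), (0, 1), (1, 1)]
phi = uniqueness_shapley(X, t=3)
```

Here the values sum to $\log_2(4/1) = 2$ bits, the total information needed to single out subject 3 among four, with most of the credit going to the variable that identifies the subject by itself.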

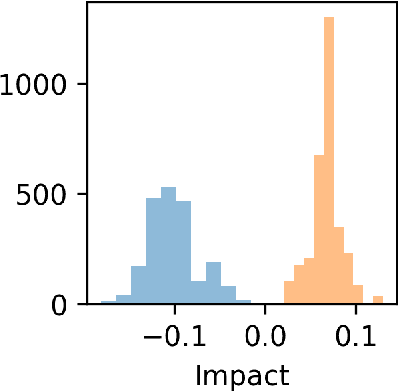

Cohort Shapley value for algorithmic fairness

May 15, 2021

Abstract: Cohort Shapley value is a model-free method of variable importance grounded in game theory that does not use any unobserved and potentially impossible feature combinations. We use it to evaluate algorithmic fairness, using the well-known COMPAS recidivism data as our example. This approach allows one to identify for each individual in a data set the extent to which they were adversely or beneficially affected by their value of a protected attribute such as their race. The method can do this even if race was not one of the original predictors and even if it does not have access to a proprietary algorithm that has made the predictions. The grounding in game theory lets us define aggregate variable importance for a data set consistently with its per-subject definitions. We can investigate variable importance for multiple quantities of interest in the fairness literature including false positive predictions.

Quasi-Newton Quasi-Monte Carlo for variational Bayes

Apr 21, 2021

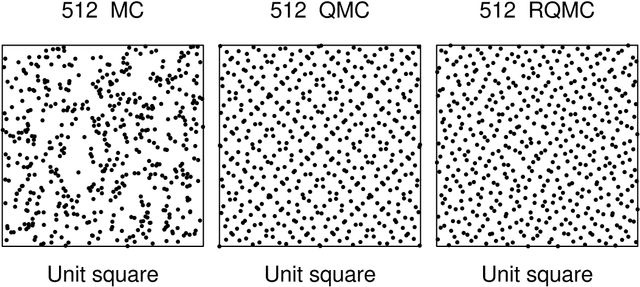

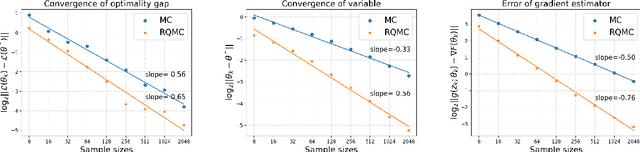

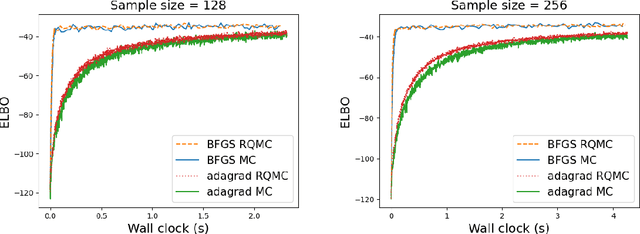

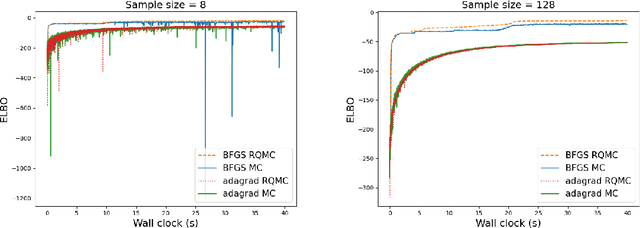

Abstract: Many machine learning problems optimize an objective that must be measured with noise. The primary method is a first-order stochastic gradient descent using one or more Monte Carlo (MC) samples at each step. There are settings where ill-conditioning makes second-order methods such as L-BFGS more effective. We study the use of randomized quasi-Monte Carlo (RQMC) sampling for such problems. When MC sampling has a root mean squared error (RMSE) of $O(n^{-1/2})$ then RQMC has an RMSE of $o(n^{-1/2})$ that can be close to $O(n^{-3/2})$ in favorable settings. We prove that improved sampling accuracy translates directly to improved optimization. In our empirical investigations for variational Bayes, using RQMC with stochastic L-BFGS greatly speeds up the optimization, and sometimes finds a better parameter value than MC does.
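The sampling-accuracy comparison behind this abstract can be illustrated on a toy integral. The sketch below is not the authors' setup: it swaps in a randomly shifted Fibonacci lattice as a simple RQMC rule (scrambled nets are the more common choice), integrates the illustrative function $e^{x+y}$ over the unit square, and compares empirical RMSE over independent replicates. It does not attempt to demonstrate the $O(n^{-3/2})$ rate or the optimization results.

```python
import math
import random

def f(x, y):
    # Smooth toy integrand; its integral over [0,1]^2 is (e - 1)^2.
    return math.exp(x + y)

TRUE_VALUE = (math.e - 1.0) ** 2

def mc_estimate(n, rng):
    """Plain Monte Carlo average over n independent uniform points."""
    return sum(f(rng.random(), rng.random()) for _ in range(n)) / n

def shifted_lattice_estimate(n, g, rng):
    """Rank-1 lattice with generator (1, g), randomized by a uniform shift mod 1."""
    u1, u2 = rng.random(), rng.random()
    total = 0.0
    for i in range(n):
        x = (i / n + u1) % 1.0
        y = ((i * g % n) / n + u2) % 1.0
        total += f(x, y)
    return total / n

def rmse(estimates, truth):
    return math.sqrt(sum((e - truth) ** 2 for e in estimates) / len(estimates))

rng = random.Random(0)
n, g, reps = 233, 144, 50   # consecutive Fibonacci numbers give a good 2-D lattice
mc_rmse = rmse([mc_estimate(n, rng) for _ in range(reps)], TRUE_VALUE)
rqmc_rmse = rmse([shifted_lattice_estimate(n, g, rng) for _ in range(reps)], TRUE_VALUE)
```

Both estimators are unbiased, so the replicate RMSEs are directly comparable at the same number of function evaluations.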

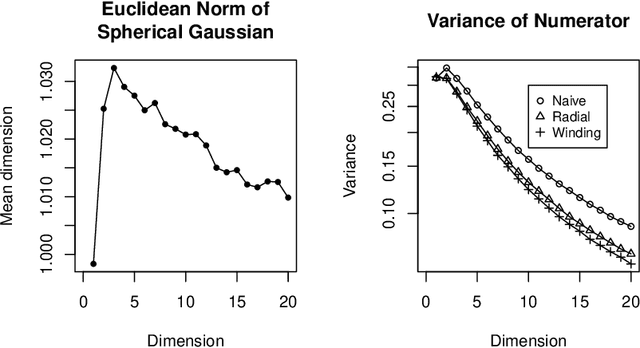

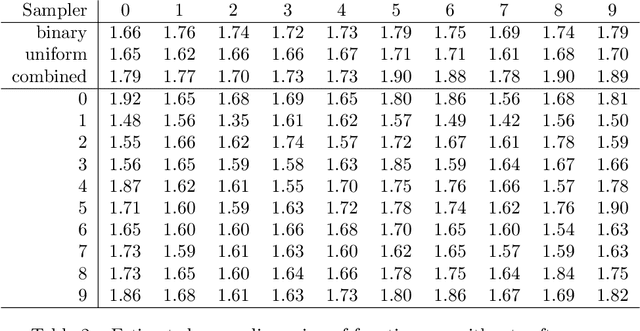

Efficient estimation of the ANOVA mean dimension, with an application to neural net classification

Jul 02, 2020

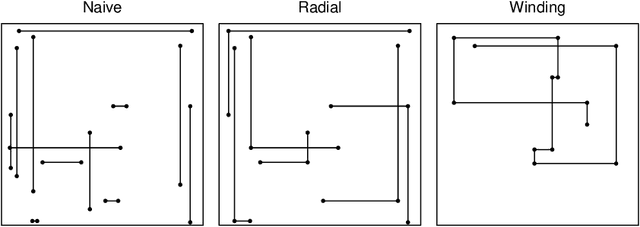

Abstract: The mean dimension of a black box function of $d$ variables is a convenient way to summarize the extent to which it is dominated by high- or low-order interactions. It is expressed in terms of $2^d-1$ variance components but it can be written as the sum of $d$ Sobol' indices that can be estimated by leave-one-out methods. We compare the variance of these leave-one-out methods: a Gibbs sampler called winding stairs, a radial sampler that changes each variable one at a time from a baseline, and a naive sampler that never reuses function evaluations and so costs about double the other methods. For an additive function the radial and winding stairs are most efficient. For a multiplicative function the naive method can easily be most efficient if the factors have high kurtosis. As an illustration we consider the mean dimension of a neural network classifier of digits from the MNIST data set. The classifier is a function of $784$ pixels. For that problem, winding stairs is the best algorithm. We find that inputs to the final softmax layer have mean dimensions ranging from $1.35$ to $2.0$.
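A radial sampler of the kind mentioned in this abstract can be sketched as follows. The sketch assumes independent inputs uniform on $[-1, 1]$, estimates each total Sobol' index with the Jansen form $\tfrac12\,\mathbb{E}[(f(x) - f(x_{-j}{:}z_j))^2]$, and divides their sum by a plug-in variance estimate. The name `mean_dimension_radial` and the two test functions are illustrative only.

```python
import random
import statistics

def mean_dimension_radial(f, d, n, rng):
    """Estimate the mean dimension: (sum of total Sobol' indices) / variance.

    Radial design: each baseline point x is reused for all d one-at-a-time
    changes, so each sample costs d + 1 function evaluations.
    """
    tau_sum = 0.0
    base_values = []
    for _ in range(n):
        x = [rng.uniform(-1.0, 1.0) for _ in range(d)]
        z = [rng.uniform(-1.0, 1.0) for _ in range(d)]
        fx = f(x)
        base_values.append(fx)
        for j in range(d):
            xj = x[:]
            xj[j] = z[j]                 # change only variable j
            tau_sum += 0.5 * (fx - f(xj)) ** 2
    sigma2 = statistics.pvariance(base_values)
    return (tau_sum / n) / sigma2

rng = random.Random(1)
# An additive function has mean dimension 1; a pure two-factor product has 2.
nu_additive = mean_dimension_radial(lambda x: x[0] + x[1] + x[2], 3, 20000, rng)
nu_product = mean_dimension_radial(lambda x: x[0] * x[1], 2, 20000, rng)
```

The two toy functions bracket the behavior described in the abstract: values near 1 indicate mostly additive structure, larger values indicate that interactions carry more of the variance.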

Explaining black box decisions by Shapley cohort refinement

Nov 01, 2019

Abstract: We introduce a variable importance measure to explain the importance of individual variables to a decision made by a black box function. Our measure is based on the Shapley value from cooperative game theory. Measures of variable importance usually work by changing the value of one or more variables with the others held fixed and then recomputing the function of interest. That approach is problematic because it can create very unrealistic combinations of predictors that never appear in practice or that were never present when the prediction function was being created. Our cohort refinement Shapley approach measures variable importance without using any data points that were not actually observed.

Statistically efficient thinning of a Markov chain sampler

Apr 11, 2017

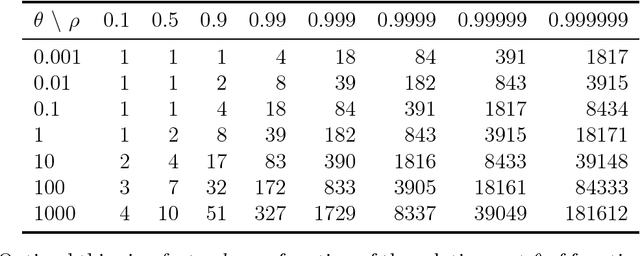

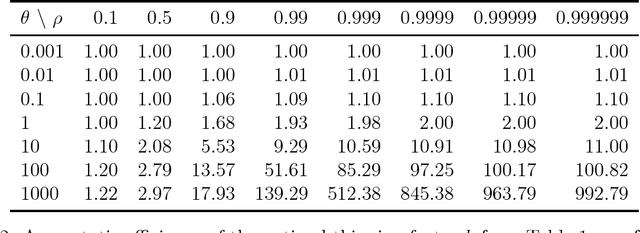

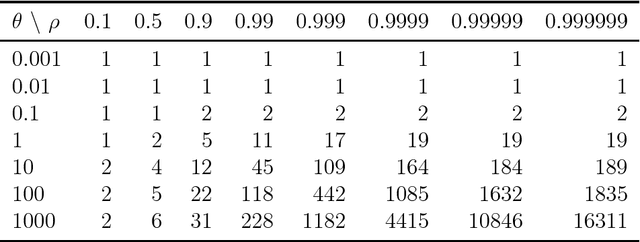

Abstract: It is common to subsample Markov chain output to reduce the storage burden. Geyer (1992) shows that discarding $k-1$ out of every $k$ observations will not improve statistical efficiency, as quantified through variance in a given computational budget. That observation is often taken to mean that thinning MCMC output cannot improve statistical efficiency. Here we suppose that it costs one unit of time to advance a Markov chain and then $\theta>0$ units of time to compute a sampled quantity of interest. For a thinned process, that cost $\theta$ is incurred less often, so it can be advanced through more stages. Here we provide examples to show that thinning will improve statistical efficiency if $\theta$ is large and the sample autocorrelations decay slowly enough. If the lag $\ell\ge1$ autocorrelations of a scalar measurement satisfy $\rho_\ell\ge\rho_{\ell+1}\ge0$, then there is always a $\theta<\infty$ at which thinning becomes more efficient for averages of that scalar. Many sample autocorrelation functions resemble first order AR(1) processes with $\rho_\ell =\rho^{|\ell|}$ for some $-1<\rho<1$. For an AR(1) process it is possible to compute the most efficient subsampling frequency $k$. The optimal $k$ grows rapidly as $\rho$ increases towards $1$. The resulting efficiency gain depends primarily on $\theta$, not $\rho$. Taking $k=1$ (no thinning) is optimal when $\rho\le0$. For $\rho>0$, taking $k=1$ is optimal if and only if $\theta \le (1-\rho)^2/(2\rho)$. The efficiency gain from thinning never exceeds $1+\theta$. This paper also gives efficiency bounds for autocorrelations bounded between those of two AR(1) processes.
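The AR(1) trade-off in this abstract can be explored with a short calculation. The sketch assumes the standard large-sample variance factor $(1+\rho^k)/(1-\rho^k)$ for the mean of an AR(1) chain thinned to every $k$-th state, and a cost of $k+\theta$ per retained value, as the abstract describes. The function names are hypothetical and the search over $k$ is a brute-force scan, not the paper's closed-form result.

```python
def relative_efficiency(k, rho, theta):
    """Efficiency of keeping every k-th state relative to no thinning (k = 1).

    For a fixed budget, variance is proportional to
    (cost per kept value) * (1 + rho^k) / (1 - rho^k).
    """
    def cost_times_variance(m):
        r = rho ** m
        return (m + theta) * (1.0 + r) / (1.0 - r)

    return cost_times_variance(1) / cost_times_variance(k)

def best_thinning(rho, theta, k_max=1000):
    """Brute-force search for the most efficient thinning factor up to k_max."""
    return max(range(1, k_max + 1),
               key=lambda k: relative_efficiency(k, rho, theta))

# With rho = 0.5 the threshold (1 - rho)^2 / (2 * rho) equals 0.25.
k_cheap = best_thinning(rho=0.5, theta=0.2)    # theta below the threshold
k_costly = best_thinning(rho=0.5, theta=1.0)   # theta above the threshold
gain = relative_efficiency(k_costly, 0.5, 1.0)
```

Comparing $k=2$ with $k=1$ under this variance factor reproduces the stated threshold: $k=1$ wins exactly when $2\rho\theta \le (1-\rho)^2$.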
