Cohort Shapley value for algorithmic fairness

Paper and Code

May 15, 2021

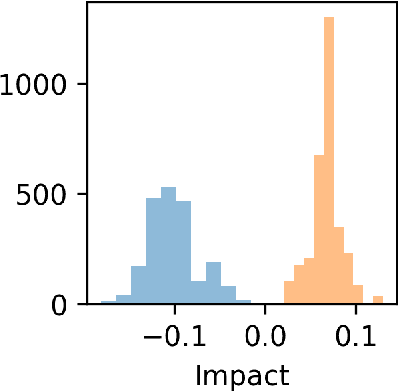

Cohort Shapley value is a model-free method of variable importance grounded in game theory that does not use any unobserved and potentially impossible feature combinations. We use it to evaluate algorithmic fairness, using the well known COMPAS recidivism data as our example. This approach allows one to identify for each individual in a data set the extent to which they were adversely or beneficially affected by their value of a protected attribute such as their race. The method can do this even if race was not one of the original predictors and even if it does not have access to a proprietary algorithm that has made the predictions. The grounding in game theory lets us define aggregate variable importance for a data set consistently with its per subject definitions. We can investigate variable importance for multiple quantities of interest in the fairness literature including false positive predictions.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge