Anuradha Annaswamy

Safe Autonomy for Uncrewed Surface Vehicles Using Adaptive Control and Reachability Analysis

Oct 01, 2024

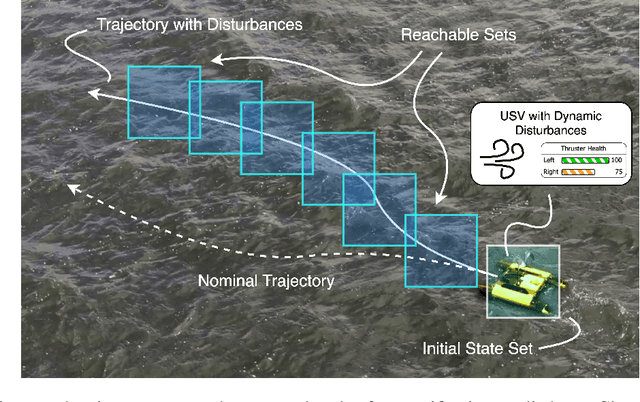

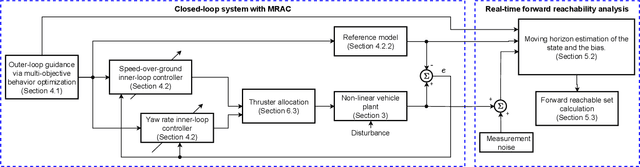

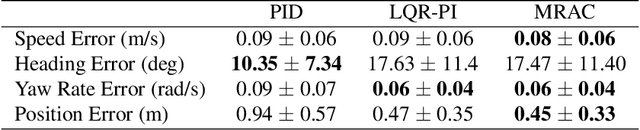

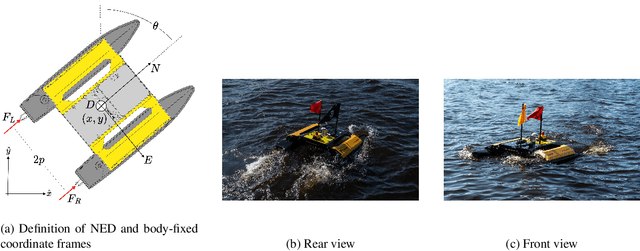

Abstract: Marine robots must maintain precise control and ensure safety during tasks like ocean monitoring, even when encountering unpredictable disturbances that affect performance. Designing algorithms for uncrewed surface vehicles (USVs) requires accounting for these disturbances to control the vehicle and ensure it avoids obstacles. While adaptive control has addressed USV control challenges, real-world applications are limited, and certifying USV safety amidst unexpected disturbances remains difficult. To tackle control issues, we employ a model reference adaptive controller (MRAC) to stabilize the USV along a desired trajectory. For safety certification, we developed a reachability module with a moving horizon estimator (MHE) to estimate disturbances affecting the USV. This estimate is propagated through a forward reachable set calculation, predicting future states and enabling real-time safety certification. We tested our safe autonomy pipeline on a Clearpath Heron USV in the Charles River, near MIT. Our experiments demonstrated that the USV's MRAC and reachability module could adapt to disturbances like thruster failures and drag forces. The MRAC outperformed a PID baseline, reducing RMSE position error by 45% to 81%. Additionally, the reachability module provided real-time safety certification for the USV. We further validated our pipeline's effectiveness in underway replenishment and canal scenarios, simulating relevant marine tasks.

Federated Learning Forecasting for Strengthening Grid Reliability and Enabling Markets for Resilience

Jul 16, 2024

Abstract: We propose a comprehensive approach to increase the reliability and resilience of future power grids rich in distributed energy resources. Our distributed scheme combines federated learning-based attack detection with a local electricity market-based attack mitigation method. We validate the scheme by applying it to a real-world distribution grid rich in solar PV. Simulation results demonstrate that the approach is feasible and can successfully mitigate the grid impacts of cyber-physical attacks.

Physics-Informed Graph Neural Network for Dynamic Reconfiguration of Power Systems

Oct 01, 2023

Abstract: To maintain a reliable grid, we need fast decision-making algorithms for complex problems like Dynamic Reconfiguration (DyR). DyR optimizes distribution grid switch settings in real time to minimize grid losses and dispatches resources to supply loads with available generation. DyR is a mixed-integer problem and can be computationally intractable to solve for large grids and at fast timescales. We propose GraPhyR, a Physics-Informed Graph Neural Network (GNN) framework tailored for DyR. We incorporate essential operational and connectivity constraints directly within the GNN framework and train it end-to-end. Our results show that GraPhyR is able to learn to optimize the DyR task.

MRAC-RL: A Framework for On-Line Policy Adaptation Under Parametric Model Uncertainty

Nov 20, 2020

Abstract: Reinforcement learning (RL) algorithms have been successfully used to develop control policies for dynamical systems. For many such systems, these policies are trained in a simulated environment. Due to discrepancies between the simulated model and the true system dynamics, RL-trained policies often fail to generalize and adapt appropriately when deployed in the real-world environment. Current research in bridging this sim-to-real gap has largely focused on improvements in simulation design and on the development of improved and specialized RL algorithms for robust control policy generation. In this paper, we apply principles from adaptive control and system identification to develop the model-reference adaptive control & reinforcement learning (MRAC-RL) framework. We propose a set of novel MRAC algorithms applicable to a broad range of linear and nonlinear systems, and derive the associated control laws. The MRAC-RL framework utilizes an inner-loop adaptive controller that allows a simulation-trained outer-loop policy to adapt and operate effectively in a test environment, even when parametric model uncertainty exists. We demonstrate that the MRAC-RL approach improves upon state-of-the-art RL algorithms in developing control policies that can be applied to systems with modeling errors.
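For readers unfamiliar with model-reference adaptive control, the sketch below shows the general idea on a textbook scalar plant. It is not the algorithm from the paper above: the plant `x_dot = a*x + b*u`, the reference model `xm_dot = am*xm + bm*r`, the sinusoidal reference, and every numeric value are assumptions chosen for illustration. The controller does not know `a`; it adjusts the gains `kx` and `kr` online with a standard Lyapunov-based update so that the plant state tracks the reference model.

```python
import math


def rms(values):
    """Root-mean-square of a sequence of numbers."""
    return math.sqrt(sum(v * v for v in values) / len(values))


def simulate_mrac(a=1.0, b=2.0, am=-2.0, bm=2.0, gamma=5.0,
                  dt=1e-3, t_end=60.0):
    """Simulate a scalar MRAC loop with forward-Euler integration.

    All parameters are hypothetical. The controller uses only the sign
    of b, never the value of a.
    Returns (tracking errors, final kx, final kr).
    """
    x = xm = 0.0            # plant state and reference-model state
    kx = kr = 0.0           # adaptive feedback and feedforward gains
    sign_b = math.copysign(1.0, b)
    steps = int(round(t_end / dt))
    errors = []
    for k in range(steps):
        t = k * dt
        r = math.sin(t)                 # assumed reference command
        u = kx * x + kr * r             # adaptive control law
        e = x - xm                      # tracking error
        errors.append(e)
        # Continuous-time dynamics and gradient-type adaptive laws
        x_dot = a * x + b * u
        xm_dot = am * xm + bm * r
        kx_dot = -gamma * e * x * sign_b
        kr_dot = -gamma * e * r * sign_b
        # Forward-Euler step
        x += dt * x_dot
        xm += dt * xm_dot
        kx += dt * kx_dot
        kr += dt * kr_dot
    return errors, kx, kr


if __name__ == "__main__":
    errors, kx, kr = simulate_mrac()
    n = int(5.0 / 1e-3)  # samples in a 5-second window
    print("RMS error, first 5 s:", rms(errors[:n]))
    print("RMS error, last 5 s: ", rms(errors[-n:]))
    print("final gains kx, kr:  ", kx, kr)
```

With these assumed values, the gains that would match the reference model exactly are `kx = (am - a) / b = -1.5` and `kr = bm / b = 1.0`. The update laws make the Lyapunov function `e**2 / 2 + |b| / (2 * gamma) * ((kx + 1.5)**2 + (kr - 1.0)**2)` non-increasing in continuous time, which is why the tracking error shrinks as the simulation proceeds.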
