Andy Nealen

Generating and Adapting to Diverse Ad-Hoc Cooperation Agents in Hanabi

Apr 30, 2020

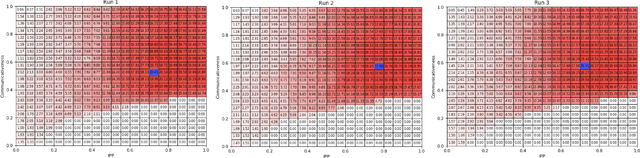

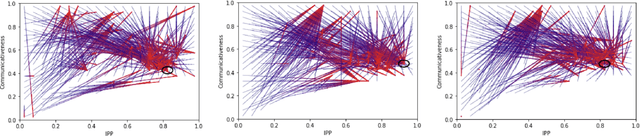

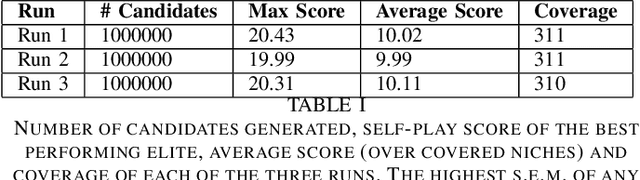

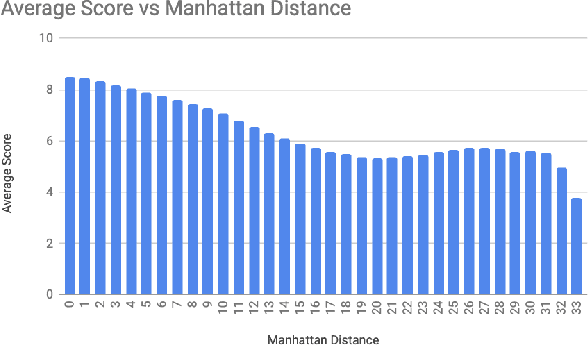

Abstract: Hanabi is a cooperative game that brings the problem of modeling other players to the forefront. In this game, coordinated groups of players can leverage pre-established conventions to great effect, but playing in an ad-hoc setting requires agents to adapt to their partners' strategies with no previous coordination. Evaluating an agent in this setting requires a diverse population of potential partners, but so far, the behavioral diversity of agents has not been considered in a systematic way. This paper proposes Quality Diversity algorithms as a promising class of algorithms to generate diverse populations for this purpose, and generates a population of diverse Hanabi agents using MAP-Elites. We also postulate that agents can benefit from a diverse population during training and implement a simple "meta-strategy" for adapting to an agent's perceived behavioral niche. We show this meta-strategy can work better than generalist strategies even outside the population it was trained with if its partner's behavioral niche can be correctly inferred, but in practice a partner's behavior depends on and interferes with the meta-agent's own behavior, suggesting an avenue for future research in characterizing another agent's behavior during gameplay.
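To make the MAP-Elites idea named in the abstract concrete, here is a minimal sketch on a toy problem, not the paper's implementation. The genome encoding, the two-dimensional behavior descriptor, the fitness function, and all function names (`map_elites`, `toy_evaluate`, `toy_random_genome`, `toy_mutate`) are assumptions made for illustration. In a Hanabi setting, the evaluation step would presumably play games with an agent and return its score together with measured behavioral features.

```python
import random


def map_elites(evaluate, random_genome, mutate, bins=5, iterations=2000, seed=0):
    """Toy MAP-Elites: keep the best genome found in each behavioral niche."""
    rng = random.Random(seed)
    archive = {}  # niche (tuple of bin indices) -> (fitness, genome)
    for i in range(iterations):
        if archive and i >= 100:
            # After a random warm-up phase, mutate a randomly chosen elite.
            _, parent = archive[rng.choice(sorted(archive))]
            child = mutate(parent, rng)
        else:
            child = random_genome(rng)
        fitness, descriptor = evaluate(child)
        # Discretize each descriptor value (assumed to lie in [0, 1]) into a bin.
        niche = tuple(min(int(d * bins), bins - 1) for d in descriptor)
        # Replace the niche's elite only if the child is strictly better.
        if niche not in archive or fitness > archive[niche][0]:
            archive[niche] = (fitness, child)
    return archive


# Hypothetical stand-ins for "play Hanabi and measure behavior".
def toy_random_genome(rng):
    return [rng.random() for _ in range(4)]


def toy_mutate(genome, rng):
    return [min(1.0, max(0.0, g + rng.gauss(0, 0.1))) for g in genome]


def toy_evaluate(genome):
    fitness = -sum((g - 0.5) ** 2 for g in genome)  # arbitrary quality measure
    descriptor = (genome[0], genome[1])             # arbitrary behavior features
    return fitness, descriptor


archive = map_elites(toy_evaluate, toy_random_genome, toy_mutate)
print(len(archive), "niches filled out of", 5 * 5)
```

The archive that results holds one elite per occupied niche, which is the kind of behaviorally diverse population the abstract describes using as a pool of partners.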

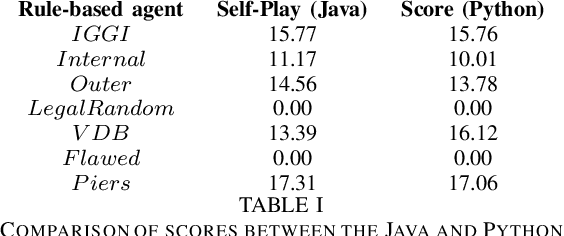

Evaluating the Rainbow DQN Agent in Hanabi with Unseen Partners

Apr 28, 2020

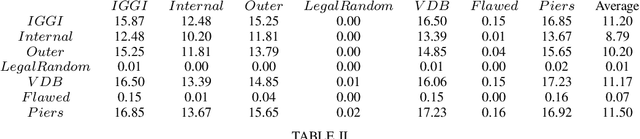

Abstract: Hanabi is a cooperative game that challenges existing AI techniques due to its focus on modeling the mental states of other players to interpret and predict their behavior. While there are agents that can achieve near-perfect scores in the game by agreeing on some shared strategy, comparatively little progress has been made in ad-hoc cooperation settings, where partners and strategies are not known in advance. In this paper, we show that agents trained through self-play using the popular Rainbow DQN architecture fail to cooperate well with simple rule-based agents that were not seen during training and, conversely, when these agents are trained to play with any individual rule-based agent, or even a mix of these agents, they fail to achieve good self-play scores.

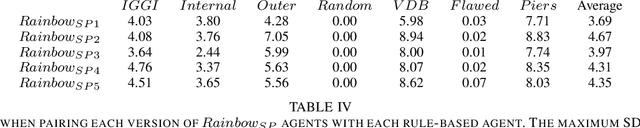

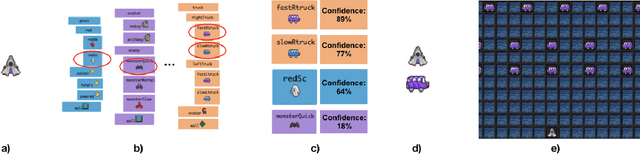

Evaluation of a Recommender System for Assisting Novice Game Designers

Aug 13, 2019

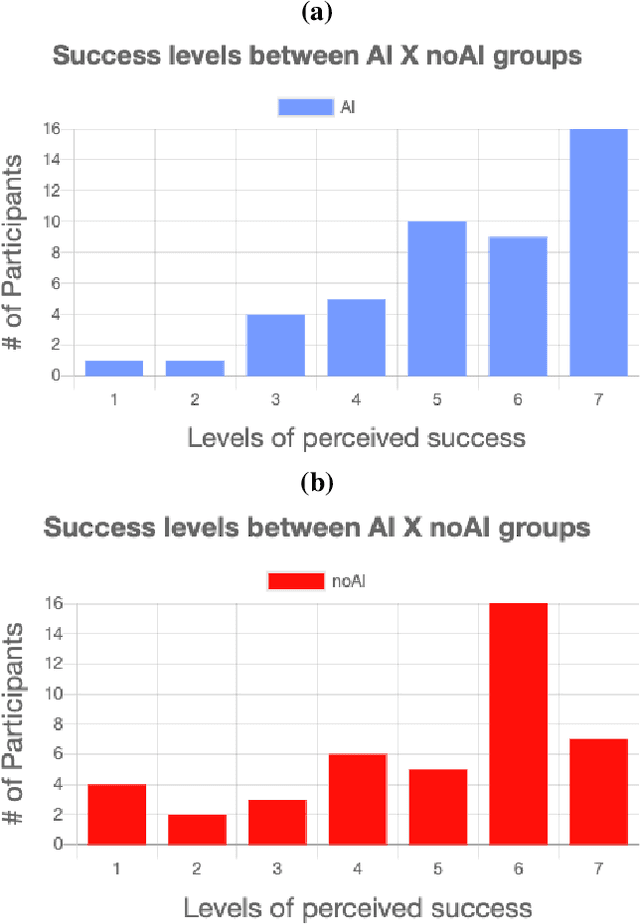

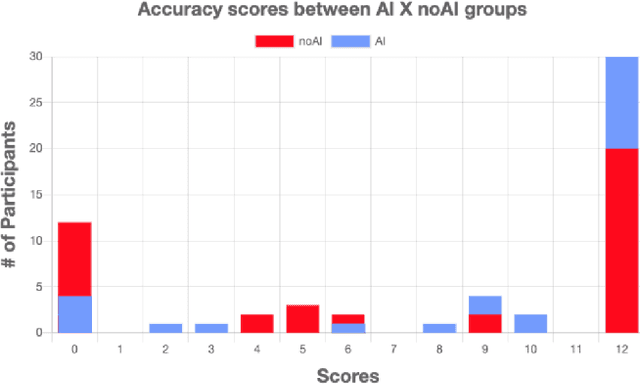

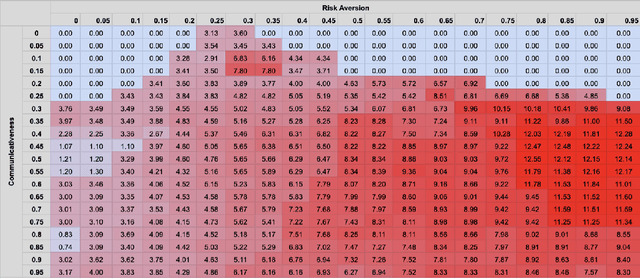

Abstract: Game development is a complex task involving multiple disciplines and technologies. Developers and researchers alike have suggested that AI-driven game design assistants may improve developer workflow. We present a recommender system for assisting humans in game design as well as a rigorous human subjects study to validate it. The AI-driven game design assistance system suggests game mechanics to designers based on characteristics of the game being developed. We believe this method can bring creative insights and increase users' productivity. We conducted quantitative studies that showed the recommender system increases users' levels of accuracy and computational affect, and decreases their levels of workload.

Pitako -- Recommending Game Design Elements in Cicero

Jul 08, 2019

Abstract: Recommender Systems are widely and successfully applied in e-commerce. Could they be used for design? In this paper, we introduce Pitako, a tool that applies the Recommender System concept to assist humans in creative tasks. More specifically, Pitako provides suggestions by taking games designed by humans as inputs, and recommends mechanics and dynamics as outputs. Pitako is implemented as a new system within the mixed-initiative AI-based Game Design Assistant, Cicero. This paper discusses the motivation behind the implementation of Pitako as well as its technical details and presents usage examples. We believe that Pitako can influence the use of recommender systems to help humans in their daily tasks.

Diverse Agents for Ad-Hoc Cooperation in Hanabi

Jul 08, 2019

Abstract: In complex scenarios where a model of other actors is necessary to predict and interpret their actions, it is often desirable that the model works well with a wide variety of previously unknown actors. Hanabi is a card game that brings the problem of modeling other players to the forefront, but there is no agreement on how to best generate a pool of agents to use as partners in ad-hoc cooperation evaluation. This paper proposes Quality Diversity algorithms as a promising class of algorithms to generate populations for this purpose and shows an initial implementation of an agent generator based on this idea. We also discuss what metrics can be used to compare such generators, and how the proposed generator could be leveraged to help build adaptive agents for the game.

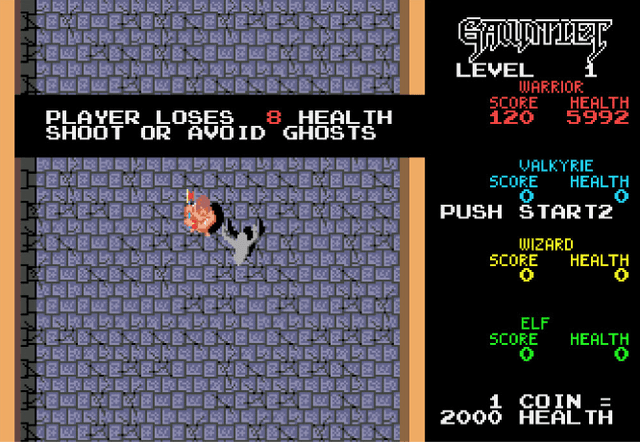

Leveling the Playing Field -- Fairness in AI Versus Human Game Benchmarks

Apr 14, 2019

Abstract: From the beginning of the history of AI, there has been interest in games as a platform of research. As the field developed, human-level competence in complex games became a target researchers worked to reach. Only relatively recently has this target been finally met for traditional tabletop games such as Backgammon, Chess and Go. Current research focus has shifted to electronic games, which provide unique challenges. As is often the case with AI research, these results are liable to be exaggerated or misrepresented by either authors or third parties. The extent to which these game benchmarks consist of fair competition between human and AI is also a matter of debate. In this work, we review the statements made by authors and third parties in the general media and academic circles about these game benchmark results and discuss factors that can impact the perception of fairness in the contest between humans and machines.

Generating Levels That Teach Mechanics

Oct 01, 2018

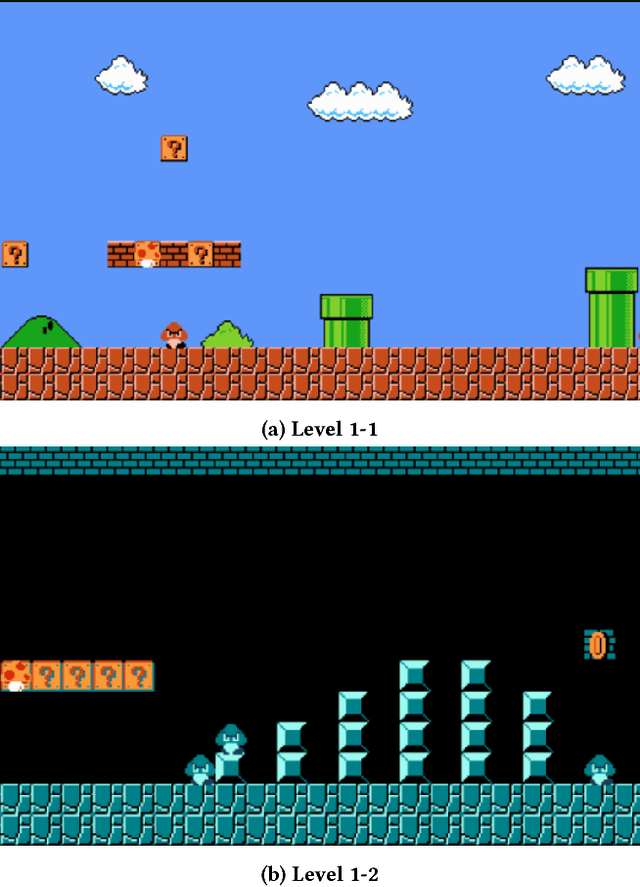

Abstract: The automatic generation of game tutorials is a challenging AI problem. While it is possible to generate annotations and instructions that explain to the player how the game is played, this paper focuses on generating a gameplay experience that introduces the player to a game mechanic. It evolves small levels for the Mario AI Framework that can only be beaten by an agent that knows how to perform specific actions in the game. It uses variations of a perfect A* agent that are limited in various ways, such as not being able to jump high or see enemies, to test how failing to do certain actions can stop the player from beating the level.

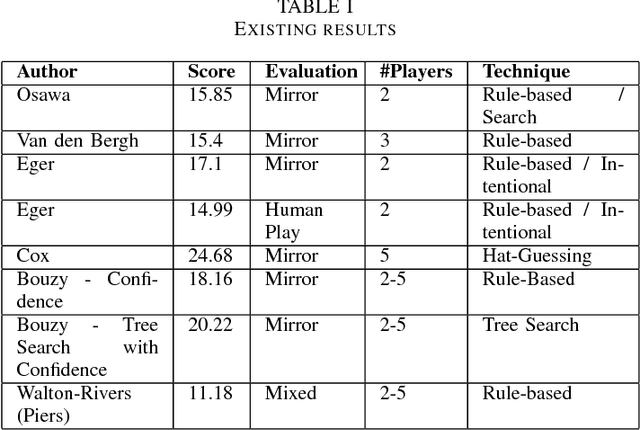

Evolving Agents for the Hanabi 2018 CIG Competition

Sep 26, 2018

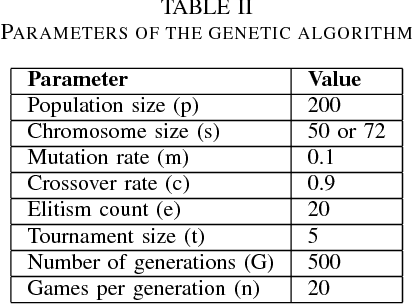

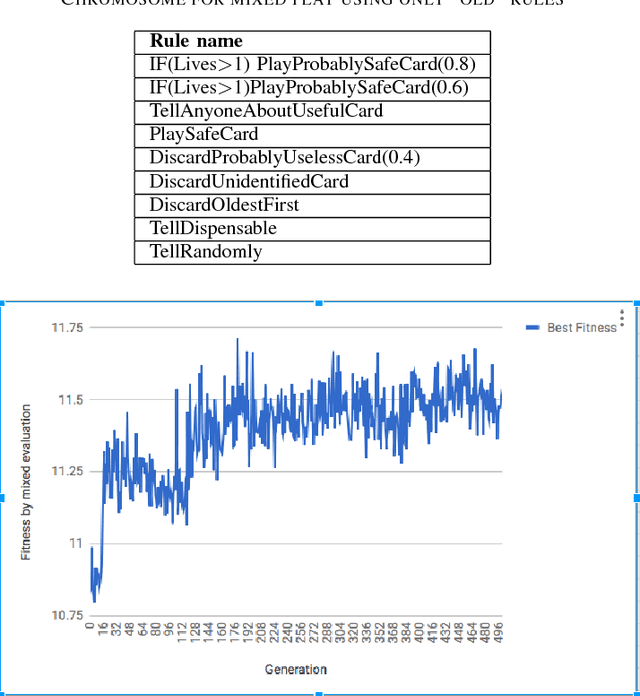

Abstract: Hanabi is a cooperative card game with hidden information that has won important awards in the industry and received some recent academic attention. A two-track competition of agents for the game will take place at the 2018 CIG conference. In this paper, we develop a genetic algorithm that builds rule-based agents by determining the best sequence of rules from a fixed rule set to use as strategy. In three separate experiments, we remove human assumptions regarding the ordering of rules, add new, more expressive rules to the rule set and independently evolve agents specialized for specific game sizes. As a result, we achieve scores superior to previously published research for the mirror and mixed evaluation of agents.
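The idea of evolving an ordering of rules can be sketched as follows. This is a toy illustration under stated assumptions, not the paper's algorithm: the rule names in `RULES` are hypothetical, `toy_fitness` is an arbitrary stand-in for the average game score an ordering would earn, and the selection scheme (keep the top half, fill the rest with swap mutations) is one simple choice among many.

```python
import random

# Hypothetical rule names; the actual rule set is defined in the paper.
RULES = [
    "play_safe_card",
    "tell_playable_card",
    "discard_useless_card",
    "tell_random",
    "discard_oldest",
]


def toy_fitness(order):
    """Stand-in fitness: rewards orderings close to an arbitrary target order."""
    target = sorted(RULES)
    return sum(a == b for a, b in zip(order, target))


def swap_mutation(order, rng):
    """Return a copy of the ordering with two positions swapped."""
    child = list(order)
    i, j = rng.sample(range(len(child)), 2)
    child[i], child[j] = child[j], child[i]
    return child


def evolve(fitness, generations=50, pop_size=20, seed=0):
    """Evolve a permutation of RULES with elitism and swap mutation."""
    rng = random.Random(seed)
    population = [rng.sample(RULES, len(RULES)) for _ in range(pop_size)]
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        elite = population[: pop_size // 2]  # the better half survives unchanged
        children = [
            swap_mutation(rng.choice(elite), rng)
            for _ in range(pop_size - len(elite))
        ]
        population = elite + children
    return max(population, key=fitness)


best = evolve(toy_fitness)
print(best, toy_fitness(best))
```

An agent built from such an ordering would typically try each rule in sequence and take the first one that applies; replacing `toy_fitness` with simulated game scores is where the real cost of the approach would lie.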

Towards Game-based Metrics for Computational Co-creativity

Sep 26, 2018

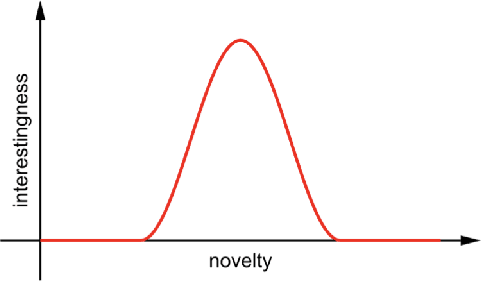

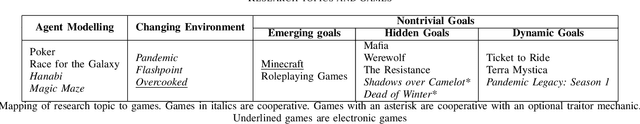

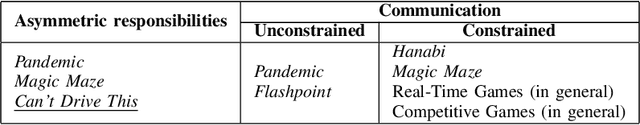

Abstract: We propose the following question: what game-like interactive system would provide a good environment for measuring the impact and success of a co-creative, cooperative agent? Creativity is often formulated in terms of novelty, value, surprise and interestingness. We review how these concepts are measured in current computational intelligence research and provide a mapping from modern electronic and tabletop games to open research problems in mixed-initiative systems and computational co-creativity. We propose application scenarios for future research, and a number of metrics under which the performance of cooperative agents in these environments will be evaluated.

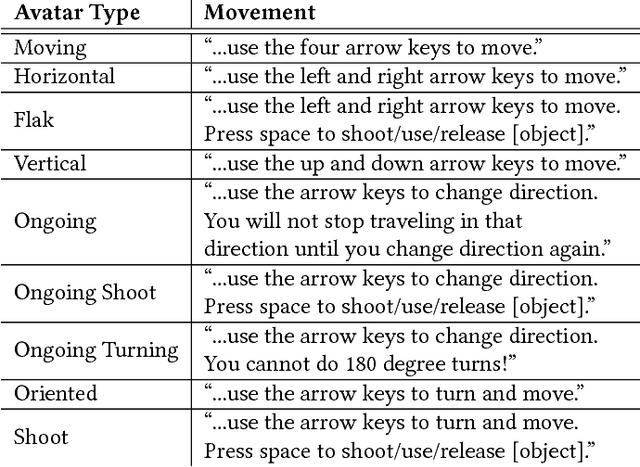

AtDelfi: Automatically Designing Legible, Full Instructions For Games

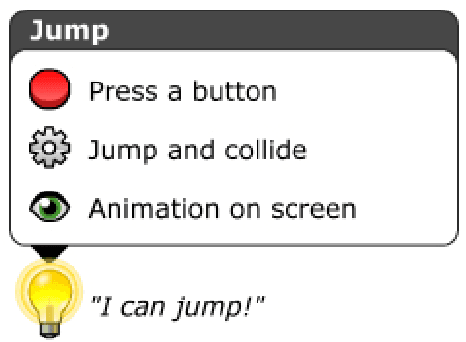

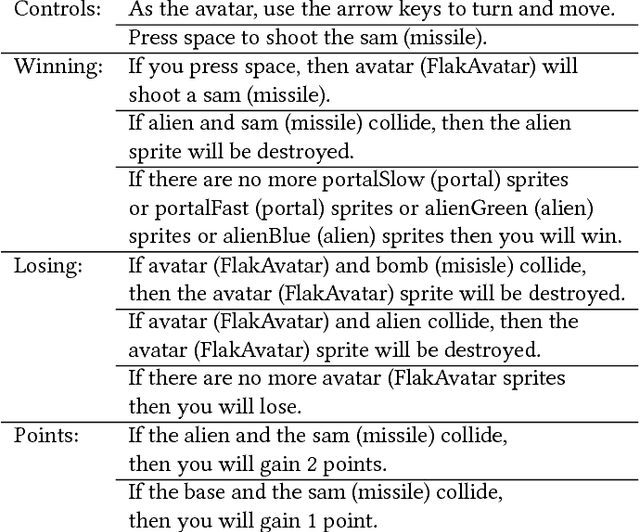

Sep 17, 2018

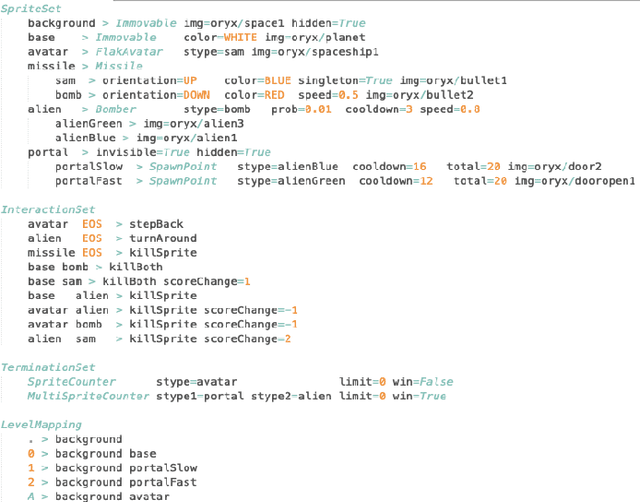

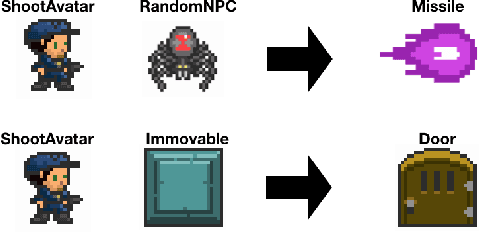

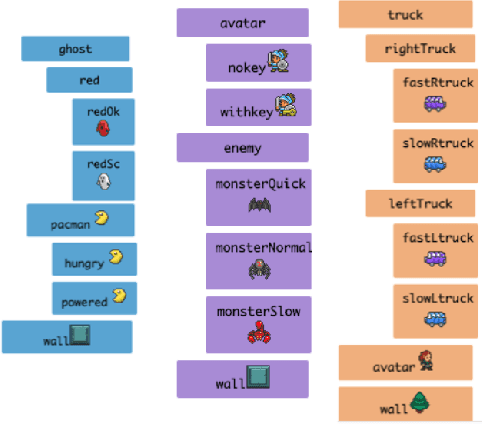

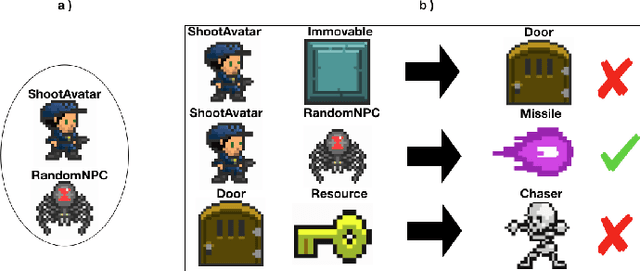

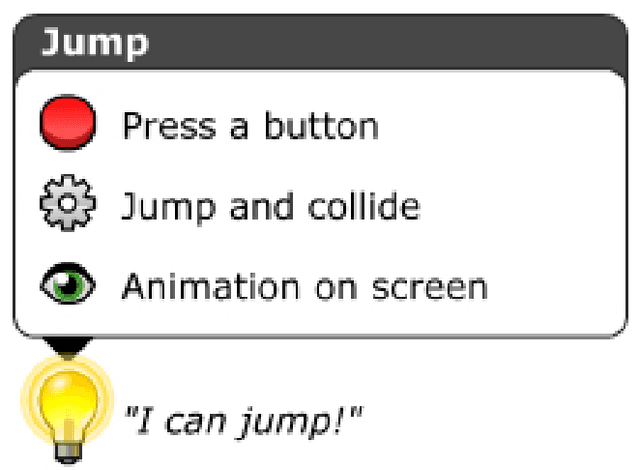

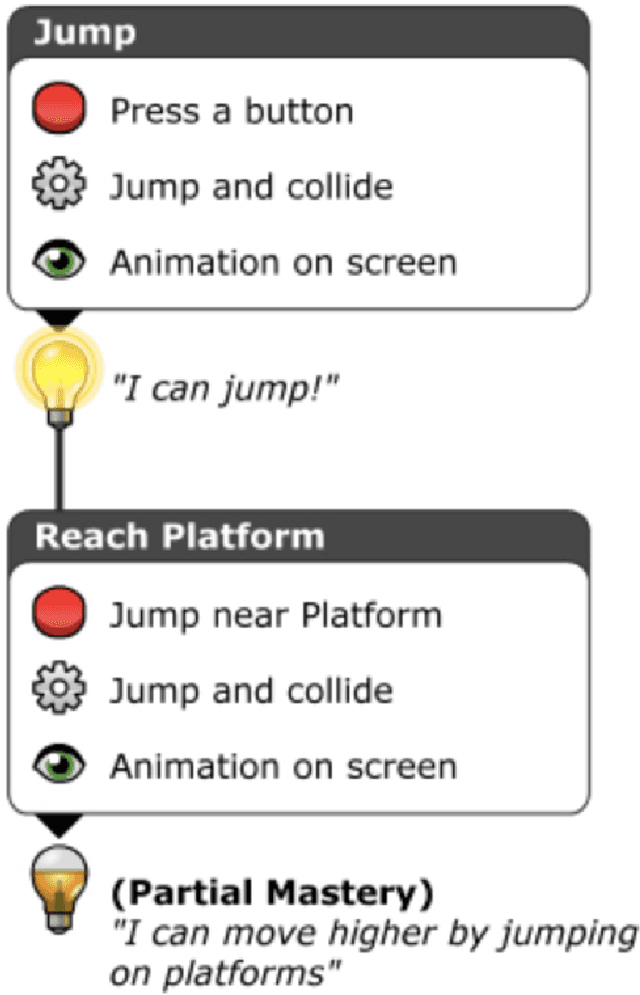

Abstract: This paper introduces a fully automatic method for generating video game tutorials. The AtDELFI system (AuTomatically DEsigning Legible, Full Instructions for games) was created to investigate procedural generation of instructions that teach players how to play video games. We present a representation of game rules and mechanics using a graph system as well as a tutorial generation method that uses said graph representation. We demonstrate the concept by testing it on games within the General Video Game Artificial Intelligence (GVG-AI) framework; the paper discusses tutorials generated for eight different games. Our findings suggest that a graph representation scheme works well for simple arcade style games such as Space Invaders and Pacman, but it appears that tutorials for more complex games might require higher-level understanding of the game than just single mechanics.

* 10 pages, 11 figures, published at Foundations of Digital Games Conference 2018
