Andre Wibisono

Tail-Sensitive KL and Rényi Convergence of Unadjusted Hamiltonian Monte Carlo via One-Shot Couplings

Jan 13, 2026

Abstract: Hamiltonian Monte Carlo (HMC) algorithms are among the most widely used sampling methods in high-dimensional settings, yet their convergence properties are poorly understood in divergences that quantify relative density mismatch, such as Kullback-Leibler (KL) and Rényi divergences. These divergences naturally govern acceptance probabilities and warm-start requirements for Metropolis-adjusted Markov chains. In this work, we develop a framework for upgrading Wasserstein convergence guarantees for unadjusted Hamiltonian Monte Carlo (uHMC) to guarantees in tail-sensitive KL and Rényi divergences. Our approach is based on one-shot couplings, which we use to establish a regularization property of the uHMC transition kernel. This regularization allows Wasserstein-2 mixing-time and asymptotic bias bounds to be lifted to KL divergence, and analogous Orlicz-Wasserstein bounds to be lifted to Rényi divergence, paralleling earlier work of Bou-Rabee and Eberle (2023), which upgrades Wasserstein-1 bounds to total variation distance via kernel smoothing. As a consequence, our results provide quantitative control of relative density mismatch, clarify the role of discretization bias in strong divergences, and yield principled guarantees relevant both for unadjusted sampling and for generating warm starts for Metropolis-adjusted Markov chains.

Fast and Furious Symmetric Learning in Zero-Sum Games: Gradient Descent as Fictitious Play

Jun 16, 2025

Abstract: This paper investigates the sublinear regret guarantees of two non-no-regret algorithms in zero-sum games: Fictitious Play, and Online Gradient Descent with constant stepsizes. In general adversarial online learning settings, both algorithms may exhibit instability and linear regret due to no regularization (Fictitious Play) or small amounts of regularization (Gradient Descent). However, their ability to obtain tighter regret bounds in two-player zero-sum games is less understood. In this work, we obtain strong new regret guarantees for both algorithms on a class of symmetric zero-sum games that generalize the classic three-strategy Rock-Paper-Scissors to a weighted, n-dimensional regime. Under symmetric initializations of the players' strategies, we prove that Fictitious Play with any tiebreaking rule has $O(\sqrt{T})$ regret, establishing a new class of games for which Karlin's Fictitious Play conjecture holds. Moreover, by leveraging a connection between the geometry of the iterates of Fictitious Play and Gradient Descent in the dual space of payoff vectors, we prove that Gradient Descent, for almost all symmetric initializations, obtains a similar $O(\sqrt{T})$ regret bound when its stepsize is a sufficiently large constant. For Gradient Descent, this establishes the first "fast and furious" behavior (i.e., sublinear regret without time-vanishing stepsizes) for zero-sum games larger than 2x2.

Hamiltonian Descent Algorithms for Optimization: Accelerated Rates via Randomized Integration Time

May 18, 2025

Abstract: We study the Hamiltonian flow for optimization (HF-opt), which simulates the Hamiltonian dynamics for some integration time and resets the velocity to $0$ to decrease the objective function; this is the optimization analogue of the Hamiltonian Monte Carlo algorithm for sampling. For short integration time, HF-opt has the same convergence rates as gradient descent for minimizing strongly and weakly convex functions. We show that by randomizing the integration time in HF-opt, the resulting randomized Hamiltonian flow (RHF) achieves accelerated convergence rates in continuous time, similar to the rates for the accelerated gradient flow. We study a discrete-time implementation of RHF as the randomized Hamiltonian gradient descent (RHGD) algorithm. We prove that RHGD achieves the same accelerated convergence rates as Nesterov's accelerated gradient descent (AGD) for minimizing smooth strongly and weakly convex functions. We provide numerical experiments to demonstrate that RHGD is competitive with classical accelerated methods such as AGD across all settings and outperforms them in certain regimes.
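
The flow-then-reset step described in this abstract can be illustrated on a toy problem. The sketch below is not the paper's implementation: it assumes a diagonal quadratic objective $f(x) = \frac{1}{2}\sum_i a_i x_i^2$ with unit mass, for which the Hamiltonian dynamics $\dot x = v$, $\dot v = -\nabla f(x)$ started from zero velocity have the closed form $x_i(t) = x_i(0)\cos(\sqrt{a_i}\,t)$. The names `hf_opt_quadratic` and `objective` are hypothetical.

```python
import math


def hf_opt_quadratic(x0, eigs, h, steps):
    """Flow-then-reset iteration for f(x) = 0.5 * sum(a_i * x_i^2).

    Assumption: unit mass and a diagonal quadratic, so the exact flow from
    zero velocity is x_i(t) = x_i(0) * cos(sqrt(a_i) * t). Flowing for time h
    and then resetting the velocity to 0 multiplies x_i by cos(sqrt(a_i) * h).
    """
    x = list(x0)
    for _ in range(steps):
        x = [xi * math.cos(math.sqrt(a) * h) for xi, a in zip(x, eigs)]
    return x


def objective(x, eigs):
    """Evaluate f(x) = 0.5 * sum(a_i * x_i^2)."""
    return 0.5 * sum(a * xi * xi for xi, a in zip(x, eigs))
```

For small $h$, $\cos(\sqrt{a_i}\,h) \approx 1 - a_i h^2/2$, so in this toy case one step is close to a gradient descent step with stepsize $h^2/2$, which is consistent with the short-integration-time statement in the abstract.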

Mixing Time of the Proximal Sampler in Relative Fisher Information via Strong Data Processing Inequality

Feb 08, 2025

Abstract: We study the mixing time guarantee for sampling in relative Fisher information via the Proximal Sampler algorithm, which is an approximate proximal discretization of the Langevin dynamics. We show that when the target probability distribution is strongly log-concave, the relative Fisher information converges exponentially fast along the Proximal Sampler; this matches the exponential convergence rate of the relative Fisher information along the continuous-time Langevin dynamics for strongly log-concave target. When combined with a standard implementation of the Proximal Sampler via rejection sampling, this exponential convergence rate provides a high-accuracy iteration complexity guarantee for the Proximal Sampler in relative Fisher information when the target distribution is strongly log-concave and log-smooth. Our proof proceeds by establishing a strong data processing inequality for relative Fisher information along the Gaussian channel under strong log-concavity, and a data processing inequality along the reverse Gaussian channel for a special distribution. The forward and reverse Gaussian channels compose to form the Proximal Sampler, and these data processing inequalities imply the exponential convergence rate of the relative Fisher information along the Proximal Sampler.

Convergence of the Min-Max Langevin Dynamics and Algorithm for Zero-Sum Games

Dec 29, 2024

Abstract: We study zero-sum games in the space of probability distributions over the Euclidean space $\mathbb{R}^d$ with entropy regularization, in the setting when the interaction function between the players is smooth and strongly convex-concave. We prove an exponential convergence guarantee for the mean-field min-max Langevin dynamics to compute the equilibrium distribution of the zero-sum game. We also study the finite-particle approximation of the mean-field min-max Langevin dynamics, both in continuous and discrete times. We prove biased convergence guarantees for the continuous-time finite-particle min-max Langevin dynamics to the stationary mean-field equilibrium distribution with an explicit bias estimate which does not scale with the number of particles. We also prove biased convergence guarantees for the discrete-time finite-particle min-max Langevin algorithm to the stationary mean-field equilibrium distribution with an additional bias term which scales with the step size and the number of particles. This provides an explicit iteration complexity for the average particle along the finite-particle algorithm to approximately compute the equilibrium distribution of the zero-sum game.

High-accuracy sampling from constrained spaces with the Metropolis-adjusted Preconditioned Langevin Algorithm

Dec 24, 2024

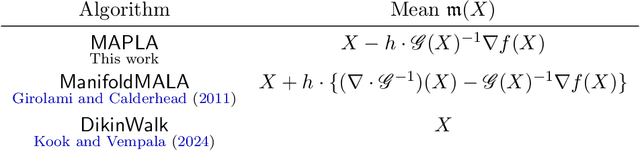

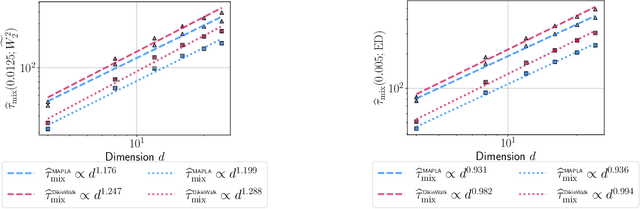

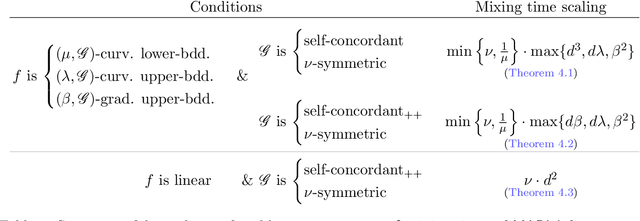

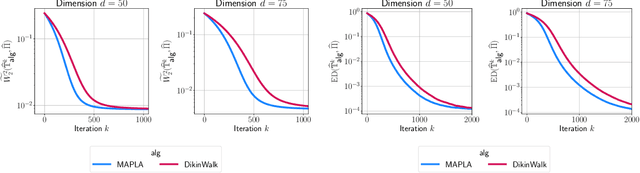

Abstract: In this work, we propose a first-order sampling method called the Metropolis-adjusted Preconditioned Langevin Algorithm for approximate sampling from a target distribution whose support is a proper convex subset of $\mathbb{R}^{d}$. Our proposed method is the result of applying a Metropolis-Hastings filter to the Markov chain formed by a single step of the preconditioned Langevin algorithm with a metric $\mathscr{G}$, and is motivated by the natural gradient descent algorithm for optimisation. We derive non-asymptotic upper bounds for the mixing time of this method for sampling from target distributions whose potentials are bounded relative to $\mathscr{G}$, and for exponential distributions restricted to the support. Our analysis suggests that if $\mathscr{G}$ satisfies stronger notions of self-concordance introduced in Kook and Vempala (2024), then these mixing time upper bounds have a strictly better dependence on the dimension than when $\mathscr{G}$ is merely self-concordant. We also provide numerical experiments that demonstrate the practicality of our proposed method. Our method is a high-accuracy sampler due to the polylogarithmic dependence on the error tolerance in our mixing time upper bounds.

Fast Convergence of $\Phi$-Divergence Along the Unadjusted Langevin Algorithm and Proximal Sampler

Oct 14, 2024

Abstract: We study the mixing time of two popular discrete-time Markov chains in continuous space, the unadjusted Langevin algorithm and the proximal sampler, which are discretizations of the Langevin dynamics. We extend mixing time analyses for these Markov chains to hold in $\Phi$-divergence. We show that any $\Phi$-divergence arising from a twice-differentiable strictly convex function $\Phi$ converges to $0$ exponentially fast along these Markov chains, under the assumption that their stationary distributions satisfy the corresponding $\Phi$-Sobolev inequality. Our rates of convergence are tight and include as special cases popular mixing time regimes, namely the mixing in chi-squared divergence under a Poincaré inequality, and the mixing in relative entropy under a log-Sobolev inequality. Our results follow by bounding the contraction coefficients arising in the appropriate strong data processing inequalities.
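
As background for the unadjusted Langevin algorithm (ULA) named in this abstract, the standard update is $x_{k+1} = x_k - h\nabla f(x_k) + \sqrt{2h}\,z_k$ with $z_k \sim \mathcal{N}(0, I)$. The sketch below is an illustration only, not taken from the paper: it assumes the one-dimensional target $f(x) = x^2/2$ (a standard Gaussian), where the variance of the iterates can be tracked exactly and converges geometrically to $1/(1 - h/2)$, the variance of the chain's own stationary distribution rather than the target's. The function names are hypothetical.

```python
def ula_variance(h, steps, var0=0.0):
    """Track Var(x_k) for ULA on f(x) = x^2 / 2.

    Assumption: 1D standard Gaussian target, so the update is
    x_{k+1} = (1 - h) * x_k + sqrt(2h) * z_k with z_k ~ N(0, 1),
    which gives Var(x_{k+1}) = (1 - h)^2 * Var(x_k) + 2h.
    """
    var = var0
    for _ in range(steps):
        var = (1.0 - h) ** 2 * var + 2.0 * h
    return var


def ula_stationary_variance(h):
    """Fixed point of the variance recursion, valid for 0 < h < 2."""
    return 1.0 / (1.0 - h / 2.0)
```

In this toy case the gap between $1/(1 - h/2)$ and the target variance $1$ shrinks as $h \to 0$, which is the usual picture of a biased stationary distribution for an unadjusted discretization.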

A Symplectic Analysis of Alternating Mirror Descent

May 06, 2024

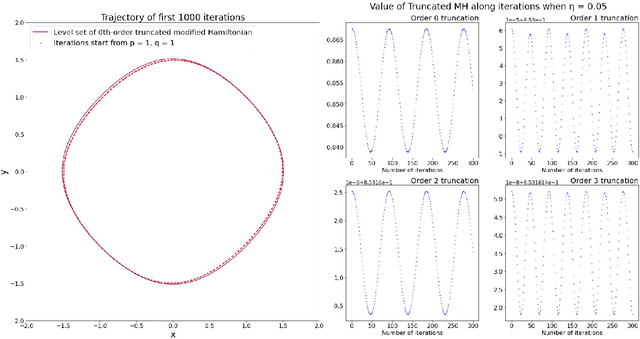

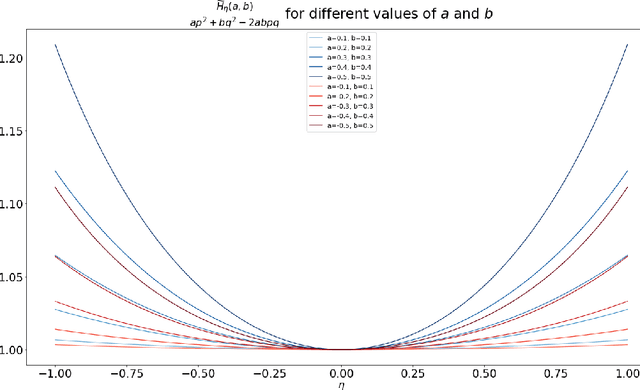

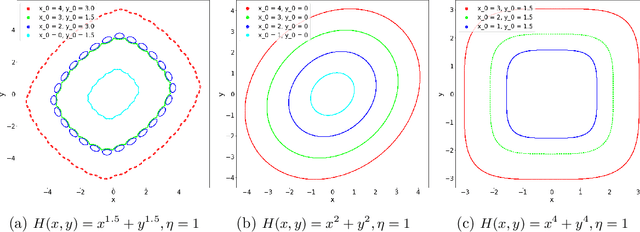

Abstract: Motivated by understanding the behavior of the Alternating Mirror Descent (AMD) algorithm for bilinear zero-sum games, we study the discretization of continuous-time Hamiltonian flow via the symplectic Euler method. We provide a framework for analysis using results from Hamiltonian dynamics, Lie algebra, and symplectic numerical integrators, with an emphasis on the existence and properties of a conserved quantity, the modified Hamiltonian (MH), for the symplectic Euler method. We compute the MH in closed-form when the original Hamiltonian is a quadratic function, and show that it generally differs from the other conserved quantity known previously in that case. We derive new error bounds on the MH when truncated at orders in the stepsize in terms of the number of iterations, $K$, and utilize this bound to show an improved $\mathcal{O}(K^{1/5})$ total regret bound and an $\mathcal{O}(K^{-4/5})$ duality gap of the average iterates for AMD. Finally, we propose a conjecture which, if true, would imply that the total regret for AMD goes as $\mathcal{O}\left(K^{\varepsilon}\right)$ and the duality gap of the average iterates as $\mathcal{O}\left(K^{-1+\varepsilon}\right)$ for any $\varepsilon>0$, and we can take $\varepsilon=0$ upon certain convergence conditions for the MH.

On Independent Samples Along the Langevin Diffusion and the Unadjusted Langevin Algorithm

Feb 26, 2024

Abstract: We study the rate at which the initial and current random variables become independent along a Markov chain, focusing on the Langevin diffusion in continuous time and the Unadjusted Langevin Algorithm (ULA) in discrete time. We measure the dependence between random variables via their mutual information. For the Langevin diffusion, we show the mutual information converges to $0$ exponentially fast when the target is strongly log-concave, and at a polynomial rate when the target is weakly log-concave. These rates are analogous to the mixing time of the Langevin diffusion under similar assumptions. For the ULA, we show the mutual information converges to $0$ exponentially fast when the target is strongly log-concave and smooth. We prove our results by developing the mutual version of the mixing time analyses of these Markov chains. We also provide alternative proofs based on strong data processing inequalities for the Langevin diffusion and the ULA, and by showing regularity results for these processes in mutual information.

Optimal score estimation via empirical Bayes smoothing

Feb 12, 2024

Abstract: We study the problem of estimating the score function of an unknown probability distribution $\rho^*$ from $n$ independent and identically distributed observations in $d$ dimensions. Assuming that $\rho^*$ is subgaussian and has a Lipschitz-continuous score function $s^*$, we establish the optimal rate of $\tilde \Theta(n^{-\frac{2}{d+4}})$ for this estimation problem under the loss function $\|\hat s - s^*\|^2_{L^2(\rho^*)}$ that is commonly used in the score matching literature, highlighting the curse of dimensionality where sample complexity for accurate score estimation grows exponentially with the dimension $d$. Leveraging key insights in empirical Bayes theory as well as a new convergence rate of smoothed empirical distribution in Hellinger distance, we show that a regularized score estimator based on a Gaussian kernel attains this rate, shown optimal by a matching minimax lower bound. We also discuss the implication of our theory on the sample complexity of score-based generative models.
