Abhinav Lalwani

Machine Learning in Sports: A Case Study on Using Explainable Models for Predicting Outcomes of Volleyball Matches

Jun 18, 2022

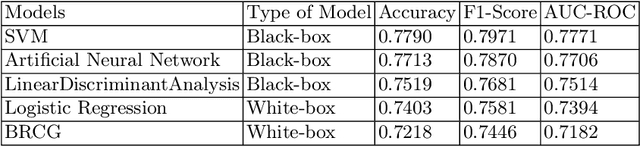

Abstract: Machine Learning has become an integral part of engineering design and decision making in several domains, including sports. Deep Neural Networks (DNNs) have been the state-of-the-art methods for predicting outcomes of professional sports events. However, apart from predicting these outcomes with high accuracy, it is necessary to answer questions such as "Why did the model predict that Team A would win Match X against Team B?" DNNs are inherently black-box in nature. Therefore, it is necessary to provide high-quality, interpretable, and understandable explanations for a model's predictions in sports. This paper explores a two-phased Explainable Artificial Intelligence (XAI) approach to predict outcomes of matches in the Brazilian Volleyball League (SuperLiga). In the first phase, we directly use interpretable ML models that provide a global understanding of the model's behavior: Boolean Rule Column Generation (BRCG), which extracts simple AND-OR classification rules, and Logistic Regression (LogReg), which allows feature importance scores to be estimated. In the second phase, we construct non-linear models such as a Support Vector Machine (SVM) and a Deep Neural Network (DNN) to predict the outcomes of volleyball matches. We construct "post-hoc" explanations for each data instance using ProtoDash, a method that finds prototypes in the training dataset that are most similar to the test instance, and SHAP, a method that estimates the contribution of each feature to the model's prediction. We evaluate the SHAP explanations using the faithfulness metric. Our results demonstrate the effectiveness of the explanations for the model's predictions.
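The abstract does not spell out how the faithfulness metric is computed. As a rough illustration only, the sketch below uses one common formulation: replace each feature with a baseline value, record how much the predicted probability drops, and correlate those drops with the attribution scores. The toy model, the zero baseline, and the names `faithfulness`, `pearson`, and `toy_model` are all assumptions made for this example and are not taken from the paper.

```python
import math


def pearson(xs, ys):
    """Pearson correlation between two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(xs, ys))
    vx = sum((a - mx) ** 2 for a in xs)
    vy = sum((b - my) ** 2 for b in ys)
    return cov / math.sqrt(vx * vy)


def faithfulness(predict_proba, x, attributions, baseline):
    """Correlate attribution scores with per-feature probability drops.

    predict_proba: hypothetical callable returning P(predicted class) for one instance.
    baseline: assumed "feature removed" values, one per feature.
    """
    p_full = predict_proba(x)
    drops = []
    for i in range(len(x)):
        x_ablated = list(x)
        x_ablated[i] = baseline[i]  # "remove" feature i
        drops.append(p_full - predict_proba(x_ablated))
    return pearson(attributions, drops)


def toy_model(x):
    """Stand-in logistic model with made-up weights (not the paper's model)."""
    weights = [2.0, -1.0, 0.5]
    z = sum(w * v for w, v in zip(weights, x))
    return 1.0 / (1.0 + math.exp(-z))


x = [1.0, 1.0, 1.0]
baseline = [0.0, 0.0, 0.0]

# Attributions that match the model's true weights vs. sign-flipped ones.
score_good = faithfulness(toy_model, x, [2.0, -1.0, 0.5], baseline)
score_bad = faithfulness(toy_model, x, [-2.0, 1.0, -0.5], baseline)
print(score_good, score_bad)
```

Under this formulation, a score near 1 means the features ranked as most important are also the ones whose removal changes the prediction most, while a negative score suggests the explanation is misleading.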

Logical Fallacy Detection

Feb 28, 2022

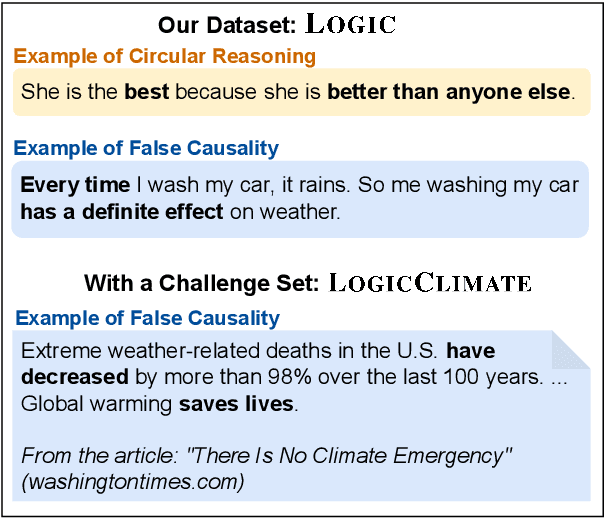

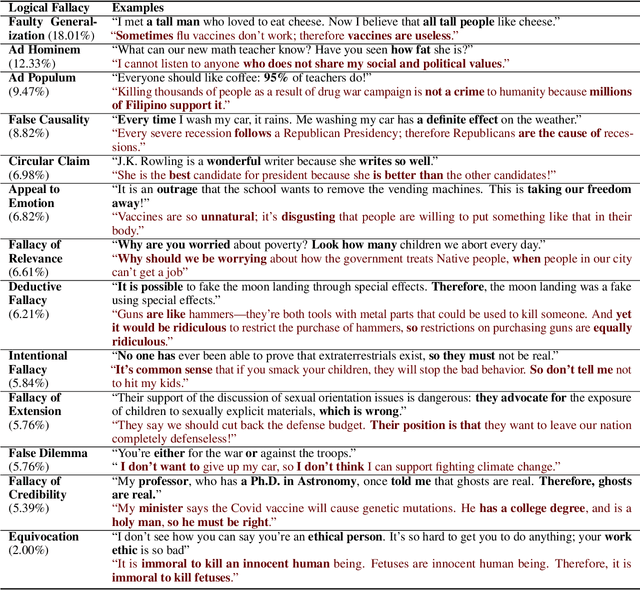

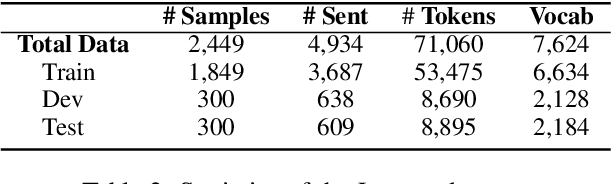

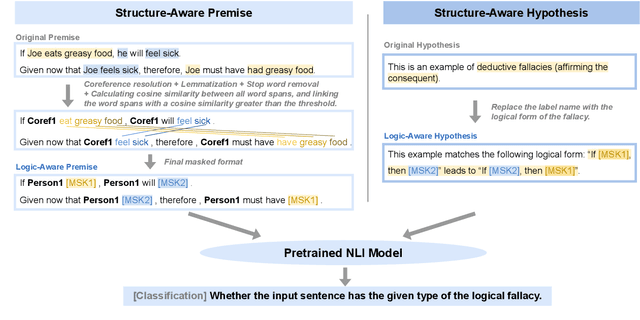

Abstract: Reasoning is central to human intelligence. However, fallacious arguments are common, and some exacerbate problems such as spreading misinformation about climate change. In this paper, we propose the task of logical fallacy detection, and provide a new dataset (Logic) of logical fallacies generally found in text, together with an additional challenge set for detecting logical fallacies in climate change claims (LogicClimate). Detecting logical fallacies is a hard problem as the model must understand the underlying logical structure of the argument. We find that existing pretrained large language models perform poorly on this task. In contrast, we show that a simple structure-aware classifier outperforms the best language model by 5.46% on Logic and 3.86% on LogicClimate. We encourage future work to explore this task as (a) it can serve as a new reasoning challenge for language models, and (b) it can have potential applications in tackling the spread of misinformation.