Time Series Forecasting with Hypernetworks Generating Parameters in Advance

Nov 22, 2022

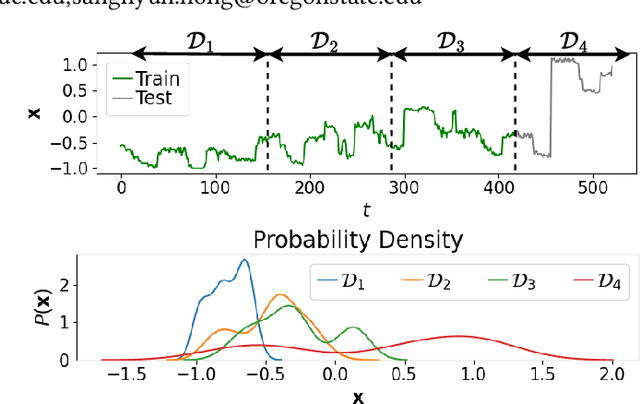

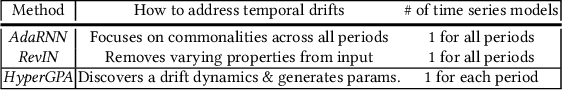

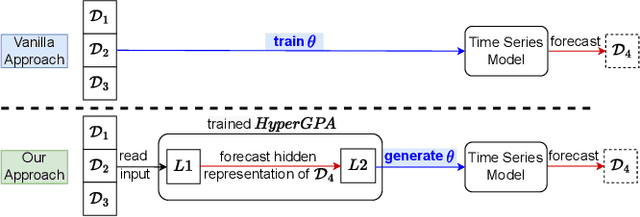

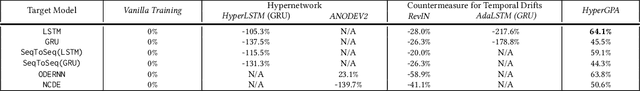

Forecasting future outcomes from recent time series data is difficult, especially when future data differ from the past (i.e., when the time series are subject to temporal drift). Existing approaches perform poorly under such drift, and we identify the main reason: whenever the underlying dynamics change, a model needs time to collect sufficient training data and adjust its parameters to the new, complicated temporal patterns. To address this issue, we study a new approach: instead of adjusting model parameters by continuously re-training a model on new data, we build a hypernetwork that generates the parameters of other target models so that they are expected to perform well on future data. The model parameters can therefore be adjusted in advance, provided the hypernetwork's predictions are accurate. We conduct extensive experiments with 6 target models, 6 baselines, and 4 datasets, and show that our method, HyperGPA, outperforms the baselines.
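The core idea, a hypernetwork that outputs a target model's parameters for an upcoming period, can be illustrated with a toy sketch. This is not HyperGPA's architecture, which the abstract does not specify. It assumes a hypothetical linear target model and a hypothetical linear hypernetwork that maps the parameters of the last few periods to the parameters of the next one. All names (`generate_params`, `linear_forecast`, `hyper_w`, `hyper_b`) are illustrative, and the hypernetwork weights are set by hand rather than learned.

```python
def linear_forecast(params, window):
    """Hypothetical target model: y = w . window + b, with params = [*w, b]."""
    *w, b = params
    return sum(wi * xi for wi, xi in zip(w, window)) + b


def generate_params(hyper_w, hyper_b, past_params):
    """Toy linear hypernetwork (an assumption, not the paper's model).

    Flattens the parameter vectors of past periods and applies one linear
    map to produce the target model's parameters for the next period.
    """
    flat = [p for params in past_params for p in params]
    return [
        sum(w * x for w, x in zip(row, flat)) + b
        for row, b in zip(hyper_w, hyper_b)
    ]


# Target parameters [w, b] observed in the two most recent periods.
past_params = [[1.0, 0.0], [2.0, 0.5]]

# Hand-set hypernetwork weights that extrapolate each coordinate linearly:
# next = 2 * latest - previous. A real hypernetwork would learn its weights.
hyper_w = [
    [-1.0, 0.0, 2.0, 0.0],
    [0.0, -1.0, 0.0, 2.0],
]
hyper_b = [0.0, 0.0]

# Parameters are generated before any data from the next period arrives.
next_params = generate_params(hyper_w, hyper_b, past_params)
prediction = linear_forecast(next_params, [2.0])
```

In this sketch the target model is never re-trained on the new period. Its parameters come entirely from the hypernetwork, so forecast quality depends on how well the hypernetwork anticipates the drift.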