Mamba as Decision Maker: Exploring Multi-scale Sequence Modeling in Offline Reinforcement Learning

Paper and Code

Jun 04, 2024

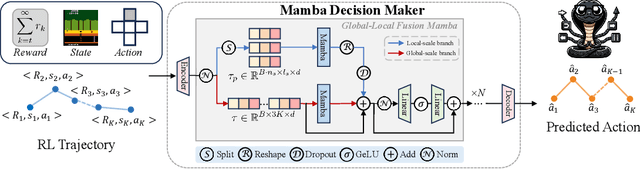

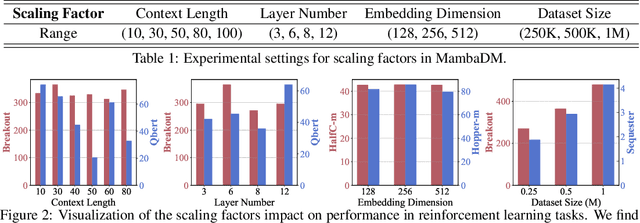

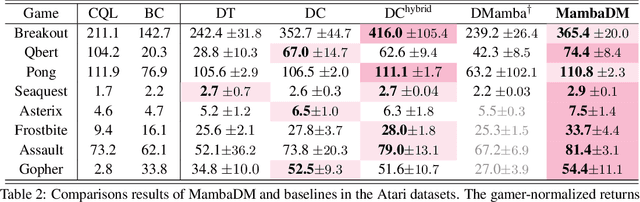

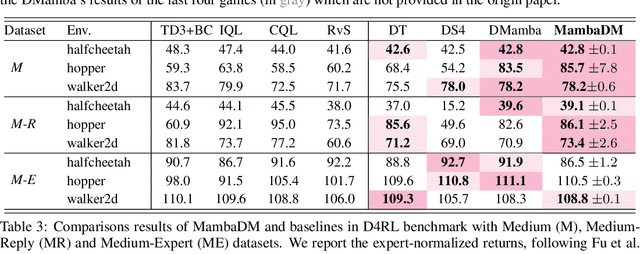

Sequential modeling has demonstrated remarkable capabilities in offline reinforcement learning (RL), with Decision Transformer (DT) being one of the most notable representatives, achieving significant success. However, RL trajectories possess unique properties to be distinguished from the conventional sequence (e.g., text or audio): (1) local correlation, where the next states in RL are theoretically determined solely by current states and actions based on the Markov Decision Process (MDP), and (2) global correlation, where each step's features are related to long-term historical information due to the time-continuous nature of trajectories. In this paper, we propose a novel action sequence predictor, named Mamba Decision Maker (MambaDM), where Mamba is expected to be a promising alternative for sequence modeling paradigms, owing to its efficient modeling of multi-scale dependencies. In particular, we introduce a novel mixer module that proficiently extracts and integrates both global and local features of the input sequence, effectively capturing interrelationships in RL datasets. Extensive experiments demonstrate that MambaDM achieves state-of-the-art performance in Atari and OpenAI Gym datasets. Furthermore, we empirically investigate the scaling laws of MambaDM, finding that increasing model size does not bring performance improvement, but scaling the dataset amount by 2x for MambaDM can obtain up to 33.7% score improvement on Atari dataset. This paper delves into the sequence modeling capabilities of MambaDM in the RL domain, paving the way for future advancements in robust and efficient decision-making systems. Our code will be available at https://github.com/AndyCao1125/MambaDM.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge