Zhihao Chi

NormKD: Normalized Logits for Knowledge Distillation

Aug 01, 2023

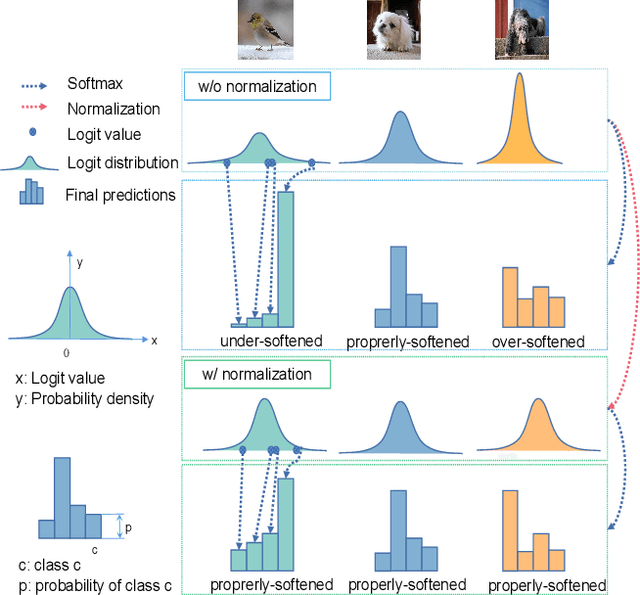

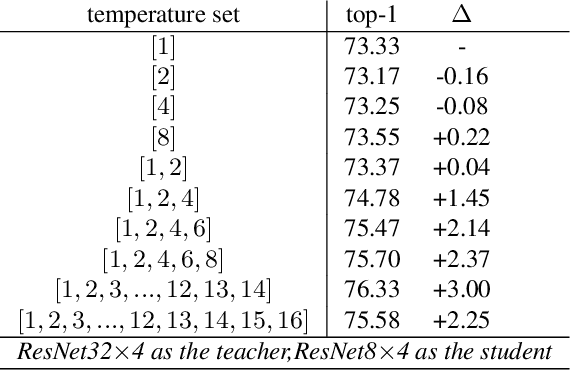

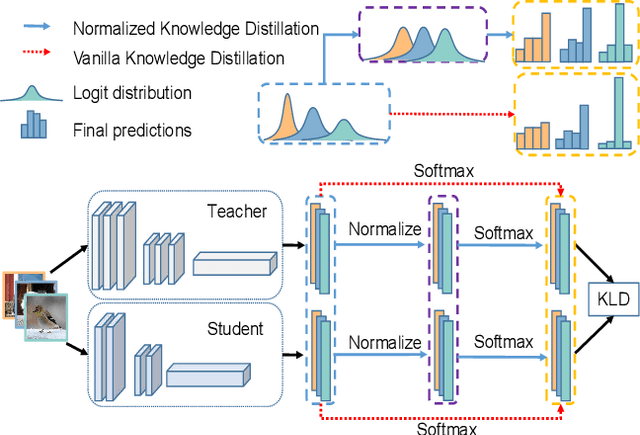

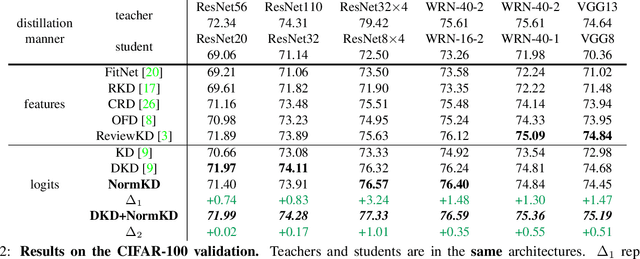

Abstract: Logit-based knowledge distillation has received less attention in recent years because feature-based methods perform better in most cases. Nevertheless, we find that it still has untapped potential when we re-investigate the temperature, a crucial hyper-parameter used to soften the logit outputs. Most previous works set it to a fixed value for the entire distillation procedure. However, because the logits of different samples are distributed very differently, a single temperature cannot soften all of them to an equal degree, so previous methods may transfer the knowledge of each sample inadequately. In this paper, we restudy the temperature hyper-parameter and show that a single value cannot sufficiently distill the knowledge from each sample. To address this issue, we propose Normalized Knowledge Distillation (NormKD), which customizes the temperature for each sample according to the characteristics of that sample's logit distribution. Compared to vanilla KD, NormKD adds almost no computation or storage cost but performs significantly better on CIFAR-100 and ImageNet for image classification. Furthermore, NormKD can be easily applied to other logit-based methods, where it achieves performance close to or even better than that of feature-based methods.
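The idea of a per-sample temperature can be illustrated with a minimal NumPy sketch. The abstract does not say how the temperature is derived from a sample's logit distribution, so this sketch assumes it is proportional to the standard deviation of that sample's logits. The function names, the `t_norm` scale factor, and the plain KL loss without any temperature-squared rescaling are all illustrative choices, not the authors' implementation.

```python
import numpy as np

def log_softmax(z):
    """Numerically stable log-softmax over the last axis."""
    z = z - z.max(axis=-1, keepdims=True)
    return z - np.log(np.exp(z).sum(axis=-1, keepdims=True))

def per_sample_temperature(logits, t_norm=1.0, eps=1e-7):
    """Hypothetical rule: one temperature per row, proportional to the
    standard deviation of that row's logits (an assumption, see lead-in)."""
    return t_norm * logits.std(axis=-1, keepdims=True) + eps

def soft_kl(student_logits, teacher_logits, t_student, t_teacher):
    """Mean KL(teacher || student) between temperature-softened outputs.
    Temperatures may be scalars (vanilla KD) or arrays of shape (batch, 1)."""
    log_p_t = log_softmax(teacher_logits / t_teacher)
    log_p_s = log_softmax(student_logits / t_student)
    kl = (np.exp(log_p_t) * (log_p_t - log_p_s)).sum(axis=-1)
    return kl.mean()

# Two teacher samples whose logits differ only in scale.
teacher = np.array([[10.0, 0.0, 0.0],
                    [1.0, 0.0, 0.0]])
student = np.array([[4.0, 1.0, 0.0],
                    [0.5, 0.2, 0.0]])

# Vanilla KD: a single fixed temperature for every sample.
loss_fixed = soft_kl(student, teacher, 4.0, 4.0)

# Per-sample variant: each row is softened by its own temperature.
loss_per_sample = soft_kl(
    student, teacher,
    per_sample_temperature(student),
    per_sample_temperature(teacher),
)
```

With a fixed temperature, the first teacher row stays much sharper than the second after softening. Under the assumed rule, both rows are rescaled to unit standard deviation before the softmax, so they are softened to the same degree, which is the behaviour the abstract argues a single temperature cannot provide.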

APPT: Asymmetric Parallel Point Transformer for 3D Point Cloud Understanding

Mar 31, 2023

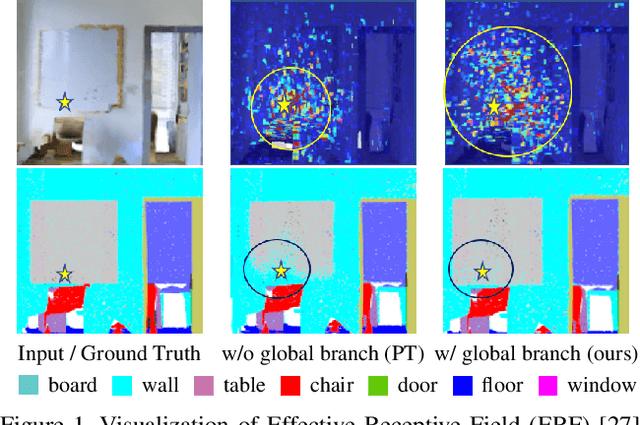

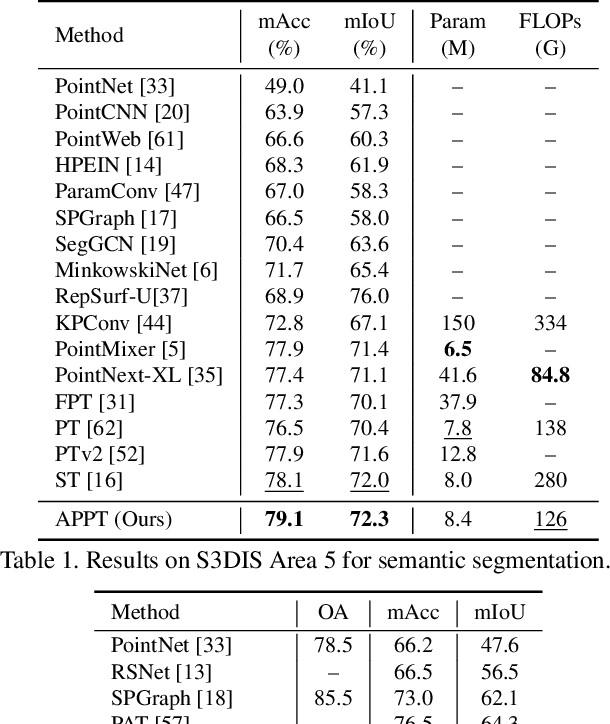

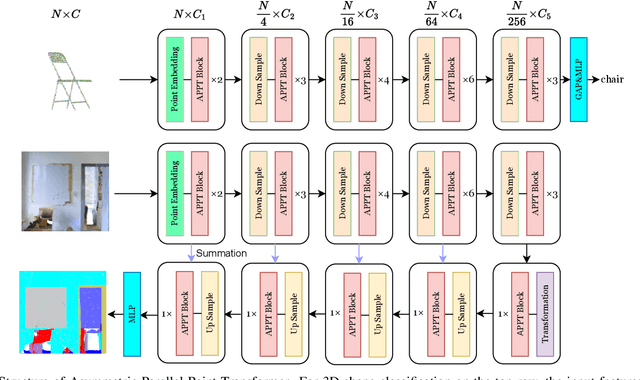

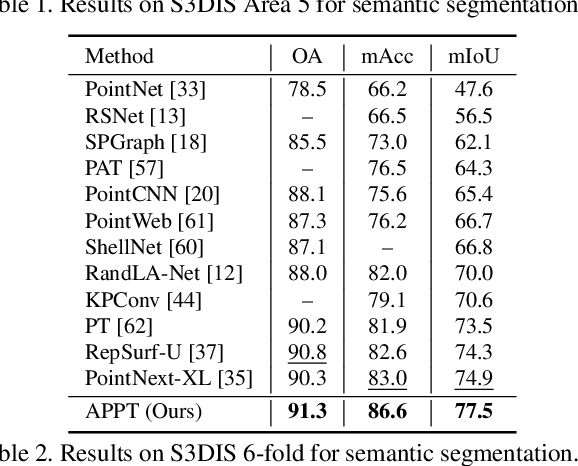

Abstract: Transformer-based networks have achieved impressive performance in 3D point cloud understanding. However, most of them concentrate on aggregating local features and neglect to model global dependencies directly, which results in a limited effective receptive field. In addition, effectively combining local and global components remains challenging. To tackle these problems, we propose the Asymmetric Parallel Point Transformer (APPT). Specifically, we introduce Global Pivot Attention to extract global features and enlarge the effective receptive field. Moreover, we design the Asymmetric Parallel structure to effectively integrate local and global information. With these designs, APPT is able to capture features globally throughout the entire network while still focusing on fine local detail. Extensive experiments show that our method outperforms prior methods and achieves state-of-the-art results on several benchmarks for 3D point cloud understanding, such as 3D semantic segmentation on S3DIS, 3D shape classification on ModelNet40, and 3D part segmentation on ShapeNet.
