Zhifang Fan

Make It Long, Keep It Fast: End-to-End 10k-Sequence Modeling at Billion Scale on Douyin

Nov 08, 2025

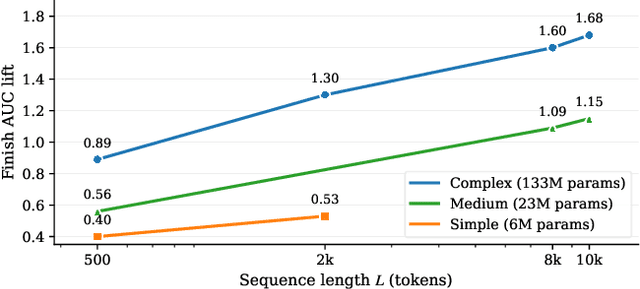

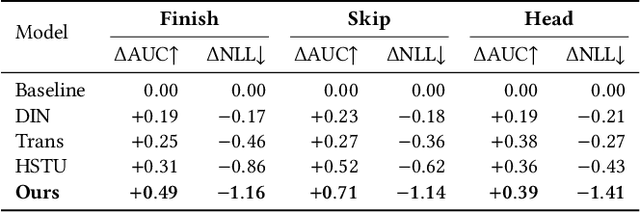

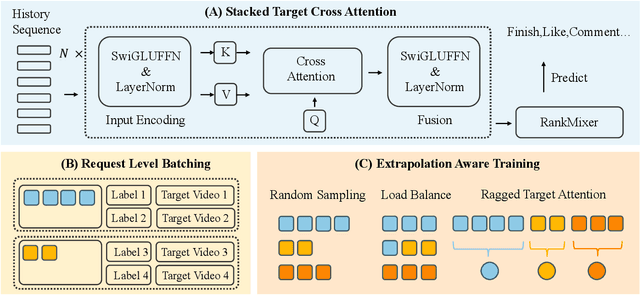

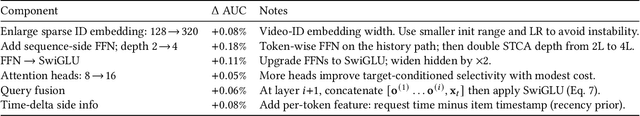

Abstract:Short-video recommenders such as Douyin must exploit extremely long user histories without breaking latency or cost budgets. We present an end-to-end system that scales long-sequence modeling to 10k-length histories in production. First, we introduce Stacked Target-to-History Cross Attention (STCA), which replaces history self-attention with stacked cross-attention from the target to the history, reducing complexity from quadratic to linear in sequence length and enabling efficient end-to-end training. Second, we propose Request Level Batching (RLB), a user-centric batching scheme that aggregates multiple targets for the same user/request to share the user-side encoding, substantially lowering sequence-related storage, communication, and compute without changing the learning objective. Third, we design a length-extrapolative training strategy -- train on shorter windows, infer on much longer ones -- so the model generalizes to 10k histories without additional training cost. Across offline and online experiments, we observe predictable, monotonic gains as we scale history length and model capacity, mirroring the scaling law behavior observed in large language models. Deployed at full traffic on Douyin, our system delivers significant improvements on key engagement metrics while meeting production latency, demonstrating a practical path to scaling end-to-end long-sequence recommendation to the 10k regime.

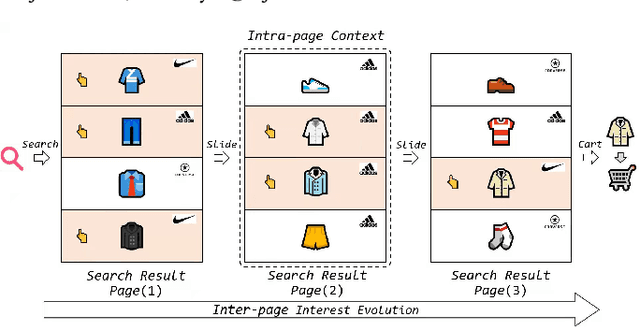

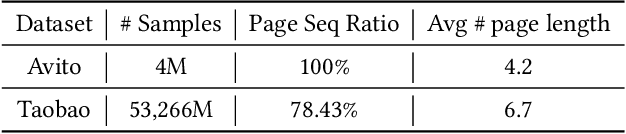

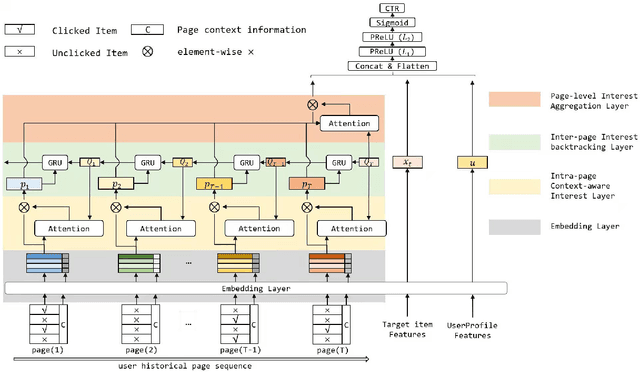

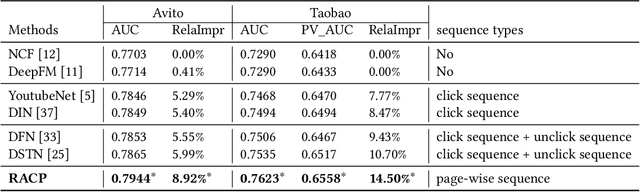

Modeling Users' Contextualized Page-wise Feedback for Click-Through Rate Prediction in E-commerce Search

Mar 29, 2022

Abstract:Modeling user's historical feedback is essential for Click-Through Rate Prediction in personalized search and recommendation. Existing methods usually only model users' positive feedback information such as click sequences which neglects the context information of the feedback. In this paper, we propose a new perspective for context-aware users' behavior modeling by including the whole page-wisely exposed products and the corresponding feedback as contextualized page-wise feedback sequence. The intra-page context information and inter-page interest evolution can be captured to learn more specific user preference. We design a novel neural ranking model RACP(i.e., Recurrent Attention over Contextualized Page sequence), which utilizes page-context aware attention to model the intra-page context. A recurrent attention process is used to model the cross-page interest convergence evolution as denoising the interest in the previous pages. Experiments on public and real-world industrial datasets verify our model's effectiveness.

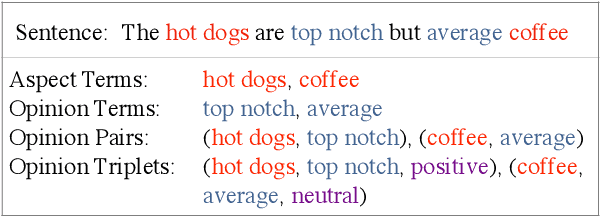

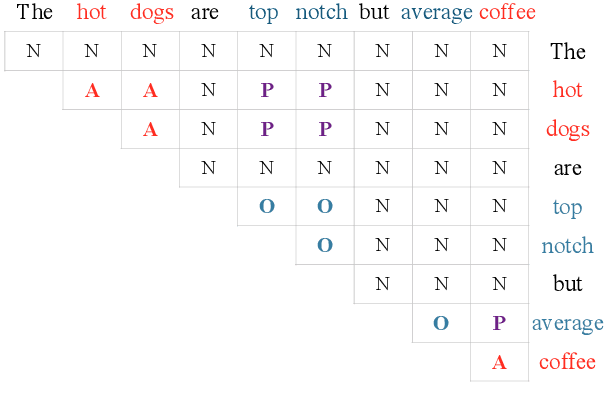

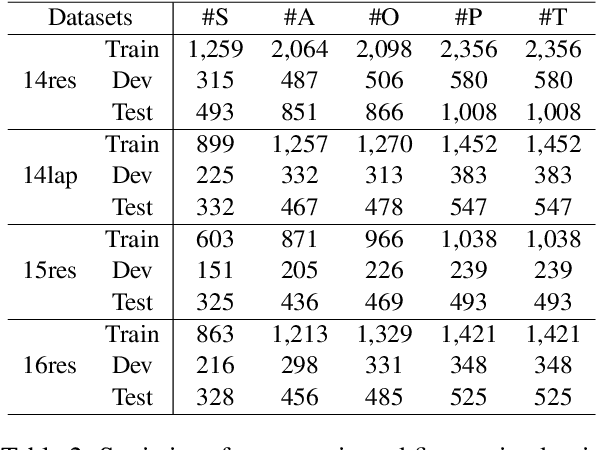

Grid Tagging Scheme for Aspect-oriented Fine-grained Opinion Extraction

Oct 09, 2020

Abstract:Aspect-oriented Fine-grained Opinion Extraction (AFOE) aims at extracting aspect terms and opinion terms from review in the form of opinion pairs or additionally extracting sentiment polarity of aspect term to form opinion triplet. Because of containing several opinion factors, the complete AFOE task is usually divided into multiple subtasks and achieved in the pipeline. However, pipeline approaches easily suffer from error propagation and inconvenience in real-world scenarios. To this end, we propose a novel tagging scheme, Grid Tagging Scheme (GTS), to address the AFOE task in an end-to-end fashion only with one unified grid tagging task. Additionally, we design an effective inference strategy on GTS to exploit mutual indication between different opinion factors for more accurate extractions. To validate the feasibility and compatibility of GTS, we implement three different GTS models respectively based on CNN, BiLSTM, and BERT, and conduct experiments on the aspect-oriented opinion pair extraction and opinion triplet extraction datasets. Extensive experimental results indicate that GTS models outperform strong baselines significantly and achieve state-of-the-art performance.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge