Zhaowei Cheng

HoMM: Higher-order Moment Matching for Unsupervised Domain Adaptation

Dec 27, 2019

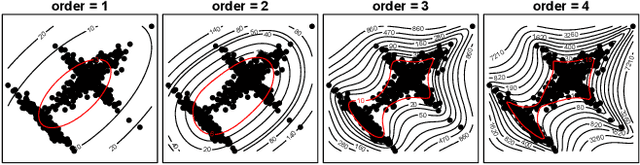

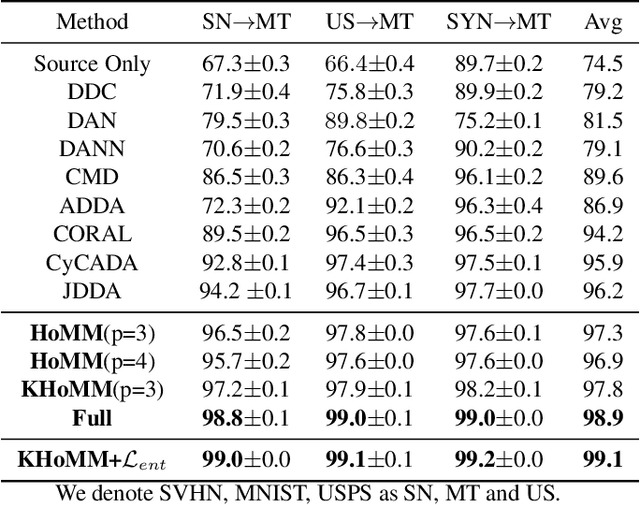

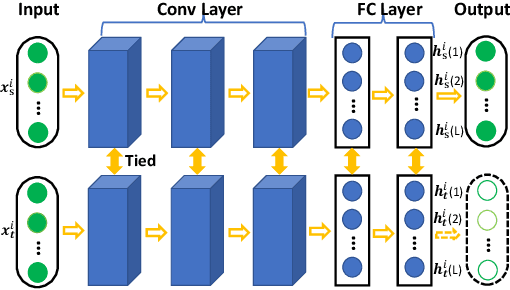

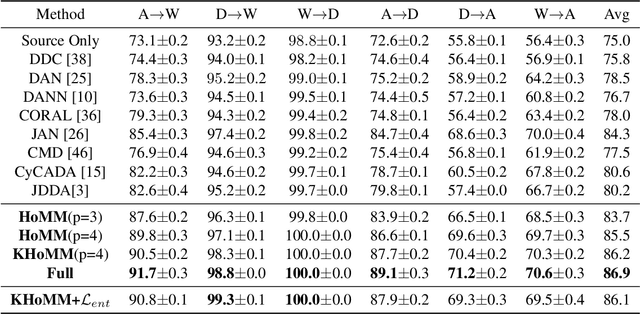

Abstract: Minimizing the discrepancy of feature distributions between different domains is one of the most promising directions in unsupervised domain adaptation. From the perspective of distribution matching, most existing discrepancy-based methods are designed to match second-order or lower statistics, which, however, have limited ability to express the statistical characteristics of non-Gaussian distributions. In this work, we explore the benefits of using higher-order statistics (mainly third-order and fourth-order statistics) for domain matching. We propose a Higher-order Moment Matching (HoMM) method and further extend HoMM into reproducing kernel Hilbert spaces (RKHS). In particular, our proposed HoMM can perform arbitrary-order moment tensor matching; we show that first-order HoMM is equivalent to Maximum Mean Discrepancy (MMD) and second-order HoMM is equivalent to Correlation Alignment (CORAL). Moreover, third-order and fourth-order moment tensor matching are expected to perform comprehensive domain alignment, as higher-order statistics can approximate more complex, non-Gaussian distributions. In addition, we exploit pseudo-labeled target samples to learn discriminative representations in the target domain, which further improves transfer performance. Extensive experiments show that our proposed HoMM consistently outperforms existing moment matching methods by a large margin. Code is available at https://github.com/chenchao666/HoMM-Master
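To make the idea of arbitrary-order moment tensor matching concrete, here is a minimal NumPy sketch. It is not taken from the linked repository. It assumes the discrepancy is the squared Frobenius norm of the difference between the empirical order-p moment tensors of source and target features, and it uses raw (uncentered) moments. The function names `moment_tensor` and `moment_discrepancy` are hypothetical.

```python
import numpy as np


def moment_tensor(features, order):
    """Empirical order-p moment tensor, flattened to a vector of length d**order.

    features: array of shape (n_samples, d).
    Assumption: raw (uncentered) moments, averaged over samples.
    """
    n = features.shape[0]
    tensor = features  # order-1 case: shape (n, d)
    for _ in range(order - 1):
        # Per-sample outer product with the feature vector, then flatten:
        # (n, d**k) and (n, d) -> (n, d**k, d) -> (n, d**(k + 1))
        tensor = np.einsum("ni,nj->nij", tensor, features).reshape(n, -1)
    return tensor.mean(axis=0)


def moment_discrepancy(source, target, order):
    """Squared Frobenius distance between source and target moment tensors."""
    diff = moment_tensor(source, order) - moment_tensor(target, order)
    return float(np.sum(diff ** 2))


# Toy features: two samples per domain, two feature dimensions.
source = np.array([[1.0, 0.0], [3.0, 0.0]])
target = np.zeros((2, 2))

first_order = moment_discrepancy(source, target, order=1)
second_order = moment_discrepancy(source, target, order=2)
third_order = moment_discrepancy(source, source, order=3)
```

With `order=1` the sketch reduces to the squared distance between the feature means, which is the linear-kernel form of MMD. With `order=2` it compares second-moment matrices, which corresponds to a covariance-style alignment only if the features are centered first. The flattened tensor has `d**order` entries, so this direct form is practical only for small feature dimensions.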

Selective Transfer with Reinforced Transfer Network for Partial Domain Adaptation

May 26, 2019

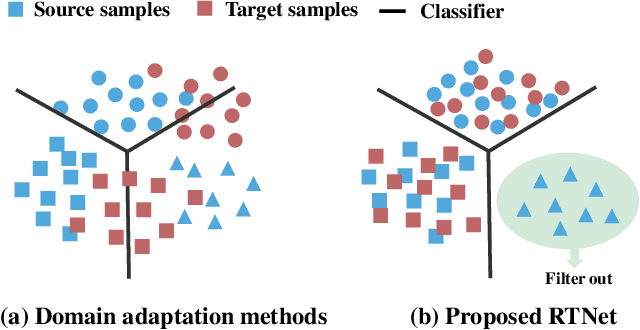

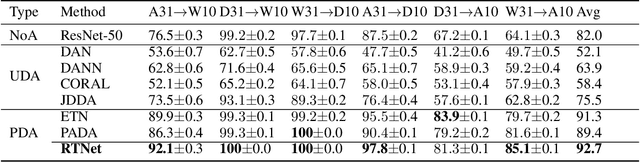

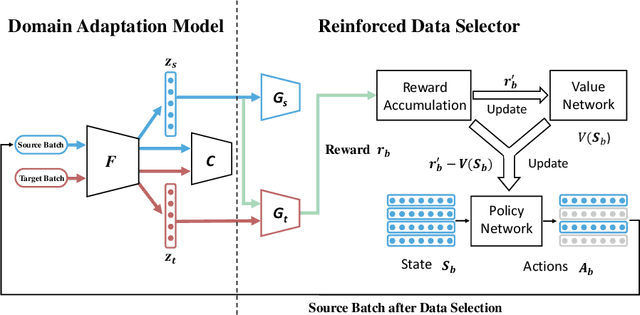

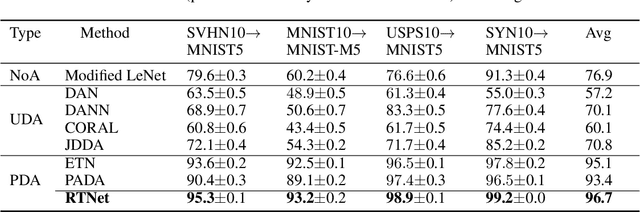

Abstract: Partial domain adaptation (PDA) extends standard domain adaptation to a more realistic scenario where the target domain contains only a subset of the classes in the source domain. The key challenge of PDA is how to select the relevant samples in the shared classes for knowledge transfer. Previous PDA methods tackle this problem by re-weighting the source samples based on the predictions of a classifier or discriminator, thus discarding pixel-level information. In this paper, to utilize both high-level and pixel-level information, we propose a reinforced transfer network (RTNet), which is the first work to apply reinforcement learning to the PDA problem. RTNet simultaneously mitigates negative transfer by adopting a reinforced data selector to filter out outlier source classes, and promotes positive transfer by employing a domain adaptation model to minimize the distribution discrepancy in the shared label space. Extensive experiments indicate that RTNet achieves state-of-the-art performance on partial domain adaptation tasks across several benchmark datasets. Code and datasets will be made available online.

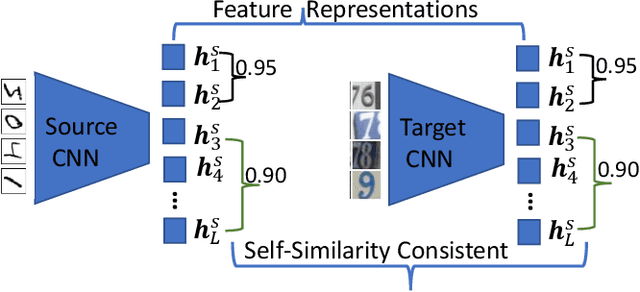

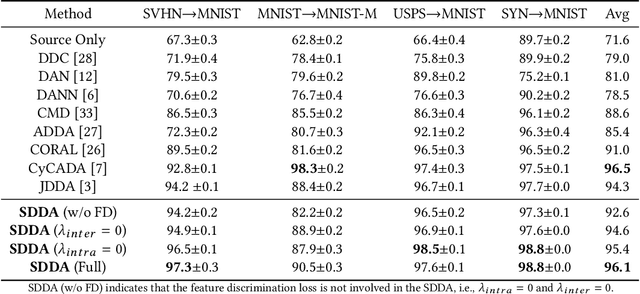

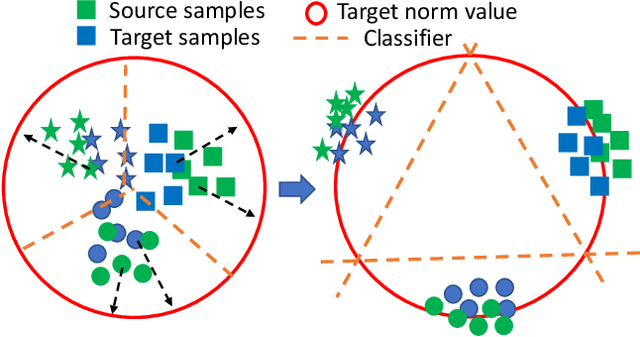

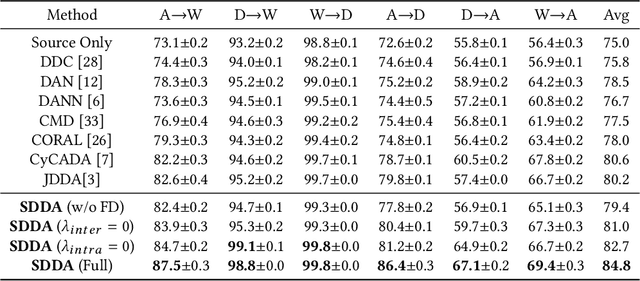

Towards Self-similarity Consistency and Feature Discrimination for Unsupervised Domain Adaptation

Apr 13, 2019

Abstract: Recent advances in unsupervised domain adaptation mainly focus on learning shared representations by global distribution alignment without considering class information across domains. The neglect of class information, however, may lead to partial alignment (or even misalignment) and poor generalization performance. For comprehensive alignment, we argue that the similarities across different features in the source domain should be consistent with those in the target domain. Based on this assumption, we propose a new domain discrepancy metric, Self-similarity Consistency (SSC), to enforce a consistent feature structure across domains. The renowned correlation alignment (CORAL) is proven to be a special case of our proposed SSC, and a sub-optimal measure. Furthermore, we propose to mitigate the side effects of partial alignment and misalignment by incorporating the discriminative information of the deep representations. Specifically, a simple and effective feature norm constraint is exploited to enlarge the discrepancy between inter-class samples. It relaxes the requirement of strict alignment when performing adaptation, thereby improving adaptation performance significantly. Extensive experiments on visual domain adaptation tasks demonstrate the effectiveness of our proposed SSC metric and feature discrimination approach.
