Zelun Kong

Adv-Makeup: A New Imperceptible and Transferable Attack on Face Recognition

May 07, 2021

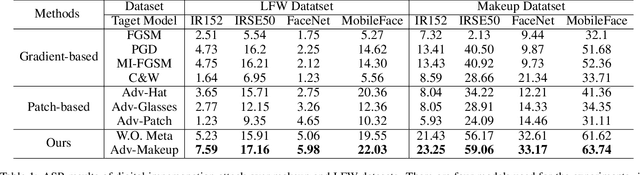

Abstract: Deep neural networks, particularly face recognition models, have been shown to be vulnerable to both digital and physical adversarial examples. However, existing adversarial examples against face recognition systems either lack transferability to black-box models or cannot be implemented in practice. In this paper, we propose a unified adversarial face generation method, Adv-Makeup, which can realize imperceptible and transferable attacks under the black-box setting. Adv-Makeup develops a task-driven makeup generation method with a blending module to synthesize imperceptible eye shadow over the orbital region of faces. To achieve transferability, Adv-Makeup implements a fine-grained meta-learning adversarial attack strategy to learn more general attack features from various models. Compared to existing techniques, visualization results demonstrate that Adv-Makeup is capable of generating much more imperceptible attacks under both digital and physical scenarios. Meanwhile, extensive quantitative experiments show that Adv-Makeup can significantly improve the attack success rate under the black-box setting, even when attacking commercial systems.

PoisHygiene: Detecting and Mitigating Poisoning Attacks in Neural Networks

Mar 24, 2020

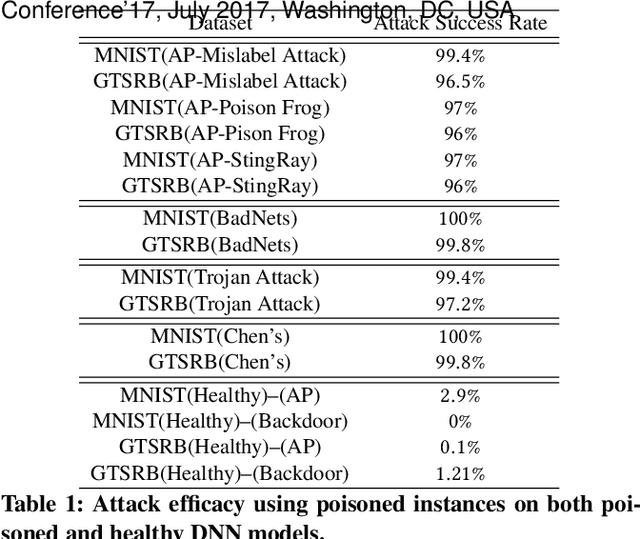

Abstract: The black-box nature of deep neural networks (DNNs) makes it easier for attackers to manipulate the behavior of a DNN through data poisoning. Being able to detect and mitigate poisoning attacks, typically categorized into backdoor and adversarial poisoning (AP), is critical in enabling safe adoption of DNNs in many application domains. Although recent works demonstrate encouraging results on detecting certain backdoor attacks, they exhibit inherent limitations that may significantly constrain their applicability. Indeed, no technique exists for detecting AP attacks, which represent a harder challenge given that such attacks exhibit no common and explicit rules, while backdoor attacks do (i.e., embedding backdoor triggers into poisoned data). We believe the key to detecting and mitigating AP attacks is the capability of observing and leveraging essential poisoning-induced properties within an infected DNN model. In this paper, we present PoisHygiene, the first effective and robust detection and mitigation framework against AP attacks. PoisHygiene is fundamentally motivated by the story of Dr. Ernest Rutherford (the 1908 Nobel Prize winner) observing the structure of the atom through random electron sampling.

Generating Adversarial Fragments with Adversarial Networks for Physical-world Implementation

Jul 09, 2019

Abstract: Although deep neural networks have been widely applied in many application domains, they are found to be vulnerable to adversarial attacks. A promising set of attack techniques has recently been proposed, which mainly focuses on generating adversarial examples under digital-world settings. Such strategies are unfortunately not implementable in physical-world scenarios such as autonomous driving. In this paper, we present FragGAN, a new GAN-based framework capable of generating an adversarial image that differs from the original input image only in that a targeted fragment within the image is replaced by a corresponding visually indistinguishable adversarial fragment. FragGAN ensures that the resulting entire image is effective in attacking. For a physical-world implementation, an attacker could print out the adversarial fragment and paste it onto the original fragment (e.g., a roadside sign in autonomous driving scenarios). FragGAN also enables clean-label attacks against image classification, as the resulting attacks may succeed even without modifying any essential content of an image. Extensive experiments, including physical-world case studies on state-of-the-art autonomous steering and image classification models, demonstrate that FragGAN is highly effective and superior to simple extensions of existing approaches. To the best of our knowledge, FragGAN is the first approach that can implement effective and clean-label physical-world attacks.
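The fragment-replacement step described in the abstract can be illustrated with a minimal sketch. It shows only the compositing: a rectangular fragment is pasted over the matching region of an image, and every pixel outside that region is left untouched. The function name `paste_fragment`, the nested-list image representation, and the rectangular fragment shape are assumptions made for illustration; the GAN that would actually produce the adversarial fragment is not shown and is not reconstructed here.

```python
def paste_fragment(image, fragment, top, left):
    """Return a copy of `image` with `fragment` pasted at (top, left).

    `image` and `fragment` are 2-D lists of pixel values (hypothetical
    representation). Pixels outside the fragment region are unchanged.
    """
    frag_h = len(fragment)
    frag_w = len(fragment[0])
    img_h = len(image)
    img_w = len(image[0])

    # Reject placements that would fall outside the image bounds.
    if top < 0 or left < 0 or top + frag_h > img_h or left + frag_w > img_w:
        raise ValueError("fragment does not fit inside the image")

    # Copy each row so the original image is not modified.
    result = [row[:] for row in image]
    for r in range(frag_h):
        result[top + r][left:left + frag_w] = list(fragment[r])
    return result


original = [[0, 0, 0, 0] for _ in range(4)]
adversarial_fragment = [[7, 8], [9, 10]]  # placeholder values, not a real attack
patched = paste_fragment(original, adversarial_fragment, top=1, left=2)
```

In this sketch the resulting image differs from the original only inside the 2x2 region, which mirrors the constraint stated in the abstract.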

Co-Representation Learning For Classification and Novel Class Detection via Deep Networks

Nov 13, 2018

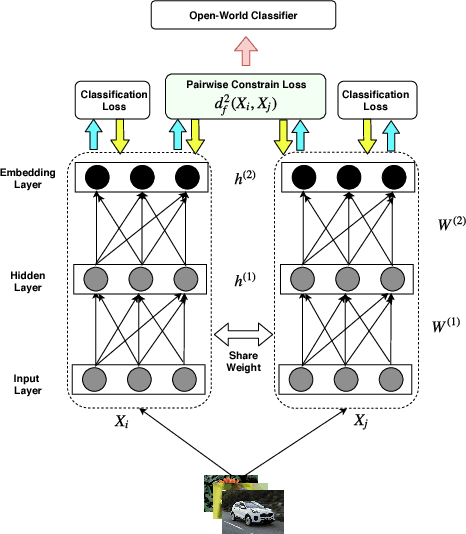

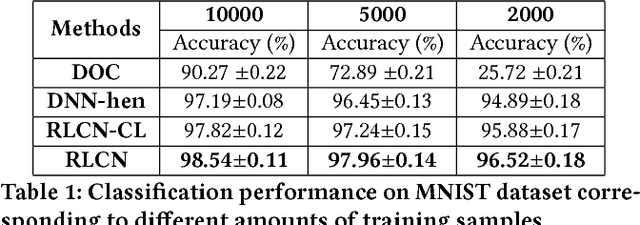

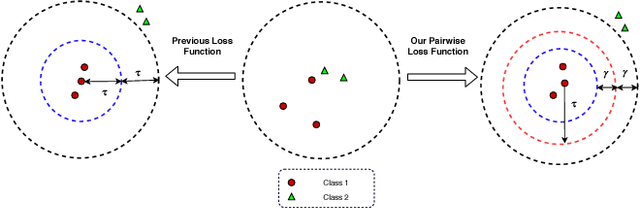

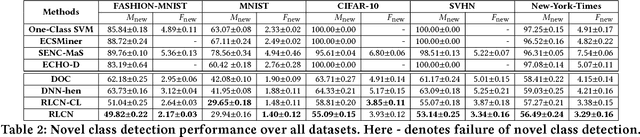

Abstract: Deep Neural Networks (DNNs) have been widely demonstrated to be effective for closed-world classification problems, where the total number of classes is known in advance. However, when the total number of classes that may occur at test time is unknown, DNNs notoriously fail, i.e., a DNN will make incorrect label predictions on instances from novel or unseen classes. This severely limits their utility in many real-world web applications, particularly when data arrives as a continuous stream. In this paper, we focus on addressing this key challenge by developing a two-channel DNN-based co-representation learning framework that not only predicts instances from known classes, but also detects and adapts to the occurrence of novel class instances over time. Concretely, we propose a metric learning method using a pairwise-constraint loss (PCL) function to learn a feature representation in which intra-class compactness and inter-class separation are achieved. Moreover, we apply a temperature scaling scheme to the softmax function to replace the traditional softmax output and design an open-world classifier. Our extensive empirical evaluation on benchmark datasets demonstrates the effectiveness of our framework compared to other competing techniques.
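As a rough illustration of the temperature scaling idea mentioned in the abstract, the sketch below divides the logits by a temperature before applying softmax and flags an input as a possible novel class when the largest scaled probability falls below a threshold. The threshold-based rejection rule, the function names, and the default values of `temperature` and `threshold` are assumptions chosen for illustration; the abstract does not specify how the open-world classifier makes its decision.

```python
import math


def softmax_with_temperature(logits, temperature=1.0):
    """Softmax over logits divided by `temperature` (must be positive)."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [z / temperature for z in logits]
    # Subtract the maximum for numerical stability; the result is unchanged.
    peak = max(scaled)
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def open_world_predict(logits, temperature=2.0, threshold=0.5):
    """Return the predicted class index, or None for a suspected novel class.

    The rejection rule (max probability below `threshold`) is an assumed,
    simplified stand-in for the open-world classifier in the abstract.
    """
    probs = softmax_with_temperature(logits, temperature)
    best = max(range(len(probs)), key=probs.__getitem__)
    if probs[best] < threshold:
        return None
    return best


confident = open_world_predict([8.0, 0.0, 0.0])   # one logit clearly dominates
uncertain = open_world_predict([1.0, 0.9, 1.1])   # logits are nearly tied
```

A higher temperature flattens the output distribution, so in this sketch the temperature and threshold would need to be tuned together on held-out data.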
