Z. Wang

Cross-Species and Cross-Modality Epileptic Seizure Detection via Multi-Space Alignment

Dec 18, 2024

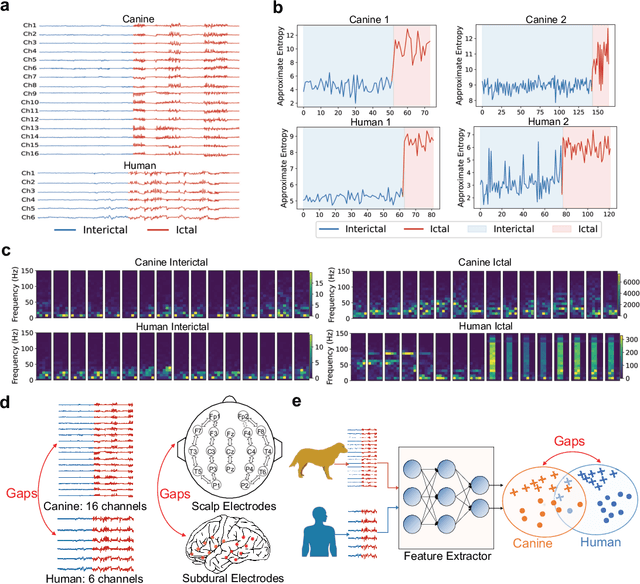

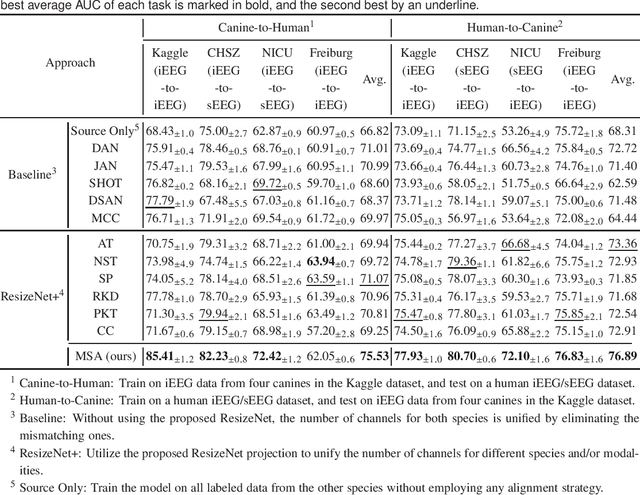

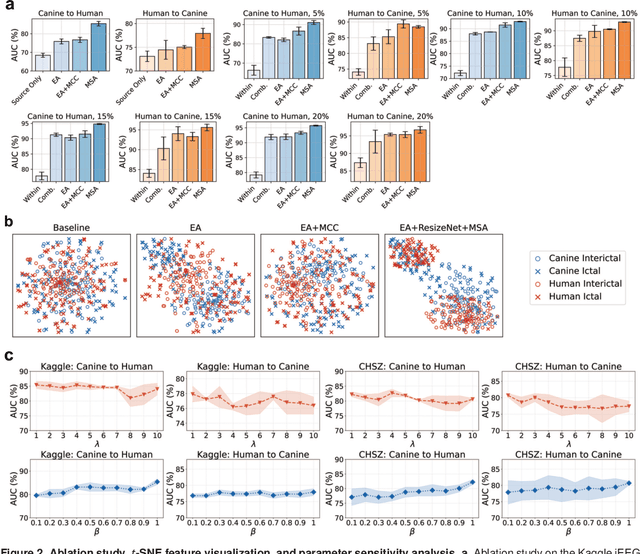

Abstract: Epilepsy significantly impacts global health, affecting about 65 million people worldwide, along with various animal species. The diagnostic processes of epilepsy are often hindered by the transient and unpredictable nature of seizures. Here we propose a multi-space alignment approach based on cross-species and cross-modality electroencephalogram (EEG) data to enhance the detection capabilities and understanding of epileptic seizures. By employing deep learning techniques, including domain adaptation and knowledge distillation, our framework aligns cross-species and cross-modality EEG signals to enhance the detection capability beyond traditional within-species and within-modality models. Experiments on multiple surface and intracranial EEG datasets of humans and canines demonstrated substantial improvements in detection accuracy, achieving AUC scores above 90% for cross-species and cross-modality seizure detection with extremely limited labeled data from the target species/modality. To our knowledge, this is the first study that demonstrates the effectiveness of integrating heterogeneous data from different species and modalities to improve EEG-based seizure detection performance. The approach may also be generalizable to different brain-computer interface paradigms, and suggests the possibility of combining data from different species/modalities to increase the amount of training data for large EEG models.
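The abstract reports performance as AUC (area under the ROC curve). As a reminder of what that number measures, here is a minimal sketch in plain Python; it is not the authors' evaluation code, and the function name `roc_auc` and the toy labels and scores are assumptions made for illustration. AUC equals the probability that a randomly chosen positive (seizure) sample is scored higher than a randomly chosen negative one, with ties counted as half.

```python
def roc_auc(labels, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly.

    labels: iterable of 0/1 ground-truth values (1 = seizure, by assumption).
    scores: iterable of detector outputs, higher meaning "more likely seizure".
    """
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one positive and one negative sample")

    correctly_ranked = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                correctly_ranked += 1.0   # positive ranked above negative
            elif p == n:
                correctly_ranked += 0.5   # ties count as half
    return correctly_ranked / (len(positives) * len(negatives))


# Toy data (illustrative only, not from the paper).
toy_labels = [0, 0, 1, 1]
toy_scores = [0.1, 0.4, 0.35, 0.8]
toy_auc = roc_auc(toy_labels, toy_scores)
```

On this toy input, three of the four positive/negative pairs are ordered correctly, so the value is 0.75. The pairwise loop is quadratic in the number of samples; a rank-based formulation would be preferable for large evaluation sets.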

Neural Network Methods for Radiation Detectors and Imaging

Nov 09, 2023

Abstract: Recent advances in image data processing through machine learning and especially deep neural networks (DNNs) allow for new optimization and performance-enhancement schemes for radiation detectors and imaging hardware through data-endowed artificial intelligence. We give an overview of data generation at photon sources, deep learning-based methods for image processing tasks, and hardware solutions for deep learning acceleration. Most existing deep learning approaches are trained offline, typically using large amounts of computational resources. However, once trained, DNNs can achieve fast inference speeds and can be deployed to edge devices. A new trend is edge computing with less energy consumption (hundreds of watts or less) and real-time analysis potential. While popularly used for edge computing, electronic-based hardware accelerators ranging from general purpose processors such as central processing units (CPUs) to application-specific integrated circuits (ASICs) are constantly reaching performance limits in latency, energy consumption, and other physical constraints. These limits give rise to next-generation analog neuromorphic hardware platforms, such as optical neural networks (ONNs), for highly parallel, low-latency, and low-energy computing to boost deep learning acceleration.

Self-Supervised Domain Adaptation with Consistency Training

Oct 15, 2020

Abstract: We consider the problem of unsupervised domain adaptation for image classification. To learn target-domain-aware features from the unlabeled data, we create a self-supervised pretext task by augmenting the unlabeled data with a certain type of transformation (specifically, image rotation) and ask the learner to predict the properties of the transformation. However, the obtained feature representation may contain a large amount of irrelevant information with respect to the main task. To provide further guidance, we force the feature representation of the augmented data to be consistent with that of the original data. Intuitively, the consistency introduces additional constraints to representation learning; therefore, the learned representation is more likely to focus on the right information about the main task. Our experimental results validate the proposed method and demonstrate state-of-the-art performance on classical domain adaptation benchmarks. Code is available at https://github.com/Jiaolong/ss-da-consistency.
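The two ingredients described in this abstract, a rotation-prediction pretext task and a consistency constraint between original and augmented features, can be sketched in a few lines of plain Python. This is a toy illustration, not the code from the linked repository: the helper names (`rotate90`, `make_pretext_batch`, `consistency_loss`, `toy_features`) are hypothetical, the feature extractor is a stand-in for a learned network, and mean squared error is an assumed choice of consistency measure that the abstract does not specify.

```python
import random


def rotate90(image, k):
    """Rotate a 2D image (list of rows) counter-clockwise by k * 90 degrees."""
    result = [list(row) for row in image]
    for _ in range(k % 4):
        # Transpose, then reverse the row order: one counter-clockwise quarter turn.
        result = [list(row) for row in zip(*result)][::-1]
    return result


def make_pretext_batch(images, rng):
    """Rotate each unlabeled image by a random multiple of 90 degrees.

    Returns the rotated images and the rotation index (0-3) the learner
    would be asked to predict in the pretext task.
    """
    rotation_labels = [rng.randrange(4) for _ in images]
    rotated = [rotate90(img, k) for img, k in zip(images, rotation_labels)]
    return rotated, rotation_labels


def consistency_loss(features_original, features_augmented):
    """Mean squared difference between two feature vectors (assumed form)."""
    pairs = list(zip(features_original, features_augmented))
    return sum((a - b) ** 2 for a, b in pairs) / len(pairs)


def toy_features(image):
    """Hypothetical stand-in for a learned feature extractor: one sum per row."""
    return [float(sum(row)) for row in image]


rng = random.Random(0)
unlabeled_images = [[[1, 2], [3, 4]], [[0, 1], [1, 0]]]
rotated_images, rotation_labels = make_pretext_batch(unlabeled_images, rng)

# Average consistency penalty between original and rotated features.
penalty = sum(
    consistency_loss(toy_features(a), toy_features(b))
    for a, b in zip(unlabeled_images, rotated_images)
) / len(unlabeled_images)
```

In a real training loop, the rotation labels would feed a classification loss and the consistency penalty would be added to it with some weighting, alongside the supervised loss on the labeled source domain; how those terms are balanced is not stated in the abstract.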

Surrogate-free machine learning-based organ dose reconstruction for pediatric abdominal radiotherapy

Feb 17, 2020

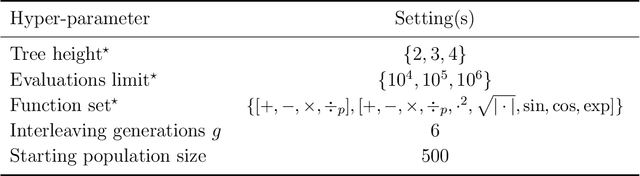

Abstract: To study radiotherapy-related adverse effects, detailed dose information (3D distribution) is needed for accurate dose-effect modeling. For childhood cancer survivors who underwent radiotherapy in the pre-CT era, only 2D radiographs were acquired, thus 3D dose distributions must be reconstructed. State-of-the-art methods achieve this by using 3D surrogate anatomies. These can, however, lack personalization and lead to coarse reconstructions. We present and validate a surrogate-free dose reconstruction method based on Machine Learning (ML). Abdominal planning CTs (n=142) of recently treated childhood cancer patients were gathered, their organs at risk were segmented, and 300 artificial Wilms' tumor plans were sampled automatically. Each artificial plan was automatically emulated on the 142 CTs, resulting in 42,600 3D dose distributions from which dose-volume metrics were derived. Anatomical features were extracted from digitally reconstructed radiographs simulated from the CTs to resemble historical radiographs. Further, patient and radiotherapy plan features typically available from historical treatment records were collected. An evolutionary ML algorithm was then used to link features to dose-volume metrics. Besides 5-fold cross-validation, a further evaluation was done on an independent dataset of five CTs, each associated with two clinical plans. Cross-validation resulted in mean absolute errors $\leq$0.6 Gy for organs completely inside or outside the field. For organs positioned at the edge of the field, mean absolute errors $\leq$1.7 Gy for $D_{mean}$, $\leq$2.9 Gy for $D_{2cc}$, and $\leq$13% for $V_{5Gy}$ and $V_{10Gy}$ were obtained, without systematic bias. Similar results were found for the independent dataset. To conclude, our novel organ dose reconstruction method is not only accurate, but also efficient, as the setup of a surrogate is no longer needed.
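For readers unfamiliar with the metrics named in this abstract, the sketch below computes them on a toy list of per-voxel organ doses. It assumes the conventional definitions, which the abstract itself does not spell out: $D_{mean}$ is the mean organ dose, $V_{xGy}$ is the percentage of organ volume receiving at least $x$ Gy, and $D_{2cc}$ is the minimum dose within the most-irradiated 2 cm³ of the organ. All function names, the voxel volume, and the dose values are illustrative assumptions, not the authors' pipeline.

```python
def d_mean(doses_gy):
    """Mean dose over all voxels of an organ, in Gy."""
    return sum(doses_gy) / len(doses_gy)


def v_at_least(doses_gy, threshold_gy):
    """Percentage of organ voxels receiving at least threshold_gy."""
    hits = sum(1 for d in doses_gy if d >= threshold_gy)
    return 100.0 * hits / len(doses_gy)


def d_2cc(doses_gy, voxel_volume_cc):
    """Minimum dose among the hottest voxels that together make up 2 cc."""
    n_voxels = max(1, int(round(2.0 / voxel_volume_cc)))
    hottest = sorted(doses_gy, reverse=True)[:n_voxels]
    return min(hottest)


def mean_absolute_error(predicted, reference):
    """Average absolute difference between predicted and reference values."""
    pairs = list(zip(predicted, reference))
    return sum(abs(p - r) for p, r in pairs) / len(pairs)


# Dataset size stated in the abstract: 300 plans emulated on each of 142 CTs.
n_dose_distributions = 300 * 142

# Toy organ with six voxels of 1 cc each (illustrative values only).
organ_doses = [0.0, 2.0, 4.0, 6.0, 8.0, 10.0]
organ_d_mean = d_mean(organ_doses)                       # mean of the six doses
organ_v5 = v_at_least(organ_doses, threshold_gy=5.0)     # percent at or above 5 Gy
organ_d2cc = d_2cc(organ_doses, voxel_volume_cc=1.0)     # min of the two hottest voxels
```

The reported cross-validation errors would correspond to applying `mean_absolute_error` to predicted versus reference values of each metric across held-out cases.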

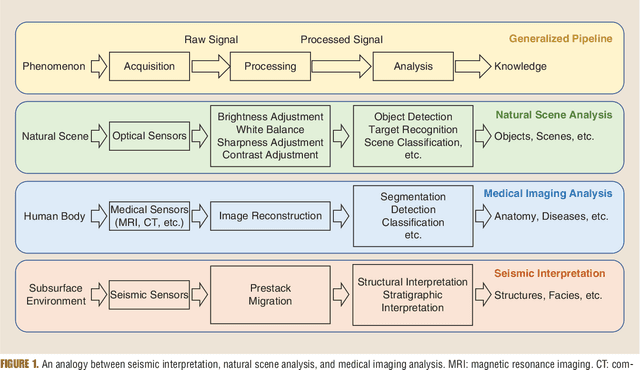

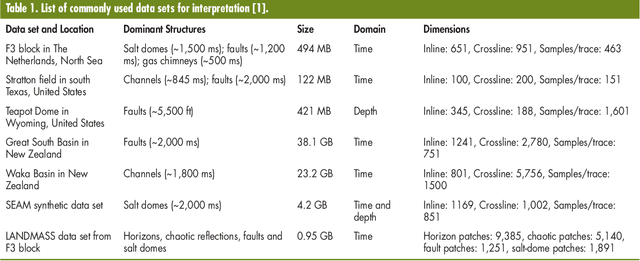

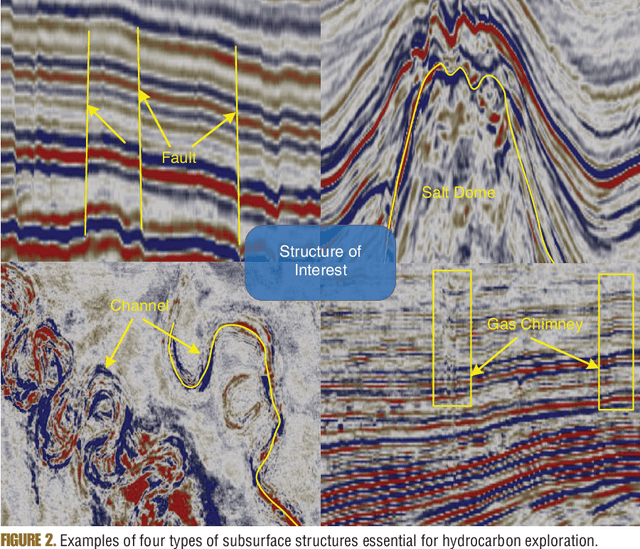

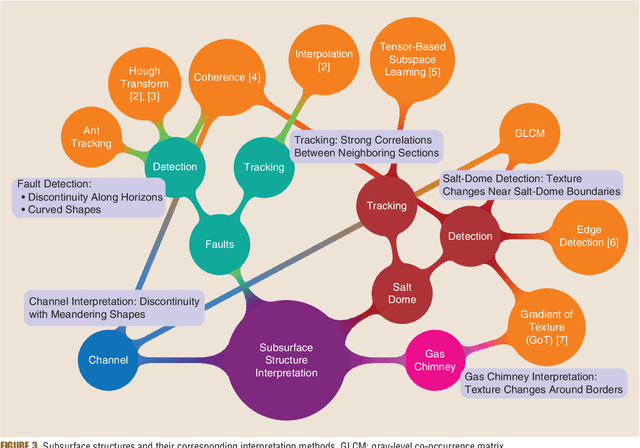

Subsurface structure analysis using computational interpretation and learning: A visual signal processing perspective

Dec 20, 2018

Abstract: Understanding Earth's subsurface structures has been and continues to be an essential component of various applications such as environmental monitoring, carbon sequestration, and oil and gas exploration. By viewing the seismic volumes that are generated through the processing of recorded seismic traces, researchers were able to learn from applying advanced image processing and computer vision algorithms to effectively analyze and understand Earth's subsurface structures. In this paper, first, we summarize the recent advances in this direction that relied heavily on the fields of image processing and computer vision. Second, we discuss the challenges in seismic interpretation and provide insights and some directions to address such challenges using emerging machine learning algorithms.
