You Pang

PitVis-2023 Challenge: Workflow Recognition in videos of Endoscopic Pituitary Surgery

Sep 02, 2024

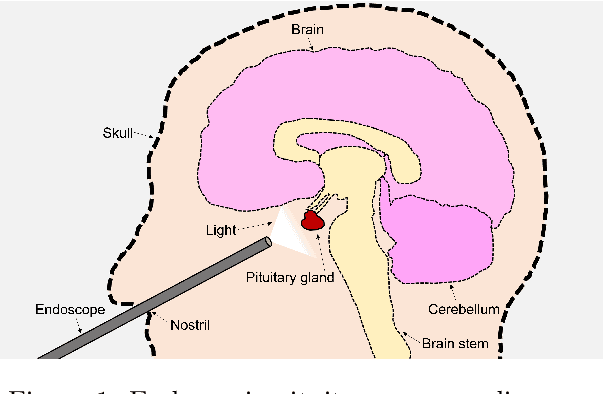

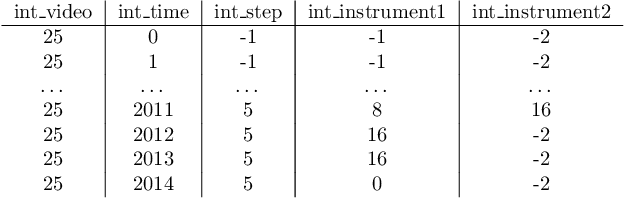

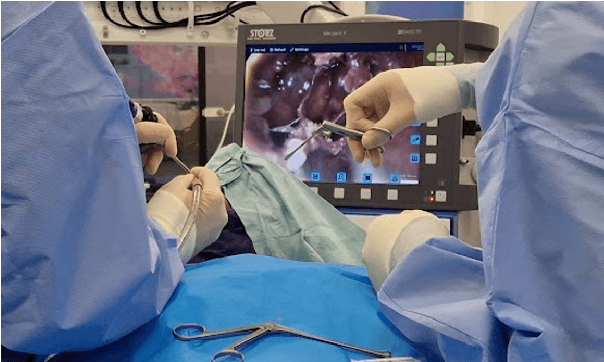

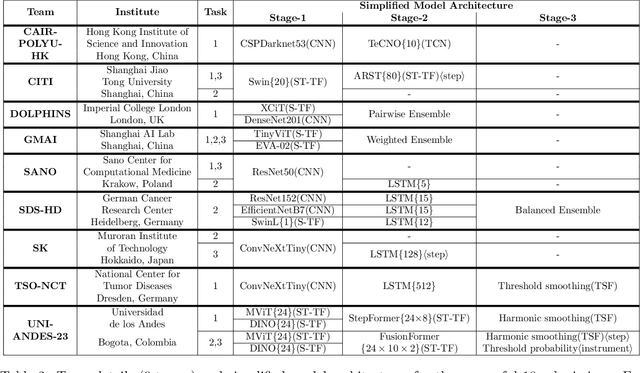

Abstract:The field of computer vision applied to videos of minimally invasive surgery is ever-growing. Workflow recognition pertains to the automated recognition of various aspects of a surgery: including which surgical steps are performed; and which surgical instruments are used. This information can later be used to assist clinicians when learning the surgery; during live surgery; and when writing operation notes. The Pituitary Vision (PitVis) 2023 Challenge tasks the community to step and instrument recognition in videos of endoscopic pituitary surgery. This is a unique task when compared to other minimally invasive surgeries due to the smaller working space, which limits and distorts vision; and higher frequency of instrument and step switching, which requires more precise model predictions. Participants were provided with 25-videos, with results presented at the MICCAI-2023 conference as part of the Endoscopic Vision 2023 Challenge in Vancouver, Canada, on 08-Oct-2023. There were 18-submissions from 9-teams across 6-countries, using a variety of deep learning models. A commonality between the top performing models was incorporating spatio-temporal and multi-task methods, with greater than 50% and 10% macro-F1-score improvement over purely spacial single-task models in step and instrument recognition respectively. The PitVis-2023 Challenge therefore demonstrates state-of-the-art computer vision models in minimally invasive surgery are transferable to a new dataset, with surgery specific techniques used to enhance performance, progressing the field further. Benchmark results are provided in the paper, and the dataset is publicly available at: https://doi.org/10.5522/04/26531686.

SurgPLAN: Surgical Phase Localization Network for Phase Recognition

Nov 16, 2023Abstract:Surgical phase recognition is crucial to providing surgery understanding in smart operating rooms. Despite great progress in automatic surgical phase recognition, most existing methods are still restricted by two problems. First, these methods cannot capture discriminative visual features for each frame and motion information with simple 2D networks. Second, the frame-by-frame recognition paradigm degrades the performance due to unstable predictions within each phase, termed as phase shaking. To address these two challenges, we propose a Surgical Phase LocAlization Network, named SurgPLAN, to facilitate a more accurate and stable surgical phase recognition with the principle of temporal detection. Specifically, we first devise a Pyramid SlowFast (PSF) architecture to serve as the visual backbone to capture multi-scale spatial and temporal features by two branches with different frame sampling rates. Moreover, we propose a Temporal Phase Localization (TPL) module to generate the phase prediction based on temporal region proposals, which ensures accurate and consistent predictions within each surgical phase. Extensive experiments confirm the significant advantages of our SurgPLAN over frame-by-frame approaches in terms of both accuracy and stability.

WS-YOLO: Weakly Supervised Yolo Network for Surgical Tool Localization in Endoscopic Videos

Sep 27, 2023

Abstract:Being able to automatically detect and track surgical instruments in endoscopic video recordings would allow for many useful applications that could transform different aspects of surgery. In robot-assisted surgery, the potentially informative data like categories of surgical tool can be captured, which is sparse, full of noise and without spatial information. We proposed a Weakly Supervised Yolo Network (WS-YOLO) for Surgical Tool Localization in Endoscopic Videos, to generate fine-grained semantic information with location and category from coarse-grained semantic information outputted by the da Vinci surgical robot, which significantly diminished the necessary human annotation labor while striking an optimal balance between the quantity of manually annotated data and detection performance. The source code is available at https://github.com/Breezewrf/Weakly-Supervised-Yolov8.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge