Xiangyu Du

Kling-Omni Technical Report

Dec 18, 2025

Abstract: We present Kling-Omni, a generalist generative framework designed to synthesize high-fidelity videos directly from multimodal visual language inputs. Adopting an end-to-end perspective, Kling-Omni bridges the functional separation among diverse video generation, editing, and intelligent reasoning tasks, integrating them into a holistic system. Unlike disjointed pipeline approaches, Kling-Omni supports a diverse range of user inputs, including text instructions, reference images, and video contexts, processing them into a unified multimodal representation to deliver cinematic-quality and highly intelligent video content creation. To support these capabilities, we constructed a comprehensive data system that serves as the foundation for multimodal video creation. The framework is further empowered by efficient large-scale pre-training strategies and infrastructure optimizations for inference. Comprehensive evaluations reveal that Kling-Omni demonstrates exceptional capabilities in in-context generation, reasoning-based editing, and multimodal instruction following. Moving beyond a content creation tool, we believe Kling-Omni is a pivotal advancement toward multimodal world simulators capable of perceiving, reasoning about, generating, and interacting with dynamic and complex worlds.

Relit-NeuLF: Efficient Relighting and Novel View Synthesis via Neural 4D Light Field

Oct 23, 2023

Abstract: In this paper, we address the problem of simultaneous relighting and novel view synthesis of a complex scene from multi-view images with a limited number of light sources. We propose an analysis-synthesis approach called Relit-NeuLF. Following the recent neural 4D light field network (NeuLF), Relit-NeuLF first leverages a two-plane light field representation to parameterize each ray in a 4D coordinate system, enabling efficient learning and inference. Then, we recover the spatially-varying bidirectional reflectance distribution function (SVBRDF) of a 3D scene in a self-supervised manner. A DecomposeNet learns to map each ray to its SVBRDF components: albedo, normal, and roughness. Based on the decomposed BRDF components and conditioning light directions, a RenderNet learns to synthesize the color of the ray. To self-supervise the SVBRDF decomposition, we encourage the predicted ray color to be close to the physically-based rendering result using the microfacet model. Comprehensive experiments demonstrate that the proposed method is efficient and effective on both synthetic data and real-world human face data, and outperforms prior state-of-the-art results. Our code is publicly available on GitHub: https://github.com/oppo-us-research/RelitNeuLF
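
The two-plane parameterization mentioned in the abstract can be illustrated with a minimal sketch. It assumes two parallel planes at z = 0 and z = 1 and maps a ray to the 4D coordinate (u, v, s, t) formed by its intersections with them. The function name `ray_to_two_plane`, the plane positions, and the coordinate ordering are illustrative assumptions, not taken from the Relit-NeuLF code.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def ray_to_two_plane(
    origin: Vec3,
    direction: Vec3,
    z_uv: float = 0.0,  # assumed position of the first (u, v) plane
    z_st: float = 1.0,  # assumed position of the second (s, t) plane
) -> Tuple[float, float, float, float]:
    """Return (u, v, s, t): where the ray crosses planes z = z_uv and z = z_st."""
    ox, oy, oz = origin
    dx, dy, dz = direction
    if abs(dz) < 1e-12:
        # A ray parallel to the planes never crosses them.
        raise ValueError("ray is parallel to the parameterization planes")
    a = (z_uv - oz) / dz  # ray parameter at the (u, v) plane
    b = (z_st - oz) / dz  # ray parameter at the (s, t) plane
    return (ox + a * dx, oy + a * dy, ox + b * dx, oy + b * dy)


if __name__ == "__main__":
    print(ray_to_two_plane((0.5, 0.25, -1.0), (1.0, 0.0, 2.0)))
```

A 4D coordinate of this kind is what a light field network would take as input in place of a sampled 3D position and view direction; the networks described in the abstract are not reproduced here.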

PoP-Net: Pose over Parts Network for Multi-Person 3D Pose Estimation from a Depth Image

Dec 12, 2020

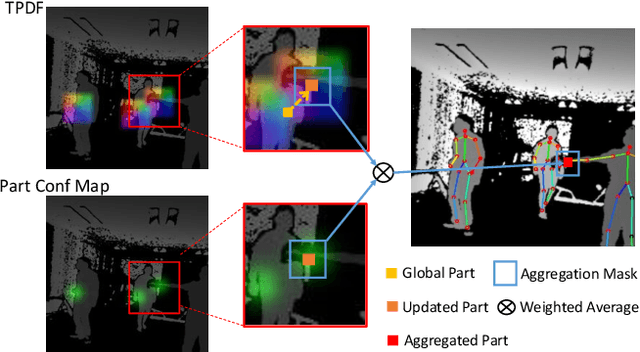

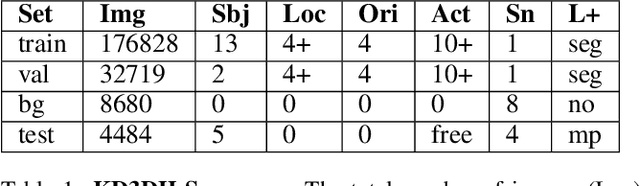

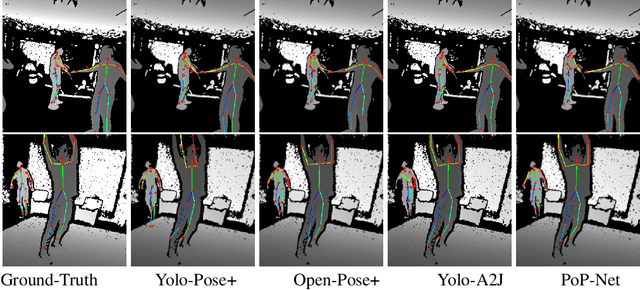

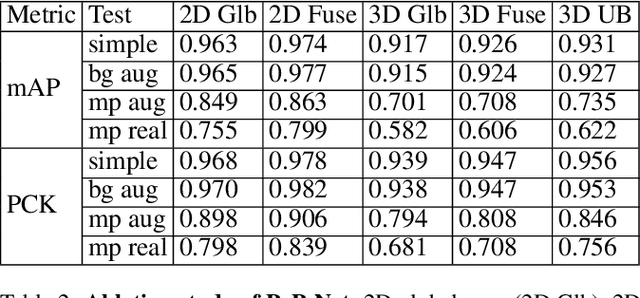

Abstract: In this paper, a real-time method called PoP-Net is proposed to predict multi-person 3D poses from a depth image. PoP-Net learns to predict bottom-up part detection maps and top-down global poses in a single-shot framework. A simple and effective fusion process is applied to fuse the global poses and part detections. Specifically, a new part-level representation, called Truncated Part Displacement Field (TPDF), is introduced. It drags low-precision global poses towards more accurate part locations while maintaining the advantage of global poses in handling severe occlusion and truncation cases. A mode selection scheme is developed to automatically resolve conflicts between global poses and local detections. Finally, due to the lack of high-quality depth datasets for developing and evaluating multi-person 3D pose estimation methods, a comprehensive depth dataset with 3D pose labels is released. The dataset is designed to enable effective multi-person and background data augmentation so that the developed models generalize better to uncontrolled real-world multi-person scenarios. We show that PoP-Net has significant advantages in efficiency for multi-person processing and achieves state-of-the-art results both on the released challenging dataset and on the widely used ITOP dataset.
