Will Pearce

Poisoning Web-Scale Training Datasets is Practical

Feb 20, 2023Abstract:Deep learning models are often trained on distributed, webscale datasets crawled from the internet. In this paper, we introduce two new dataset poisoning attacks that intentionally introduce malicious examples to a model's performance. Our attacks are immediately practical and could, today, poison 10 popular datasets. Our first attack, split-view poisoning, exploits the mutable nature of internet content to ensure a dataset annotator's initial view of the dataset differs from the view downloaded by subsequent clients. By exploiting specific invalid trust assumptions, we show how we could have poisoned 0.01% of the LAION-400M or COYO-700M datasets for just $60 USD. Our second attack, frontrunning poisoning, targets web-scale datasets that periodically snapshot crowd-sourced content -- such as Wikipedia -- where an attacker only needs a time-limited window to inject malicious examples. In light of both attacks, we notify the maintainers of each affected dataset and recommended several low-overhead defenses.

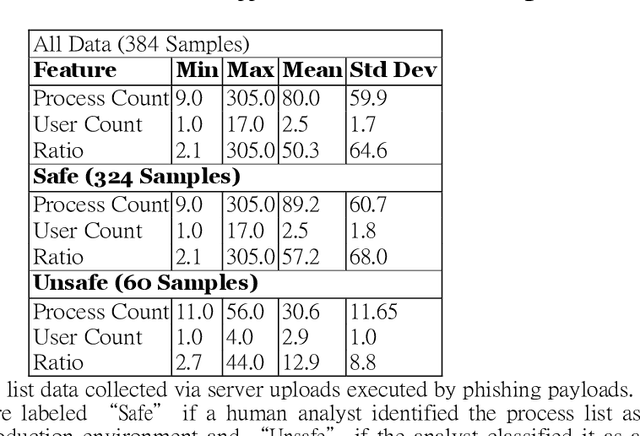

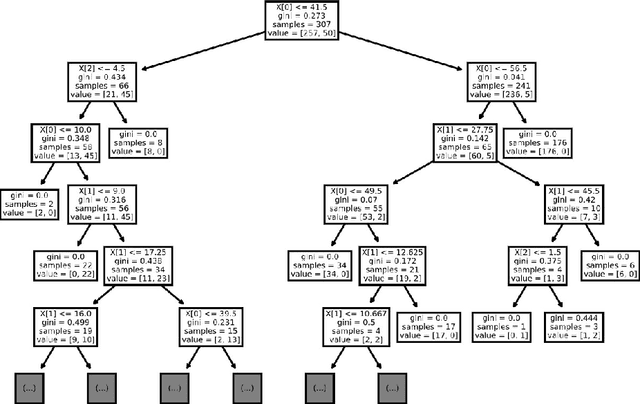

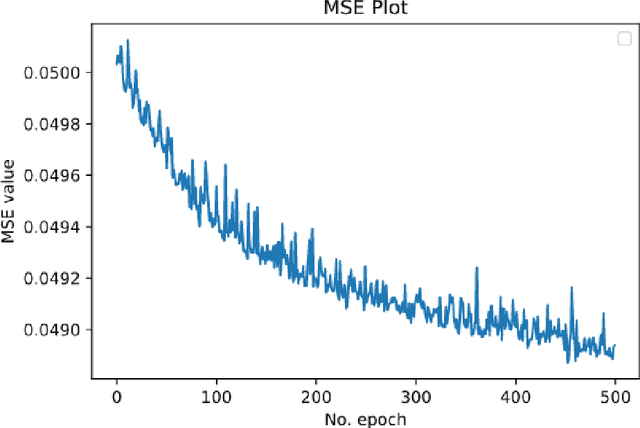

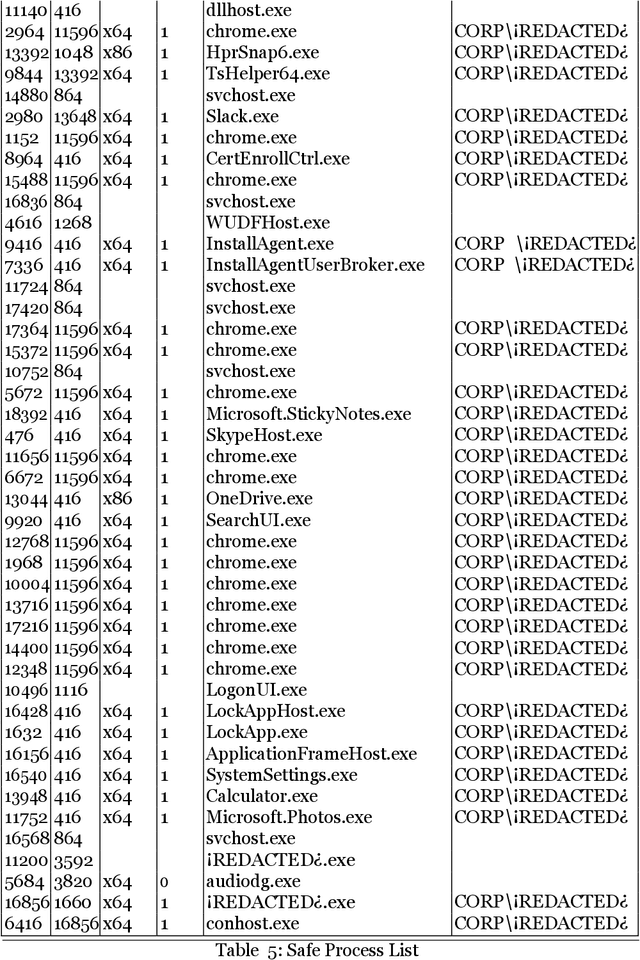

Machine Learning for Offensive Security: Sandbox Classification Using Decision Trees and Artificial Neural Networks

Jul 14, 2020

Abstract:The merits of machine learning in information security have primarily focused on bolstering defenses. However, machine learning (ML) techniques are not reserved for organizations with deep pockets and massive data repositories; the democratization of ML has lead to a rise in the number of security teams using ML to support offensive operations. The research presented here will explore two models that our team has used to solve a single offensive task, detecting a sandbox. Using process list data gathered with phishing emails, we will demonstrate the use of Decision Trees and Artificial Neural Networks to successfully classify sandboxes, thereby avoiding unsafe execution. This paper aims to give unique insight into how a real offensive team is using machine learning to support offensive operations.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge