Wenfang Sun

RegionReasoner: Region-Grounded Multi-Round Visual Reasoning

Feb 03, 2026Abstract:Large vision-language models have achieved remarkable progress in visual reasoning, yet most existing systems rely on single-step or text-only reasoning, limiting their ability to iteratively refine understanding across multiple visual contexts. To address this limitation, we introduce a new multi-round visual reasoning benchmark with training and test sets spanning both detection and segmentation tasks, enabling systematic evaluation under iterative reasoning scenarios. We further propose RegionReasoner, a reinforcement learning framework that enforces grounded reasoning by requiring each reasoning trace to explicitly cite the corresponding reference bounding boxes, while maintaining semantic coherence via a global-local consistency reward. This reward extracts key objects and nouns from both global scene captions and region-level captions, aligning them with the reasoning trace to ensure consistency across reasoning steps. RegionReasoner is optimized with structured rewards combining grounding fidelity and global-local semantic alignment. Experiments on detection and segmentation tasks show that RegionReasoner-7B, together with our newly introduced benchmark RegionDial-Bench, considerably improves multi-round reasoning accuracy, spatial grounding precision, and global-local consistency, establishing a strong baseline for this emerging research direction.

The Curse of Depth in Large Language Models

Feb 09, 2025Abstract:In this paper, we introduce the Curse of Depth, a concept that highlights, explains, and addresses the recent observation in modern Large Language Models(LLMs) where nearly half of the layers are less effective than expected. We first confirm the wide existence of this phenomenon across the most popular families of LLMs such as Llama, Mistral, DeepSeek, and Qwen. Our analysis, theoretically and empirically, identifies that the underlying reason for the ineffectiveness of deep layers in LLMs is the widespread usage of Pre-Layer Normalization (Pre-LN). While Pre-LN stabilizes the training of Transformer LLMs, its output variance exponentially grows with the model depth, which undesirably causes the derivative of the deep Transformer blocks to be an identity matrix, and therefore barely contributes to the training. To resolve this training pitfall, we propose LayerNorm Scaling, which scales the variance of output of the layer normalization inversely by the square root of its depth. This simple modification mitigates the output variance explosion of deeper Transformer layers, improving their contribution. Our experimental results, spanning model sizes from 130M to 1B, demonstrate that LayerNorm Scaling significantly enhances LLM pre-training performance compared to Pre-LN. Moreover, this improvement seamlessly carries over to supervised fine-tuning. All these gains can be attributed to the fact that LayerNorm Scaling enables deeper layers to contribute more effectively during training.

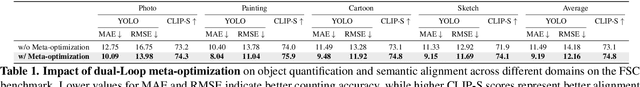

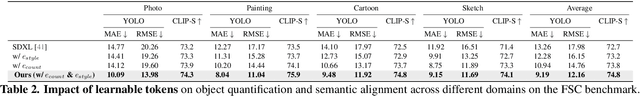

QUOTA: Quantifying Objects with Text-to-Image Models for Any Domain

Nov 29, 2024

Abstract:We tackle the problem of quantifying the number of objects by a generative text-to-image model. Rather than retraining such a model for each new image domain of interest, which leads to high computational costs and limited scalability, we are the first to consider this problem from a domain-agnostic perspective. We propose QUOTA, an optimization framework for text-to-image models that enables effective object quantification across unseen domains without retraining. It leverages a dual-loop meta-learning strategy to optimize a domain-invariant prompt. Further, by integrating prompt learning with learnable counting and domain tokens, our method captures stylistic variations and maintains accuracy, even for object classes not encountered during training. For evaluation, we adopt a new benchmark specifically designed for object quantification in domain generalization, enabling rigorous assessment of object quantification accuracy and adaptability across unseen domains in text-to-image generation. Extensive experiments demonstrate that QUOTA outperforms conventional models in both object quantification accuracy and semantic consistency, setting a new benchmark for efficient and scalable text-to-image generation for any domain.

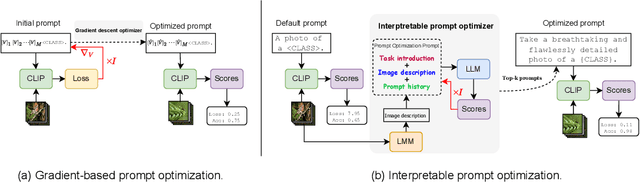

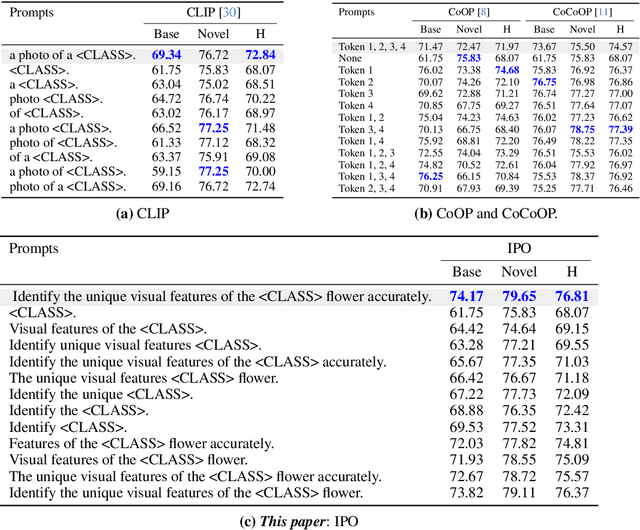

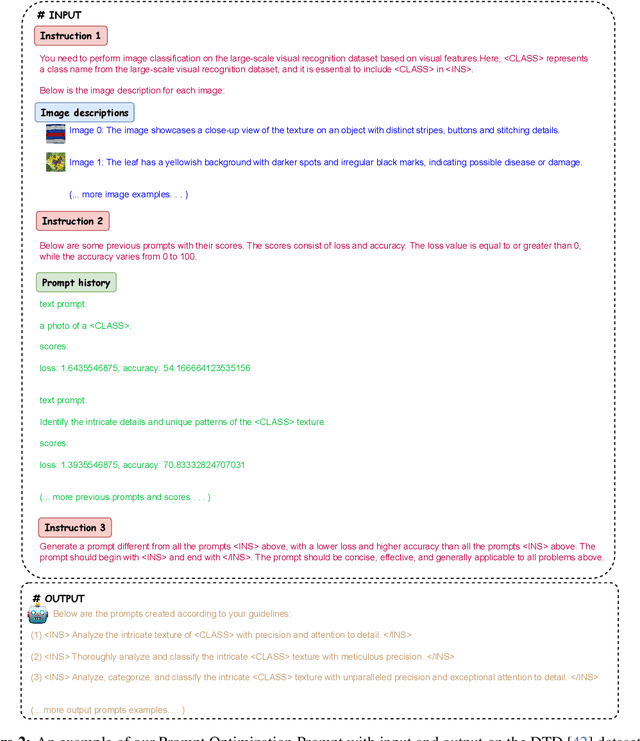

IPO: Interpretable Prompt Optimization for Vision-Language Models

Oct 20, 2024

Abstract:Pre-trained vision-language models like CLIP have remarkably adapted to various downstream tasks. Nonetheless, their performance heavily depends on the specificity of the input text prompts, which requires skillful prompt template engineering. Instead, current approaches to prompt optimization learn the prompts through gradient descent, where the prompts are treated as adjustable parameters. However, these methods tend to lead to overfitting of the base classes seen during training and produce prompts that are no longer understandable by humans. This paper introduces a simple but interpretable prompt optimizer (IPO), that utilizes large language models (LLMs) to generate textual prompts dynamically. We introduce a Prompt Optimization Prompt that not only guides LLMs in creating effective prompts but also stores past prompts with their performance metrics, providing rich in-context information. Additionally, we incorporate a large multimodal model (LMM) to condition on visual content by generating image descriptions, which enhance the interaction between textual and visual modalities. This allows for thae creation of dataset-specific prompts that improve generalization performance, while maintaining human comprehension. Extensive testing across 11 datasets reveals that IPO not only improves the accuracy of existing gradient-descent-based prompt learning methods but also considerably enhances the interpretability of the generated prompts. By leveraging the strengths of LLMs, our approach ensures that the prompts remain human-understandable, thereby facilitating better transparency and oversight for vision-language models.

Training-Free Semantic Segmentation via LLM-Supervision

Mar 31, 2024

Abstract:Recent advancements in open vocabulary models, like CLIP, have notably advanced zero-shot classification and segmentation by utilizing natural language for class-specific embeddings. However, most research has focused on improving model accuracy through prompt engineering, prompt learning, or fine-tuning with limited labeled data, thereby overlooking the importance of refining the class descriptors. This paper introduces a new approach to text-supervised semantic segmentation using supervision by a large language model (LLM) that does not require extra training. Our method starts from an LLM, like GPT-3, to generate a detailed set of subclasses for more accurate class representation. We then employ an advanced text-supervised semantic segmentation model to apply the generated subclasses as target labels, resulting in diverse segmentation results tailored to each subclass's unique characteristics. Additionally, we propose an assembly that merges the segmentation maps from the various subclass descriptors to ensure a more comprehensive representation of the different aspects in the test images. Through comprehensive experiments on three standard benchmarks, our method outperforms traditional text-supervised semantic segmentation methods by a marked margin.

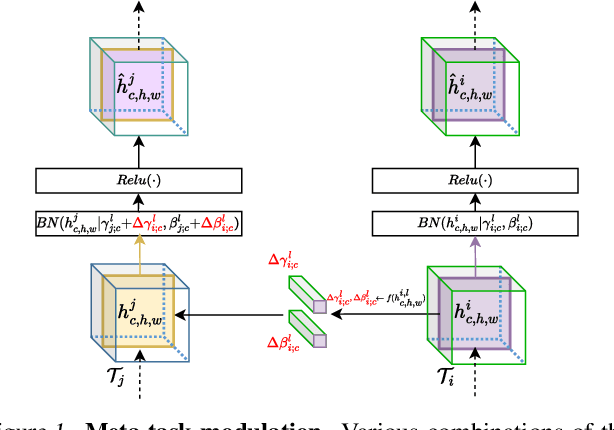

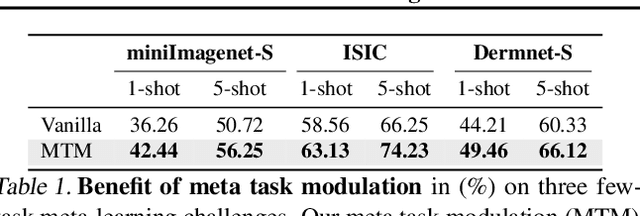

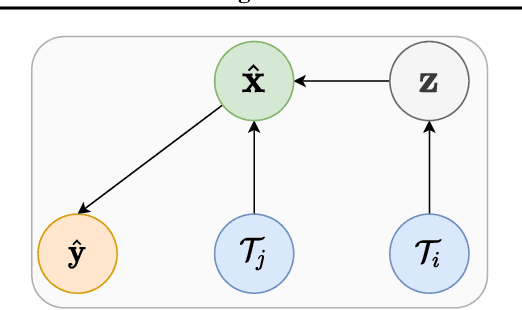

MetaModulation: Learning Variational Feature Hierarchies for Few-Shot Learning with Fewer Tasks

May 17, 2023

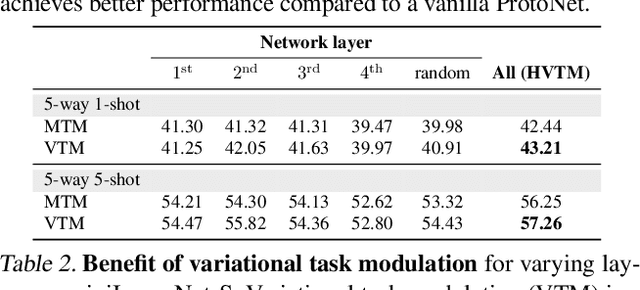

Abstract:Meta-learning algorithms are able to learn a new task using previously learned knowledge, but they often require a large number of meta-training tasks which may not be readily available. To address this issue, we propose a method for few-shot learning with fewer tasks, which we call MetaModulation. The key idea is to use a neural network to increase the density of the meta-training tasks by modulating batch normalization parameters during meta-training. Additionally, we modify parameters at various network levels, rather than just a single layer, to increase task diversity. To account for the uncertainty caused by the limited training tasks, we propose a variational MetaModulation where the modulation parameters are treated as latent variables. We also introduce learning variational feature hierarchies by the variational MetaModulation, which modulates features at all layers and can consider task uncertainty and generate more diverse tasks. The ablation studies illustrate the advantages of utilizing a learnable task modulation at different levels and demonstrate the benefit of incorporating probabilistic variants in few-task meta-learning. Our MetaModulation and its variational variants consistently outperform state-of-the-art alternatives on four few-task meta-learning benchmarks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge