Weikai Kong

Large Language Models as Automated Aligners for benchmarking Vision-Language Models

Nov 24, 2023

Abstract:With the advancements in Large Language Models (LLMs), Vision-Language Models (VLMs) have reached a new level of sophistication, showing notable competence in executing intricate cognition and reasoning tasks. However, existing evaluation benchmarks, primarily relying on rigid, hand-crafted datasets to measure task-specific performance, face significant limitations in assessing the alignment of these increasingly anthropomorphic models with human intelligence. In this work, we address the limitations via Auto-Bench, which delves into exploring LLMs as proficient aligners, measuring the alignment between VLMs and human intelligence and value through automatic data curation and assessment. Specifically, for data curation, Auto-Bench utilizes LLMs (e.g., GPT-4) to automatically generate a vast set of question-answer-reasoning triplets via prompting on visual symbolic representations (e.g., captions, object locations, instance relationships, and etc.). The curated data closely matches human intent, owing to the extensive world knowledge embedded in LLMs. Through this pipeline, a total of 28.5K human-verified and 3,504K unfiltered question-answer-reasoning triplets have been curated, covering 4 primary abilities and 16 sub-abilities. We subsequently engage LLMs like GPT-3.5 to serve as judges, implementing the quantitative and qualitative automated assessments to facilitate a comprehensive evaluation of VLMs. Our validation results reveal that LLMs are proficient in both evaluation data curation and model assessment, achieving an average agreement rate of 85%. We envision Auto-Bench as a flexible, scalable, and comprehensive benchmark for evaluating the evolving sophisticated VLMs.

Video Question Answering Using CLIP-Guided Visual-Text Attention

Mar 08, 2023

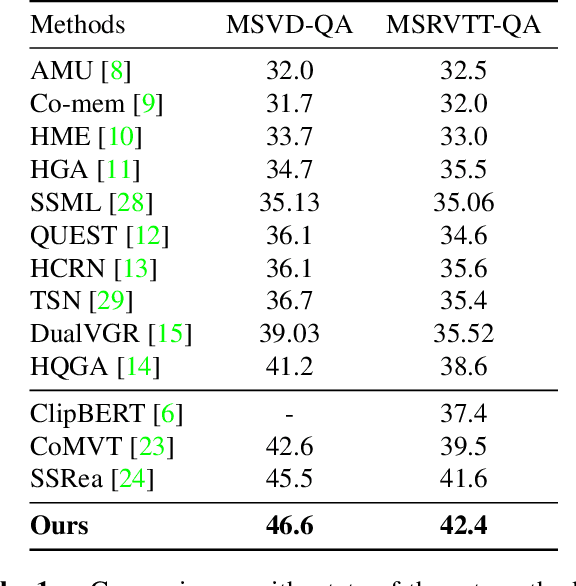

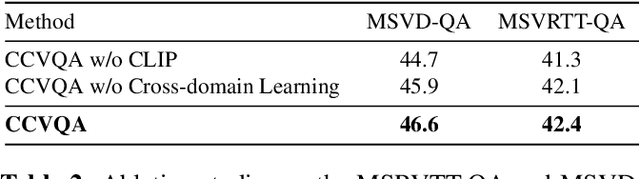

Abstract:Cross-modal learning of video and text plays a key role in Video Question Answering (VideoQA). In this paper, we propose a visual-text attention mechanism to utilize the Contrastive Language-Image Pre-training (CLIP) trained on lots of general domain language-image pairs to guide the cross-modal learning for VideoQA. Specifically, we first extract video features using a TimeSformer and text features using a BERT from the target application domain, and utilize CLIP to extract a pair of visual-text features from the general-knowledge domain through the domain-specific learning. We then propose a Cross-domain Learning to extract the attention information between visual and linguistic features across the target domain and general domain. The set of CLIP-guided visual-text features are integrated to predict the answer. The proposed method is evaluated on MSVD-QA and MSRVTT-QA datasets, and outperforms state-of-the-art methods.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge