Weicheng Chi

Registration-Guided Deep Learning Image Segmentation for Cone Beam CT-based Online Adaptive Radiotherapy

Aug 19, 2021

Abstract: Adaptive radiotherapy (ART), especially online ART, effectively accounts for positioning errors and anatomical changes. One key component of online ART is accurately and efficiently delineating organs at risk (OARs) and targets on online images, such as CBCT, to meet the online demands of plan evaluation and adaptation. Deep learning (DL)-based automatic segmentation has achieved great success in segmenting planning CT, but its application to CBCT has yielded inferior results due to the low image quality and the limited contour labels available for training. To overcome these obstacles to online CBCT segmentation, we propose a registration-guided DL (RgDL) segmentation framework that integrates image registration algorithms and DL segmentation models. The registration algorithm generates initial contours, which the DL model uses as guidance to obtain accurate final segmentations. We implemented the proposed framework in two ways, Rig-RgDL (Rig for rigid body) and Def-RgDL (Def for deformable), using rigid body (RB) registration or deformable image registration (DIR) as the registration algorithm, respectively, and U-Net as the DL model architecture. Both implementations were trained and evaluated on seven OARs in an institutional clinical head and neck (HN) dataset. Compared to the baseline approaches using registration or DL alone, RgDL achieved more accurate segmentation, as measured by higher mean Dice similarity coefficients (DSC) and by other distance-based metrics. Rig-RgDL achieved an average DSC of 84.5% over the seven OARs, higher than RB or DL alone by 4.5% and 4.7%, respectively. Def-RgDL achieved a DSC of 86.5%, higher than DIR or DL alone by 2.4% and 6.7%, respectively. In RgDL, the DL model takes less than one second of inference time to generate the final segmentations of the seven OARs. The resulting segmentation accuracy and efficiency show the promise of applying the RgDL framework to online ART.
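The Dice similarity coefficient reported above compares a predicted mask with a reference mask as DSC = 2|A ∩ B| / (|A| + |B|). The following is a minimal sketch of that formula only, not the authors' evaluation code. The function name `dice_coefficient`, the flat 0/1 mask representation, and the convention of returning 1.0 when both masks are empty are assumptions made for illustration.

```python
def dice_coefficient(pred, ref):
    """Dice similarity coefficient for two binary masks.

    Both masks are flat sequences of 0/1 values of equal length
    (an assumed representation; a real 3D mask would be flattened first).
    """
    if len(pred) != len(ref):
        raise ValueError("masks must have the same number of voxels")

    # Voxels labelled as foreground in both masks.
    intersection = sum(1 for p, r in zip(pred, ref) if p and r)
    # Sum of foreground voxel counts of the two masks.
    total = sum(1 for p in pred if p) + sum(1 for r in ref if r)

    if total == 0:
        # Assumed convention: two empty masks agree perfectly.
        return 1.0
    return 2.0 * intersection / total


if __name__ == "__main__":
    predicted = [1, 1, 0, 0]
    reference = [1, 0, 0, 0]
    print(f"DSC = {dice_coefficient(predicted, reference):.3f}")
```

A DSC of 1.0 means the two masks overlap exactly, and 0.0 means they share no foreground voxels.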

Saliency-Guided Deep Learning Network for Automatic Tumor Bed Volume Delineation in Post-operative Breast Irradiation

May 06, 2021

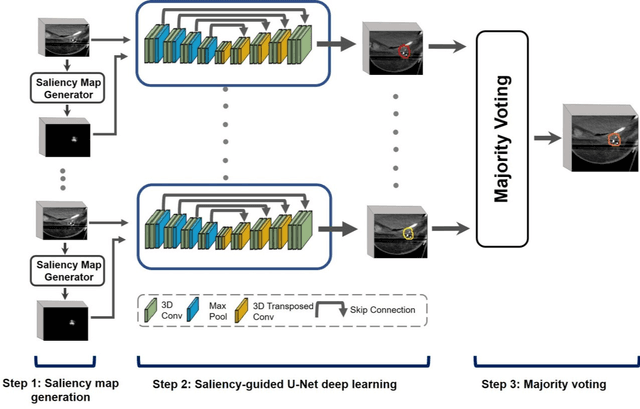

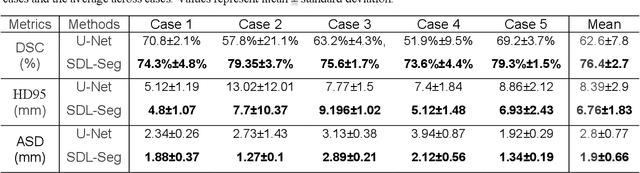

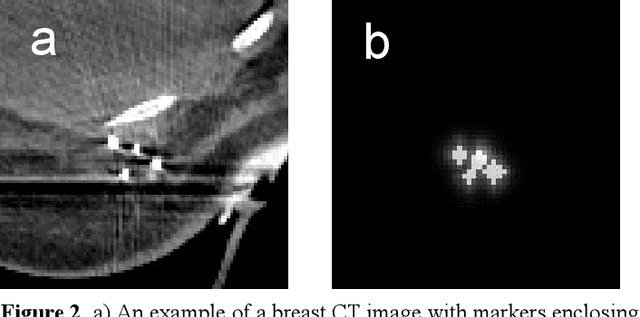

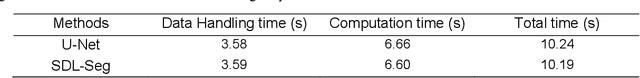

Abstract: Efficient, reliable and reproducible target volume delineation is a key step in the effective planning of breast radiotherapy. However, post-operative breast target delineation is challenging because the contrast between the tumor bed volume (TBV) and normal breast tissue is relatively low in CT images. In this study, we propose to mimic the marker-guidance procedure used in manual target delineation. We developed a saliency-based deep learning segmentation (SDL-Seg) algorithm for accurate TBV segmentation in post-operative breast irradiation. The SDL-Seg algorithm incorporates saliency information, in the form of markers' location cues, into a U-Net model. This design forces the model to encode location-related features, emphasizing regions with high saliency and suppressing regions with low saliency. The saliency maps were generated by identifying markers on CT images. Markers' locations were then converted to probability maps using a distance transformation coupled with a Gaussian filter. Subsequently, the CT images and the corresponding saliency maps formed a multi-channel input for the SDL-Seg network. Our in-house dataset comprised 145 prone CT images from 29 post-operative breast cancer patients who received a 5-fraction partial breast irradiation (PBI) regimen on GammaPod. The performance of the proposed method was compared against a basic U-Net. Our model achieved mean (standard deviation) of 76.4 %, 6.76 mm, and 1.9 mm for DSC, HD95, and ASD respectively on the test set, with a computation time below 11 seconds per CT volume. SDL-Seg showed superior performance relative to the basic U-Net on all evaluation metrics while preserving low computation cost. The findings demonstrate that SDL-Seg is a promising approach for improving the efficiency and accuracy of the online treatment planning procedure of PBI, such as GammaPod-based PBI.
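The conversion of marker locations into a saliency map, and its pairing with the CT image as a multi-channel input, can be sketched roughly as follows. This is an illustrative simplification, not the SDL-Seg implementation: it works on a single 2D slice, and it replaces the distance transformation plus Gaussian filter with a Gaussian function of the distance to the nearest marker. The names `saliency_map` and `stack_channels` and the parameter `sigma` are hypothetical.

```python
import math


def saliency_map(shape, markers, sigma=2.0):
    """Build a 2D saliency map that peaks at marker positions.

    shape   -- (rows, cols) of the slice
    markers -- list of (row, col) marker positions
    sigma   -- assumed spread of the Gaussian falloff, in pixels
    """
    if not markers:
        raise ValueError("at least one marker is required")
    rows, cols = shape
    result = []
    for r in range(rows):
        row = []
        for c in range(cols):
            # Distance from this pixel to the nearest marker
            # (a brute-force stand-in for a distance transform).
            d = min(math.hypot(r - mr, c - mc) for mr, mc in markers)
            # Gaussian falloff: 1.0 at a marker, approaching 0 far away.
            row.append(math.exp(-(d * d) / (2.0 * sigma * sigma)))
        result.append(row)
    return result


def stack_channels(ct_slice, saliency):
    """Pair a CT slice with its saliency map as a two-channel input."""
    if len(ct_slice) != len(saliency) or len(ct_slice[0]) != len(saliency[0]):
        raise ValueError("CT slice and saliency map must have the same shape")
    return [ct_slice, saliency]


if __name__ == "__main__":
    ct = [[0.0] * 5 for _ in range(5)]          # placeholder CT slice
    sal = saliency_map((5, 5), [(2, 2)], sigma=1.0)
    model_input = stack_channels(ct, sal)       # channels-first layout
    print(len(model_input), len(model_input[0]), len(model_input[0][0]))
```

Placing the saliency map in its own channel lets the network see the marker cue at every pixel alongside the CT intensity, which is the general idea the abstract describes.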
