Tsung-Nan Lin

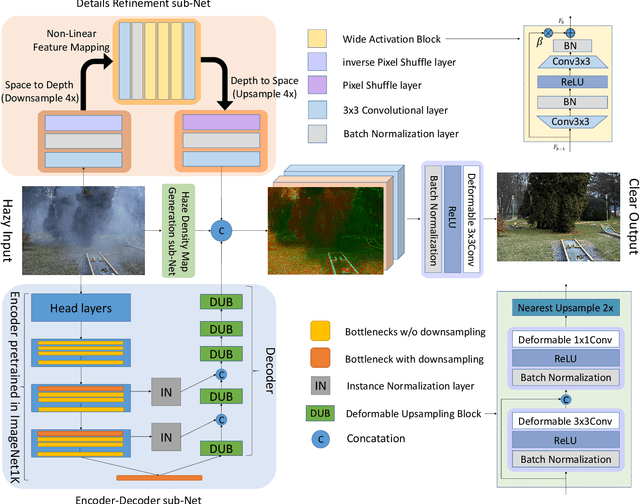

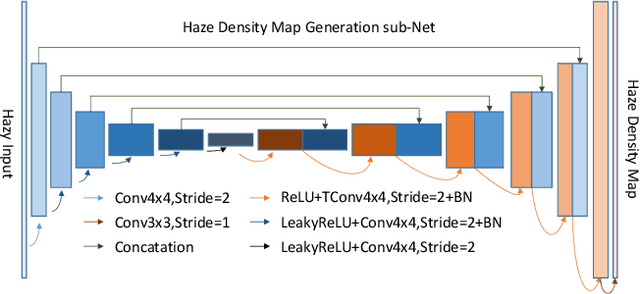

DAMix: Density-Aware Data Augmentation for Unsupervised Domain Adaptation on Single Image Dehazing

Sep 26, 2021

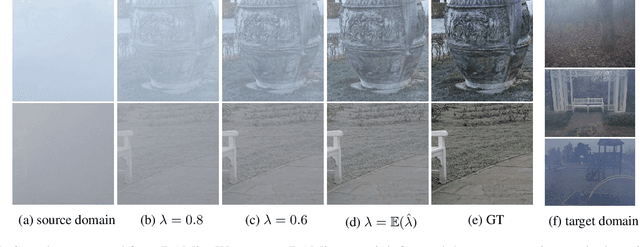

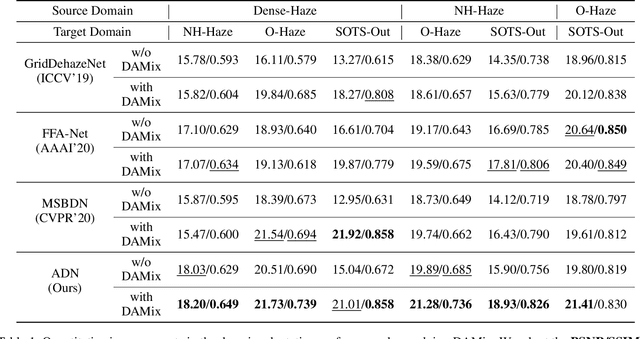

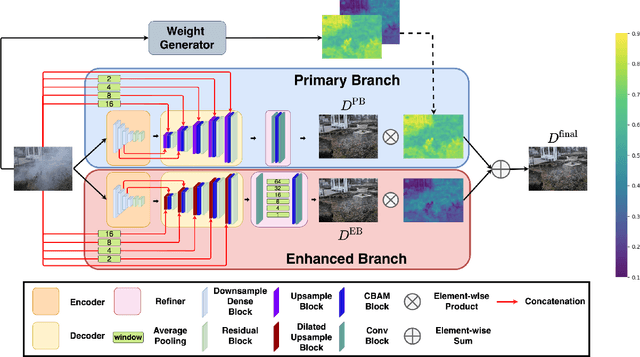

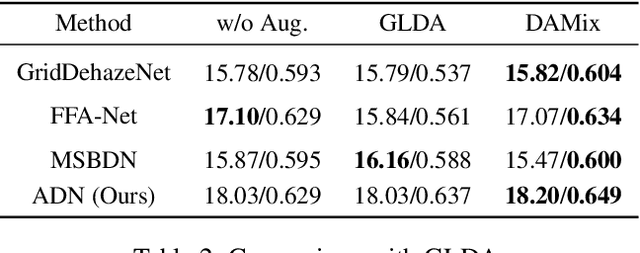

Abstract: Learning-based methods have achieved great success on single image dehazing in recent years. However, these methods often suffer performance degradation under domain shift. Specifically, haze density gaps exist among the existing datasets, often resulting in poor performance when these methods are tested across datasets. To address this issue, we propose a density-aware data augmentation method (DAMix) that generates synthetic hazy samples according to the haze density level of the target domain. These samples are generated by combining a hazy image with its corresponding ground truth using a combination ratio sampled from a density-aware distribution. They not only comply with the atmospheric scattering model but also bridge the haze density gap between the source and target domains. DAMix ensures that the model learns from examples featuring diverse haze densities. To better utilize the various hazy samples generated by DAMix, we develop a dual-branch dehazing network whose two branches adaptively remove haze according to the haze density of each region. In addition, the dual-branch design enlarges the learning capacity of the entire network; hence, our network can fully utilize the DAMix-ed samples. We evaluate the effectiveness of DAMix by applying it to existing open-source dehazing methods. The experimental results demonstrate that all methods show significant improvements after DAMix is applied. Furthermore, by combining DAMix with our model, we achieve state-of-the-art (SOTA) performance in terms of domain adaptation.
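
The mixing step described in the abstract can be sketched as follows, assuming images are NumPy arrays with values in [0, 1]. The Beta distribution and its parameters `alpha` and `beta` are only a stand-in for the density-aware distribution, whose form the abstract does not specify, and the names `damix` and `synthesize_haze` are hypothetical. The final check illustrates why such a blend still complies with the atmospheric scattering model I = J·t + A·(1 − t): blending with ratio λ is equivalent to replacing the transmission t with 1 − λ(1 − t).

```python
import numpy as np


def synthesize_haze(clean, transmission, airlight):
    """Atmospheric scattering model: I = J * t + A * (1 - t)."""
    return clean * transmission + airlight * (1.0 - transmission)


def damix(hazy, clean, rng, alpha=2.0, beta=2.0):
    """Blend a hazy image with its ground truth using a random ratio.

    ASSUMPTION: Beta(alpha, beta) is a placeholder for the density-aware
    distribution; the abstract does not say how that distribution is built.
    """
    lam = rng.beta(alpha, beta)                # combination ratio in [0, 1]
    mixed = lam * hazy + (1.0 - lam) * clean   # convex blend of the pair
    return mixed, lam


rng = np.random.default_rng(0)
clean = rng.random((4, 4, 3))                          # toy ground-truth image J
transmission = rng.uniform(0.2, 0.9, size=(4, 4, 1))   # toy transmission map t
airlight = 0.8                                         # toy global airlight A
hazy = synthesize_haze(clean, transmission, airlight)

mixed, lam = damix(hazy, clean, rng)

# The blend equals a scattering-model image with a lighter transmission map.
equivalent_t = 1.0 - lam * (1.0 - transmission)
assert np.allclose(mixed, synthesize_haze(clean, equivalent_t, airlight))
```

A smaller ratio yields a thinner haze, so the choice of sampling distribution controls which haze densities the model sees during training.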

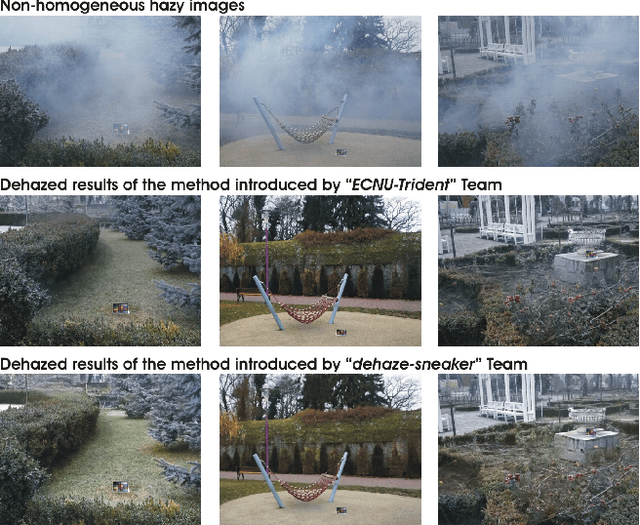

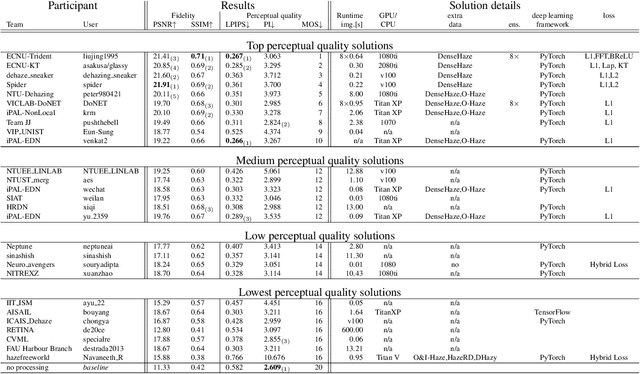

NTIRE 2020 Challenge on NonHomogeneous Dehazing

May 07, 2020

Abstract: This paper reviews the NTIRE 2020 Challenge on NonHomogeneous Dehazing of images (restoration of rich details in hazy images). We focus on the proposed solutions and their results evaluated on NH-Haze, a novel dataset consisting of 55 pairs of real haze-free and nonhomogeneous hazy images recorded outdoors. NH-Haze is the first realistic nonhomogeneous haze dataset that provides ground truth images. The nonhomogeneous haze has been produced using a professional haze generator that imitates the real conditions of haze scenes. A total of 168 participants registered for the challenge, and 27 teams competed in the final testing phase. The proposed solutions gauge the state of the art in image dehazing.

Vanishing Nodes: Another Phenomenon That Makes Training Deep Neural Networks Difficult

Oct 22, 2019

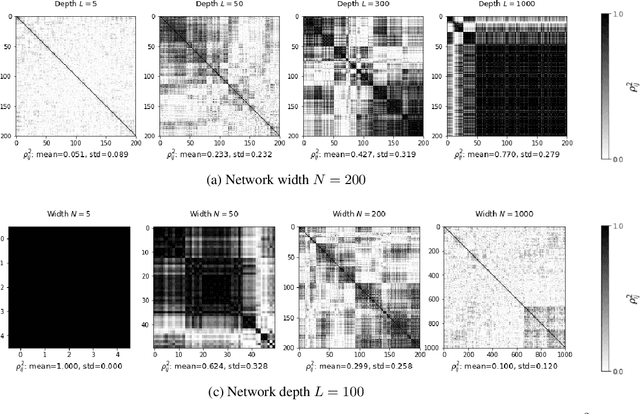

Abstract: It is well known that the problem of vanishing/exploding gradients is a challenge when training deep networks. In this paper, we describe another phenomenon, called vanishing nodes, that also increases the difficulty of training deep neural networks. As the depth of a neural network increases, the network's hidden nodes behave in an increasingly correlated way. This results in great similarities between these nodes. The redundancy of hidden nodes thus increases as the network becomes deeper. We call this problem vanishing nodes, and we propose the vanishing node indicator (VNI) as a metric for quantitatively measuring the degree of vanishing nodes. The VNI can be characterized by the network parameters, and it is shown analytically to be proportional to the depth of the network and inversely proportional to the network width. The theoretical results show that the effective number of nodes vanishes to one when the VNI increases to one (its maximal value), and that vanishing/exploding gradients and vanishing nodes are two different challenges that increase the difficulty of training deep neural networks. The numerical results from the experiments suggest that the degree of vanishing nodes becomes more evident during back-propagation training, and that when the VNI is equal to 1, the network cannot learn simple tasks (e.g., the XOR problem) even when the gradients are neither vanishing nor exploding. We refer to such gradients as walking dead gradients: they cannot help the network converge even though their scale is sufficiently large. Finally, the experiments show that the likelihood of failed training increases as the depth of the network increases. The training becomes much more difficult due to the lack of network representation capability.
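
The correlation effect described in the abstract can be explored with a small NumPy experiment. The sketch below is an illustrative proxy only: it measures the mean squared pairwise correlation between hidden nodes, which is not the paper's VNI definition (the abstract does not give one). The function names, the tanh activation, the Gaussian weight scale, and the chosen depths and width are all assumptions made for illustration.

```python
import numpy as np


def mean_squared_correlation(activations):
    """Mean squared Pearson correlation over distinct pairs of hidden nodes.

    activations has shape (num_samples, num_nodes). A value near 1 means the
    nodes are almost redundant; a value near 0 means they are nearly
    uncorrelated. This is a PROXY, not the paper's VNI.
    """
    corr = np.corrcoef(activations, rowvar=False)   # (nodes, nodes) matrix
    n = corr.shape[0]
    off_diagonal = corr[~np.eye(n, dtype=bool)]     # drop self-correlations
    return float(np.mean(off_diagonal ** 2))


def hidden_activations(x, depth, width, rng):
    """Forward pass through a randomly initialized tanh MLP (assumed setup)."""
    h = x
    for _ in range(depth):
        fan_in = h.shape[1]
        w = rng.normal(0.0, 1.0 / np.sqrt(fan_in), size=(fan_in, width))
        h = np.tanh(h @ w)
    return h


rng = np.random.default_rng(0)
x = rng.normal(size=(512, 16))                      # toy input batch
for depth in (1, 10, 50):
    h = hidden_activations(x, depth, width=16, rng=rng)
    print(depth, mean_squared_correlation(h))
```

Varying `depth` and `width` in this toy setup gives a rough feel for how node redundancy might change with network shape; it does not reproduce the paper's analysis or experiments.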
