Tong Nie

ADV-0: Closed-Loop Min-Max Adversarial Training for Long-Tail Robustness in Autonomous Driving

Mar 16, 2026Abstract:Deploying autonomous driving systems requires robustness against long-tail scenarios that are rare but safety-critical. While adversarial training offers a promising solution, existing methods typically decouple scenario generation from policy optimization and rely on heuristic surrogates. This leads to objective misalignment and fails to capture the shifting failure modes of evolving policies. This paper presents ADV-0, a closed-loop min-max optimization framework that treats the interaction between driving policy (defender) and adversarial agent (attacker) as a zero-sum Markov game. By aligning the attacker's utility directly with the defender's objective, we reveal the optimal adversary distribution. To make this tractable, we cast dynamic adversary evolution as iterative preference learning, efficiently approximating this optimum and offering an algorithm-agnostic solution to the game. Theoretically, ADV-0 converges to a Nash Equilibrium and maximizes a certified lower bound on real-world performance. Experiments indicate that it effectively exposes diverse safety-critical failures and greatly enhances the generalizability of both learned policies and motion planners against unseen long-tail risks.

Seeking to Collide: Online Safety-Critical Scenario Generation for Autonomous Driving with Retrieval Augmented Large Language Models

May 02, 2025Abstract:Simulation-based testing is crucial for validating autonomous vehicles (AVs), yet existing scenario generation methods either overfit to common driving patterns or operate in an offline, non-interactive manner that fails to expose rare, safety-critical corner cases. In this paper, we introduce an online, retrieval-augmented large language model (LLM) framework for generating safety-critical driving scenarios. Our method first employs an LLM-based behavior analyzer to infer the most dangerous intent of the background vehicle from the observed state, then queries additional LLM agents to synthesize feasible adversarial trajectories. To mitigate catastrophic forgetting and accelerate adaptation, we augment the framework with a dynamic memorization and retrieval bank of intent-planner pairs, automatically expanding its behavioral library when novel intents arise. Evaluations using the Waymo Open Motion Dataset demonstrate that our model reduces the mean minimum time-to-collision from 1.62 to 1.08 s and incurs a 75% collision rate, substantially outperforming baselines.

Exploring the Roles of Large Language Models in Reshaping Transportation Systems: A Survey, Framework, and Roadmap

Mar 27, 2025Abstract:Modern transportation systems face pressing challenges due to increasing demand, dynamic environments, and heterogeneous information integration. The rapid evolution of Large Language Models (LLMs) offers transformative potential to address these challenges. Extensive knowledge and high-level capabilities derived from pretraining evolve the default role of LLMs as text generators to become versatile, knowledge-driven task solvers for intelligent transportation systems. This survey first presents LLM4TR, a novel conceptual framework that systematically categorizes the roles of LLMs in transportation into four synergetic dimensions: information processors, knowledge encoders, component generators, and decision facilitators. Through a unified taxonomy, we systematically elucidate how LLMs bridge fragmented data pipelines, enhance predictive analytics, simulate human-like reasoning, and enable closed-loop interactions across sensing, learning, modeling, and managing tasks in transportation systems. For each role, our review spans diverse applications, from traffic prediction and autonomous driving to safety analytics and urban mobility optimization, highlighting how emergent capabilities of LLMs such as in-context learning and step-by-step reasoning can enhance the operation and management of transportation systems. We further curate practical guidance, including available resources and computational guidelines, to support real-world deployment. By identifying challenges in existing LLM-based solutions, this survey charts a roadmap for advancing LLM-driven transportation research, positioning LLMs as central actors in the next generation of cyber-physical-social mobility ecosystems. Online resources can be found in the project page: https://github.com/tongnie/awesome-llm4tr.

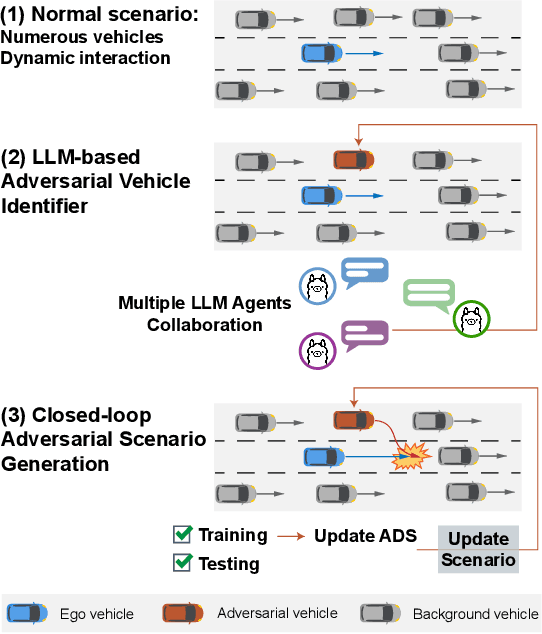

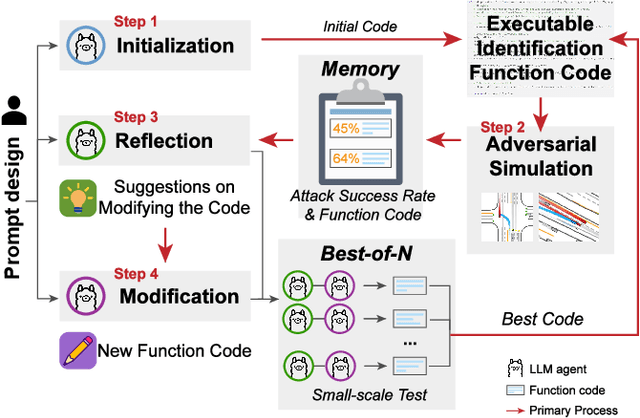

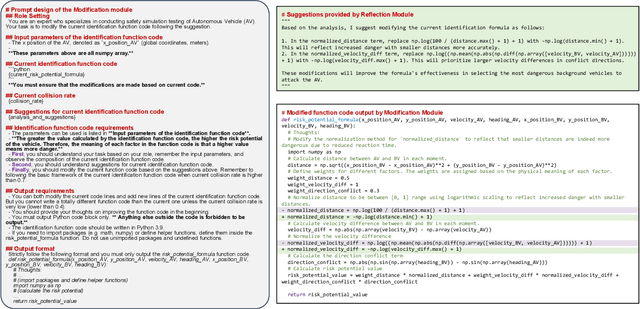

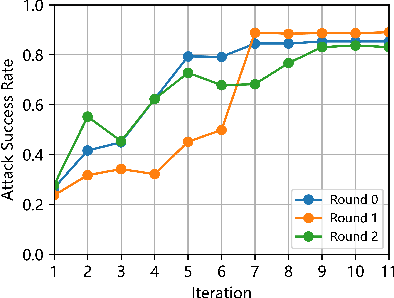

LLM-attacker: Enhancing Closed-loop Adversarial Scenario Generation for Autonomous Driving with Large Language Models

Jan 27, 2025

Abstract:Ensuring and improving the safety of autonomous driving systems (ADS) is crucial for the deployment of highly automated vehicles, especially in safety-critical events. To address the rarity issue, adversarial scenario generation methods are developed, in which behaviors of traffic participants are manipulated to induce safety-critical events. However, existing methods still face two limitations. First, identification of the adversarial participant directly impacts the effectiveness of the generation. However, the complexity of real-world scenarios, with numerous participants and diverse behaviors, makes identification challenging. Second, the potential of generated safety-critical scenarios to continuously improve ADS performance remains underexplored. To address these issues, we propose LLM-attacker: a closed-loop adversarial scenario generation framework leveraging large language models (LLMs). Specifically, multiple LLM agents are designed and coordinated to identify optimal attackers. Then, the trajectories of the attackers are optimized to generate adversarial scenarios. These scenarios are iteratively refined based on the performance of ADS, forming a feedback loop to improve ADS. Experimental results show that LLM-attacker can create more dangerous scenarios than other methods, and the ADS trained with it achieves a collision rate half that of training with normal scenarios. This indicates the ability of LLM-attacker to test and enhance the safety and robustness of ADS. Video demonstrations are provided at: https://drive.google.com/file/d/1Zv4V3iG7825oyiKbUwS2Y-rR0DQIE1ZA/view.

Collaborative Imputation of Urban Time Series through Cross-city Meta-learning

Jan 20, 2025

Abstract:Urban time series, such as mobility flows, energy consumption, and pollution records, encapsulate complex urban dynamics and structures. However, data collection in each city is impeded by technical challenges such as budget limitations and sensor failures, necessitating effective data imputation techniques that can enhance data quality and reliability. Existing imputation models, categorized into learning-based and analytics-based paradigms, grapple with the trade-off between capacity and generalizability. Collaborative learning to reconstruct data across multiple cities holds the promise of breaking this trade-off. Nevertheless, urban data's inherent irregularity and heterogeneity issues exacerbate challenges of knowledge sharing and collaboration across cities. To address these limitations, we propose a novel collaborative imputation paradigm leveraging meta-learned implicit neural representations (INRs). INRs offer a continuous mapping from domain coordinates to target values, integrating the strengths of both paradigms. By imposing embedding theory, we first employ continuous parameterization to handle irregularity and reconstruct the dynamical system. We then introduce a cross-city collaborative learning scheme through model-agnostic meta learning, incorporating hierarchical modulation and normalization techniques to accommodate multiscale representations and reduce variance in response to heterogeneity. Extensive experiments on a diverse urban dataset from 20 global cities demonstrate our model's superior imputation performance and generalizability, underscoring the effectiveness of collaborative imputation in resource-constrained settings.

Joint Estimation and Prediction of City-wide Delivery Demand: A Large Language Model Empowered Graph-based Learning Approach

Aug 30, 2024

Abstract:The proliferation of e-commerce and urbanization has significantly intensified delivery operations in urban areas, boosting the volume and complexity of delivery demand. Data-driven predictive methods, especially those utilizing machine learning techniques, have emerged to handle these complexities in urban delivery demand management problems. One particularly pressing problem that has not yet been sufficiently studied is the joint estimation and prediction of city-wide delivery demand. To this end, we formulate this problem as a graph-based spatiotemporal learning task. First, a message-passing neural network model is formalized to capture the interaction between demand patterns of associated regions. Second, by exploiting recent advances in large language models, we extract general geospatial knowledge encodings from the unstructured locational data and integrate them into the demand predictor. Last, to encourage the cross-city transferability of the model, an inductive training scheme is developed in an end-to-end routine. Extensive empirical results on two real-world delivery datasets, including eight cities in China and the US, demonstrate that our model significantly outperforms state-of-the-art baselines in these challenging tasks.

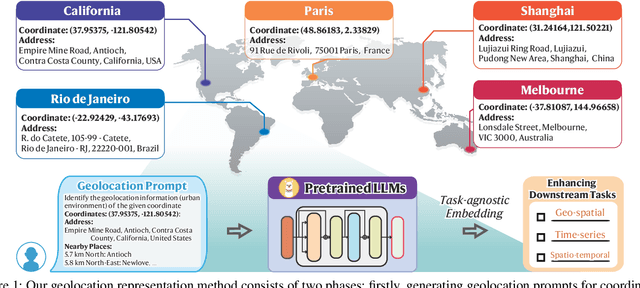

Geolocation Representation from Large Language Models are Generic Enhancers for Spatio-Temporal Learning

Aug 22, 2024

Abstract:In the geospatial domain, universal representation models are significantly less prevalent than their extensive use in natural language processing and computer vision. This discrepancy arises primarily from the high costs associated with the input of existing representation models, which often require street views and mobility data. To address this, we develop a novel, training-free method that leverages large language models (LLMs) and auxiliary map data from OpenStreetMap to derive geolocation representations (LLMGeovec). LLMGeovec can represent the geographic semantics of city, country, and global scales, which acts as a generic enhancer for spatio-temporal learning. Specifically, by direct feature concatenation, we introduce a simple yet effective paradigm for enhancing multiple spatio-temporal tasks including geographic prediction (GP), long-term time series forecasting (LTSF), and graph-based spatio-temporal forecasting (GSTF). LLMGeovec can seamlessly integrate into a wide spectrum of spatio-temporal learning models, providing immediate enhancements. Experimental results demonstrate that LLMGeovec achieves global coverage and significantly boosts the performance of leading GP, LTSF, and GSTF models.

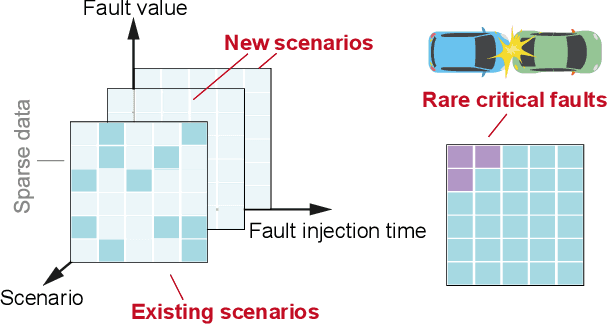

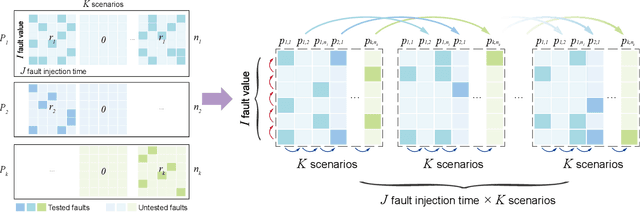

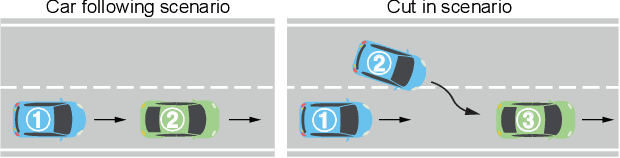

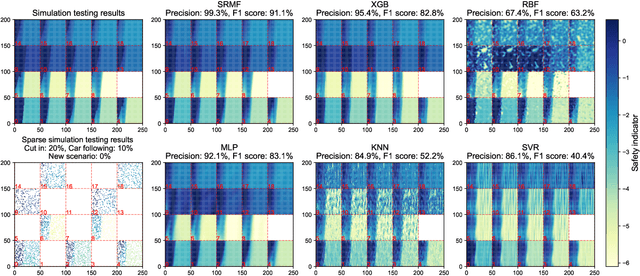

High-Dimensional Fault Tolerance Testing of Highly Automated Vehicles Based on Low-Rank Models

Jul 28, 2024

Abstract:Ensuring fault tolerance of Highly Automated Vehicles (HAVs) is crucial for their safety due to the presence of potentially severe faults. Hence, Fault Injection (FI) testing is conducted by practitioners to evaluate the safety level of HAVs. To fully cover test cases, various driving scenarios and fault settings should be considered. However, due to numerous combinations of test scenarios and fault settings, the testing space can be complex and high-dimensional. In addition, evaluating performance in all newly added scenarios is resource-consuming. The rarity of critical faults that can cause security problems further strengthens the challenge. To address these challenges, we propose to accelerate FI testing under the low-rank Smoothness Regularized Matrix Factorization (SRMF) framework. We first organize the sparse evaluated data into a structured matrix based on its safety values. Then the untested values are estimated by the correlation captured by the matrix structure. To address high dimensionality, a low-rank constraint is imposed on the testing space. To exploit the relationships between existing scenarios and new scenarios and capture the local regularity of critical faults, three types of smoothness regularization are further designed as a complement. We conduct experiments on car following and cut in scenarios. The results indicate that SRMF has the lowest prediction error in various scenarios and is capable of predicting rare critical faults compared to other machine learning models. In addition, SRMF can achieve 1171 acceleration rate, 99.3% precision and 91.1% F1 score in identifying critical faults. To the best of our knowledge, this is the first work to introduce low-rank models to FI testing of HAVs.

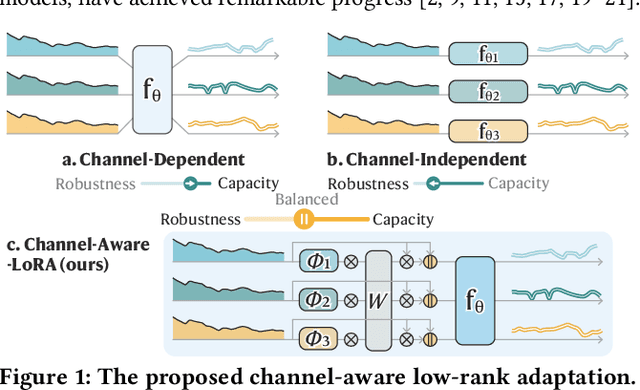

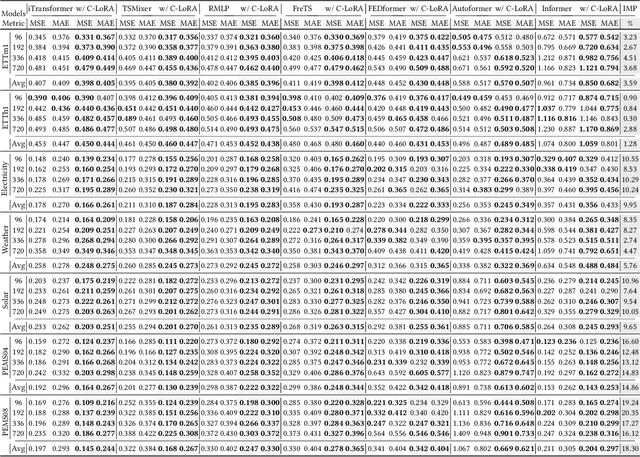

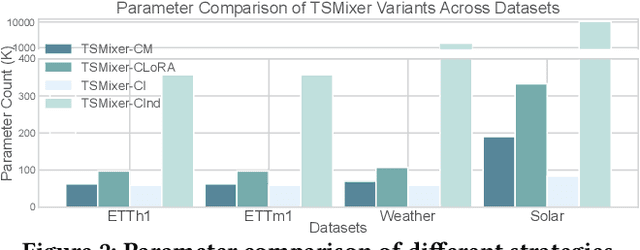

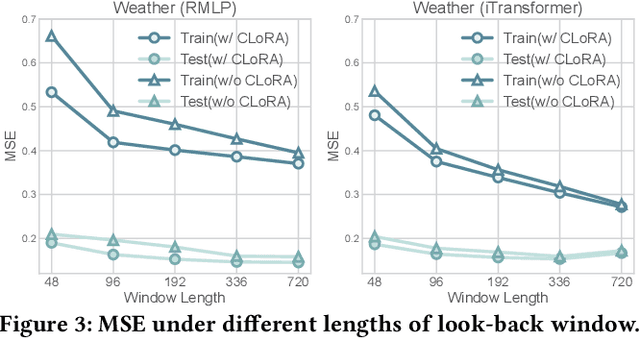

Channel-Aware Low-Rank Adaptation in Time Series Forecasting

Jul 24, 2024

Abstract:The balance between model capacity and generalization has been a key focus of recent discussions in long-term time series forecasting. Two representative channel strategies are closely associated with model expressivity and robustness, including channel independence (CI) and channel dependence (CD). The former adopts individual channel treatment and has been shown to be more robust to distribution shifts, but lacks sufficient capacity to model meaningful channel interactions. The latter is more expressive for representing complex cross-channel dependencies, but is prone to overfitting. To balance the two strategies, we present a channel-aware low-rank adaptation method to condition CD models on identity-aware individual components. As a plug-in solution, it is adaptable for a wide range of backbone architectures. Extensive experiments show that it can consistently and significantly improve the performance of both CI and CD models with demonstrated efficiency and flexibility. The code is available at https://github.com/tongnie/C-LoRA.

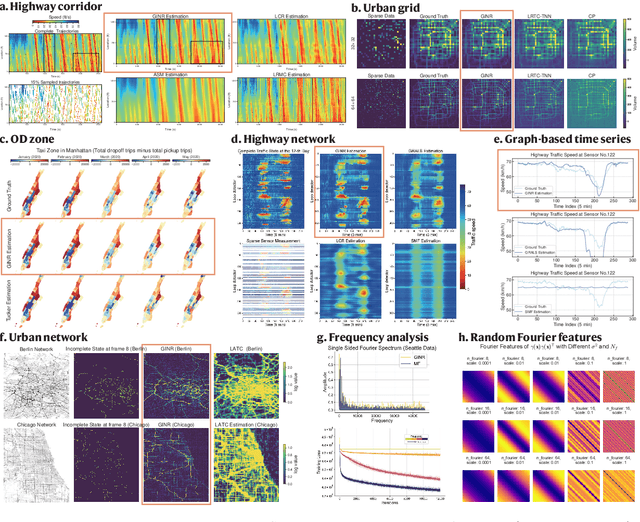

Generalizable Implicit Neural Representation As a Universal Spatiotemporal Traffic Data Learner

Jun 13, 2024

Abstract:$\textbf{This is the conference version of our paper: Spatiotemporal Implicit Neural Representation as a Generalized Traffic Data Learner}$. Spatiotemporal Traffic Data (STTD) measures the complex dynamical behaviors of the multiscale transportation system. Existing methods aim to reconstruct STTD using low-dimensional models. However, they are limited to data-specific dimensions or source-dependent patterns, restricting them from unifying representations. Here, we present a novel paradigm to address the STTD learning problem by parameterizing STTD as an implicit neural representation. To discern the underlying dynamics in low-dimensional regimes, coordinate-based neural networks that can encode high-frequency structures are employed to directly map coordinates to traffic variables. To unravel the entangled spatial-temporal interactions, the variability is decomposed into separate processes. We further enable modeling in irregular spaces such as sensor graphs using spectral embedding. Through continuous representations, our approach enables the modeling of a variety of STTD with a unified input, thereby serving as a generalized learner of the underlying traffic dynamics. It is also shown that it can learn implicit low-rank priors and smoothness regularization from the data, making it versatile for learning different dominating data patterns. We validate its effectiveness through extensive experiments in real-world scenarios, showcasing applications from corridor to network scales. Empirical results not only indicate that our model has significant superiority over conventional low-rank models, but also highlight that the versatility of the approach. We anticipate that this pioneering modeling perspective could lay the foundation for universal representation of STTD in various real-world tasks. $\textbf{The full version can be found at:}$ https://doi.org/10.48550/arXiv.2405.03185.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge