Tingmin Li

The Solution for the sequential task continual learning track of the 2nd Greater Bay Area International Algorithm Competition

Jul 06, 2024

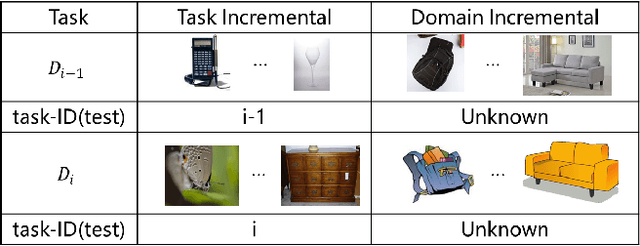

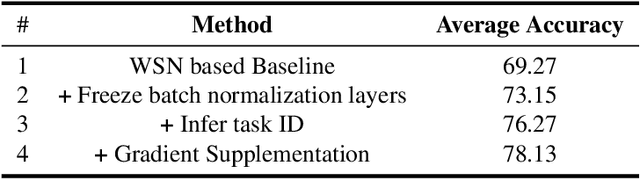

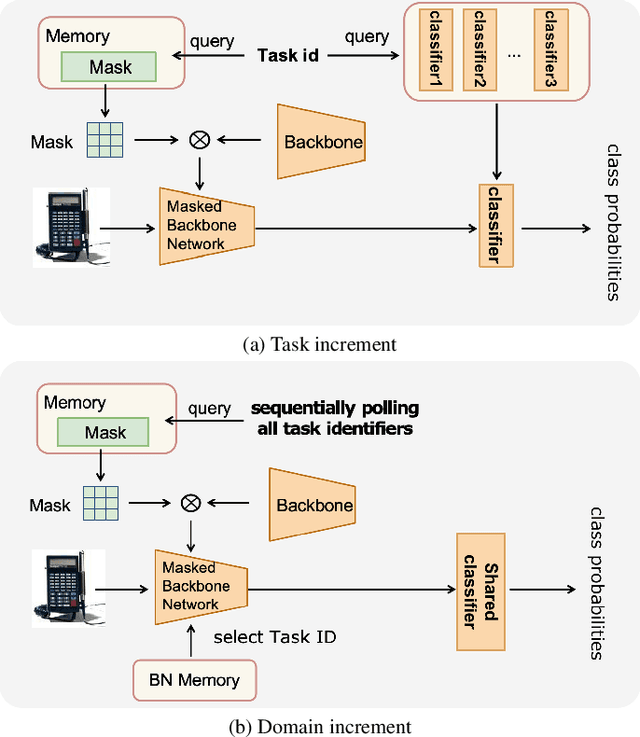

Abstract: This paper presents a data-free, parameter-isolation-based continual learning algorithm that we developed for the sequential task continual learning track of the 2nd Greater Bay Area International Algorithm Competition. The method learns an independent parameter subspace for each task within the network's convolutional and linear layers and freezes the batch normalization layers after the first task. Specifically, in the domain incremental setting, where all domains share a classification head, we freeze the shared head once the first task is completed, which effectively addresses catastrophic forgetting. In addition, because the domain incremental setting does not provide task identities, we designed a task identity inference strategy that selects an appropriate mask matrix for each sample. Furthermore, we introduced a gradient supplementation strategy that raises the importance of parameters not yet selected for the current task, facilitating the learning of new tasks. We also implemented an adaptive importance scoring strategy that dynamically adjusts the number of parameters used, optimizing single-task performance while reducing parameter usage. Moreover, given the limits on storage space and inference time, we designed a mask matrix compression strategy that saves storage space and speeds up the encryption and decryption of the mask matrix. Our approach requires neither expanding the core network nor using external auxiliary networks or data, and it performs well under both task incremental and domain incremental settings. This solution ultimately won a second-place prize in the competition.
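
The mask-based parameter isolation described in this abstract can be illustrated with a minimal sketch. It assumes per-weight importance scores are already available, keeps a fixed fraction of the highest-scoring weights as the task's binary mask (a simplification standing in for the adaptive scoring strategy, whose details the abstract does not give), and bit-packs the mask into bytes as one plausible compression scheme. The names `select_mask`, `pack_mask`, and `unpack_mask` are hypothetical and are not taken from the authors' code.

```python
from typing import List


def select_mask(scores: List[float], keep_ratio: float) -> List[int]:
    """Binary mask keeping the top `keep_ratio` fraction of weights by importance score.

    Assumption: a fixed keep ratio stands in for the adaptive importance scoring strategy.
    """
    k = max(1, int(round(keep_ratio * len(scores))))
    # Indices of the k highest-scoring weights form this task's subnetwork.
    top = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
    mask = [0] * len(scores)
    for i in top:
        mask[i] = 1
    return mask


def pack_mask(mask: List[int]) -> bytes:
    """Pack a 0/1 mask into bytes, 8 entries per byte, most significant bit first."""
    out = bytearray()
    for start in range(0, len(mask), 8):
        chunk = mask[start:start + 8]
        byte = 0
        for bit in chunk:
            byte = (byte << 1) | bit
        byte <<= 8 - len(chunk)  # zero-pad the final partial byte
        out.append(byte)
    return bytes(out)


def unpack_mask(packed: bytes, length: int) -> List[int]:
    """Inverse of pack_mask; `length` drops the zero padding at the end."""
    bits = []
    for byte in packed:
        for shift in range(7, -1, -1):
            bits.append((byte >> shift) & 1)
    return bits[:length]


if __name__ == "__main__":
    scores = [0.9, 0.1, 0.5, 0.7, 0.2, 0.8, 0.3, 0.4, 0.6, 0.05]
    mask = select_mask(scores, keep_ratio=0.5)
    packed = pack_mask(mask)
    print(mask, list(packed), unpack_mask(packed, len(mask)) == mask)
```

Bit-packing stores eight mask entries per byte, roughly an 8x saving over one byte per entry; the abstract does not say which compression scheme the authors actually used.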

Proposal Report for the 2nd SciCAP Competition 2024

Jul 02, 2024

Abstract: In this paper, we propose a method for document summarization using auxiliary information. This approach effectively summarizes the descriptions of specific images, tables, and appendices within lengthy texts. Our experiments demonstrate that leveraging high-quality OCR data and information initially extracted from the original text enables efficient summarization of the content related to the described objects. Based on these findings, we enhanced popular text generation models by incorporating additional auxiliary branches to improve summarization performance. Our method achieved top scores of 4.33 and 4.66 in the long caption and short caption tracks, respectively, of the 2024 SciCAP competition, ranking first in both categories.
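
One way to assemble the kind of auxiliary input this abstract mentions is sketched below. It is a hypothetical preprocessing step, not the authors' pipeline: it gathers the paragraphs that mention a given figure number via a regular expression and concatenates them with the figure's OCR text into a single input string for a summarization model. The function names, the `OCR:`/`Mention:` prefixes, and the `max_chars` truncation are illustrative assumptions.

```python
import re
from typing import List


def find_mentions(paragraphs: List[str], fig_number: int) -> List[str]:
    """Return paragraphs that reference the given figure, e.g. "Figure 3" or "Fig. 3"."""
    # The trailing \b keeps "Fig. 30" from matching figure 3.
    pattern = re.compile(rf"\b(?:Figure|Fig\.)\s*{fig_number}\b")
    return [p for p in paragraphs if pattern.search(p)]


def build_input(paragraphs: List[str], fig_number: int, ocr_text: str,
                max_chars: int = 2000) -> str:
    """Concatenate OCR text and figure mentions into one model input (hypothetical format)."""
    parts = [f"OCR: {ocr_text}"]
    parts += [f"Mention: {p}" for p in find_mentions(paragraphs, fig_number)]
    # Crude character-level truncation; a real system would more likely truncate by tokens.
    return "\n".join(parts)[:max_chars]


if __name__ == "__main__":
    paras = ["Figure 3 shows accuracy.", "See Fig. 30 for loss.",
             "As in Fig. 3, the gap shrinks.", "No figures here."]
    print(build_input(paras, 3, "acc vs epoch"))
```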

The Championship-Winning Solution for the 5th CLVISION Challenge 2024

Jun 24, 2024

Abstract: In this paper, we introduce our approach to the 5th CLVision Challenge, which poses distinctive challenges beyond traditional class incremental learning. Unlike standard settings, this competition features the recurrence of previously encountered classes and includes unlabeled data that may contain out-of-distribution (OOD) categories. Our approach builds on Winning Subnetworks, allocating an independent parameter space to each task to address catastrophic forgetting in class incremental learning. It employs three training strategies (supervised classification learning, unsupervised contrastive learning, and pseudo-label classification learning) to fully exploit both labeled and unlabeled data and enhance the classification performance of each subnetwork. Furthermore, during inference, we devised an interaction strategy between subnetworks: the prediction for a given class of a particular sample is the average of that class's logits across the subnetworks that correspond to it, leveraging the knowledge that different subnetworks have learned about recurring classes to improve classification accuracy. These strategies can be applied simultaneously to all three scenarios of the competition, effectively addressing their difficulties. Experimentally, our method ranks first in both the pre-selection and final evaluation stages, with an average accuracy of 0.4535 in the pre-selection stage and 0.4805 in the final evaluation stage.
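
The subnetwork interaction rule in this abstract can be sketched as follows, assuming each subnetwork produces logits over the full label space and that the set of classes each subnetwork was trained on is known. For every class, the logits from the subnetworks that saw that class are averaged; logits a subnetwork emits for classes it never trained on are ignored, and classes no subnetwork has seen receive negative infinity. `combine_logits` and `predict` are hypothetical names for illustration, not the authors' code.

```python
from typing import List, Set


def combine_logits(subnet_logits: List[List[float]],
                   subnet_classes: List[Set[int]],
                   num_classes: int) -> List[float]:
    """Per class, average the logits of the subnetworks trained on that class.

    Assumption: each subnetwork outputs logits for all classes, and logits for
    classes outside its own training set are ignored.
    """
    combined = [float("-inf")] * num_classes
    for c in range(num_classes):
        vals = [logits[c] for logits, classes in zip(subnet_logits, subnet_classes)
                if c in classes]
        if vals:  # recurring classes seen by several subnetworks get an average
            combined[c] = sum(vals) / len(vals)
    return combined


def predict(subnet_logits: List[List[float]],
            subnet_classes: List[Set[int]],
            num_classes: int) -> int:
    """Return the class with the highest combined logit."""
    combined = combine_logits(subnet_logits, subnet_classes, num_classes)
    return max(range(num_classes), key=lambda c: combined[c])


if __name__ == "__main__":
    # Subnetwork A trained on classes {0, 1}; subnetwork B on {1, 2}.
    logits = [[2.0, 1.0, 9.0], [5.0, 4.0, 1.5]]
    classes = [{0, 1}, {1, 2}]
    print(combine_logits(logits, classes, 3))  # [2.0, 2.5, 1.5]
    print(predict(logits, classes, 3))  # 1
```

In the example, subnetwork A's large logit for class 2 is discarded because A never trained on that class, while class 1, which recurs in both tasks, receives the average of both subnetworks' logits.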
