Thomas Bolander

Technical University of Denmark

A Logic of General Attention Using Edge-Conditioned Event Models (Extended Version)

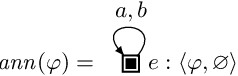

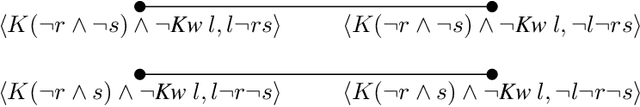

May 20, 2025

Abstract:In this work, we present the first general logic of attention. Attention is a powerful cognitive ability that allows agents to focus on potentially complex information, such as logically structured propositions, higher-order beliefs, or what other agents pay attention to. This ability is a strength, as it helps to ignore what is irrelevant, but it can also introduce biases when some types of information or agents are systematically ignored. Existing dynamic epistemic logics for attention cannot model such complex attention scenarios, as they only model attention to atomic formulas. Additionally, such logics quickly become cumbersome, as their size grows exponentially in the number of agents and announced literals. Here, we introduce a logic that overcomes both limitations. First, we generalize edge-conditioned event models, which we show to be as expressive as standard event models yet exponentially more succinct (generalizing both standard event models and generalized arrow updates). Second, we extend attention to arbitrary formulas, allowing agents to also attend to other agents' beliefs or attention. Our work treats attention as a modality, like belief or awareness. We introduce attention principles that impose closure properties on that modality and that can be used in its axiomatization. Throughout, we illustrate our framework with examples of AI agents reasoning about human attentional biases, demonstrating how such agents can discover attentional biases.

Depth-Bounded Epistemic Planning

Jun 03, 2024

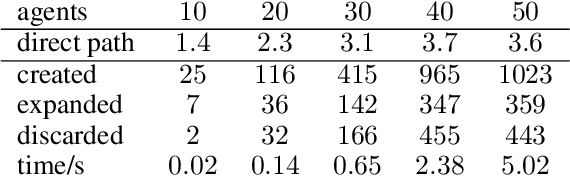

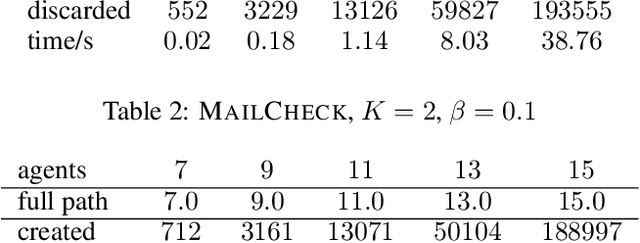

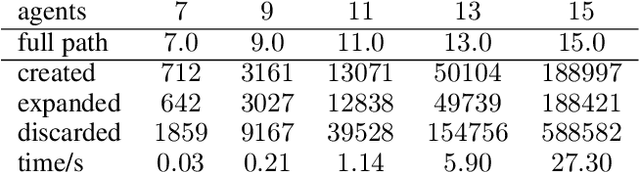

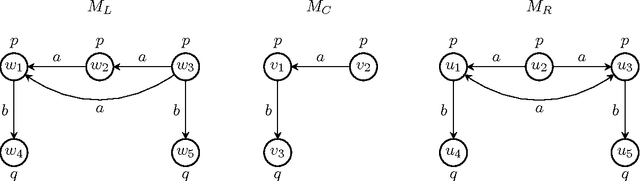

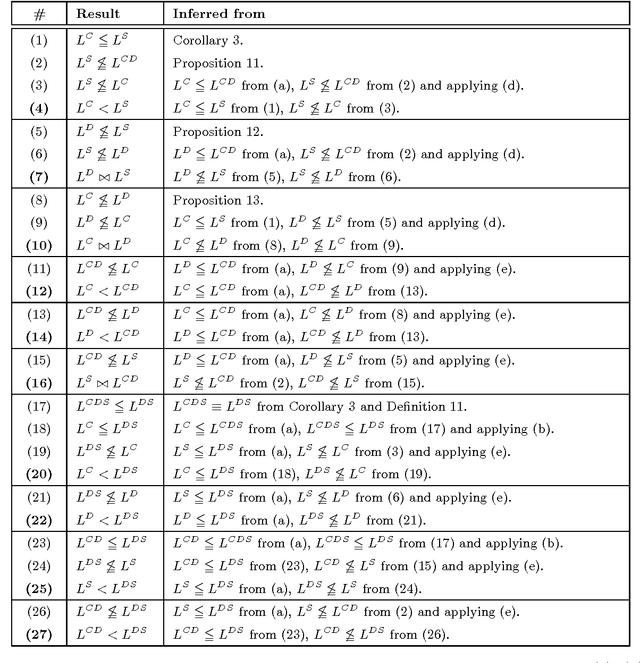

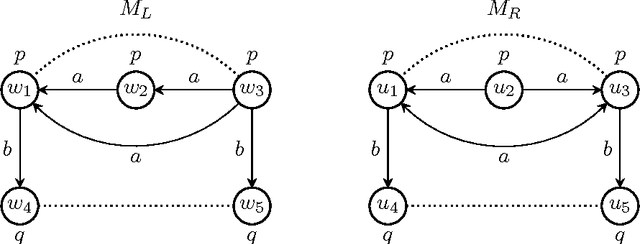

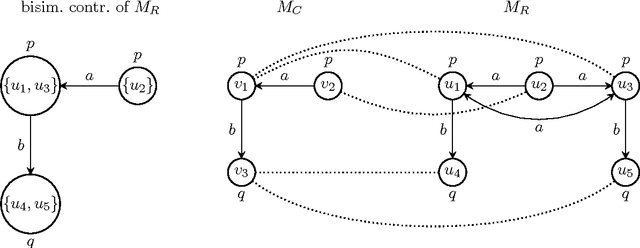

Abstract:In this paper, we propose a novel algorithm for epistemic planning based on dynamic epistemic logic (DEL). The novelty is that we limit the depth of reasoning of the planning agent to an upper bound b, meaning that the planning agent can only reason about higher-order knowledge to at most (modal) depth b. The algorithm makes use of a novel type of canonical b-bisimulation contraction guaranteeing unique minimal models with respect to b-bisimulation. We show our depth-bounded planning algorithm to be sound. Additionally, we show it to be complete with respect to planning tasks having a solution within bound b of reasoning depth (and hence the iterative bound-deepening variant is complete in the standard sense). For bound b of reasoning depth, the algorithm is shown to be (b + 1)-EXPTIME complete, and furthermore fixed-parameter tractable in the number of agents and atoms. We present both a tree search and a graph search variant of the algorithm, and we benchmark an implementation of the tree search version against a baseline epistemic planner.
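The depth bound rests on a standard fact: over finitely many atoms and agents, two pointed Kripke models satisfy the same formulas of modal depth at most b exactly when they are b-bisimilar. The Python sketch below checks b-bisimilarity directly from the recursive definition (atomic agreement, then forth and back conditions at depth b - 1). The dictionary encoding of models and the name `b_bisimilar` are illustrative assumptions; the sketch does not reproduce the paper's canonical b-bisimulation contraction or its planning algorithm.

```python
from typing import Dict, FrozenSet, Iterable, Set, Tuple

# Hypothetical encoding: a valuation maps each world to the atoms true there,
# and relations map agent -> world -> set of accessible worlds.
Valuation = Dict[str, FrozenSet[str]]
Relations = Dict[str, Dict[str, Set[str]]]


def b_bisimilar(val1: Valuation, rel1: Relations, w1: str,
                val2: Valuation, rel2: Relations, w2: str,
                b: int, agents: Iterable[str]) -> bool:
    """Check whether the pointed models (M1, w1) and (M2, w2) are b-bisimilar."""
    agents = list(agents)
    memo: Dict[Tuple[str, str, int], bool] = {}

    def bisim(u1: str, u2: str, k: int) -> bool:
        key = (u1, u2, k)
        if key in memo:
            return memo[key]
        # 0-bisimilarity: the two worlds agree on every atom.
        result = val1[u1] == val2[u2]
        if result and k > 0:
            for a in agents:
                succ1 = rel1.get(a, {}).get(u1, set())
                succ2 = rel2.get(a, {}).get(u2, set())
                # Forth: every a-successor of u1 is matched at depth k - 1.
                forth = all(any(bisim(v1, v2, k - 1) for v2 in succ2) for v1 in succ1)
                # Back: every a-successor of u2 is matched at depth k - 1.
                back = all(any(bisim(v1, v2, k - 1) for v1 in succ1) for v2 in succ2)
                if not (forth and back):
                    result = False
                    break
        memo[key] = result
        return result

    return bisim(w1, w2, b)


# Example: in M1 agent a is uncertain about p; in M2 agent a knows p.
val1 = {"w": frozenset({"p"}), "v": frozenset()}
rel1 = {"a": {"w": {"w", "v"}, "v": {"w", "v"}}}
val2 = {"u": frozenset({"p"})}
rel2 = {"a": {"u": {"u"}}}

print(b_bisimilar(val1, rel1, "w", val2, rel2, "u", 0, ["a"]))  # True
print(b_bisimilar(val1, rel1, "w", val2, rel2, "u", 1, ["a"]))  # False
```

At depth 0 only atoms are compared, so the two models agree; at depth 1 the formula K_a p separates them, because the ¬p world is accessible for a in M1.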

Attention! Dynamic Epistemic Logic Models of (In)attentive Agents

Mar 23, 2023

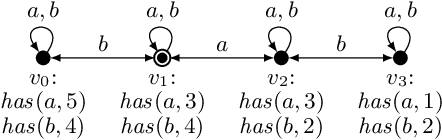

Abstract:Attention is the crucial cognitive ability that limits and selects what information we observe. Previous work by Bolander et al. (2016) proposes a model of attention based on dynamic epistemic logic (DEL) where agents are either fully attentive or not attentive at all. While introducing the realistic feature that inattentive agents believe nothing happens, the model does not represent the most essential aspect of attention: its selectivity. Here, we propose a generalization that allows for paying attention to subsets of atomic formulas. We introduce the corresponding logic for propositional attention, and show its axiomatization to be sound and complete. We then extend the framework to account for inattentive agents that, instead of assuming nothing happens, may default to a specific truth-value of what they failed to attend to (a sort of prior concerning the unattended atoms). This feature allows for a more cognitively plausible representation of the inattentional blindness phenomenon, where agents end up with false beliefs due to their failure to attend to conspicuous but unexpected events. Both versions of the model define attention-based learning through appropriate DEL event models based on a few clear edge principles. While the size of such event models grows exponentially both with the number of agents and the number of atoms, we introduce a new logical language for describing event models syntactically and show that using this language our event models can be represented linearly in the number of agents and atoms. Furthermore, representing our event models using this language is achieved by a straightforward formalisation of the aforementioned edge principles.
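Event models like these are applied to an epistemic model by the standard DEL product update: the new worlds are pairs (w, e) where w satisfies the precondition of e, and (w, e) is a-accessible to (v, f) iff w is a-accessible to v and e is a-accessible to f. The Python sketch below implements that update under simplifying assumptions (preconditions given as Python predicates on a world's atoms rather than as formulas, no postconditions, a hypothetical dictionary encoding) and uses it on a scenario in the spirit of Bolander et al. (2016): agent a attends to the revelation of p, while the inattentive agent b believes nothing happens. It illustrates only the update mechanism, not the paper's attention-based event models or its succinct language.

```python
from itertools import product
from typing import Callable, Dict, FrozenSet, List, Set

World = str
Event = str
Atoms = FrozenSet[str]


def product_update(worlds: List[World], val: Dict[World, Atoms],
                   rel: Dict[str, Dict[World, Set[World]]],
                   events: List[Event], pre: Dict[Event, Callable[[Atoms], bool]],
                   erel: Dict[str, Dict[Event, Set[Event]]]):
    """Standard product update (preconditions only, no postconditions)."""
    # New worlds: pairs (w, e) such that w satisfies the precondition of e.
    new_worlds = [(w, e) for w, e in product(worlds, events) if pre[e](val[w])]
    # Without postconditions, each pair keeps the valuation of its world.
    new_val = {(w, e): val[w] for (w, e) in new_worlds}
    # (w, e) R_a (v, f) iff w R_a v in the model and e Q_a f in the event model.
    new_rel = {
        a: {(w, e): {(v, f) for (v, f) in new_worlds
                     if v in rel[a][w] and f in erel[a][e]}
            for (w, e) in new_worlds}
        for a in rel
    }
    return new_worlds, new_val, new_rel


def believes(val, rel, agent, world, atom) -> bool:
    """The agent believes atom at world if atom holds in all accessible worlds."""
    return all(atom in val[x] for x in rel[agent][world])


# Initial model: neither a nor b knows whether p; the actual world is "w".
worlds = ["w", "v"]
val = {"w": frozenset({"p"}), "v": frozenset()}
rel = {ag: {"w": {"w", "v"}, "v": {"w", "v"}} for ag in ("a", "b")}

# Event "e1" reveals p; "e0" is the trivial event "nothing happens".
events = ["e1", "e0"]
pre = {"e1": lambda atoms: "p" in atoms, "e0": lambda atoms: True}
# a attends and tells the events apart; b, inattentive, believes e0 occurred.
erel = {"a": {"e1": {"e1"}, "e0": {"e0"}},
        "b": {"e1": {"e0"}, "e0": {"e0"}}}

W2, V2, R2 = product_update(worlds, val, rel, events, pre, erel)
print(believes(V2, R2, "a", ("w", "e1"), "p"))  # True
print(believes(V2, R2, "b", ("w", "e1"), "p"))  # False
```

Because b's arrows from e1 lead only to e0, b's beliefs at the actual world (w, e1) are those of the unchanged initial model, which is how the "nothing happens" default shows up in the product.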

Learning to Act and Observe in Partially Observable Domains

Sep 13, 2021

Abstract:We consider a learning agent in a partially observable environment, with which the agent has never interacted before, and about which it learns both what it can observe and how its actions affect the environment. The agent can learn about this domain from experience gathered by taking actions in the domain and observing their results. We present learning algorithms capable of learning as much as possible (in a well-defined sense) both about what is directly observable and about what actions do in the domain, given the learner's observational constraints. We differentiate the level of domain knowledge attained by each algorithm, and characterize the type of observations required to reach it. The algorithms use dynamic epistemic logic (DEL) to represent the learned domain information symbolically. Our work continues that of Bolander and Gierasimczuk (2015), which developed DEL-based learning algorithms to learn domain information in fully observable domains.

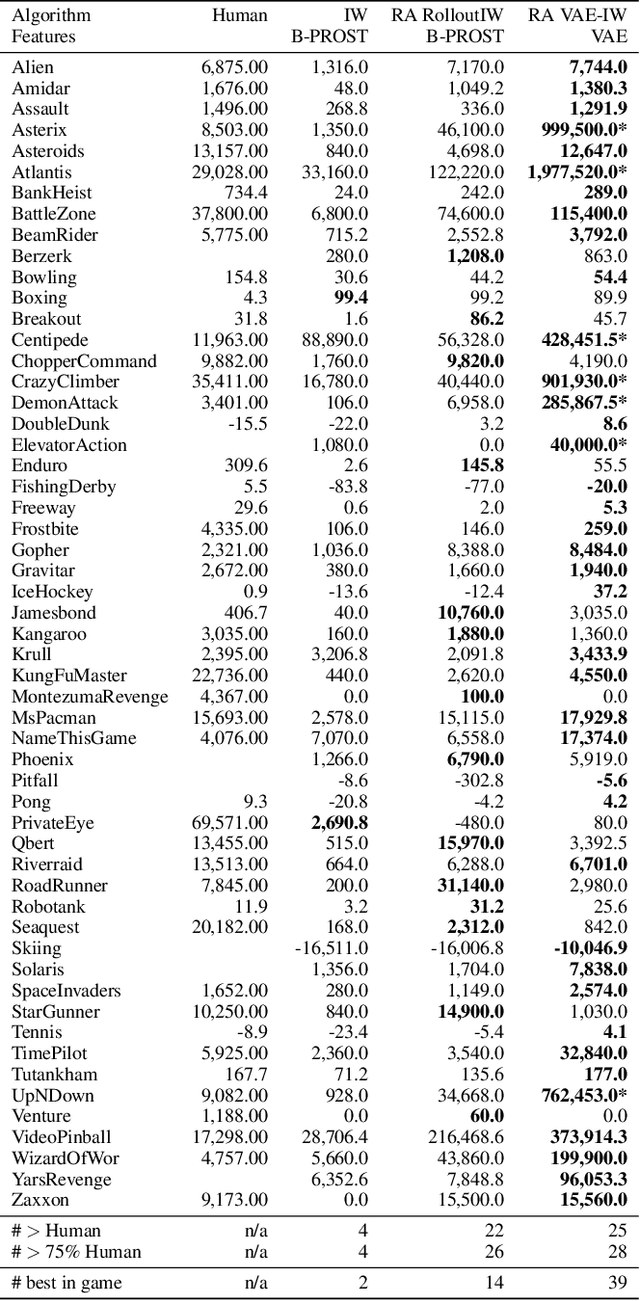

Planning From Pixels in Atari With Learned Symbolic Representations

Dec 16, 2020

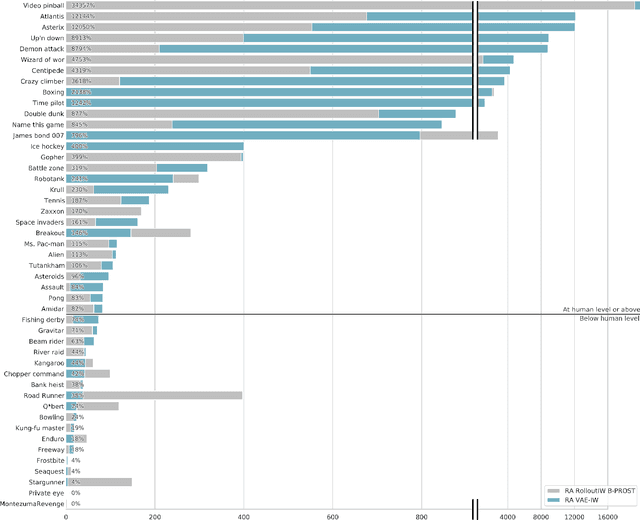

Abstract:Width-based planning methods have been shown to yield state-of-the-art performance in the Atari 2600 domain using pixel input. One successful approach, RolloutIW, represents states with the B-PROST boolean feature set. An augmented version of RolloutIW, $\pi$-IW, shows that learned features can be competitive with handcrafted ones for width-based search. In this paper, we leverage variational autoencoders (VAEs) to learn features directly from pixels in a principled manner, and without supervision. The inference model of the trained VAEs extracts boolean features from pixels, and RolloutIW plans with these features. The resulting combination outperforms the original RolloutIW and human professional play on Atari 2600 and drastically reduces the size of the feature set.
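The pruning rule behind width-based search can be shown with plain breadth-first IW(1): a newly generated state is kept only if it makes some feature atom true for the first time in the search. The Python sketch below implements that textbook rule; it is not RolloutIW, $\pi$-IW, or the VAE-based pipeline. The `features` callback stands in for the learned boolean features, and it and the toy corridor domain are hypothetical.

```python
from collections import deque
from typing import Callable, Hashable, Iterable, List, Optional, Tuple


def iw1(initial: Hashable,
        successors: Callable[[Hashable], Iterable[Tuple[str, Hashable]]],
        features: Callable[[Hashable], Iterable[Hashable]],
        is_goal: Callable[[Hashable], bool]) -> Optional[List[str]]:
    """Breadth-first IW(1): prune every generated state with novelty > 1,
    i.e. every state that makes no feature atom true for the first time."""
    if is_goal(initial):
        return []
    seen = set(features(initial))  # atoms made true so far in the search
    queue = deque([(initial, [])])
    while queue:
        state, plan = queue.popleft()
        for action, nxt in successors(state):
            novel = set(features(nxt)) - seen
            if not novel:
                continue  # nothing new: prune this state
            seen |= novel
            if is_goal(nxt):
                return plan + [action]
            queue.append((nxt, plan + [action]))
    return None  # search space exhausted under the pruning rule


# Toy corridor with cells 0..5; one feature atom ("at", i) per cell.
def corridor_successors(x: int):
    return [("L", max(x - 1, 0)), ("R", min(x + 1, 5))]


def corridor_features(x: int):
    return [("at", x)]


print(iw1(0, corridor_successors, corridor_features, lambda x: x == 4))
# ['R', 'R', 'R', 'R']
```

IW(1) prunes aggressively and is therefore incomplete in general: it can miss solutions to problems whose width is greater than 1.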

Cooperative Epistemic Multi-Agent Planning for Implicit Coordination

Mar 07, 2017

Abstract:Epistemic planning can be used for decision making in multi-agent situations with distributed knowledge and capabilities. Recently, Dynamic Epistemic Logic (DEL) has been shown to provide a very natural and expressive framework for epistemic planning. We extend the DEL-based epistemic planning framework to include perspective shifts, allowing us to define new notions of sequential and conditional planning with implicit coordination. With these, it is possible to solve planning tasks with joint goals in a decentralized manner without the agents having to negotiate about and commit to a joint policy at plan time. First we define the central planning notions and sketch the implementation of a planning system built on those notions. Afterwards we provide some case studies in order to evaluate the planner empirically and to show that the concept is useful for multi-agent systems in practice.

* In Proceedings M4M9 2017, arXiv:1703.01736

A Gentle Introduction to Epistemic Planning: The DEL Approach

Mar 07, 2017

Abstract:Epistemic planning can be used for decision making in multi-agent situations with distributed knowledge and capabilities. Dynamic Epistemic Logic (DEL) has been shown to provide a very natural and expressive framework for epistemic planning. In this paper, we aim to give an accessible introduction to DEL-based epistemic planning. The paper starts with the most classical framework for planning, STRIPS, and then moves towards epistemic planning in a number of smaller steps, where each step is motivated by the need to be able to model more complex planning scenarios.

* In Proceedings M4M9 2017, arXiv:1703.01736

Bisimulation and expressivity for conditional belief, degrees of belief, and safe belief

Feb 25, 2016

Abstract:Plausibility models are Kripke models that agents use to reason about knowledge and belief, both of themselves and of each other. Such models are used to interpret the notions of conditional belief, degrees of belief, and safe belief. The logic of conditional belief contains that modality and also the knowledge modality, and similarly for the logic of degrees of belief and the logic of safe belief. With respect to these logics, plausibility models may contain too much information. A proper notion of bisimulation that characterises them is therefore required. We define that notion of bisimulation and prove the required characterisations: on the class of image-finite and preimage-finite models (with respect to the plausibility relation), two pointed Kripke models are modally equivalent in any of the three logics if and only if they are bisimilar. As a result, the information content of such a model can be similarly expressed in the logic of conditional belief, or the logic of degrees of belief, or that of safe belief. We found this a surprising result. Still, that does not mean that the logics are equally expressive: the logics of conditional and degrees of belief are incomparable, the logics of degrees of belief and safe belief are incomparable, while the logic of safe belief is more expressive than the logic of conditional belief. In view of the result on bisimulation characterisation, this is an equally surprising result. We hope our insights may contribute to the growing community of formal epistemology and to work on the relation between qualitative and quantitative modelling.

Learning Action Models: Qualitative Approach

Jul 15, 2015

Abstract:In dynamic epistemic logic, actions are described using action models. In this paper we introduce a framework for studying learnability of action models from observations. We present first results concerning propositional action models. First we check two basic learnability criteria: finite identifiability (conclusively inferring the appropriate action model in finite time) and identifiability in the limit (inconclusive convergence to the right action model). We show that deterministic actions are finitely identifiable, while non-deterministic actions require more learning power: they are identifiable in the limit. We then move on to a particular learning method, which proceeds via restriction of a space of events within a learning-specific action model. This way of learning closely resembles the well-known update method from dynamic epistemic logic. We introduce several different learning methods suited for finite identifiability of particular types of deterministic actions.
