Nina Gierasimczuk

Technical University of Denmark

SymDQN: Symbolic Knowledge and Reasoning in Neural Network-based Reinforcement Learning

Apr 03, 2025

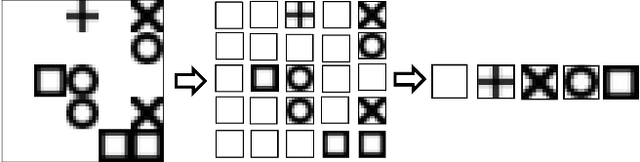

Abstract: We propose a learning architecture that allows symbolic control and guidance in reinforcement learning with deep neural networks. We introduce SymDQN, a novel modular approach that augments the existing Dueling Deep Q-Networks (DuelDQN) architecture with modules based on the neuro-symbolic framework of Logic Tensor Networks (LTNs). The modules guide action policy learning and allow reinforcement learning agents to display behaviour consistent with reasoning about the environment. Our experiment is an ablation study performed on the modules. It is conducted in a reinforcement learning environment of a 5x5 grid navigated by an agent that encounters various shapes, each associated with a given reward. The underlying DuelDQN attempts to learn the optimal behaviour of the agent in this environment, while the modules facilitate shape recognition and reward prediction. We show that our architecture significantly improves learning, both in terms of performance and the precision of the agent. The modularity of SymDQN allows reflecting on the intricacies and complexities of combining neural and symbolic approaches in reinforcement learning.

Cognitive Bias and Belief Revision

Jul 11, 2023

Abstract: In this paper we formalise three types of cognitive bias within the framework of belief revision: confirmation bias, framing bias, and anchoring bias. We interpret them generally, as restrictions on the process of iterated revision, and we apply them to three well-known belief revision methods: conditioning, lexicographic revision, and minimal revision. We investigate the reliability of biased belief revision methods in truth tracking. We also run computer simulations to assess the performance of biased belief revision in random scenarios.

* In Proceedings TARK 2023, arXiv:2307.04005
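
The three revision methods named in the abstract above can be illustrated with a minimal Python sketch. It assumes a common textbook representation that is not necessarily the one used in the paper: a belief state is a ranking of possible worlds (lower rank means more plausible) and a piece of evidence is the set of worlds where it holds. The names `Ranking`, `normalise`, `conditioning`, `lexicographic` and `minimal` are hypothetical, and the cognitive biases formalised in the paper are not modelled here.

```python
from typing import Dict, Hashable, Set

World = Hashable
Ranking = Dict[World, int]  # assumption: lower rank = more plausible


def normalise(ranking: Ranking) -> Ranking:
    """Re-number ranks as 0, 1, 2, ... while preserving the order."""
    levels = sorted(set(ranking.values()))
    index = {rank: i for i, rank in enumerate(levels)}
    return {world: index[rank] for world, rank in ranking.items()}


def conditioning(ranking: Ranking, evidence: Set[World]) -> Ranking:
    """Delete every world that contradicts the evidence."""
    return normalise({w: r for w, r in ranking.items() if w in evidence})


def lexicographic(ranking: Ranking, evidence: Set[World]) -> Ranking:
    """Put all evidence worlds above all other worlds, keeping the order inside each group."""
    if not ranking:
        return {}
    offset = max(ranking.values()) + 1
    shifted = {w: r if w in evidence else r + offset for w, r in ranking.items()}
    return normalise(shifted)


def minimal(ranking: Ranking, evidence: Set[World]) -> Ranking:
    """Promote only the most plausible evidence worlds to the top; leave the rest as is."""
    inside = [r for w, r in ranking.items() if w in evidence]
    if not inside:
        return normalise(ranking)
    best = min(inside)
    top = min(ranking.values()) - 1  # a rank strictly better than any existing one
    promoted = {
        w: top if (w in evidence and r == best) else r
        for w, r in ranking.items()
    }
    return normalise(promoted)


prior = {"a": 0, "b": 1, "c": 2, "d": 2}
evidence = {"b", "c"}
after_conditioning = conditioning(prior, evidence)
after_lexicographic = lexicographic(prior, evidence)
after_minimal = minimal(prior, evidence)
```

In this toy run, conditioning discards `a` and `d` altogether, lexicographic revision keeps them but ranks them below both `b` and `c`, and minimal revision only moves `b` to the top while `a`, `c` and `d` keep their relative positions.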

Learning to Act and Observe in Partially Observable Domains

Sep 13, 2021

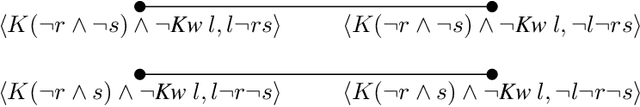

Abstract: We consider a learning agent in a partially observable environment, with which the agent has never interacted before, and about which it learns both what it can observe and how its actions affect the environment. The agent can learn about this domain from experience gathered by taking actions in the domain and observing their results. We present learning algorithms capable of learning as much as possible (in a well-defined sense) both about what is directly observable and about what actions do in the domain, given the learner's observational constraints. We differentiate the level of domain knowledge attained by each algorithm, and characterize the type of observations required to reach it. The algorithms use dynamic epistemic logic (DEL) to represent the learned domain information symbolically. Our work continues that of Bolander and Gierasimczuk (2015), which developed DEL-based learning algorithms to learn domain information in fully observable domains.

On the Solvability of Inductive Problems: A Study in Epistemic Topology

Jun 24, 2016

Abstract: We investigate the issues of inductive problem-solving and learning by doxastic agents. We provide topological characterizations of solvability and learnability, and we use them to prove that AGM-style belief revision is "universal", i.e., that every solvable problem is solvable by AGM conditioning.

* In Proceedings TARK 2015, arXiv:1606.07295

Learning Action Models: Qualitative Approach

Jul 15, 2015

Abstract: In dynamic epistemic logic, actions are described using action models. In this paper we introduce a framework for studying learnability of action models from observations. We present first results concerning propositional action models. First we check two basic learnability criteria: finite identifiability (conclusively inferring the appropriate action model in finite time) and identifiability in the limit (inconclusive convergence to the right action model). We show that deterministic actions are finitely identifiable, while non-deterministic actions require more learning power: they are identifiable in the limit. We then move on to a particular learning method, which proceeds via restriction of a space of events within a learning-specific action model. This way of learning closely resembles the well-known update method from dynamic epistemic logic. We introduce several different learning methods suited for finite identifiability of particular types of deterministic actions.
