Thirimachos Bourlai

A Brief Survey on Person Recognition at a Distance

Dec 17, 2022

Abstract:Person recognition at a distance entails recognizing the identity of an individual appearing in images or videos collected by long-range imaging systems such as drones or surveillance cameras. Despite recent advances in deep convolutional neural networks (DCNNs), this remains challenging. Images or videos collected by long-range cameras often suffer from atmospheric turbulence, blur, low-resolution, unconstrained poses, and poor illumination. In this paper, we provide a brief survey of recent advances in person recognition at a distance. In particular, we review recent work in multi-spectral face verification, person re-identification, and gait-based analysis techniques. Furthermore, we discuss the merits and drawbacks of existing approaches and identify important, yet under explored challenges for deploying remote person recognition systems in-the-wild.

The Multiscenario Multienvironment BioSecure Multimodal Database (BMDB)

Nov 17, 2021

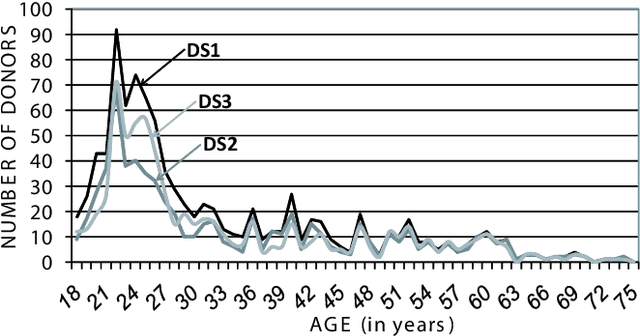

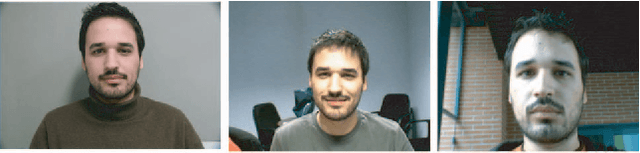

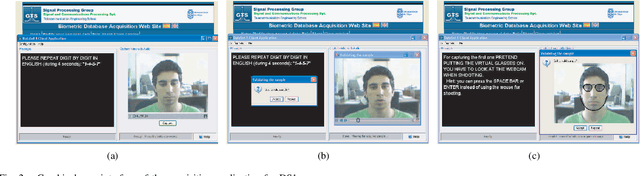

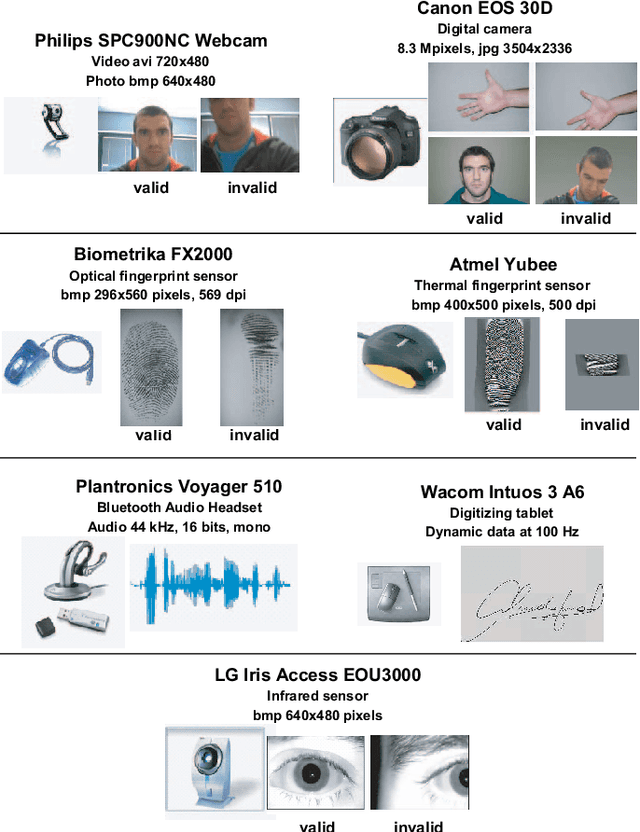

Abstract:A new multimodal biometric database designed and acquired within the framework of the European BioSecure Network of Excellence is presented. It is comprised of more than 600 individuals acquired simultaneously in three scenarios: 1) over the Internet, 2) in an office environment with desktop PC, and 3) in indoor/outdoor environments with mobile portable hardware. The three scenarios include a common part of audio/video data. Also, signature and fingerprint data have been acquired both with desktop PC and mobile portable hardware. Additionally, hand and iris data were acquired in the second scenario using desktop PC. Acquisition has been conducted by 11 European institutions. Additional features of the BioSecure Multimodal Database (BMDB) are: two acquisition sessions, several sensors in certain modalities, balanced gender and age distributions, multimodal realistic scenarios with simple and quick tasks per modality, cross-European diversity, availability of demographic data, and compatibility with other multimodal databases. The novel acquisition conditions of the BMDB allow us to perform new challenging research and evaluation of either monomodal or multimodal biometric systems, as in the recent BioSecure Multimodal Evaluation campaign. A description of this campaign including baseline results of individual modalities from the new database is also given. The database is expected to be available for research purposes through the BioSecure Association during 2008

Benchmarking Quality-Dependent and Cost-Sensitive Score-Level Multimodal Biometric Fusion Algorithms

Nov 17, 2021

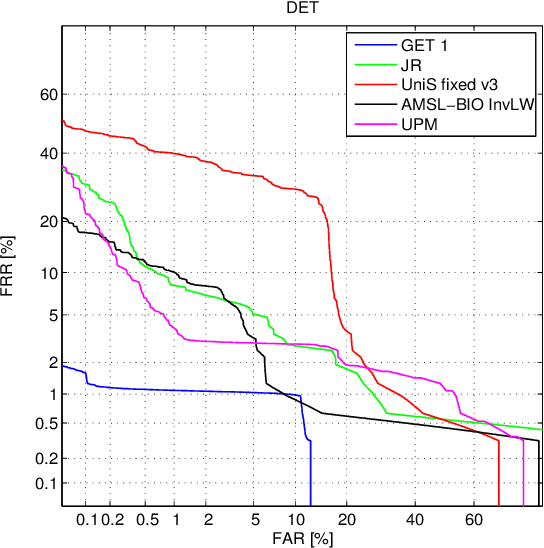

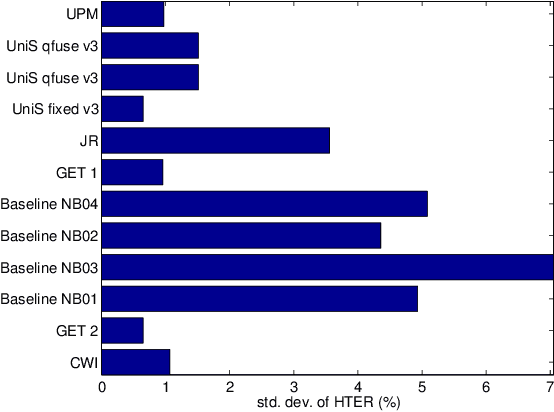

Abstract:Automatically verifying the identity of a person by means of biometrics is an important application in day-to-day activities such as accessing banking services and security control in airports. To increase the system reliability, several biometric devices are often used. Such a combined system is known as a multimodal biometric system. This paper reports a benchmarking study carried out within the framework of the BioSecure DS2 (Access Control) evaluation campaign organized by the University of Surrey, involving face, fingerprint, and iris biometrics for person authentication, targeting the application of physical access control in a medium-size establishment with some 500 persons. While multimodal biometrics is a well-investigated subject, there exists no benchmark for a fusion algorithm comparison. Working towards this goal, we designed two sets of experiments: quality-dependent and cost-sensitive evaluation. The quality-dependent evaluation aims at assessing how well fusion algorithms can perform under changing quality of raw images principally due to change of devices. The cost-sensitive evaluation, on the other hand, investigates how well a fusion algorithm can perform given restricted computation and in the presence of software and hardware failures, resulting in errors such as failure-to-acquire and failure-to-match. Since multiple capturing devices are available, a fusion algorithm should be able to handle this nonideal but nevertheless realistic scenario. In both evaluations, each fusion algorithm is provided with scores from each biometric comparison subsystem as well as the quality measures of both template and query data. The response to the call of the campaign proved very encouraging, with the submission of 22 fusion systems. To the best of our knowledge, this is the first attempt to benchmark quality-based multimodal fusion algorithms.

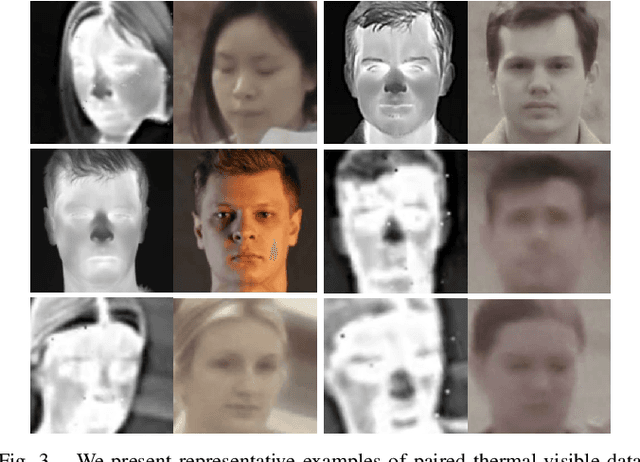

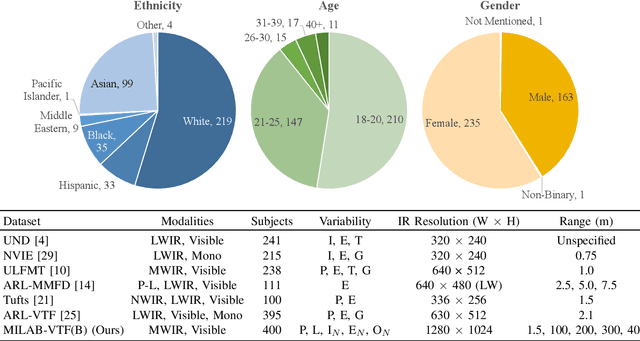

A Synthesis-Based Approach for Thermal-to-Visible Face Verification

Aug 21, 2021

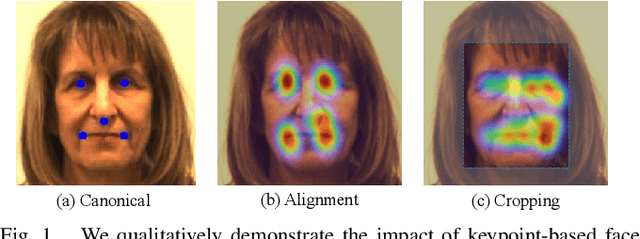

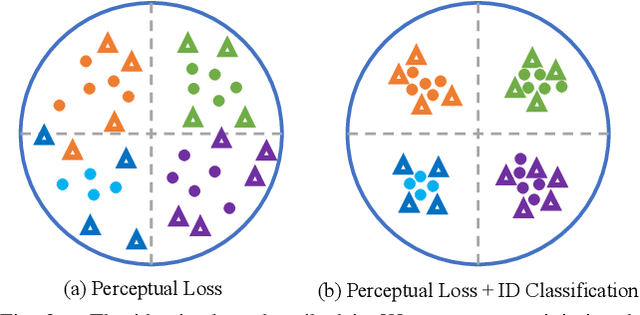

Abstract:In recent years, visible-spectrum face verification systems have been shown to match expert forensic examiner recognition performance. However, such systems are ineffective in low-light and nighttime conditions. Thermal face imagery, which captures body heat emissions, effectively augments the visible spectrum, capturing discriminative facial features in scenes with limited illumination. Due to the increased cost and difficulty of obtaining diverse, paired thermal and visible spectrum datasets, algorithms and large-scale benchmarks for low-light recognition are limited. This paper presents an algorithm that achieves state-of-the-art performance on both the ARL-VTF and TUFTS multi-spectral face datasets. Importantly, we study the impact of face alignment, pixel-level correspondence, and identity classification with label smoothing for multi-spectral face synthesis and verification. We show that our proposed method is widely applicable, robust, and highly effective. In addition, we show that the proposed method significantly outperforms face frontalization methods on profile-to-frontal verification. Finally, we present MILAB-VTF(B), a challenging multi-spectral face dataset that is composed of paired thermal and visible videos. To the best of our knowledge, with face data from 400 subjects, this dataset represents the most extensive collection of publicly available indoor and long-range outdoor thermal-visible face imagery. Lastly, we show that our end-to-end thermal-to-visible face verification system provides strong performance on the MILAB-VTF(B) dataset.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge