Snehal Raj

Bayesian Quantum Orthogonal Neural Networks for Anomaly Detection

Apr 25, 2025Abstract:Identification of defects or anomalies in 3D objects is a crucial task to ensure correct functionality. In this work, we combine Bayesian learning with recent developments in quantum and quantum-inspired machine learning, specifically orthogonal neural networks, to tackle this anomaly detection problem for an industrially relevant use case. Bayesian learning enables uncertainty quantification of predictions, while orthogonality in weight matrices enables smooth training. We develop orthogonal (quantum) versions of 3D convolutional neural networks and show that these models can successfully detect anomalies in 3D objects. To test the feasibility of incorporating quantum computers into a quantum-enhanced anomaly detection pipeline, we perform hardware experiments with our models on IBM's 127-qubit Brisbane device, testing the effect of noise and limited measurement shots.

Hyper Compressed Fine-Tuning of Large Foundation Models with Quantum Inspired Adapters

Feb 10, 2025Abstract:Fine-tuning pre-trained large foundation models for specific tasks has become increasingly challenging due to the computational and storage demands associated with full parameter updates. Parameter-Efficient Fine-Tuning (PEFT) methods address this issue by updating only a small subset of model parameters using adapter modules. In this work, we propose \emph{Quantum-Inspired Adapters}, a PEFT approach inspired by Hamming-weight preserving quantum circuits from quantum machine learning literature. These models can be both expressive and parameter-efficient by operating in a combinatorially large space while simultaneously preserving orthogonality in weight parameters. We test our proposed adapters by adapting large language models and large vision transformers on benchmark datasets. Our method can achieve 99.2\% of the performance of existing fine-tuning methods such LoRA with a 44x parameter compression on language understanding datasets like GLUE and VTAB. Compared to existing orthogonal fine-tuning methods such as OFT or BOFT, we achieve 98\% relative performance with 25x fewer parameters. This demonstrates competitive performance paired with a significant reduction in trainable parameters. Through ablation studies, we determine that combining multiple Hamming-weight orders with orthogonality and matrix compounding are essential for performant fine-tuning. Our findings suggest that Quantum-Inspired Adapters offer a promising direction for efficient adaptation of language and vision models in resource-constrained environments.

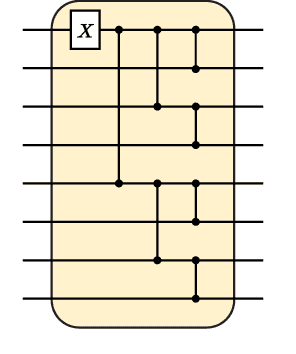

Training-efficient density quantum machine learning

May 30, 2024Abstract:Quantum machine learning requires powerful, flexible and efficiently trainable models to be successful in solving challenging problems. In this work, we present density quantum neural networks, a learning model incorporating randomisation over a set of trainable unitaries. These models generalise quantum neural networks using parameterised quantum circuits, and allow a trade-off between expressibility and efficient trainability, particularly on quantum hardware. We demonstrate the flexibility of the formalism by applying it to two recently proposed model families. The first are commuting-block quantum neural networks (QNNs) which are efficiently trainable but may be limited in expressibility. The second are orthogonal (Hamming-weight preserving) quantum neural networks which provide well-defined and interpretable transformations on data but are challenging to train at scale on quantum devices. Density commuting QNNs improve capacity with minimal gradient complexity overhead, and density orthogonal neural networks admit a quadratic-to-constant gradient query advantage with minimal to no performance loss. We conduct numerical experiments on synthetic translationally invariant data and MNIST image data with hyperparameter optimisation to support our findings. Finally, we discuss the connection to post-variational quantum neural networks, measurement-based quantum machine learning and the dropout mechanism.

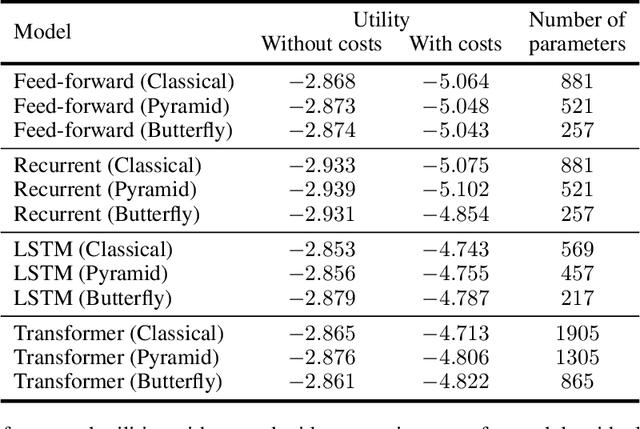

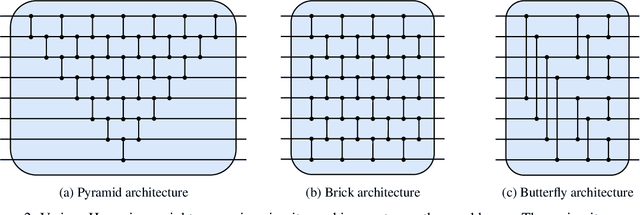

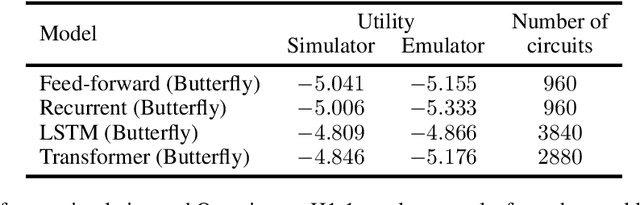

Quantum Deep Hedging

Mar 29, 2023

Abstract:Quantum machine learning has the potential for a transformative impact across industry sectors and in particular in finance. In our work we look at the problem of hedging where deep reinforcement learning offers a powerful framework for real markets. We develop quantum reinforcement learning methods based on policy-search and distributional actor-critic algorithms that use quantum neural network architectures with orthogonal and compound layers for the policy and value functions. We prove that the quantum neural networks we use are trainable, and we perform extensive simulations that show that quantum models can reduce the number of trainable parameters while achieving comparable performance and that the distributional approach obtains better performance than other standard approaches, both classical and quantum. We successfully implement the proposed models on a trapped-ion quantum processor, utilizing circuits with up to $16$ qubits, and observe performance that agrees well with noiseless simulation. Our quantum techniques are general and can be applied to other reinforcement learning problems beyond hedging.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge