Shu Yu

LINA: Learning INterventions Adaptively for Physical Alignment and Generalization in Diffusion Models

Dec 15, 2025

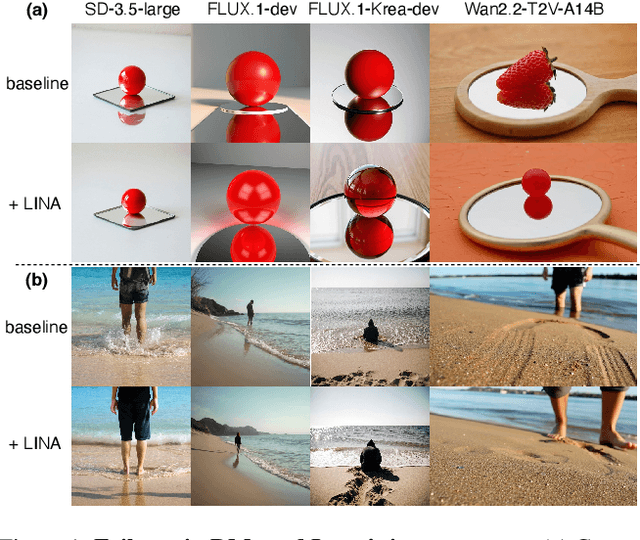

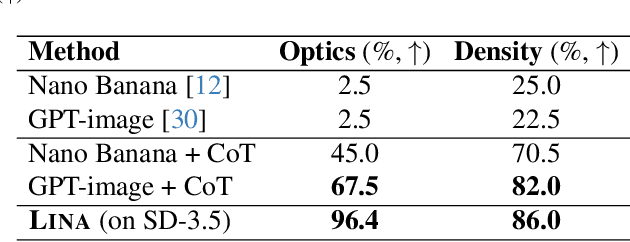

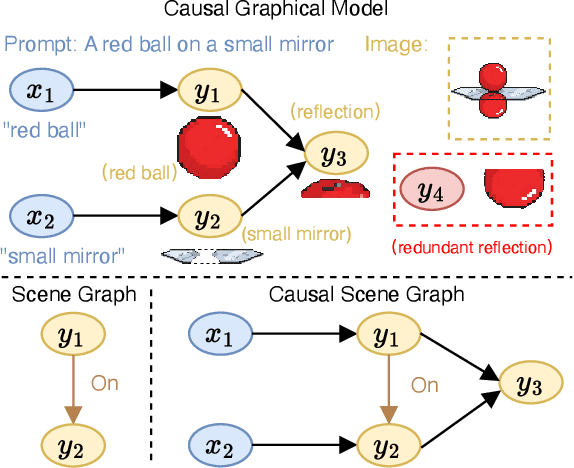

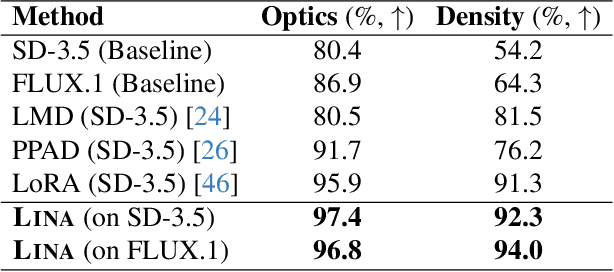

Abstract:Diffusion models (DMs) have achieved remarkable success in image and video generation. However, they still struggle with (1) physical alignment and (2) out-of-distribution (OOD) instruction following. We argue that these issues stem from the models' failure to learn causal directions and to disentangle causal factors for novel recombination. We introduce the Causal Scene Graph (CSG) and the Physical Alignment Probe (PAP) dataset to enable diagnostic interventions. This analysis yields three key insights. First, DMs struggle with multi-hop reasoning for elements not explicitly determined in the prompt. Second, the prompt embedding contains disentangled representations for texture and physics. Third, visual causal structure is disproportionately established during the initial, computationally limited denoising steps. Based on these findings, we introduce LINA (Learning INterventions Adaptively), a novel framework that learns to predict prompt-specific interventions, which employs (1) targeted guidance in the prompt and visual latent spaces, and (2) a reallocated, causality-aware denoising schedule. Our approach enforces both physical alignment and OOD instruction following in image and video DMs, achieving state-of-the-art performance on challenging causal generation tasks and the Winoground dataset. Our project page is at https://opencausalab.github.io/LINA.

Exploring Consciousness in LLMs: A Systematic Survey of Theories, Implementations, and Frontier Risks

May 26, 2025

Abstract:Consciousness stands as one of the most profound and distinguishing features of the human mind, fundamentally shaping our understanding of existence and agency. As large language models (LLMs) develop at an unprecedented pace, questions concerning intelligence and consciousness have become increasingly significant. However, discourse on LLM consciousness remains largely unexplored territory. In this paper, we first clarify frequently conflated terminologies (e.g., LLM consciousness and LLM awareness). Then, we systematically organize and synthesize existing research on LLM consciousness from both theoretical and empirical perspectives. Furthermore, we highlight potential frontier risks that conscious LLMs might introduce. Finally, we discuss current challenges and outline future directions in this emerging field. The references discussed in this paper are organized at https://github.com/OpenCausaLab/Awesome-LLM-Consciousness.

ADAM: An Embodied Causal Agent in Open-World Environments

Oct 29, 2024

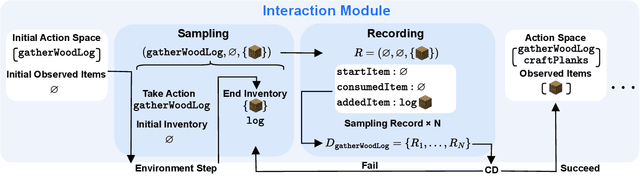

Abstract:In open-world environments like Minecraft, existing agents face challenges in continuously learning structured knowledge, particularly causality. These challenges stem from the opacity inherent in black-box models and an excessive reliance on prior knowledge during training, which impair their interpretability and generalization capability. To this end, we introduce ADAM, An emboDied causal Agent in Minecraft, that can autonomously navigate the open world, perceive multimodal contexts, learn causal world knowledge, and tackle complex tasks through lifelong learning. ADAM is empowered by four key components: 1) an interaction module, enabling the agent to execute actions while documenting the interaction processes; 2) a causal model module, tasked with constructing an ever-growing causal graph from scratch, which enhances interpretability and diminishes reliance on prior knowledge; 3) a controller module, comprising a planner, an actor, and a memory pool, which uses the learned causal graph to accomplish tasks; 4) a perception module, powered by multimodal large language models, which enables ADAM to perceive like a human player. Extensive experiments show that ADAM constructs an almost perfect causal graph from scratch, enabling efficient task decomposition and execution with strong interpretability. Notably, in our modified Minecraft games where no prior knowledge is available, ADAM maintains its performance and shows remarkable robustness and generalization capability. ADAM pioneers a novel paradigm that integrates causal methods and embodied agents in a synergistic manner. Our project page is at https://opencausalab.github.io/ADAM.

From Imitation to Introspection: Probing Self-Consciousness in Language Models

Oct 24, 2024

Abstract:Self-consciousness, the introspection of one's existence and thoughts, represents a high-level cognitive process. As language models advance at an unprecedented pace, a critical question arises: Are these models becoming self-conscious? Drawing upon insights from psychological and neural science, this work presents a practical definition of self-consciousness for language models and refines ten core concepts. Our work pioneers an investigation into self-consciousness in language models by, for the first time, leveraging causal structural games to establish the functional definitions of the ten core concepts. Based on our definitions, we conduct a comprehensive four-stage experiment: quantification (evaluation of ten leading models), representation (visualization of self-consciousness within the models), manipulation (modification of the models' representation), and acquisition (fine-tuning the models on core concepts). Our findings indicate that although models are in the early stages of developing self-consciousness, there is a discernible representation of certain concepts within their internal mechanisms. However, these representations of self-consciousness are hard to manipulate positively at the current stage, yet they can be acquired through targeted fine-tuning. Our datasets and code are at https://github.com/OpenCausaLab/SelfConsciousness.

An Adapter based Multi-label Pre-training for Speech Separation and Enhancement

Nov 11, 2022

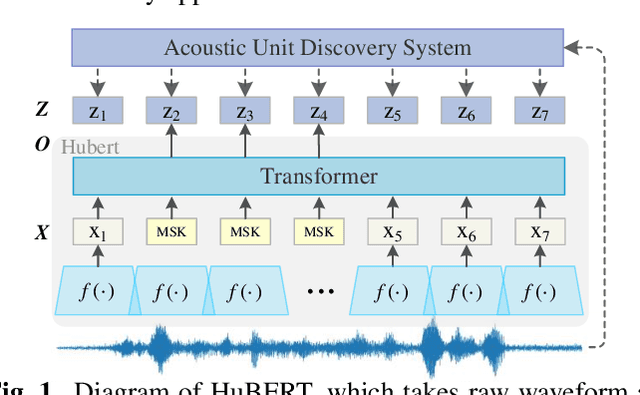

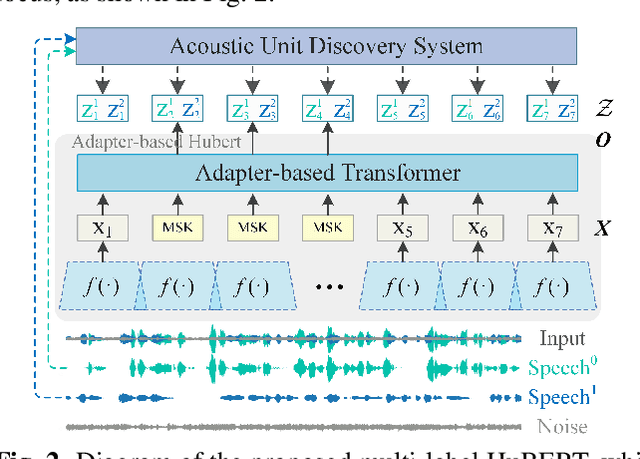

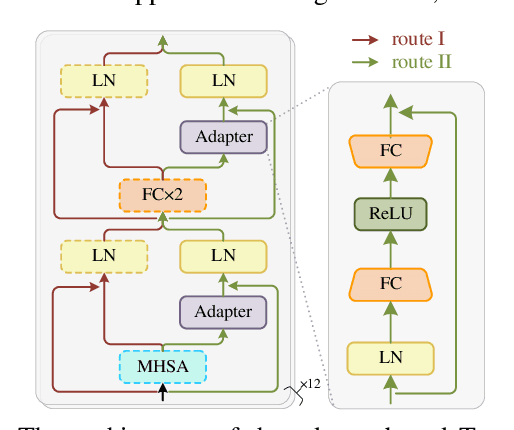

Abstract:In recent years, self-supervised learning (SSL) has achieved tremendous success in various speech tasks due to its power to extract representations from massive unlabeled data. However, compared with tasks such as speech recognition (ASR), the improvements from SSL representation in speech separation (SS) and enhancement (SE) are considerably smaller. Based on HuBERT, this work investigates improving the SSL model for SS and SE. We first update HuBERT's masked speech prediction (MSP) objective by integrating the separation and denoising terms, resulting in a multiple pseudo label pre-training scheme, which significantly improves HuBERT's performance on SS and SE but degrades the performance on ASR. To maintain its performance gain on ASR, we further propose an adapter-based architecture for HuBERT's Transformer encoder, where only a few parameters of each layer are adjusted to the multiple pseudo label MSP while other parameters remain frozen as default HuBERT. Experimental results show that our proposed adapter-based multiple pseudo label HuBERT yield consistent and significant performance improvements on SE, SS, and ASR tasks, with a faster pre-training speed, at only marginal parameters increase.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge