Sewoong Ahn

Simple Baseline for Weather Forecasting Using Spatiotemporal Context Aggregation Network

Dec 10, 2022

Abstract: Traditional weather forecasting relies on domain expertise and computationally intensive numerical simulation systems. Recently, with the development of data-driven approaches, weather forecasting based on deep learning has been receiving attention. Deep learning-based weather forecasting has made stunning progress, from various backbone studies using CNNs, RNNs, and Transformers to training strategies using weather observation datasets with auxiliary inputs. All of this progress has contributed to the field of weather forecasting; however, the many elements and complex structures of deep learning models prevent us from reaching physical interpretations. This paper proposes a SImple baseline with a spatiotemporal context Aggregation Network (SIANet) that achieved state-of-the-art results in 4 of the 5 benchmarks of W4C22. This simple but efficient structure uses only satellite images and CNNs in an end-to-end fashion, without a multi-model ensemble or fine-tuning. Because of this simplicity, SIANet can serve as a solid baseline that is easily applied to weather forecasting using deep learning.

Domain Generalization Strategy to Train Classifiers Robust to Spatial-Temporal Shift

Dec 10, 2022

Abstract: Deep learning-based weather prediction models have advanced significantly in recent years. However, data-driven models based on deep learning are difficult to apply to real-world applications because they are vulnerable to spatial-temporal shifts. A weather prediction task is especially susceptible to spatial-temporal shifts when the model is overfitted to locality and seasonality. In this paper, we propose a training strategy to make the weather prediction model robust to spatial-temporal shifts. We first analyze the effect of hyperparameters and augmentations of the existing training strategy on the spatial-temporal shift robustness of the model. Next, based on the analysis results, we propose an optimal combination of hyperparameters and augmentations, together with a test-time augmentation. We performed all experiments on the W4C22 Transfer dataset and achieved the best performance.
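As a rough illustration of the test-time augmentation mentioned in this abstract, the sketch below averages a model's predictions over flipped copies of the input. The abstract does not say which augmentations the authors used, so the choice of horizontal and vertical flips, the function name `predict_with_tta`, and the `model` callable are all assumptions made for this example.

```python
import numpy as np

def predict_with_tta(model, x):
    """Average predictions over flipped views of x (hypothetical helper).

    x: array of shape (..., H, W).
    model: any callable mapping such an array to an output of the same shape.
    The flip set is an assumption; the abstract does not list the transforms.
    """
    # Each entry pairs a forward transform with the inverse that maps the
    # prediction back to the original orientation.
    transforms = [
        (lambda a: a, lambda a: a),                                      # identity
        (lambda a: np.flip(a, axis=-1), lambda a: np.flip(a, axis=-1)),  # horizontal flip
        (lambda a: np.flip(a, axis=-2), lambda a: np.flip(a, axis=-2)),  # vertical flip
    ]
    preds = [inverse(model(forward(x))) for forward, inverse in transforms]
    return np.mean(preds, axis=0)
```

Each prediction is un-flipped before averaging so that all views are aligned pixel by pixel; a model that is already flip-equivariant would return the same output as a single forward pass.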

Learning Multiple Probabilistic Degradation Generators for Unsupervised Real World Image Super Resolution

Jan 26, 2022

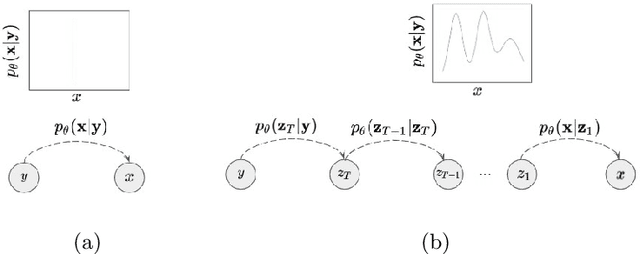

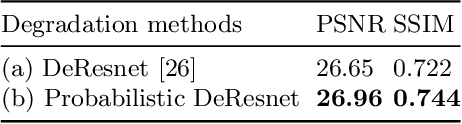

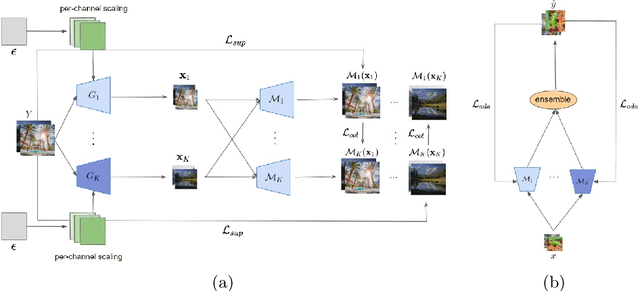

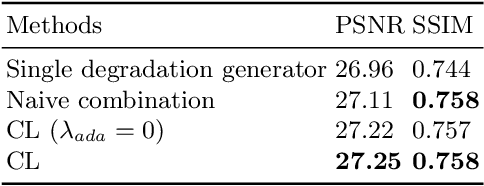

Abstract: Unsupervised real-world super resolution (USR) aims at restoring high-resolution (HR) images given low-resolution (LR) inputs when paired data is unavailable. One of the most common approaches is synthesizing noisy LR images using GANs and utilizing the synthetic dataset to train the model in a supervised manner. The goal of modeling the degradation generator is to approximate the distribution of LR images given an HR image. Previous works simply assumed the conditional distribution to be a delta function and learned a deterministic mapping from an HR image to an LR image. Instead, we propose a probabilistic degradation generator. Our degradation generator is a deep hierarchical latent variable model and is more suitable for modeling the complex distribution. Furthermore, we train multiple degradation generators to enhance the mode coverage and apply a novel collaborative learning scheme. We outperform several baselines on benchmark datasets in terms of PSNR and SSIM and demonstrate the robustness of our method on unseen data distributions.
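The contrast drawn in this abstract between a deterministic and a probabilistic degradation generator can be written out as follows. The notation ($G$, $z$, $\theta$, and the $L$-level factorization) is assumed for illustration and is not taken from the paper; in particular, whether the latent prior is conditioned on the HR image is an assumption.

```latex
% Deterministic degradation (delta-function assumption of previous works):
p(x_{LR} \mid x_{HR}) = \delta\bigl(x_{LR} - G(x_{HR})\bigr)

% Probabilistic degradation with a latent variable z (assumed notation):
p_\theta(x_{LR} \mid x_{HR}) = \int p_\theta(x_{LR} \mid x_{HR}, z)\, p_\theta(z \mid x_{HR})\, dz

% One possible hierarchical factorization of the latent, z = (z_1, \dots, z_L):
p_\theta(z \mid x_{HR}) = \prod_{l=1}^{L} p_\theta(z_l \mid z_{<l}, x_{HR})
```

Under the first form every HR image maps to exactly one LR image, whereas the second assigns probability to many plausible LR images for the same HR input.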

NTIRE 2021 Challenge on Perceptual Image Quality Assessment

May 11, 2021

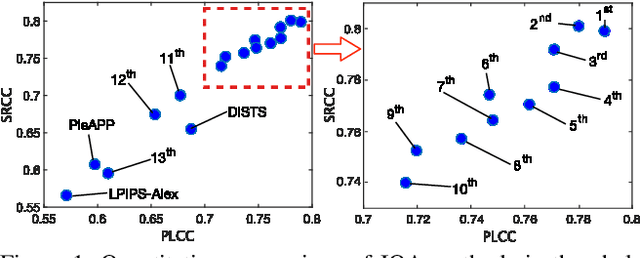

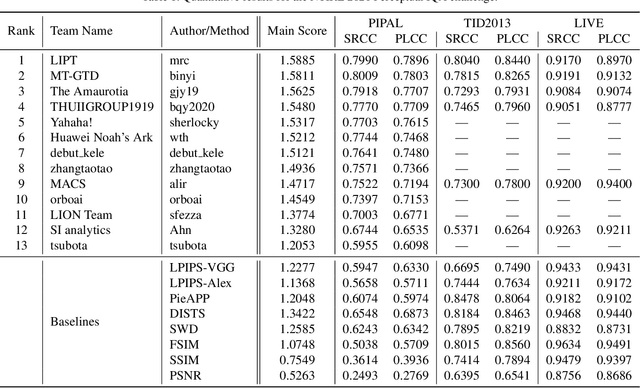

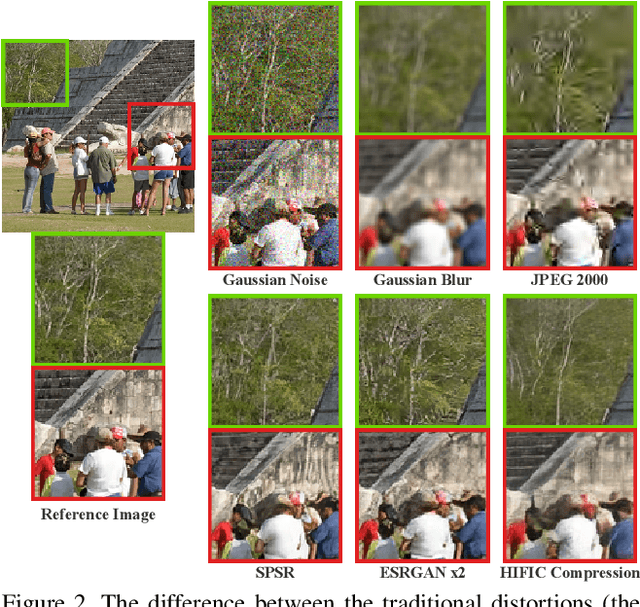

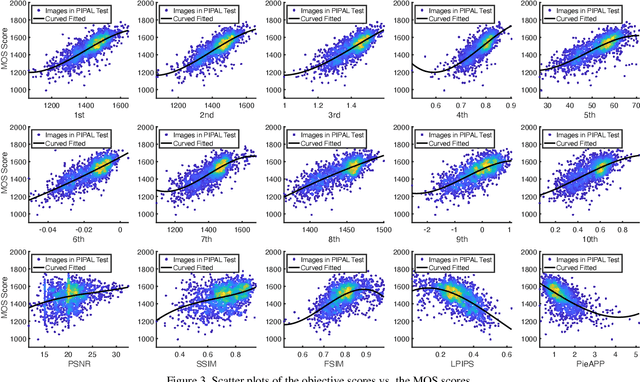

Abstract: This paper reports on the NTIRE 2021 challenge on perceptual image quality assessment (IQA), held in conjunction with the New Trends in Image Restoration and Enhancement (NTIRE) workshop at CVPR 2021. As a new type of image processing technology, perceptual image processing algorithms based on Generative Adversarial Networks (GANs) have produced images with more realistic textures. These output images have completely different characteristics from traditional distortions and thus pose a new challenge for IQA methods to evaluate their visual quality. In comparison with previous IQA challenges, the training and testing datasets in this challenge include the outputs of perceptual image processing algorithms and the corresponding subjective scores. Thus they can be used to develop and evaluate IQA methods on GAN-based distortions. The challenge had 270 registered participants in total. In the final testing stage, 13 participating teams submitted their models and fact sheets. Almost all of them achieved much better results than existing IQA methods, and the winning method demonstrated state-of-the-art performance.
