Sebastien Piat

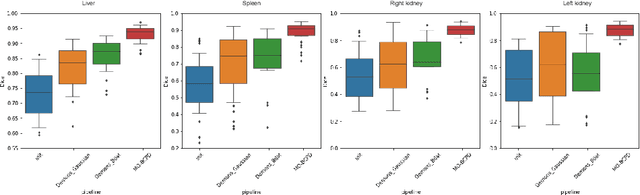

EchoApex: A General-Purpose Vision Foundation Model for Echocardiography

Oct 14, 2024

Abstract: Quantitative evaluation of echocardiography is essential for precise assessment of cardiac condition, monitoring disease progression, and guiding treatment decisions. The diverse nature of echo images, including variations in probe types, manufacturers, and pathologies, poses challenges for developing artificial intelligence models that can generalize across different clinical practices. We introduce EchoApex, the first general-purpose vision foundation model for echocardiography, with applications across a variety of clinical practices. Leveraging self-supervised learning, EchoApex is pretrained on over 20 million echo images from 11 clinical centres. By incorporating task-specific decoders and adapter modules, we demonstrate the effectiveness of EchoApex on 4 different kinds of clinical applications with 28 sub-tasks, including view classification, interactive structure segmentation, left ventricular hypertrophy detection, and automated ejection fraction estimation from view sequences. Compared to state-of-the-art task-specific models, EchoApex attains improved performance with a unified image encoding architecture, demonstrating the benefits of model pretraining at scale with in-domain data. Furthermore, EchoApex illustrates the potential for developing a general-purpose vision foundation model tailored specifically to echocardiography, capable of addressing a diverse range of clinical applications with high efficiency and efficacy.
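For reference, ejection fraction follows the standard clinical definition EF = (EDV - ESV) / EDV, where EDV and ESV are the end-diastolic and end-systolic left ventricular volumes. The sketch below is not EchoApex's method: it assumes per-frame LV volumes are already available (for example from a segmentation step) and that the clip covers at least one full cardiac cycle. The function name `ejection_fraction` and the sample volumes are hypothetical.

```python
def ejection_fraction(volumes_ml):
    """Estimate LVEF (%) from a sequence of per-frame left ventricular volumes.

    Assumption: the sequence spans at least one full cardiac cycle, so the
    largest volume approximates end-diastole and the smallest end-systole.
    """
    if not volumes_ml:
        raise ValueError("need at least one volume")
    edv = max(volumes_ml)  # end-diastolic volume (largest)
    esv = min(volumes_ml)  # end-systolic volume (smallest)
    if edv <= 0:
        raise ValueError("end-diastolic volume must be positive")
    return 100.0 * (edv - esv) / edv


# Hypothetical per-frame volumes (mL) over one beat
print(ejection_fraction([120.0, 110.0, 80.0, 50.0, 60.0, 100.0, 118.0]))
```

Taking the max and min over the clip is the simplest possible frame selection; a learned model may instead regress EF directly or detect end-diastolic and end-systolic frames explicitly.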

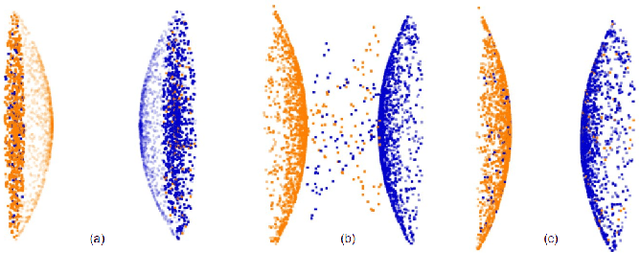

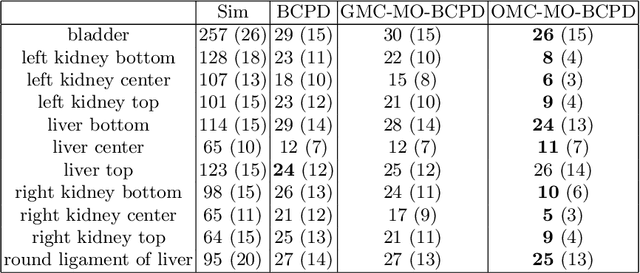

A multi-organ point cloud registration algorithm for abdominal CT registration

Mar 15, 2022

Abstract: Registering CT images of the abdomen is a crucial step for several tasks such as disease progression tracking or surgical planning. It is also a challenging step because the heterogeneous content of the human abdomen implies complex deformations. In this work, we focus on accurately registering a subset of organs of interest. We register organ surface point clouds, as may typically be extracted from an automatic segmentation pipeline, by extending the Bayesian Coherent Point Drift algorithm (BCPD). We introduce MO-BCPD, a multi-organ version of the BCPD algorithm which explicitly models three important aspects of this task: the individual elastic properties of each organ, inter-organ motion coherence, and segmentation inaccuracy. This model also provides an interpolation framework to estimate the deformation of the entire volume. We demonstrate the efficiency of our method by registering different patients from the LITS challenge dataset. The target registration error on anatomical landmarks is nearly halved with MO-BCPD compared to standard BCPD, while imposing the same constraints on the deformation of individual organs.
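Target registration error (TRE) is commonly computed as the mean Euclidean distance between landmarks mapped by the estimated deformation and their counterparts in the target image. The sketch below assumes that common definition; the paper's exact protocol (landmark set, units, aggregation) may differ, and the function name `target_registration_error` is hypothetical.

```python
import math


def target_registration_error(warped_landmarks, fixed_landmarks):
    """Mean Euclidean distance between paired 3D landmarks.

    warped_landmarks: moving-image landmarks after applying the estimated deformation.
    fixed_landmarks:  corresponding landmarks in the target (fixed) image.
    """
    if not fixed_landmarks or len(warped_landmarks) != len(fixed_landmarks):
        raise ValueError("landmark lists must be non-empty and paired")
    total = 0.0
    for p, q in zip(warped_landmarks, fixed_landmarks):
        total += math.dist(p, q)  # Euclidean distance between one landmark pair
    return total / len(fixed_landmarks)


# Hypothetical landmarks in mm: one off by 5 mm, one exact
print(target_registration_error([(0, 0, 0), (1, 1, 1)], [(3, 4, 0), (1, 1, 1)]))
```

Under this definition, halving the TRE means the warped landmarks land, on average, twice as close to their targets.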

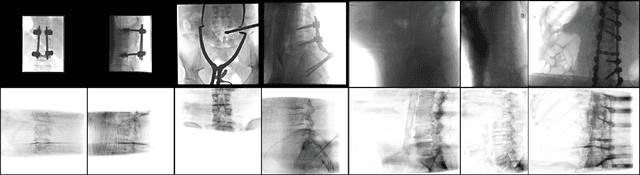

Dilated FCN for Multi-Agent 2D/3D Medical Image Registration

Nov 22, 2017

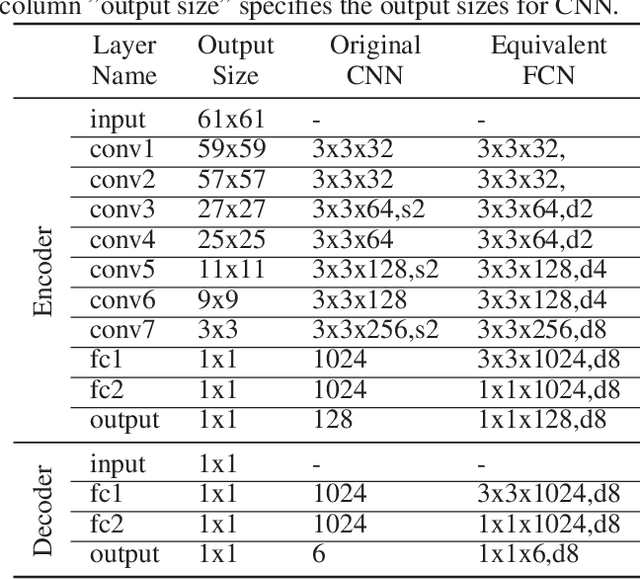

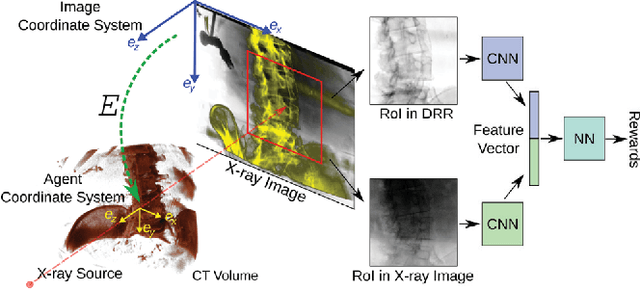

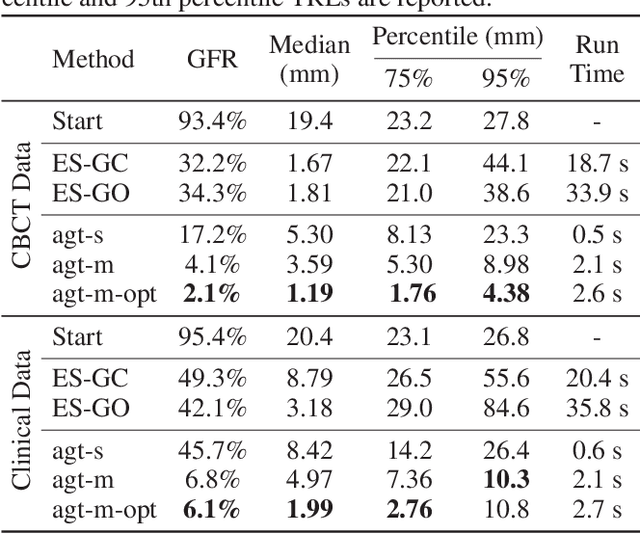

Abstract: 2D/3D image registration to align a 3D volume and 2D X-ray images is a challenging problem due to its ill-posed nature and the various artifacts present in 2D X-ray images. In this paper, we propose a multi-agent system with an auto attention mechanism for robust and efficient 2D/3D image registration. Specifically, an individual agent is trained with a dilated Fully Convolutional Network (FCN) to perform registration in a Markov Decision Process (MDP) by observing a local region, and the final action is then taken based on the proposals from multiple agents, weighted by their corresponding confidence levels. The contributions of this paper are threefold. First, we formulate 2D/3D registration as an MDP with observations, actions, and rewards properly defined with respect to X-ray imaging systems. Second, to handle various artifacts in 2D X-ray images, multiple local agents are employed efficiently via FCN-based structures, and an auto attention mechanism is proposed to favor the proposals from regions with more reliable visual cues. Third, a dilated FCN-based training mechanism is proposed to significantly reduce the degrees of freedom in the simulation of the registration environment and to drastically improve training efficiency, by an order of magnitude compared to standard CNN-based training methods. We demonstrate that the proposed method achieves high robustness on both spine cone-beam Computed Tomography data with a low signal-to-noise ratio and data from minimally invasive spine surgery, where severe image artifacts and occlusions are present due to metal screws and guide wires, outperforming other state-of-the-art methods (single-agent-based and optimization-based) by a large margin.
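The idea of taking the final action from multiple agents' proposals weighted by their confidence can be illustrated with a simple confidence-weighted vote. This is a minimal sketch under stated assumptions, not the paper's auto attention mechanism: each agent is assumed to propose one discrete action with a non-negative confidence, and the action labels (`"+tx"` for a translation step along x, `"-rz"` for a rotation step about z) and the function name `aggregate_actions` are hypothetical.

```python
from collections import defaultdict


def aggregate_actions(proposals):
    """Pick a final action from (action, confidence) proposals of several agents.

    Each agent votes for one discrete action; votes are weighted by that agent's
    confidence, and the action with the largest total weight is returned.
    Ties go to the action proposed first.
    """
    if not proposals:
        raise ValueError("need at least one proposal")
    scores = defaultdict(float)
    for action, confidence in proposals:
        if confidence < 0:
            raise ValueError("confidence must be non-negative")
        scores[action] += confidence  # accumulate confidence per action
    return max(scores, key=scores.get)


# One confident agent outweighs two low-confidence agents (e.g. near a metal screw)
print(aggregate_actions([("+tx", 0.9), ("-rz", 0.2), ("-rz", 0.3)]))
```

Weighting by confidence rather than counting votes lets a single agent observing a clean region override several agents whose local views are corrupted by artifacts, which is the behavior the abstract attributes to favoring regions with more reliable visual cues.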
