Roi Naveiro

Evasion Attacks Against Bayesian Predictive Models

Jun 11, 2025Abstract:There is an increasing interest in analyzing the behavior of machine learning systems against adversarial attacks. However, most of the research in adversarial machine learning has focused on studying weaknesses against evasion or poisoning attacks to predictive models in classical setups, with the susceptibility of Bayesian predictive models to attacks remaining underexplored. This paper introduces a general methodology for designing optimal evasion attacks against such models. We investigate two adversarial objectives: perturbing specific point predictions and altering the entire posterior predictive distribution. For both scenarios, we propose novel gradient-based attacks and study their implementation and properties in various computational setups.

Poisoning Bayesian Inference via Data Deletion and Replication

Mar 06, 2025

Abstract:Research in adversarial machine learning (AML) has shown that statistical models are vulnerable to maliciously altered data. However, despite advances in Bayesian machine learning models, most AML research remains concentrated on classical techniques. Therefore, we focus on extending the white-box model poisoning paradigm to attack generic Bayesian inference, highlighting its vulnerability in adversarial contexts. A suite of attacks are developed that allow an attacker to steer the Bayesian posterior toward a target distribution through the strategic deletion and replication of true observations, even when only sampling access to the posterior is available. Analytic properties of these algorithms are proven and their performance is empirically examined in both synthetic and real-world scenarios. With relatively little effort, the attacker is able to substantively alter the Bayesian's beliefs and, by accepting more risk, they can mold these beliefs to their will. By carefully constructing the adversarial posterior, surgical poisoning is achieved such that only targeted inferences are corrupted and others are minimally disturbed.

Manipulating hidden-Markov-model inferences by corrupting batch data

Feb 19, 2024Abstract:Time-series models typically assume untainted and legitimate streams of data. However, a self-interested adversary may have incentive to corrupt this data, thereby altering a decision maker's inference. Within the broader field of adversarial machine learning, this research provides a novel, probabilistic perspective toward the manipulation of hidden Markov model inferences via corrupted data. In particular, we provision a suite of corruption problems for filtering, smoothing, and decoding inferences leveraging an adversarial risk analysis approach. Multiple stochastic programming models are set forth that incorporate realistic uncertainties and varied attacker objectives. Three general solution methods are developed by alternatively viewing the problem from frequentist and Bayesian perspectives. The efficacy of each method is illustrated via extensive, empirical testing. The developed methods are characterized by their solution quality and computational effort, resulting in a stratification of techniques across varying problem-instance architectures. This research highlights the weaknesses of hidden Markov models under adversarial activity, thereby motivating the need for robustification techniques to ensure their security.

* 42 pages, 8 figures, 11 tables

Simulation Based Bayesian Optimization

Jan 19, 2024Abstract:Bayesian Optimization (BO) is a powerful method for optimizing black-box functions by combining prior knowledge with ongoing function evaluations. BO constructs a probabilistic surrogate model of the objective function given the covariates, which is in turn used to inform the selection of future evaluation points through an acquisition function. For smooth continuous search spaces, Gaussian Processes (GPs) are commonly used as the surrogate model as they offer analytical access to posterior predictive distributions, thus facilitating the computation and optimization of acquisition functions. However, in complex scenarios involving optimizations over categorical or mixed covariate spaces, GPs may not be ideal. This paper introduces Simulation Based Bayesian Optimization (SBBO) as a novel approach to optimizing acquisition functions that only requires \emph{sampling-based} access to posterior predictive distributions. SBBO allows the use of surrogate probabilistic models tailored for combinatorial spaces with discrete variables. Any Bayesian model in which posterior inference is carried out through Markov chain Monte Carlo can be selected as the surrogate model in SBBO. In applications involving combinatorial optimization, we demonstrate empirically the effectiveness of SBBO method using various choices of surrogate models.

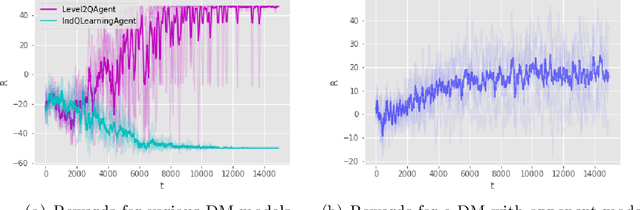

Adversarial attacks against Bayesian forecasting dynamic models

Oct 20, 2021

Abstract:The last decade has seen the rise of Adversarial Machine Learning (AML). This discipline studies how to manipulate data to fool inference engines, and how to protect those systems against such manipulation attacks. Extensive work on attacks against regression and classification systems is available, while little attention has been paid to attacks against time series forecasting systems. In this paper, we propose a decision analysis based attacking strategy that could be utilized against Bayesian forecasting dynamic models.

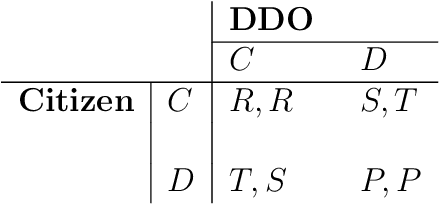

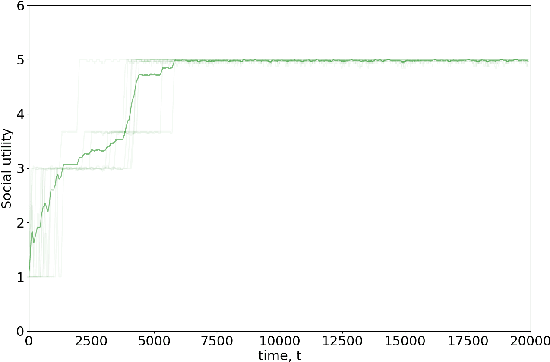

Data sharing games

Jan 26, 2021

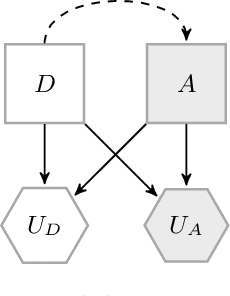

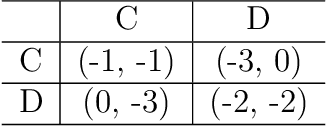

Abstract:Data sharing issues pervade online social and economic environments. To foster social progress, it is important to develop models of the interaction between data producers and consumers that can promote the rise of cooperation between the involved parties. We formalize this interaction as a game, the data sharing game, based on the Iterated Prisoner's Dilemma and deal with it through multi-agent reinforcement learning techniques. We consider several strategies for how the citizens may behave, depending on the degree of centralization sought. Simulations suggest mechanisms for cooperation to take place and, thus, achieve maximum social utility: data consumers should perform some kind of opponent modeling, or a regulator should transfer utility between both players and incentivise them.

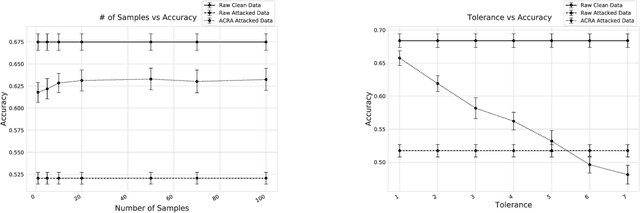

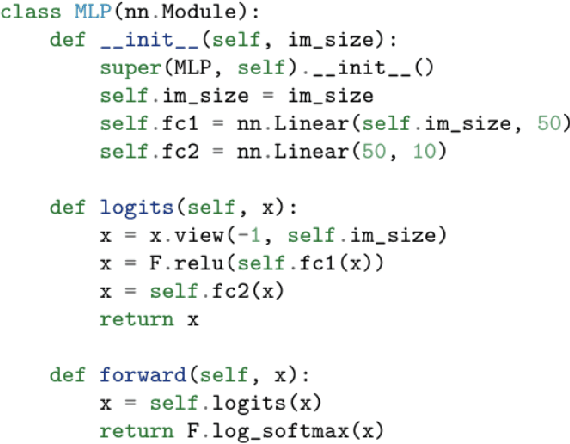

Protecting Classifiers From Attacks. A Bayesian Approach

Apr 18, 2020

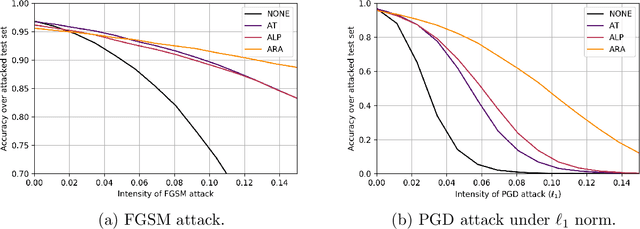

Abstract:Classification problems in security settings are usually modeled as confrontations in which an adversary tries to fool a classifier manipulating the covariates of instances to obtain a benefit. Most approaches to such problems have focused on game-theoretic ideas with strong underlying common knowledge assumptions, which are not realistic in the security realm. We provide an alternative Bayesian framework that accounts for the lack of precise knowledge about the attacker's behavior using adversarial risk analysis. A key ingredient required by our framework is the ability to sample from the distribution of originating instances given the possibly attacked observed one. We propose a sampling procedure based on approximate Bayesian computation, in which we simulate the attacker's problem taking into account our uncertainty about his elements. For large scale problems, we propose an alternative, scalable approach that could be used when dealing with differentiable classifiers. Within it, we move the computational load to the training phase, simulating attacks from an adversary, adapting the framework to obtain a classifier robustified against attacks.

Adversarial Machine Learning: Perspectives from Adversarial Risk Analysis

Mar 07, 2020

Abstract:Adversarial Machine Learning (AML) is emerging as a major field aimed at the protection of automated ML systems against security threats. The majority of work in this area has built upon a game-theoretic framework by modelling a conflict between an attacker and a defender. After reviewing game-theoretic approaches to AML, we discuss the benefits that a Bayesian Adversarial Risk Analysis perspective brings when defending ML based systems. A research agenda is included.

Gradient Methods for Solving Stackelberg Games

Aug 29, 2019

Abstract:Stackelberg Games are gaining importance in the last years due to the raise of Adversarial Machine Learning (AML). Within this context, a new paradigm must be faced: in classical game theory, intervening agents were humans whose decisions are generally discrete and low dimensional. In AML, decisions are made by algorithms and are usually continuous and high dimensional, e.g. choosing the weights of a neural network. As closed form solutions for Stackelberg games generally do not exist, it is mandatory to have efficient algorithms to search for numerical solutions. We study two different procedures for solving this type of games using gradient methods. We study time and space scalability of both approaches and discuss in which situation it is more appropriate to use each of them. Finally, we illustrate their use in an adversarial prediction problem.

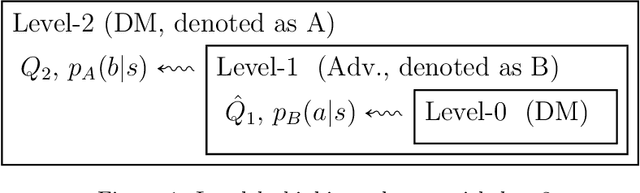

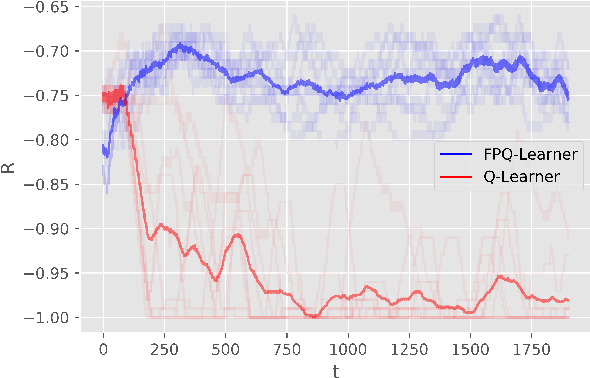

Opponent Aware Reinforcement Learning

Aug 26, 2019

Abstract:We introduce Threatened Markov Decision Processes (TMDPs) as an extension of the classical Markov Decision Process framework for Reinforcement Learning (RL). TMDPs allow suporting a decision maker against potential opponents in a RL context. We also propose a level-k thinking scheme resulting in a novel learning approach to deal with TMDPs. After introducing our framework and deriving theoretical results, relevant empirical evidence is given via extensive experiments, showing the benefits of accounting for adversaries in RL while the agent learns

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge