Rohan Subramani

How does information access affect LLM monitors' ability to detect sabotage?

Jan 28, 2026Abstract:Frontier language model agents can exhibit misaligned behaviors, including deception, exploiting reward hacks, and pursuing hidden objectives. To control potentially misaligned agents, we can use LLMs themselves to monitor for misbehavior. In this paper, we study how information access affects LLM monitor performance. While one might expect that monitors perform better when they have access to more of the monitored agents' reasoning and actions, we find that contemporary systems often perform better with less information, a phenomenon we call the less-is-more effect for automated oversight. We demonstrate this phenomenon, and analyze the conditions under which it occurs, in three evaluation environments where agents must conduct sabotage while evading monitors. Motivated by the less-is-more effect, we introduce extract-and-evaluate (EaE) monitoring--a new hierarchical approach where one monitor isolates relevant excerpts from the monitored agent's trajectory and a separate monitor scores them. In BigCodeBench-Sabotage with GPT-4.1-mini as the monitor model, EaE improves sabotage detection rates by 16.8 percentage points over the next-best monitor without increasing the false positive rate. In other settings, EaE either outperforms or is competitive with baselines. In addition, we find that agents unaware of being monitored can be caught much more easily and that monitors scoring longer reasoning traces gain more from information filtering. Lastly, we conduct a cost-performance analysis and find that Gemini and Claude models with monitoring techniques that involve information filtering occupy much of the Pareto frontier.

Higher-Order Belief in Incomplete Information MAIDs

Mar 08, 2025

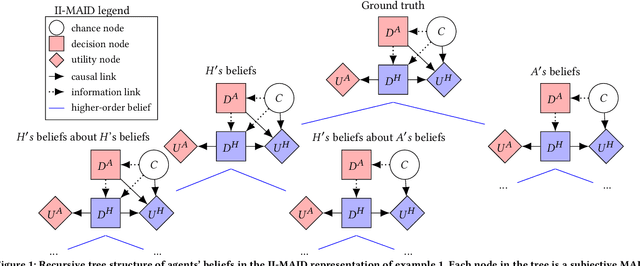

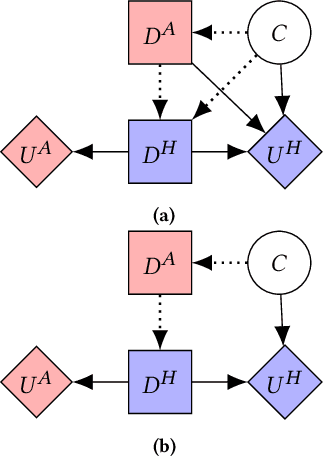

Abstract:Multi-agent influence diagrams (MAIDs) are probabilistic graphical models which represent strategic interactions between agents. MAIDs are equivalent to extensive form games (EFGs) but have a more compact and informative structure. However, MAIDs cannot, in general, represent settings of incomplete information -- wherein agents have different beliefs about the game being played, and different beliefs about each-other's beliefs. In this paper, we introduce incomplete information MAIDs (II-MAIDs). We define both infinite and finite-depth II-MAIDs and prove an equivalence relation to EFGs with incomplete information and no common prior over types. We prove that II-MAIDs inherit classical equilibria concepts via this equivalence, but note that these solution concepts are often unrealistic in the setting with no common prior because they violate common knowledge of rationality. We define a more realistic solution concept based on recursive best-response. Throughout, we describe an example with a hypothetical AI agent undergoing evaluation to illustrate the applicability of II-MAIDs.

Will an AI with Private Information Allow Itself to Be Switched Off?

Nov 25, 2024

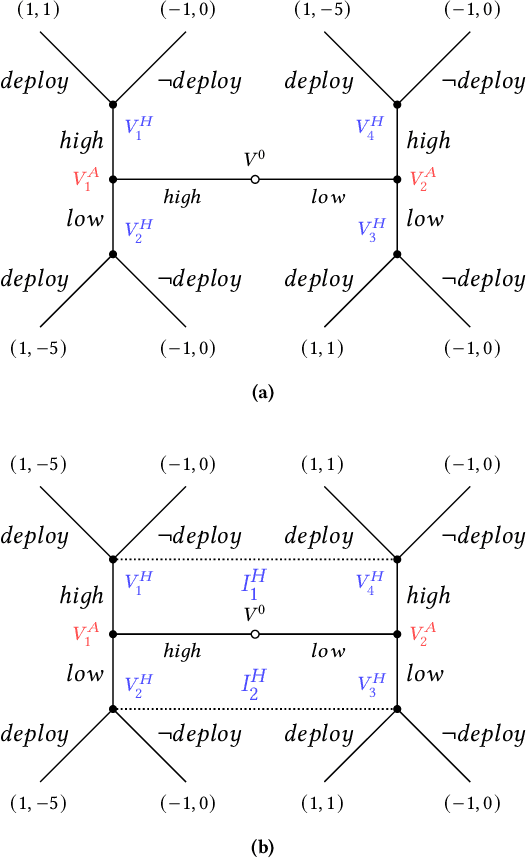

Abstract:A wide variety of goals could cause an AI to disable its off switch because "you can't fetch the coffee if you're dead" (Russell 2019). Prior theoretical work on this shutdown problem assumes that humans know everything that AIs do. In practice, however, humans have only limited information. Moreover, in many of the settings where the shutdown problem is most concerning, AIs might have vast amounts of private information. To capture these differences in knowledge, we introduce the Partially Observable Off-Switch Game (POSG), a game-theoretic model of the shutdown problem with asymmetric information. Unlike when the human has full observability, we find that in optimal play, even AI agents assisting perfectly rational humans sometimes avoid shutdown. As expected, increasing the amount of communication or information available always increases (or leaves unchanged) the agents' expected common payoff. But counterintuitively, introducing bounded communication can make the AI defer to the human less in optimal play even though communication mitigates information asymmetry. In particular, communication sometimes enables new optimal behavior requiring strategic AI deference to achieve outcomes that were previously inaccessible. Thus, designing safe artificial agents in the presence of asymmetric information requires careful consideration of the tradeoffs between maximizing payoffs (potentially myopically) and maintaining AIs' incentives to defer to humans.

Generalization Analogies: A Testbed for Generalizing AI Oversight to Hard-To-Measure Domains

Nov 19, 2023Abstract:As AI systems become more intelligent and their behavior becomes more challenging to assess, they may learn to game the flaws of human feedback instead of genuinely striving to follow instructions; however, this risk can be mitigated by controlling how LLMs generalize human feedback to situations where it is unreliable. To better understand how reward models generalize, we craft 69 distribution shifts spanning 8 categories. We find that reward models do not learn to evaluate `instruction-following' by default and instead favor personas that resemble internet text. Techniques for interpreting reward models' internal representations achieve better generalization than standard fine-tuning, but still frequently fail to distinguish instruction-following from conflated behaviors. We consolidate the 15 most challenging distribution shifts into the GENeralization analogIES (GENIES) benchmark, which we hope will enable progress toward controlling reward model generalization.

On The Expressivity of Objective-Specification Formalisms in Reinforcement Learning

Oct 18, 2023

Abstract:To solve a task with reinforcement learning (RL), it is necessary to formally specify the goal of that task. Although most RL algorithms require that the goal is formalised as a Markovian reward function, alternatives have been developed (such as Linear Temporal Logic and Multi-Objective Reinforcement Learning). Moreover, it is well known that some of these formalisms are able to express certain tasks that other formalisms cannot express. However, there has not yet been any thorough analysis of how these formalisms relate to each other in terms of expressivity. In this work, we fill this gap in the existing literature by providing a comprehensive comparison of the expressivities of 17 objective-specification formalisms in RL. We place these formalisms in a preorder based on their expressive power, and present this preorder as a Hasse diagram. We find a variety of limitations for the different formalisms, and that no formalism is both dominantly expressive and straightforward to optimise with current techniques. For example, we prove that each of Regularised RL, Outer Nonlinear Markov Rewards, Reward Machines, Linear Temporal Logic, and Limit Average Rewards can express an objective that the others cannot. Our findings have implications for both policy optimisation and reward learning. Firstly, we identify expressivity limitations which are important to consider when specifying objectives in practice. Secondly, our results highlight the need for future research which adapts reward learning to work with a variety of formalisms, since many existing reward learning methods implicitly assume that desired objectives can be expressed with Markovian rewards. Our work contributes towards a more cohesive understanding of the costs and benefits of different RL objective-specification formalisms.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge