Reuben M. Aronson

CHARM: Considering Human Attributes for Reinforcement Modeling

Jun 16, 2025

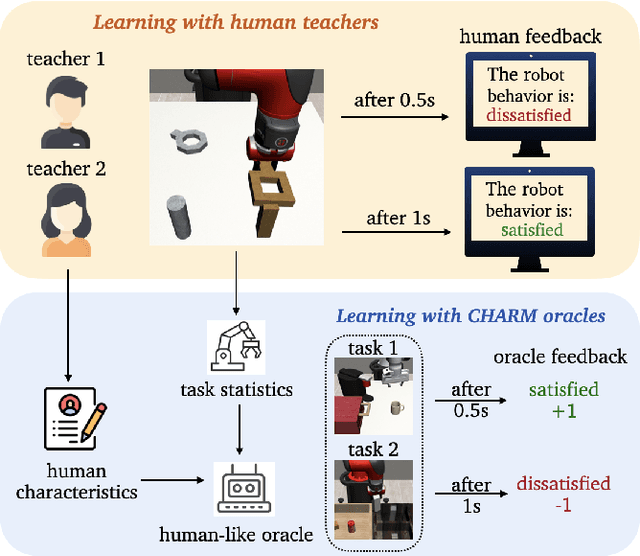

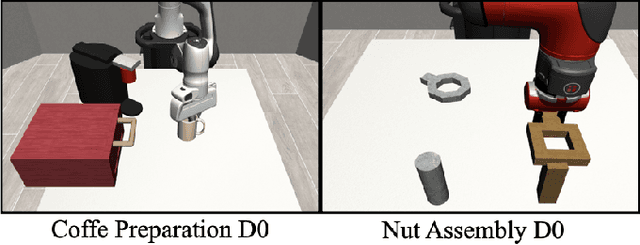

Abstract:Reinforcement Learning from Human Feedback has recently achieved significant success in various fields, and its performance is highly related to feedback quality. While much prior work acknowledged that human teachers' characteristics would affect human feedback patterns, there is little work that has closely investigated the actual effects. In this work, we designed an exploratory study investigating how human feedback patterns are associated with human characteristics. We conducted a public space study with two long horizon tasks and 46 participants. We found that feedback patterns are not only correlated with task statistics, such as rewards, but also correlated with participants' characteristics, especially robot experience and educational background. Additionally, we demonstrated that human feedback value can be more accurately predicted with human characteristics compared to only using task statistics. All human feedback and characteristics we collected, and codes for our data collection and predicting more accurate human feedback are available at https://github.com/AABL-Lab/CHARM

Demonstration Sidetracks: Categorizing Systematic Non-Optimality in Human Demonstrations

Jun 12, 2025

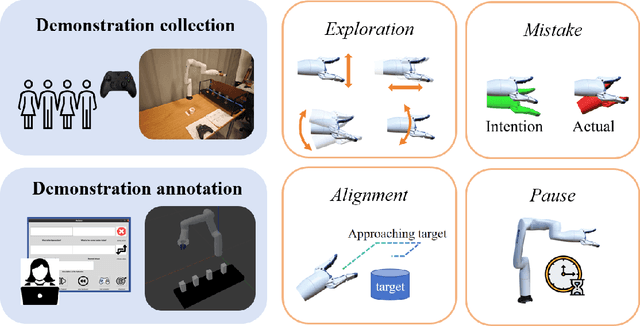

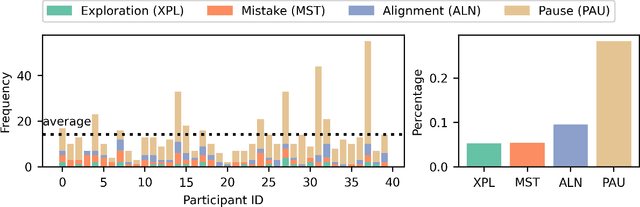

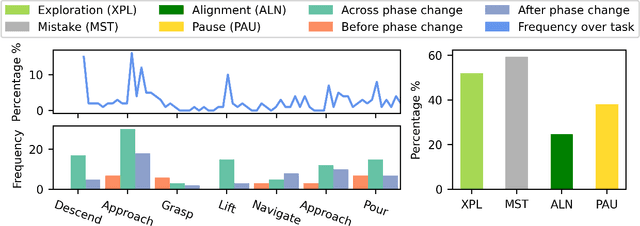

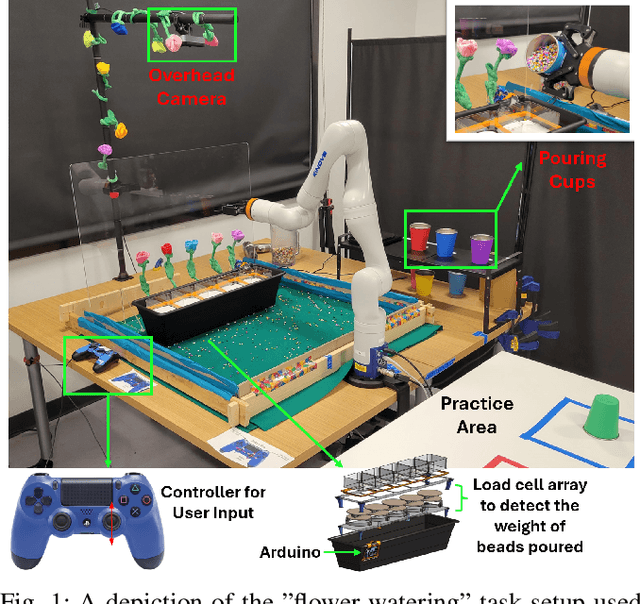

Abstract:Learning from Demonstration (LfD) is a popular approach for robots to acquire new skills, but most LfD methods suffer from imperfections in human demonstrations. Prior work typically treats these suboptimalities as random noise. In this paper we study non-optimal behaviors in non-expert demonstrations and show that they are systematic, forming what we call demonstration sidetracks. Using a public space study with 40 participants performing a long-horizon robot task, we recreated the setup in simulation and annotated all demonstrations. We identify four types of sidetracks (Exploration, Mistake, Alignment, Pause) and one control pattern (one-dimension control). Sidetracks appear frequently across participants, and their temporal and spatial distribution is tied to task context. We also find that users' control patterns depend on the control interface. These insights point to the need for better models of suboptimal demonstrations to improve LfD algorithms and bridge the gap between lab training and real-world deployment. All demonstrations, infrastructure, and annotations are available at https://github.com/AABL-Lab/Human-Demonstration-Sidetracks.

On the Effect of Robot Errors on Human Teaching Dynamics

Sep 15, 2024Abstract:Human-in-the-loop learning is gaining popularity, particularly in the field of robotics, because it leverages human knowledge about real-world tasks to facilitate agent learning. When people instruct robots, they naturally adapt their teaching behavior in response to changes in robot performance. While current research predominantly focuses on integrating human teaching dynamics from an algorithmic perspective, understanding these dynamics from a human-centered standpoint is an under-explored, yet fundamental problem. Addressing this issue will enhance both robot learning and user experience. Therefore, this paper explores one potential factor contributing to the dynamic nature of human teaching: robot errors. We conducted a user study to investigate how the presence and severity of robot errors affect three dimensions of human teaching dynamics: feedback granularity, feedback richness, and teaching time, in both forced-choice and open-ended teaching contexts. The results show that people tend to spend more time teaching robots with errors, provide more detailed feedback over specific segments of a robot's trajectory, and that robot error can influence a teacher's choice of feedback modality. Our findings offer valuable insights for designing effective interfaces for interactive learning and optimizing algorithms to better understand human intentions.

Control-Theoretic Analysis of Shared Control Systems

Aug 22, 2024

Abstract:Users of shared control systems change their behavior in the presence of assistance, which conflicts with assumpts about user behavior that some assistance methods make. In this paper, we propose an analysis technique to evaluate the user's experience with the assistive systems that bypasses required assumptions: we model the assistance as a dynamical system that can be analyzed using control theory techniques. We analyze the shared autonomy assistance algorithm and make several observations: we identify a problem with runaway goal confidence and propose a system adjustment to mitigate it, we demonstrate that the system inherently limits the possible actions available to the user, and we show that in a simplified setting, the effect of the assistance is to drive the system to the convex hull of the goals and, once there, add a layer of indirection between the user control and the system behavior. We conclude by discussing the possible uses of this analysis for the field.

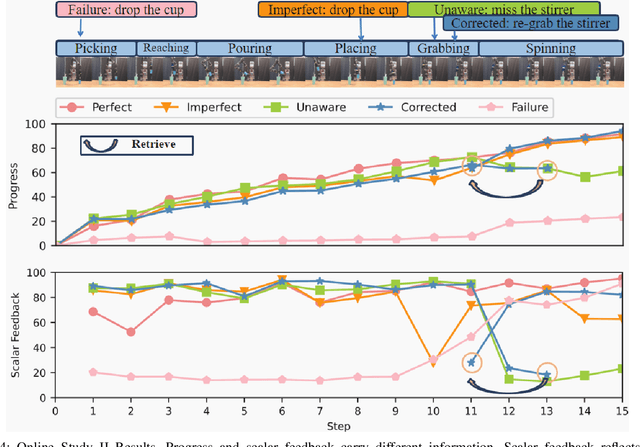

How Much Progress Did I Make? An Unexplored Human Feedback Signal for Teaching Robots

Jul 08, 2024

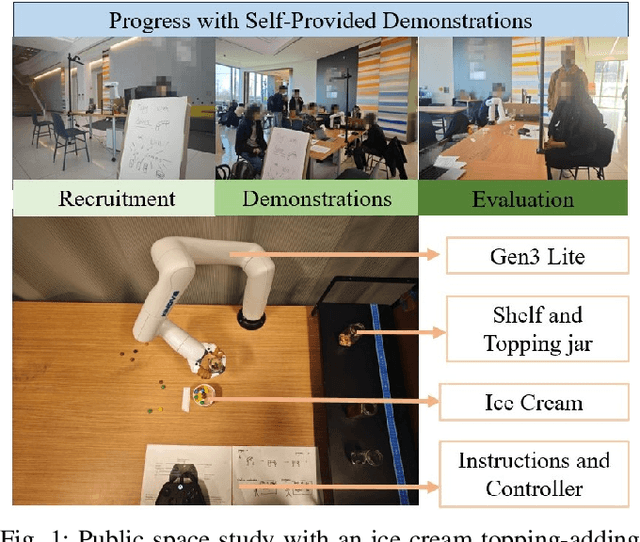

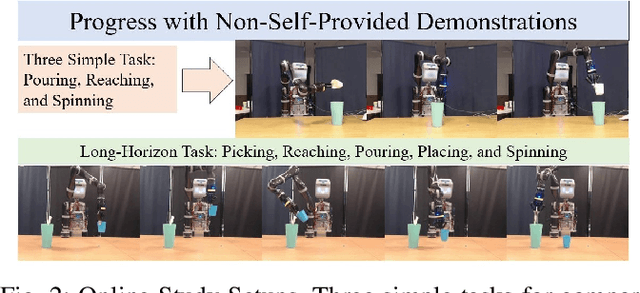

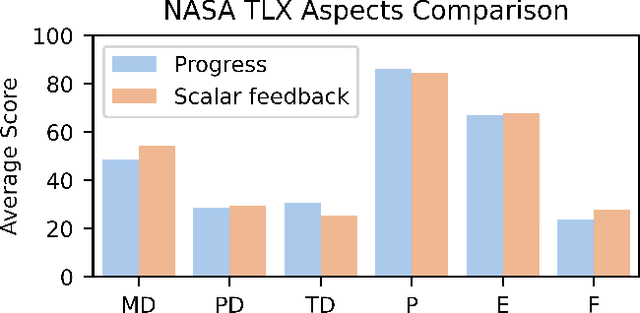

Abstract:Enhancing the expressiveness of human teaching is vital for both improving robots' learning from humans and the human-teaching-robot experience. In this work, we characterize and test a little-used teaching signal: \textit{progress}, designed to represent the completion percentage of a task. We conducted two online studies with 76 crowd-sourced participants and one public space study with 40 non-expert participants to validate the capability of this progress signal. We find that progress indicates whether the task is successfully performed, reflects the degree of task completion, identifies unproductive but harmless behaviors, and is likely to be more consistent across participants. Furthermore, our results show that giving progress does not require extra workload and time. An additional contribution of our work is a dataset of 40 non-expert demonstrations from the public space study through an ice cream topping-adding task, which we observe to be multi-policy and sub-optimal, with sub-optimality not only from teleoperation errors but also from exploratory actions and attempts. The dataset is available at \url{https://github.com/TeachingwithProgress/Non-Expert\_Demonstrations}.

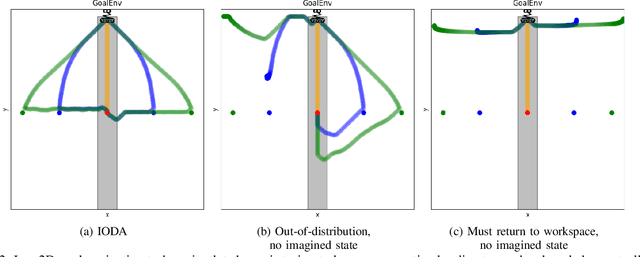

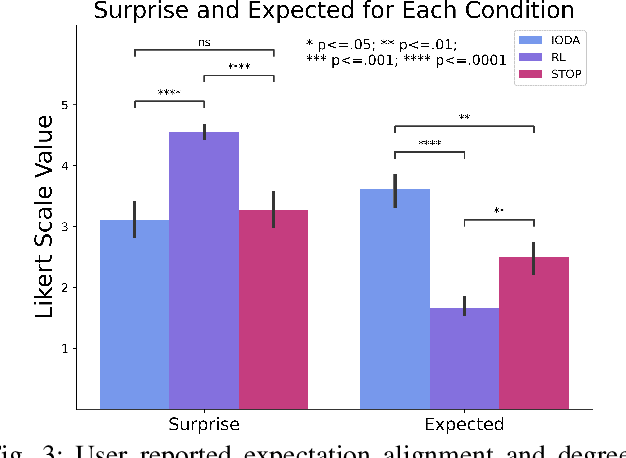

Imagining In-distribution States: How Predictable Robot Behavior Can Enable User Control Over Learned Policies

Jun 19, 2024

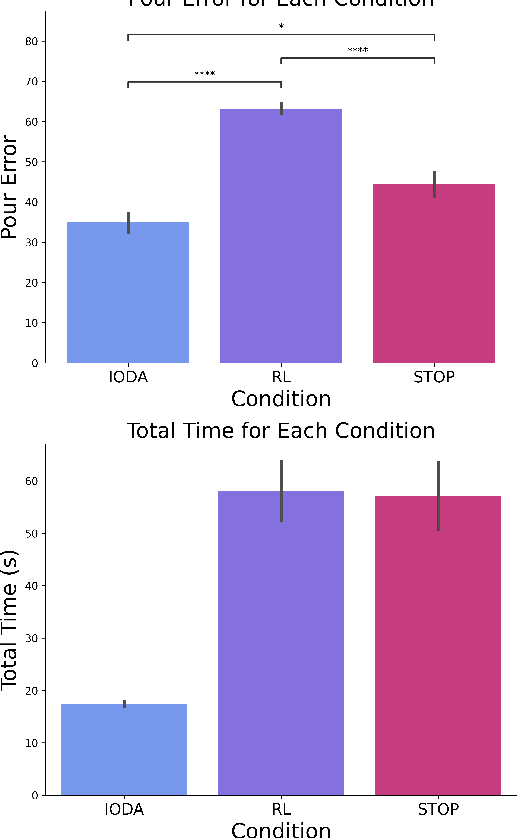

Abstract:It is crucial that users are empowered to take advantage of the functionality of a robot and use their understanding of that functionality to perform novel and creative tasks. Given a robot trained with Reinforcement Learning (RL), a user may wish to leverage that autonomy along with their familiarity of how they expect the robot to behave to collaborate with the robot. One technique is for the user to take control of some of the robot's action space through teleoperation, allowing the RL policy to simultaneously control the rest. We formalize this type of shared control as Partitioned Control (PC). However, this may not be possible using an out-of-the-box RL policy. For example, a user's control may bring the robot into a failure state from the policy's perspective, causing it to act unexpectedly and hindering the success of the user's desired task. In this work, we formalize this problem and present Imaginary Out-of-Distribution Actions, IODA, an initial algorithm which empowers users to leverage their expectations of a robot's behavior to accomplish new tasks. We deploy IODA in a user study with a real robot and find that IODA leads to both better task performance and a higher degree of alignment between robot behavior and user expectation. We also show that in PC, there is a strong and significant correlation between task performance and the robot's ability to meet user expectations, highlighting the need for approaches like IODA. Code is available at https://github.com/AABL-Lab/ioda_roman_2024

From "Thumbs Up" to "10 out of 10": Reconsidering Scalar Feedback in Interactive Reinforcement Learning

Nov 17, 2023

Abstract:Learning from human feedback is an effective way to improve robotic learning in exploration-heavy tasks. Compared to the wide application of binary human feedback, scalar human feedback has been used less because it is believed to be noisy and unstable. In this paper, we compare scalar and binary feedback, and demonstrate that scalar feedback benefits learning when properly handled. We collected binary or scalar feedback respectively from two groups of crowdworkers on a robot task. We found that when considering how consistently a participant labeled the same data, scalar feedback led to less consistency than binary feedback; however, the difference vanishes if small mismatches are allowed. Additionally, scalar and binary feedback show no significant differences in their correlations with key Reinforcement Learning targets. We then introduce Stabilizing TEacher Assessment DYnamics (STEADY) to improve learning from scalar feedback. Based on the idea that scalar feedback is muti-distributional, STEADY re-constructs underlying positive and negative feedback distributions and re-scales scalar feedback based on feedback statistics. We show that models trained with \textit{scalar feedback + STEADY } outperform baselines, including binary feedback and raw scalar feedback, in a robot reaching task with non-expert human feedback. Our results show that both binary feedback and scalar feedback are dynamic, and scalar feedback is a promising signal for use in interactive Reinforcement Learning.

HARMONIC: A Multimodal Dataset of Assistive Human-Robot Collaboration

Jul 30, 2018

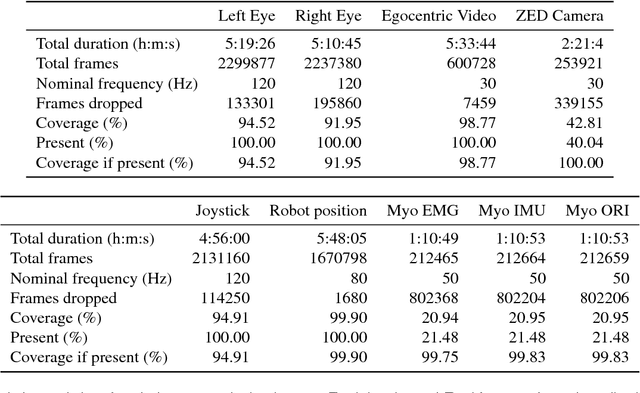

Abstract:We present HARMONIC, a large multi-modal dataset of human interactions in a shared autonomy setting. The dataset provides human, robot, and environment data streams from twenty-four people engaged in an assistive eating task with a 6 degree-of-freedom (DOF) robot arm. From each participant, we recorded video of both eyes, egocentric video from a head-mounted camera, joystick commands, electromyography from the participant's forearm used to operate the joystick, third person stereo video, and the joint positions of the 6 DOF robot arm. Also included are several data streams that come as a direct result of these recordings, namely eye gaze fixations in the egocentric camera frame and body position skeletons. This dataset could be of interest to researchers studying intention prediction, human mental state modeling, and shared autonomy. Data streams are provided in a variety of formats such as video and human-readable csv or yaml files.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge