Renato Zaccaria

RICO-MR: An Open-Source Architecture for Robot Intent Communication through Mixed Reality

Sep 09, 2023

Abstract: This article presents an open-source architecture for conveying robots' intentions to human teammates using Mixed Reality and Head-Mounted Displays. The architecture has been developed with a focus on modularity and reusability. Both binaries and source code are available, enabling researchers and companies to adopt the proposed architecture as a standalone solution or to integrate it into more comprehensive implementations. Due to its scalability, the proposed architecture can be easily employed to develop shared Mixed Reality experiences involving multiple robots and human teammates in complex collaborative scenarios.

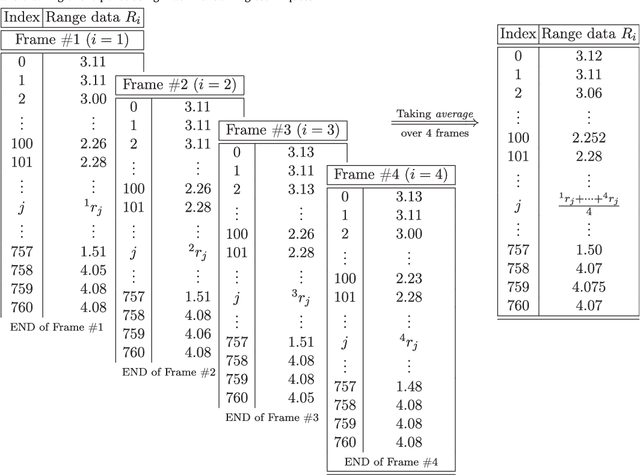

A 2D laser rangefinder scans dataset of standard EUR pallets

Mar 13, 2019

Abstract: In the past few years, the technology of automated guided vehicles (AGVs) has notably advanced. In particular, in the context of factory and warehouse automation, different approaches have been presented for detecting and localizing pallets inside warehouses and shop-floor environments. In a related research paper [1], we show that an AGV can detect, localize, and track pallets using machine learning techniques based only on the data of an on-board 2D laser rangefinder. Such a sensor is very common in industrial scenarios due to its simplicity and robustness, but it can only provide a limited amount of data. Therefore, it has been neglected in the past in favor of more complex solutions. In this paper, we release to the community the data we collected in [1] for further research activities in the field of pallet localization and tracking. The dataset comprises a collection of 565 2D scans from real-world environments, which are divided into 340 samples where pallets are present, and 225 samples where they are not. The data have been manually labelled and are provided in different formats.
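As an illustration of what working with 2D rangefinder scans involves, the following minimal sketch converts one scan from polar readings to Cartesian points in the sensor frame. The function name and the parameters `angle_min`, `angle_increment`, and `max_range` are assumptions made for this example; they are not taken from the dataset, whose file formats are not described here.

```python
import math
from typing import List, Sequence, Tuple


def scan_to_points(
    ranges: Sequence[float],
    angle_min: float,
    angle_increment: float,
    max_range: float = float("inf"),
) -> List[Tuple[float, float]]:
    """Convert a 2D laser scan (polar) to (x, y) points in the sensor frame.

    All parameter names are hypothetical; adapt them to the actual scan format.
    """
    points = []
    for i, r in enumerate(ranges):
        # Skip invalid readings: non-finite, non-positive, or beyond max_range.
        if not math.isfinite(r) or r <= 0.0 or r > max_range:
            continue
        theta = angle_min + i * angle_increment  # beam angle in radians
        points.append((r * math.cos(theta), r * math.sin(theta)))
    return points
```

A point list of this kind can then be rasterised into an image or fed to any other representation a detector expects; that step depends on the chosen pipeline and is left out here.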

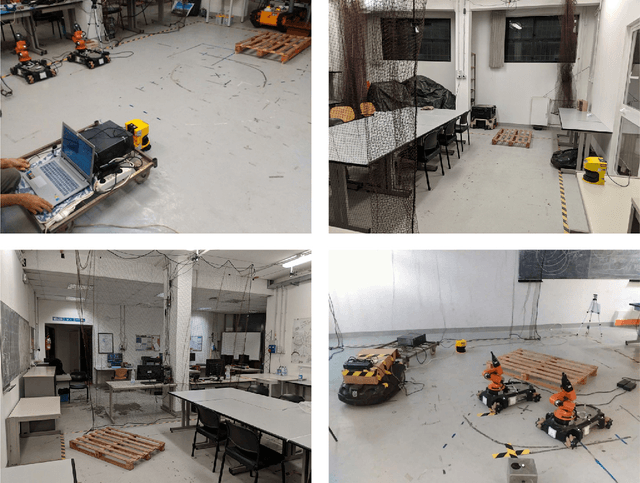

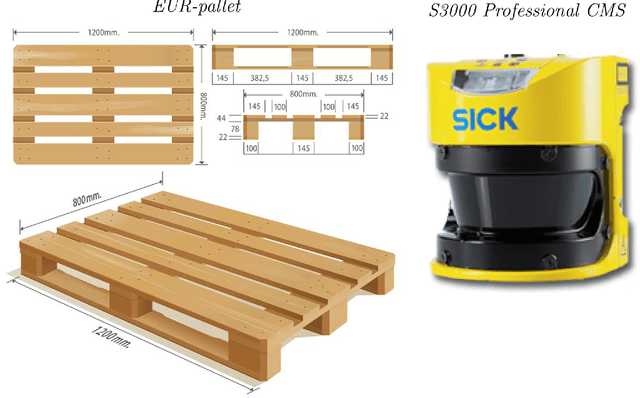

Detection, localisation and tracking of pallets using machine learning techniques and 2D range data

Feb 22, 2019

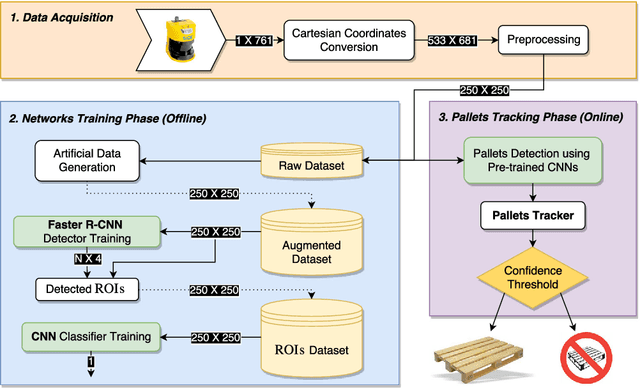

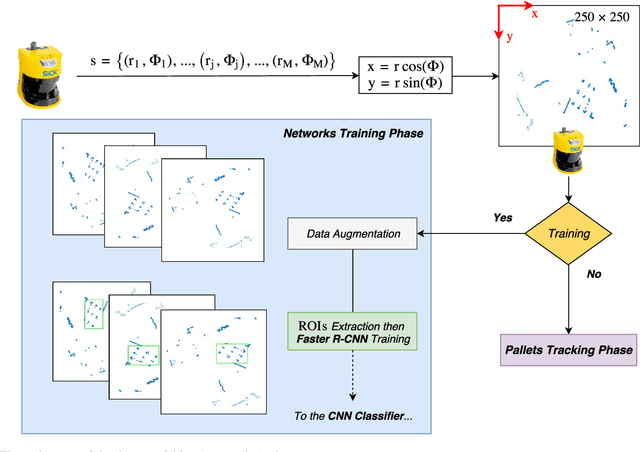

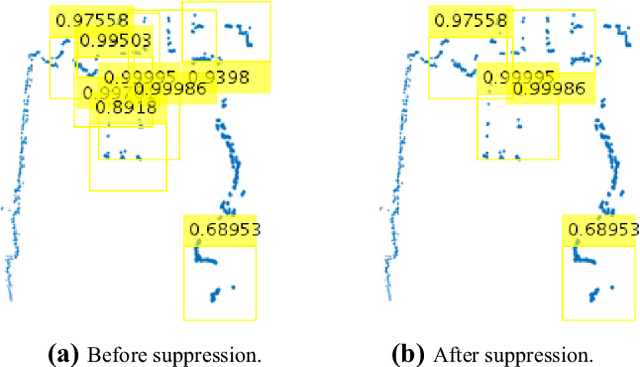

Abstract: The problem of autonomous transportation in industrial scenarios is receiving renewed interest due to the way it can revolutionise internal logistics, especially in unstructured environments. This paper presents a novel architecture allowing a robot to detect, localise, and track (possibly multiple) pallets using machine learning techniques based on an on-board 2D laser rangefinder only. The architecture is composed of two main components: the first stage is a pallet detector employing a Faster Region-based Convolutional Neural Network (Faster R-CNN) detector cascaded with a CNN-based classifier; the second stage is a Kalman filter for localising and tracking detected pallets, which we also use to defer commitment to a pallet detected in the first stage until sufficient confidence has been acquired via a sequential data acquisition process. For fine-tuning the CNNs, the architecture has been systematically evaluated using a real-world dataset containing 340 labeled 2D scans, which have been made freely available in an online repository. Detection performance has been assessed on the basis of the average accuracy over k-fold cross-validation, and it scored 99.58% in our tests. Concerning pallet localisation and tracking, experiments have been performed in a scenario where the robot is approaching the pallet to fork. Although data were originally acquired by considering only one pallet, as per the specification of the use case we consider, artificial data have been generated as well to mimic the presence of multiple pallets in the robot workspace. Our experimental results confirm that the system is capable of identifying, localising and tracking pallets with a high success rate while being robust to false positives.
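The idea of using a Kalman filter to defer commitment to a detection can be sketched as follows. This is a simplified illustration, not the paper's implementation: it assumes a nearly static pallet, a single variance shared by both axes, and a confirmation rule based on a hit count and a variance threshold. The class name, the noise values `q` and `r`, and the thresholds `min_hits` and `max_var` are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PalletTrack:
    """Per-axis Kalman filter for a (nearly) static pallet position."""

    x: float
    y: float
    var: float = 1.0  # position variance shared by both axes (assumed, m^2)
    hits: int = 1     # number of detections fused so far

    def predict(self, q: float = 0.01) -> None:
        # Static motion model: the estimate stays put, uncertainty grows by q.
        self.var += q

    def update(self, zx: float, zy: float, r: float = 0.05) -> None:
        # Scalar Kalman update applied to each axis with measurement noise r.
        gain = self.var / (self.var + r)
        self.x += gain * (zx - self.x)
        self.y += gain * (zy - self.y)
        self.var *= 1.0 - gain
        self.hits += 1

    def confirmed(self, min_hits: int = 3, max_var: float = 0.03) -> bool:
        # Defer commitment until enough detections have been fused and the
        # estimate is certain enough (both thresholds are illustrative).
        return self.hits >= min_hits and self.var <= max_var


# Usage: fuse repeated detections of the same candidate pallet.
track = PalletTrack(x=2.0, y=0.5)
for _ in range(5):
    track.predict()
    track.update(2.1, 0.4)
```

With this rule, a single spurious detection never becomes a confirmed pallet on its own, which is one simple way to gain robustness to false positives of the kind the abstract mentions.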

Towards a new paradigm for assistive technology at home: research challenges, design issues and performance assessment

Oct 27, 2017

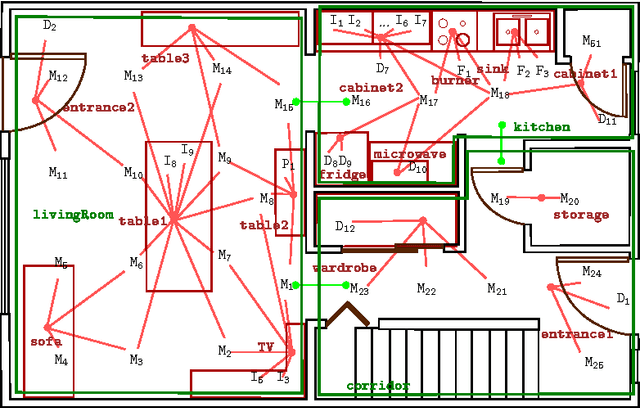

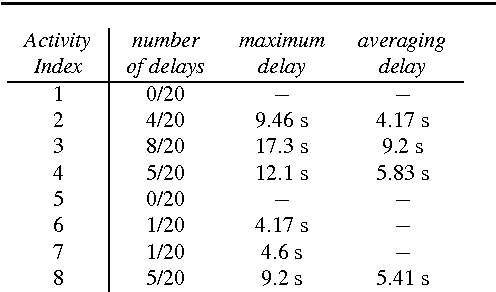

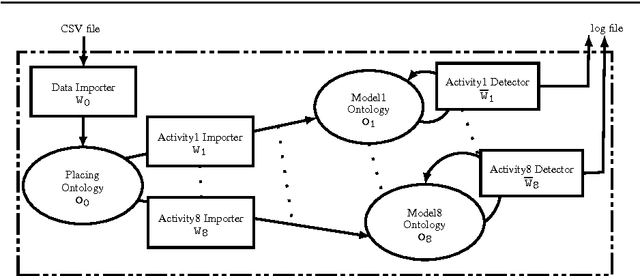

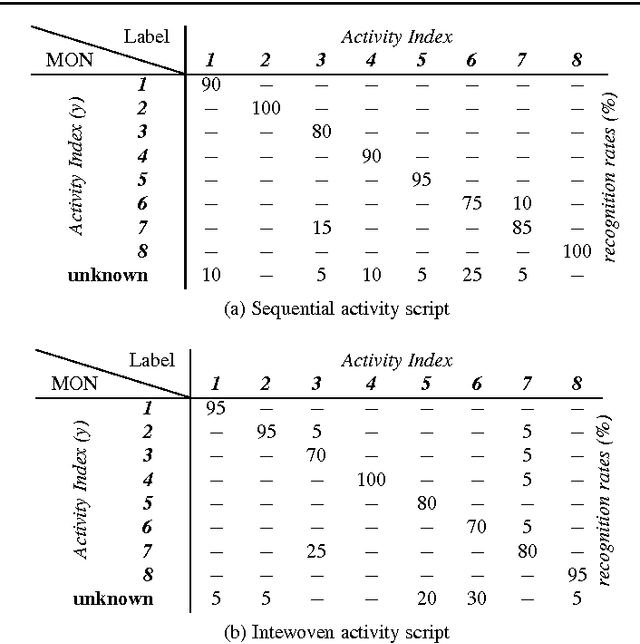

Abstract: Providing the elderly and people with special needs, including those suffering from physical disabilities and chronic diseases, with the possibility of retaining their independence as far as possible is one of the most important challenges our society is expected to face. Assistance models based on the home care paradigm are being adopted rapidly in almost all industrialized and emerging countries. Such paradigms hypothesize that it is necessary to ensure that the so-called Activities of Daily Living (ADL) are correctly and regularly performed by the assisted person to increase the perception of an improved quality of life. This chapter describes the computational inference engine at the core of Arianna, a system able to understand whether an assisted person performs a given set of ADL and to motivate him/her to perform them through a speech-mediated motivational dialogue, using a set of nearables to be installed in an apartment, plus a wearable to be worn or fitted in garments.
