Renato Mancuso

UniLCD: Unified Local-Cloud Decision-Making via Reinforcement Learning

Sep 17, 2024

Abstract: Embodied vision-based real-world systems, such as mobile robots, require a careful balance between energy consumption, compute latency, and safety constraints to optimize operation across dynamic tasks and contexts. As local computation tends to be restricted, offloading the computation, i.e., to a remote server, can save local resources while providing access to high-quality predictions from powerful and large models. However, the resulting communication and latency overhead has led to limited usability of cloud models in dynamic, safety-critical, real-time settings. To effectively address this trade-off, we introduce UniLCD, a novel hybrid inference framework for enabling flexible local-cloud collaboration. By efficiently optimizing a flexible routing module via reinforcement learning and a suitable multi-task objective, UniLCD is specifically designed to support the multiple constraints of safety-critical end-to-end mobile systems. We validate the proposed approach using a challenging, crowded navigation task requiring frequent and timely switching between local and cloud operations. UniLCD improves overall performance and efficiency by over 35% compared to state-of-the-art baselines based on various split computing and early exit strategies.

The SwaNNFlight System: On-the-Fly Sim-to-Real Adaptation via Anchored Learning

Jan 17, 2023

Abstract: Reinforcement Learning (RL) agents trained in simulated environments and then deployed in the real world are often sensitive to the differences in dynamics presented, commonly termed the sim-to-real gap. With the goal of minimizing this gap on resource-constrained embedded systems, we train and live-adapt agents on quadrotors built from off-the-shelf hardware. To this end, we make three novel contributions. (i) SwaNNFlight, an open-source firmware enabling wireless data capture and transfer of agents' observations, fine-tuning of agents with new data, and receiving and swapping of onboard NN controllers, all while in flight. We also design the SwaNNFlight System (SwaNNFS), allowing new research in training and live-adapting learning agents on similar systems. (ii) Multiplicative value composition, a technique for preserving the importance of each policy optimization criterion, improving training performance and variability in learnt behavior. (iii) Anchor critics, which help stabilize the fine-tuning of agents during sim-to-real transfer by learning online from real data while retaining behavior optimized in simulation. We train consistently flight-worthy control policies in simulation and deploy them on real quadrotors. We then achieve live controller adaptation via over-the-air updates of the onboard control policy from a ground station. Our results indicate that live adaptation unlocks a near-50% reduction in power consumption, attributed to the sim-to-real gap. Finally, we tackle the issues of catastrophic forgetting and controller instability, showing the effectiveness of our novel methods. Project Website: https://github.com/BU-Cyber-Physical-Systems-Lab/SwaNNFS
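To illustrate why a multiplicative composition can preserve the importance of each criterion, the following is a minimal sketch and not the formulation from the paper. It assumes each criterion has already been normalized to a score in [0, 1]; the function names and the example scores are hypothetical.

```python
import math


def additive_composition(scores):
    """Average of per-criterion scores; a weak criterion can be hidden by strong ones."""
    return sum(scores) / len(scores)


def multiplicative_composition(scores):
    """Product of per-criterion scores (assumed to lie in [0, 1]).

    Any single low score pulls the composed value down, so no
    criterion can be ignored in favor of the others.
    """
    return math.prod(scores)


# Hypothetical scores for three criteria (e.g. tracking, smoothness, power).
balanced = [0.7, 0.7, 0.7]
lopsided = [1.0, 1.0, 0.1]  # one criterion almost entirely neglected

avg_balanced = additive_composition(balanced)
avg_lopsided = additive_composition(lopsided)
prod_balanced = multiplicative_composition(balanced)
prod_lopsided = multiplicative_composition(lopsided)
```

Under these assumed scores, the averages of the two policies are essentially equal, while the product ranks the balanced policy clearly higher.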

Good Actors can come in Smaller Sizes: A Case Study on the Value of Actor-Critic Asymmetry

Feb 23, 2021

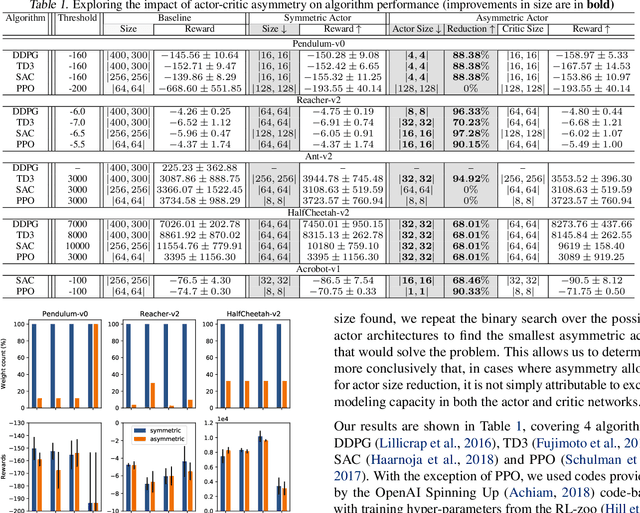

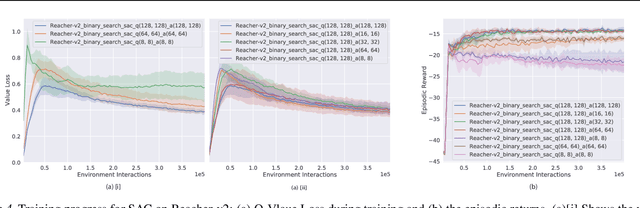

Abstract: Actors and critics in actor-critic reinforcement learning algorithms are functionally separate, yet they often use the same network architectures. This case study explores the performance impact of network sizes when considering actor and critic architectures independently. By relaxing the assumption of architectural symmetry, it is often possible for smaller actors to achieve comparable policy performance to their symmetric counterparts. Our experiments show up to 97% reduction in the number of network weights with an average reduction of 64% over multiple algorithms on multiple tasks. Given the practical benefits of reducing actor complexity, we believe configurations of actors and critics are aspects of actor-critic design that deserve to be considered independently.
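As a rough illustration of how shrinking only the actor reduces the weight count, the sketch below counts parameters of fully connected networks. The observation and action dimensions and the hidden-layer widths are hypothetical and are not taken from the paper's experiments.

```python
def mlp_param_count(layer_sizes):
    """Total weights and biases of a fully connected network.

    layer_sizes lists the width of every layer, input first, output last.
    """
    return sum(
        n_in * n_out + n_out
        for n_in, n_out in zip(layer_sizes, layer_sizes[1:])
    )


# Hypothetical task dimensions, chosen only for illustration.
obs_dim, act_dim = 17, 6

# Actor that mirrors a 256x256 critic (symmetric) versus a smaller 64x64 actor.
symmetric_actor = mlp_param_count([obs_dim, 256, 256, act_dim])
small_actor = mlp_param_count([obs_dim, 64, 64, act_dim])

# Fraction of actor parameters removed by dropping the symmetry assumption.
reduction = 1 - small_actor / symmetric_actor
print(f"{symmetric_actor} -> {small_actor} parameters ({reduction:.1%} fewer)")
```

Whether the smaller actor still matches the symmetric one in policy performance is an empirical question that this arithmetic does not answer.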

How to Train your Quadrotor: A Framework for Consistently Smooth and Responsive Flight Control via Reinforcement Learning

Dec 11, 2020

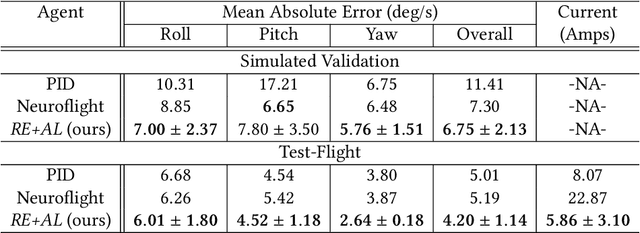

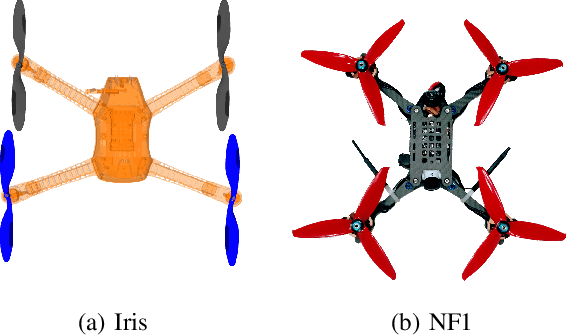

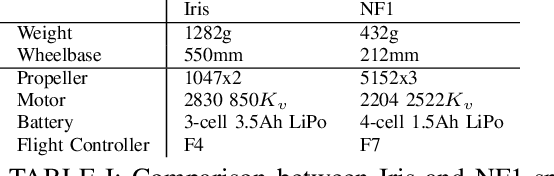

Abstract: We focus on the problem of reliably training Reinforcement Learning (RL) models (agents) for stable low-level control in embedded systems and test our methods on a high-performance, custom-built quadrotor platform. A common but often under-studied problem in developing RL agents for continuous control is that the control policies developed are not always smooth. This lack of smoothness can be a major problem when learning controllers intended for deployment on real hardware, as it can result in control instability and hardware failure. Issues of noisy control are further accentuated when training RL agents in simulation, because simulators are ultimately imperfect representations of reality, a discrepancy known as the reality gap. To combat issues of instability in RL agents, we propose a systematic framework, 'REinforcement-based transferable Agents through Learning' (RE+AL), for designing simulated training environments which preserve the quality of trained agents when transferred to real platforms. RE+AL is an evolution of the Neuroflight infrastructure detailed in technical reports prepared by members of our research group. Neuroflight is a state-of-the-art framework for training RL agents for low-level attitude control. RE+AL improves and completes Neuroflight by solving a number of important limitations that hindered the deployment of Neuroflight to real hardware. We benchmark RE+AL on the NF1 racing quadrotor developed as part of Neuroflight. We demonstrate that RE+AL significantly mitigates the previously observed issues of smoothness in RL agents. Additionally, RE+AL is shown to consistently train agents that are flight-capable, with minimal degradation in controller quality upon transfer. RE+AL agents also learn to perform better than a tuned PID controller, with better tracking errors, smoother control, and reduced power consumption.

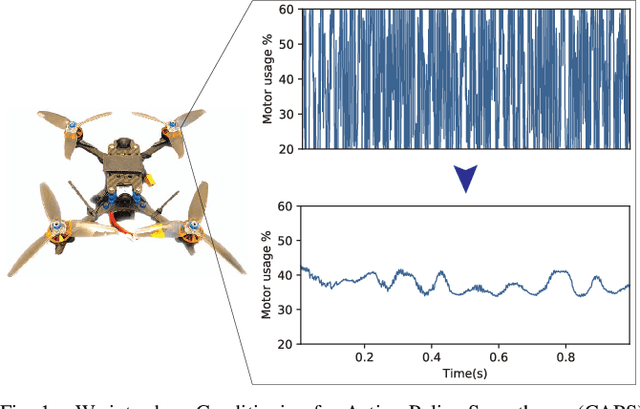

Regularizing Action Policies for Smooth Control with Reinforcement Learning

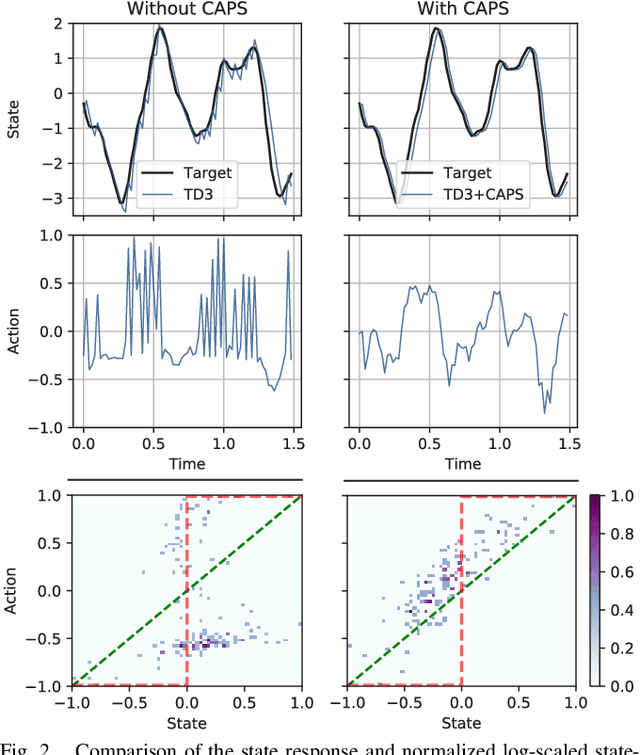

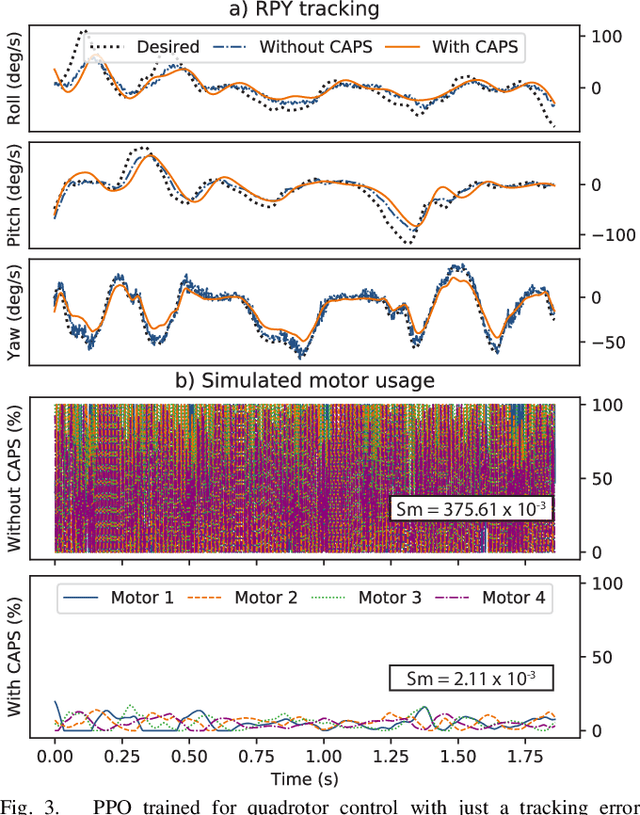

Dec 11, 2020

Abstract: A critical problem with the practical utility of controllers trained with deep Reinforcement Learning (RL) is the notable lack of smoothness in the actions learned by the RL policies. This trend often presents itself in the form of control signal oscillation and can result in poor control, high power consumption, and undue system wear. We introduce Conditioning for Action Policy Smoothness (CAPS), an effective yet intuitive regularization on action policies, which offers consistent improvement in the smoothness of the learned state-to-action mappings of neural network controllers, reflected in the elimination of high-frequency components in the control signal. Tested on a real system, improvements in controller smoothness on a quadrotor drone resulted in an almost 80% reduction in power consumption while consistently training flight-worthy controllers. Project website: http://ai.bu.edu/caps
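The abstract does not spell out the regularizer, so the following is only a sketch of one plausible smoothness penalty on a state-to-action mapping, not the authors' implementation. It assumes two terms: one penalizing action changes between consecutive states, and one penalizing action changes under small random perturbations of the state. The function names, the weights `lam_t` and `lam_s`, and the noise scale `sigma` are all hypothetical.

```python
import math
import random


def l2_distance(a, b):
    """Euclidean distance between two equal-length action vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def smoothness_penalty(policy, state, next_state,
                       sigma=0.05, lam_t=1.0, lam_s=1.0, rng=random):
    """Assumed smoothness regularizer for a deterministic policy.

    policy: callable mapping a state (list of floats) to an action (list of floats).
    The temporal term compares actions at consecutive states; the spatial term
    compares the action at a state with the action at a nearby noisy state.
    """
    action = policy(state)
    temporal = l2_distance(action, policy(next_state))
    nearby_state = [s + rng.gauss(0.0, sigma) for s in state]
    spatial = l2_distance(action, policy(nearby_state))
    return lam_t * temporal + lam_s * spatial


# A constant policy is perfectly smooth, so its penalty is zero.
constant_policy = lambda s: [0.5, 0.5]
zero_penalty = smoothness_penalty(constant_policy, [0.0, 1.0], [0.1, 0.9])

# A steep linear policy changes its action quickly and is penalized.
steep_policy = lambda s: [2.0 * s[0]]
steep_penalty = smoothness_penalty(steep_policy, [0.0], [1.0], sigma=0.0)
```

In training, a term of this kind would typically be added to the policy optimization loss, with the weights tuned per task.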

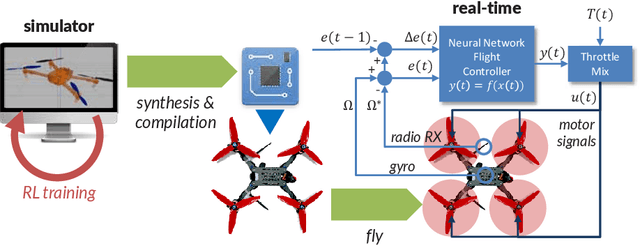

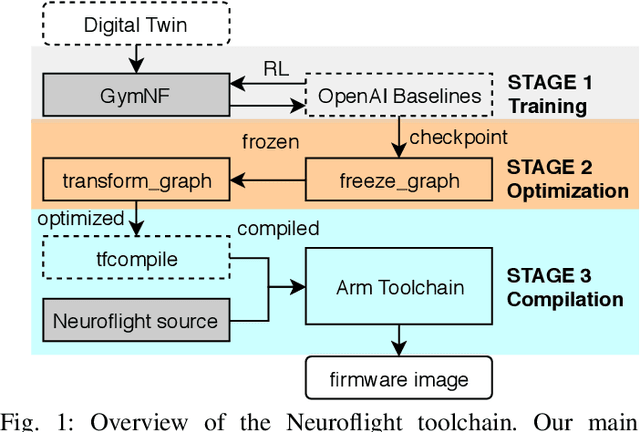

Neuroflight: Next Generation Flight Control Firmware

Jan 19, 2019

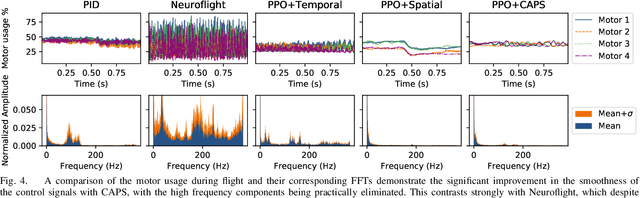

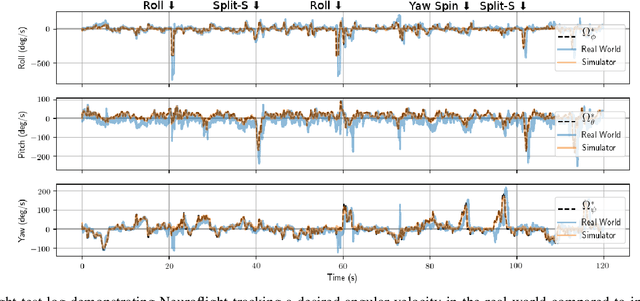

Abstract: Little innovation has been made to low-level attitude flight control used by unmanned aerial vehicles, which still predominantly uses the classical PID controller. In this work we introduce Neuroflight, the first open-source neuro-flight controller firmware. We present our toolchain for training a neural network in simulation and compiling it to run on embedded hardware. Challenges faced in jumping from simulation to reality are discussed along with our solutions. Our evaluation shows the neural network can execute at over 2.67 kHz on an Arm Cortex-M7 processor, and flight tests demonstrate that a quadcopter running Neuroflight can achieve stable flight and execute aerobatic maneuvers.

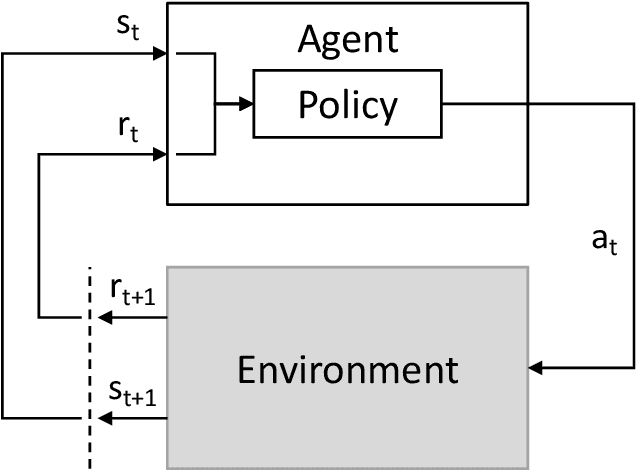

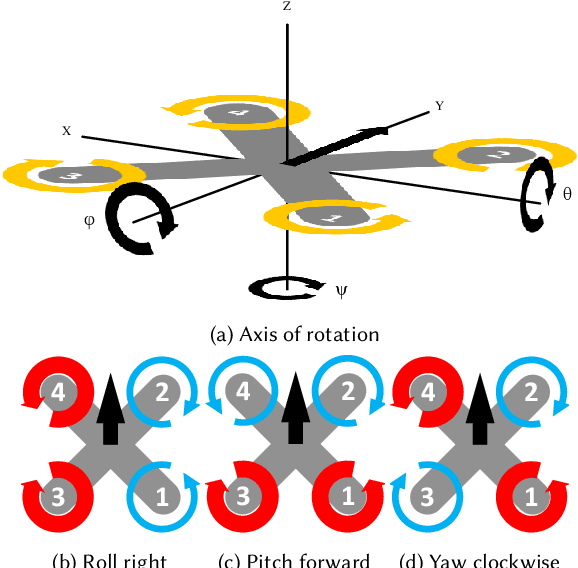

Reinforcement Learning for UAV Attitude Control

Apr 11, 2018

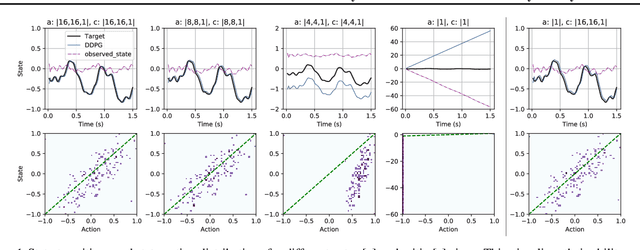

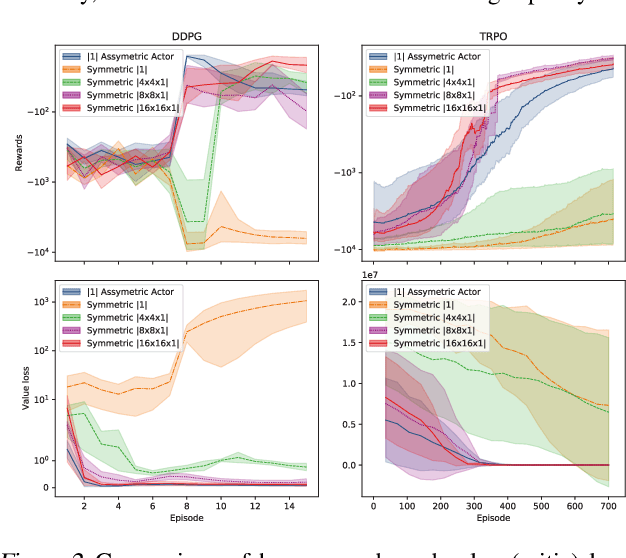

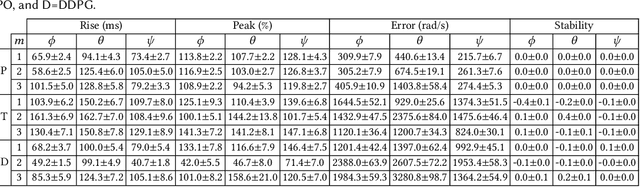

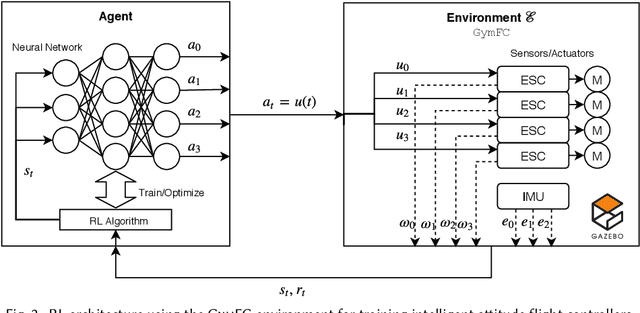

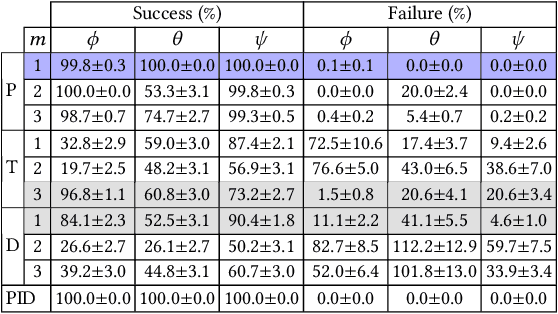

Abstract: Autopilot systems are typically composed of an "inner loop" providing stability and control, while an "outer loop" is responsible for mission-level objectives, e.g., way-point navigation. Autopilot systems for UAVs are predominantly implemented using Proportional-Integral-Derivative (PID) control systems, which have demonstrated exceptional performance in stable environments. However, more sophisticated control is required to operate in unpredictable and harsh environments. Intelligent flight control systems are an active area of research addressing the limitations of PID control, most recently through the use of reinforcement learning (RL), which has had success in other applications such as robotics. However, previous work has focused primarily on using RL at the mission-level controller. In this work, we investigate the performance and accuracy of the inner control loop providing attitude control when using intelligent flight control systems trained with the state-of-the-art RL algorithms Deep Deterministic Policy Gradient (DDPG), Trust Region Policy Optimization (TRPO), and Proximal Policy Optimization (PPO). To investigate these unknowns, we first developed an open-source high-fidelity simulation environment to train a flight controller for attitude control of a quadrotor through RL. We then use our environment to compare their performance to that of a PID controller to identify whether using RL is appropriate in high-precision, time-critical flight control.
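For readers unfamiliar with the PID baseline mentioned in the abstract, here is a minimal textbook sketch of a discrete PID controller for a single axis. It is not the controller used in the paper; the gains, setpoint, and time step are hypothetical values chosen for illustration.

```python
class PID:
    """Textbook discrete PID controller for one control axis."""

    def __init__(self, kp, ki, kd):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        self.prev_error = None  # no derivative term on the first step

    def update(self, setpoint, measurement, dt):
        """Return the control output for one time step of length dt seconds."""
        error = setpoint - measurement
        self.integral += error * dt
        if self.prev_error is None:
            derivative = 0.0
        else:
            derivative = (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


# Hypothetical gains and a target angular rate of 1.0 (arbitrary units).
pid = PID(kp=2.0, ki=0.5, kd=0.1)
first_output = pid.update(setpoint=1.0, measurement=0.0, dt=0.1)
second_output = pid.update(setpoint=1.0, measurement=0.5, dt=0.1)
```

An RL-trained attitude controller replaces this fixed error-to-output law with a learned mapping from state to actuator commands.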
