Razin A. Shaikh

A Pipeline For Discourse Circuits From CCG

Nov 29, 2023

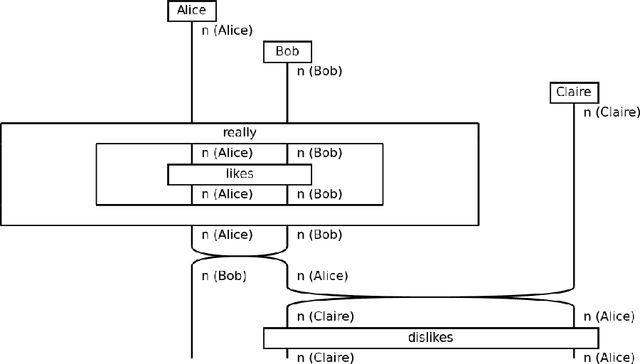

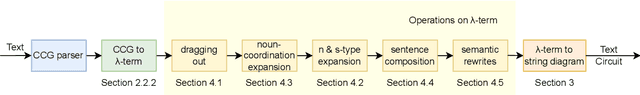

Abstract:There is a significant disconnect between linguistic theory and modern NLP practice, which relies heavily on inscrutable black-box architectures. DisCoCirc is a newly proposed model for meaning that aims to bridge this divide, by providing neuro-symbolic models that incorporate linguistic structure. DisCoCirc represents natural language text as a `circuit' that captures the core semantic information of the text. These circuits can then be interpreted as modular machine learning models. Additionally, DisCoCirc fulfils another major aim of providing an NLP model that can be implemented on near-term quantum computers. In this paper we describe a software pipeline that converts English text to its DisCoCirc representation. The pipeline achieves coverage over a large fragment of the English language. It relies on Combinatory Categorial Grammar (CCG) parses of the input text as well as coreference resolution information. This semantic and syntactic information is used in several steps to convert the text into a simply-typed $\lambda$-calculus term, and then into a circuit diagram. This pipeline will enable the application of the DisCoCirc framework to NLP tasks, using both classical and quantum approaches.

Formalising and Learning a Quantum Model of Concepts

Feb 07, 2023

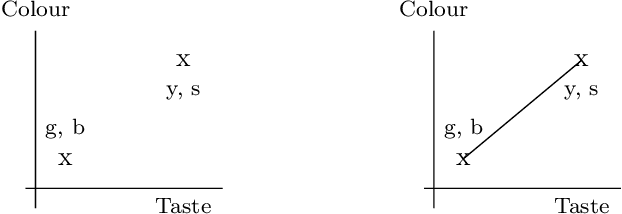

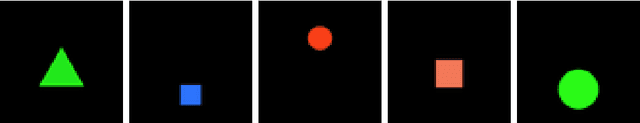

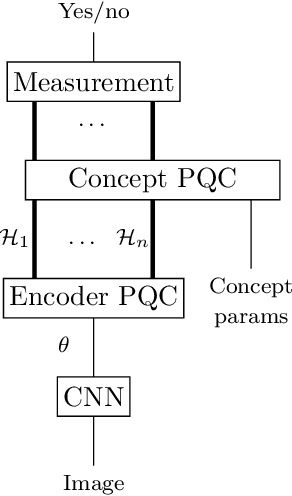

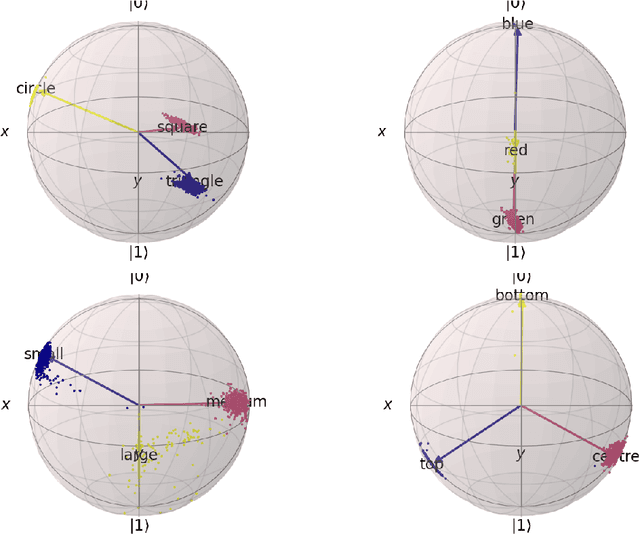

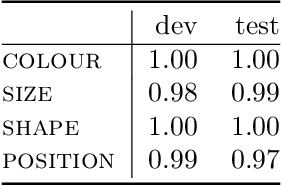

Abstract:In this report we present a new modelling framework for concepts based on quantum theory, and demonstrate how the conceptual representations can be learned automatically from data. A contribution of the work is a thorough category-theoretic formalisation of our framework. We claim that the use of category theory, and in particular the use of string diagrams to describe quantum processes, helps elucidate some of the most important features of our quantum approach to concept modelling. Our approach builds upon Gardenfors' classical framework of conceptual spaces, in which cognition is modelled geometrically through the use of convex spaces, which in turn factorise in terms of simpler spaces called domains. We show how concepts from the domains of shape, colour, size and position can be learned from images of simple shapes, where individual images are represented as quantum states and concepts as quantum effects. Concepts are learned by a hybrid classical-quantum network trained to perform concept classification, where the classical image processing is carried out by a convolutional neural network and the quantum representations are produced by a parameterised quantum circuit. We also use discarding to produce mixed effects, which can then be used to learn concepts which only apply to a subset of the domains, and show how entanglement (together with discarding) can be used to capture interesting correlations across domains. Finally, we consider the question of whether our quantum models of concepts can be considered conceptual spaces in the Gardenfors sense.

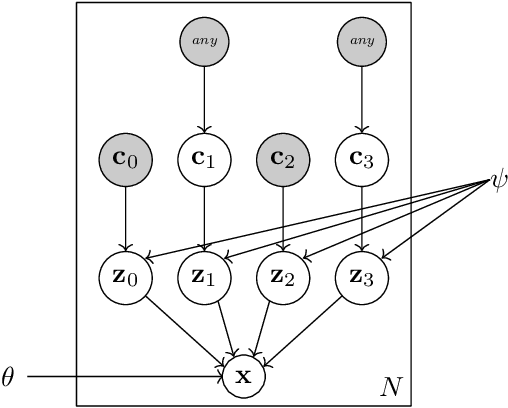

The Conceptual VAE

Mar 21, 2022

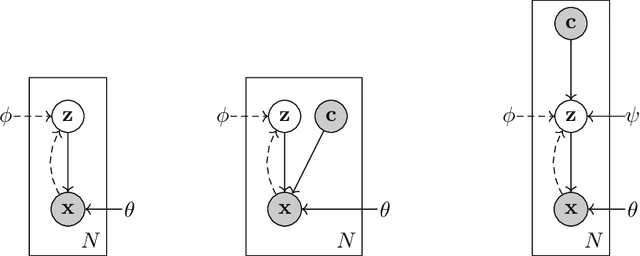

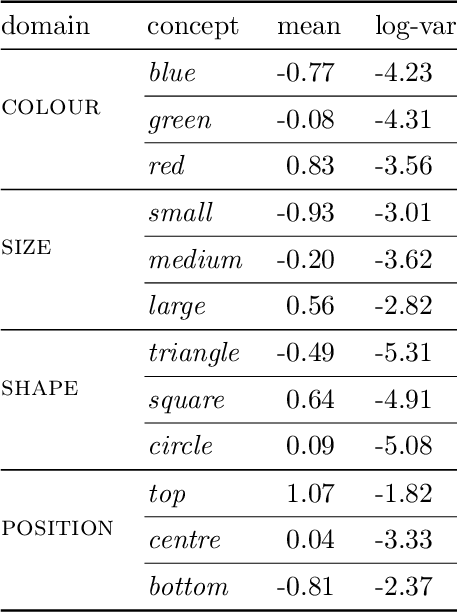

Abstract:In this report we present a new model of concepts, based on the framework of variational autoencoders, which is designed to have attractive properties such as factored conceptual domains, and at the same time be learnable from data. The model is inspired by, and closely related to, the Beta-VAE model of concepts, but is designed to be more closely connected with language, so that the names of concepts form part of the graphical model. We provide evidence that our model -- which we call the Conceptual VAE -- is able to learn interpretable conceptual representations from simple images of coloured shapes together with the corresponding concept labels. We also show how the model can be used as a concept classifier, and how it can be adapted to learn from fewer labels per instance. Finally, we formally relate our model to Gardenfors' theory of conceptual spaces, showing how the Gaussians we use to represent concepts can be formalised in terms of "fuzzy concepts" in such a space.

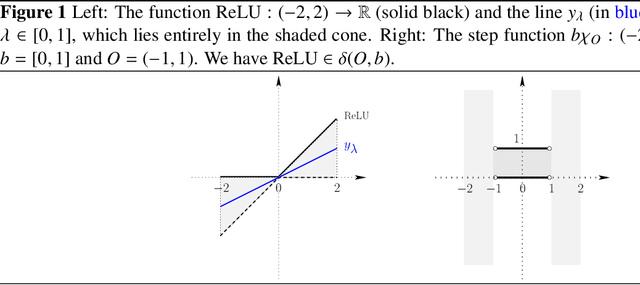

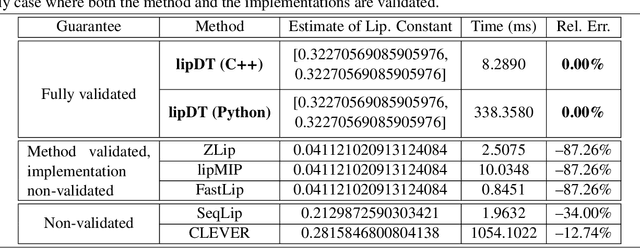

A Domain-Theoretic Framework for Robustness Analysis of Neural Networks

Mar 01, 2022

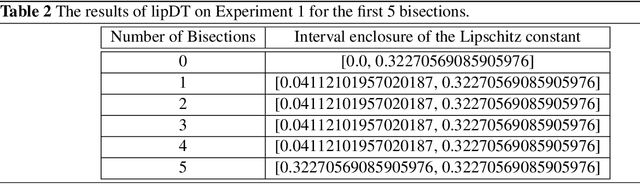

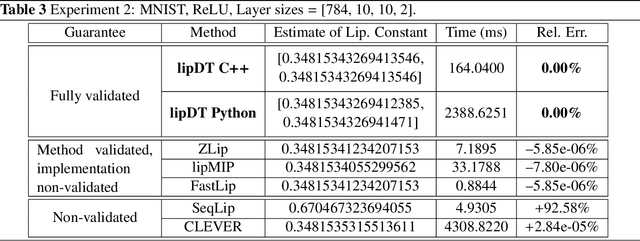

Abstract:We present a domain-theoretic framework for validated robustness analysis of neural networks. We first analyze the global robustness of a general class of networks. Then, using the fact that, over finite-dimensional Banach spaces, the domain-theoretic L-derivative coincides with Clarke's generalized gradient, we extend our framework for attack-agnostic local robustness analysis. Our framework is ideal for designing algorithms which are correct by construction. We exemplify this claim by developing a validated algorithm for estimation of Lipschitz constant of feedforward regressors. We prove the completeness of the algorithm over differentiable networks, and also over general position ReLU networks. Within our domain model, differentiable and non-differentiable networks can be analyzed uniformly. We implement our algorithm using arbitrary-precision interval arithmetic, and present the results of some experiments. Our implementation is truly validated, as it handles floating-point errors as well.

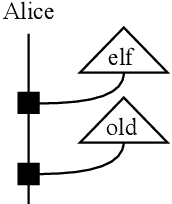

Composing Conversational Negation

Jul 14, 2021

Abstract:Negation in natural language does not follow Boolean logic and is therefore inherently difficult to model. In particular, it takes into account the broader understanding of what is being negated. In previous work, we proposed a framework for negation of words that accounts for `worldly context'. In this paper, we extend that proposal now accounting for the compositional structure inherent in language, within the DisCoCirc framework. We compose the negations of single words to capture the negation of sentences. We also describe how to model the negation of words whose meanings evolve in the text.

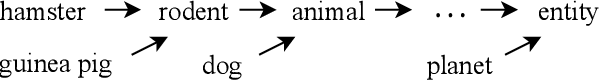

Conversational Negation using Worldly Context in Compositional Distributional Semantics

May 12, 2021

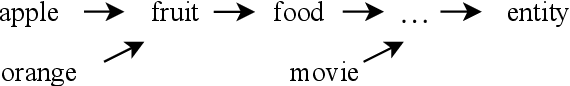

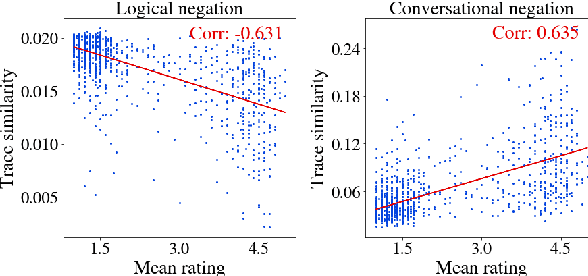

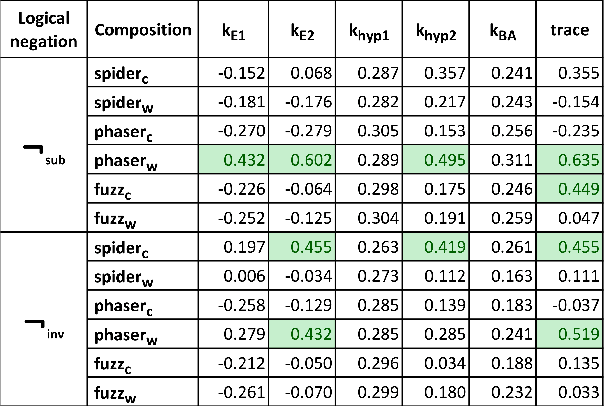

Abstract:We propose a framework to model an operational conversational negation by applying worldly context (prior knowledge) to logical negation in compositional distributional semantics. Given a word, our framework can create its negation that is similar to how humans perceive negation. The framework corrects logical negation to weight meanings closer in the entailment hierarchy more than meanings further apart. The proposed framework is flexible to accommodate different choices of logical negations, compositions, and worldly context generation. In particular, we propose and motivate a new logical negation using matrix inverse. We validate the sensibility of our conversational negation framework by performing experiments, leveraging density matrices to encode graded entailment information. We conclude that the combination of subtraction negation and phaser in the basis of the negated word yields the highest Pearson correlation of 0.635 with human ratings.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge