Lia Yeh

Composing Conversational Negation

Jul 14, 2021

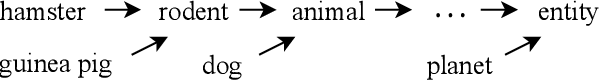

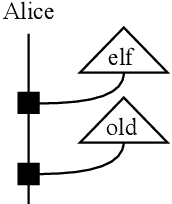

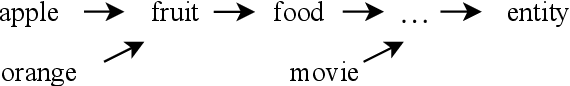

Abstract: Negation in natural language does not follow Boolean logic and is therefore inherently difficult to model. In particular, it takes into account the broader understanding of what is being negated. In previous work, we proposed a framework for negation of words that accounts for "worldly context". In this paper, we extend that proposal to account for the compositional structure inherent in language, within the DisCoCirc framework. We compose the negations of single words to capture the negation of sentences. We also describe how to model the negation of words whose meanings evolve in the text.

Conversational Negation using Worldly Context in Compositional Distributional Semantics

May 12, 2021

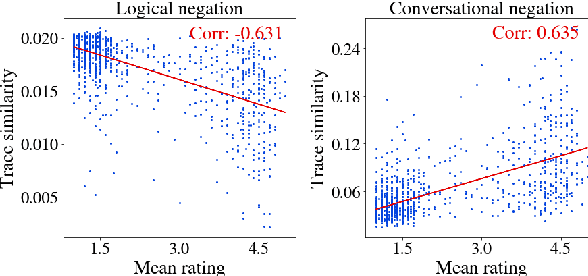

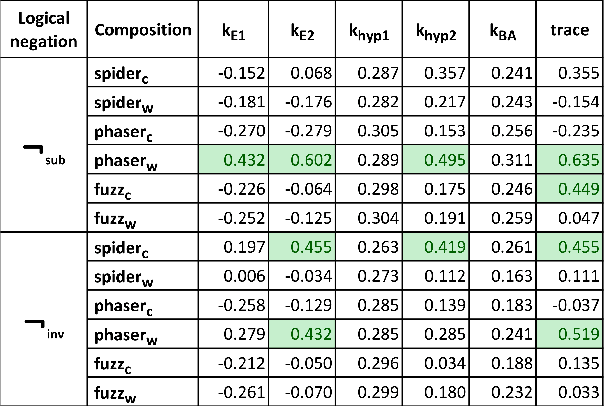

Abstract: We propose a framework to model an operational conversational negation by applying worldly context (prior knowledge) to logical negation in compositional distributional semantics. Given a word, our framework can create a negation of it that resembles how humans perceive negation. The framework corrects logical negation to weight meanings closer in the entailment hierarchy more than meanings further apart. The proposed framework is flexible enough to accommodate different choices of logical negations, compositions, and worldly context generation. In particular, we propose and motivate a new logical negation using matrix inverse. We validate the sensibility of our conversational negation framework by performing experiments, leveraging density matrices to encode graded entailment information. We conclude that the combination of subtraction negation and phaser in the basis of the negated word yields the highest Pearson correlation of 0.635 with human ratings.
