Rakesh Bobba

ROS2-Based Simulation Framework for Cyberphysical Security Analysis of UAVs

Oct 04, 2024

Abstract: We present a new simulator of Uncrewed Aerial Vehicles (UAVs) that is tailored to the needs of testing cyber-physical security attacks and defenses. Recent investigations into UAV safety have unveiled various attack surfaces and some defense mechanisms. However, due to escalating regulations imposed by aviation authorities on security research on real UAVs, and the substantial costs associated with hardware test-bed configurations, there arises a necessity for a simulator capable of substituting for hardware experiments, and/or narrowing down their scope to the strictly necessary. The study of different attack mechanisms requires specific features in a simulator. We propose a simulation framework based on ROS2, leveraging some of its key advantages, including modularity, replicability, customization, and the utilization of open-source tools such as Gazebo. Our framework has a built-in motion planner, controller, communication models and attack models. We share examples of research use cases that our framework can enable, demonstrating its utility.

BERT Lost Patience Won't Be Robust to Adversarial Slowdown

Oct 31, 2023

Abstract: In this paper, we systematically evaluate the robustness of multi-exit language models against adversarial slowdown. To audit their robustness, we design a slowdown attack that generates natural adversarial text bypassing early-exit points. We use the resulting WAFFLE attack as a vehicle to conduct a comprehensive evaluation of three multi-exit mechanisms with the GLUE benchmark against adversarial slowdown. We then show our attack significantly reduces the computational savings provided by the three methods in both white-box and black-box settings. The more complex a mechanism is, the more vulnerable it is to adversarial slowdown. We also perform a linguistic analysis of the perturbed text inputs, identifying common perturbation patterns that our attack generates, and comparing them with standard adversarial text attacks. Moreover, we show that adversarial training is ineffective in defeating our slowdown attack, but input sanitization with a conversational model, e.g., ChatGPT, can remove perturbations effectively. This result suggests that future work is needed for developing efficient yet robust multi-exit models. Our code is available at: https://github.com/ztcoalson/WAFFLE
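
As a rough illustration of what "bypassing early-exit points" means, the sketch below assumes an entropy-threshold exit rule, which is one common multi-exit design; the abstract does not say which three mechanisms are evaluated, and this is not the WAFFLE code. The function names, the threshold, and the probability values are all hypothetical.

```python
import math

def entropy(probs):
    """Shannon entropy (natural log) of a probability vector."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def exit_layer(per_layer_probs, threshold):
    """Return the 1-based index of the first exit whose prediction
    entropy is below the threshold, or the last layer if none is."""
    for i, probs in enumerate(per_layer_probs, start=1):
        if entropy(probs) < threshold:
            return i
    return len(per_layer_probs)

# Hypothetical per-exit class probabilities for a 3-exit model.
clean = [[0.6, 0.4], [0.95, 0.05], [0.99, 0.01]]
# A slowdown-style input keeps every early exit uncertain.
perturbed = [[0.55, 0.45], [0.6, 0.4], [0.9, 0.1]]

print(exit_layer(clean, threshold=0.3))      # exits early
print(exit_layer(perturbed, threshold=0.3))  # runs to the final layer
```

Under this assumed rule, an input that keeps the intermediate predictions uncertain forces the model through every layer, which removes the computational savings the early exits were meant to provide.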

Toward Metrics for Differentiating Out-of-Distribution Sets

Oct 18, 2019

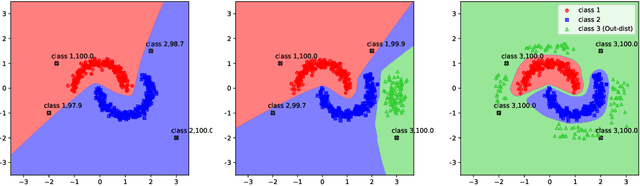

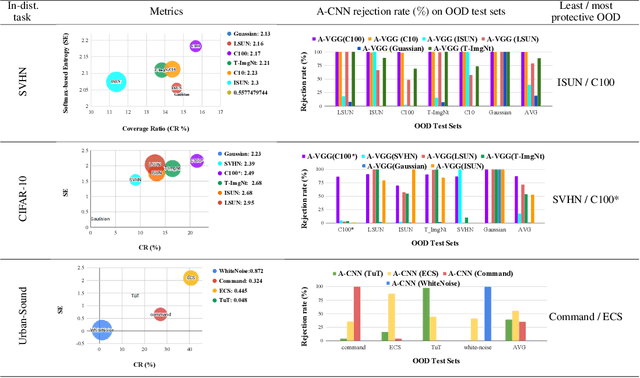

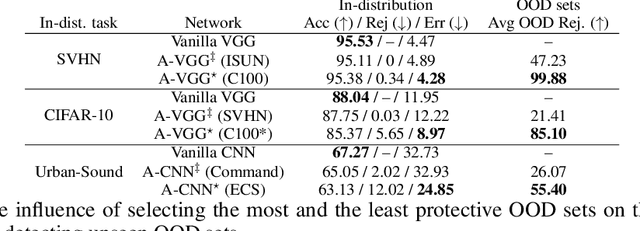

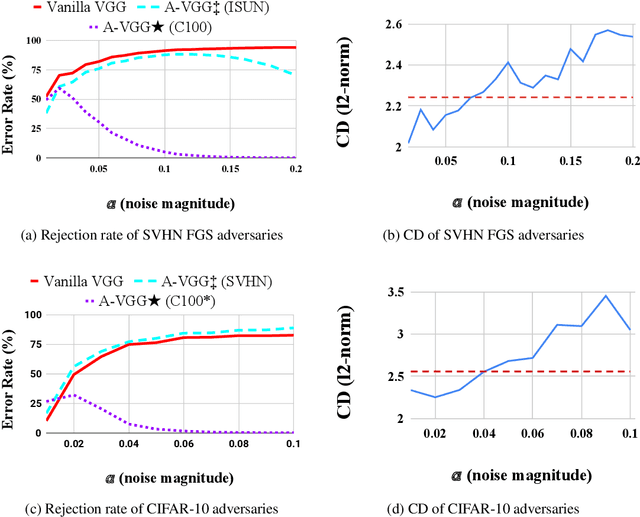

Abstract: Vanilla CNNs, as uncalibrated classifiers, suffer from classifying out-of-distribution (OOD) samples nearly as confidently as in-distribution samples, making them indistinguishable from each other. To tackle this challenge, some recent works have demonstrated the gains of leveraging readily accessible OOD sets for training end-to-end calibrated CNNs. However, a critical question remains unanswered in these works: how to select an OOD set, among the available OOD sets, for training such CNNs that induces high detection rates on unseen OOD sets? We address this pivotal question through the use of Augmented-CNN (A-CNN) involving an explicit rejection option. We first provide a formal definition to precisely differentiate OOD sets for the purpose of selection. As using this definition incurs a huge computational cost, we propose novel metrics, as a computationally efficient tool, for characterizing OOD sets in order to select the proper one. In a series of experiments on several image and audio benchmarks, we show that training an A-CNN with an OOD set identified by our metrics (called A-CNN$^{\star}$) leads to a remarkable detection rate on unseen OOD sets while maintaining in-distribution generalization performance, thus demonstrating the viability of our metrics for identifying the proper OOD set. Furthermore, we show that A-CNN$^{\star}$ outperforms state-of-the-art OOD detectors across different benchmarks.
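
To make the "explicit rejection option" and "detection rate" concrete, here is a minimal sketch that assumes the rejection option is realized as one extra output class appended after the K in-distribution classes. That reading, the function names, and the scores are assumptions for illustration, not the authors' implementation or their proposed selection metrics.

```python
def predict_with_rejection(scores):
    """scores holds K in-distribution class scores followed by one
    rejection score. Returns the predicted class index, or None when
    the rejection class has the highest score."""
    best = max(range(len(scores)), key=lambda i: scores[i])
    return None if best == len(scores) - 1 else best

def detection_rate(ood_scores):
    """Fraction of OOD samples that land in the rejection class."""
    rejected = sum(1 for s in ood_scores if predict_with_rejection(s) is None)
    return rejected / len(ood_scores)

# Hypothetical outputs for four unseen OOD samples (K = 2 plus rejection).
unseen_ood = [
    [0.1, 0.2, 0.7],
    [0.5, 0.3, 0.2],  # missed: classified as in-distribution class 0
    [0.2, 0.2, 0.6],
    [0.1, 0.1, 0.8],
]
print(detection_rate(unseen_ood))
```

In this framing, comparing candidate training OOD sets amounts to asking which one yields the highest detection rate on OOD sets the model never saw during training.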
