Qiwen Zhu

Perceptual-Distortion Balanced Image Super-Resolution is a Multi-Objective Optimization Problem

Sep 05, 2024

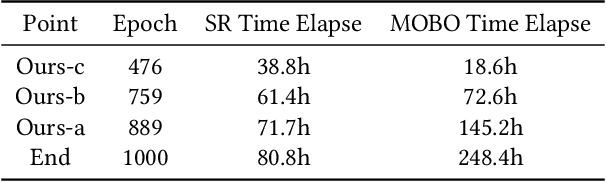

Abstract:Training Single-Image Super-Resolution (SISR) models using pixel-based regression losses can achieve high distortion metrics scores (e.g., PSNR and SSIM), but often results in blurry images due to insufficient recovery of high-frequency details. Conversely, using GAN or perceptual losses can produce sharp images with high perceptual metric scores (e.g., LPIPS), but may introduce artifacts and incorrect textures. Balancing these two types of losses can help achieve a trade-off between distortion and perception, but the challenge lies in tuning the loss function weights. To address this issue, we propose a novel method that incorporates Multi-Objective Optimization (MOO) into the training process of SISR models to balance perceptual quality and distortion. We conceptualize the relationship between loss weights and image quality assessment (IQA) metrics as black-box objective functions to be optimized within our Multi-Objective Bayesian Optimization Super-Resolution (MOBOSR) framework. This approach automates the hyperparameter tuning process, reduces overall computational cost, and enables the use of numerous loss functions simultaneously. Extensive experiments demonstrate that MOBOSR outperforms state-of-the-art methods in terms of both perceptual quality and distortion, significantly advancing the perception-distortion Pareto frontier. Our work points towards a new direction for future research on balancing perceptual quality and fidelity in nearly all image restoration tasks. The source code and pretrained models are available at: https://github.com/ZhuKeven/MOBOSR.

Hand Hygiene Assessment via Joint Step Segmentation and Key Action Scorer

Sep 25, 2022

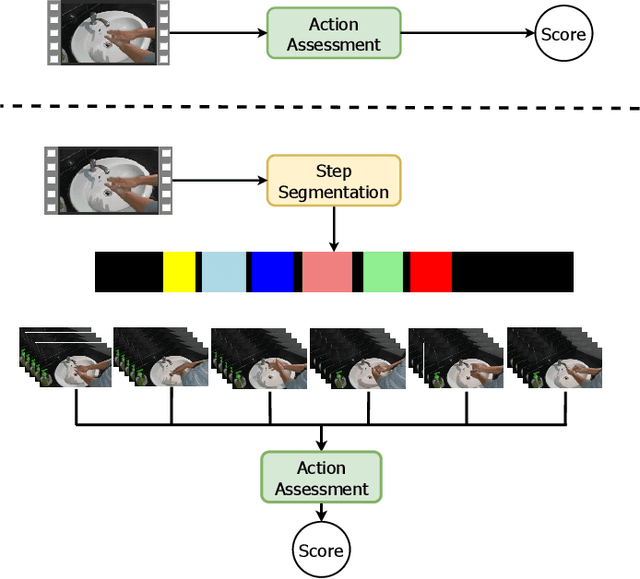

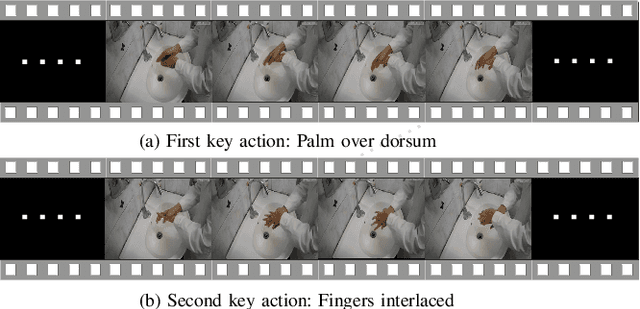

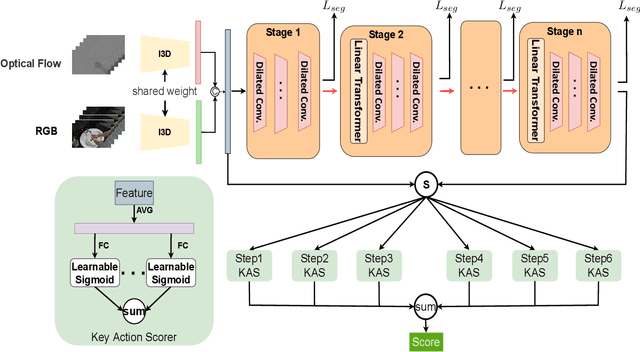

Abstract:Hand hygiene is a standard six-step hand-washing action proposed by the World Health Organization (WHO). However, there is no good way to supervise medical staff to do hand hygiene, which brings the potential risk of disease spread. In this work, we propose a new computer vision task called hand hygiene assessment to provide intelligent supervision of hand hygiene for medical staff. Existing action assessment works usually make an overall quality prediction on an entire video. However, the internal structures of hand hygiene action are important in hand hygiene assessment. Therefore, we propose a novel fine-grained learning framework to perform step segmentation and key action scorer in a joint manner for accurate hand hygiene assessment. Existing temporal segmentation methods usually employ multi-stage convolutional network to improve the segmentation robustness, but easily lead to over-segmentation due to the lack of the long-range dependence. To address this issue, we design a multi-stage convolution-transformer network for step segmentation. Based on the observation that each hand-washing step involves several key actions which determine the hand-washing quality, we design a set of key action scorers to evaluate the quality of key actions in each step. In addition, there lacks a unified dataset in hand hygiene assessment. Therefore, under the supervision of medical staff, we contribute a video dataset that contains 300 video sequences with fine-grained annotations. Extensive experiments on the dataset suggest that our method well assesses hand hygiene videos and achieves outstanding performance.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge