Niall L. Williams

A Framework for Active Haptic Guidance Using Robotic Haptic Proxies

Jan 12, 2023

Abstract: Haptic feedback is an important component of creating an immersive virtual experience. Traditionally, haptic forces are rendered in response to the user's interactions with the virtual environment. In this work, we explore the idea of rendering haptic forces in a proactive manner, with the explicit intention to influence the user's behavior through compelling haptic forces. To this end, we present a framework for active haptic guidance in mixed reality, using one or more robotic haptic proxies to influence user behavior and deliver a safer and more immersive virtual experience. We provide details on common challenges that need to be overcome when implementing active haptic guidance, and discuss example applications that show how active haptic guidance can be used to influence the user's behavior. Finally, we apply active haptic guidance to a virtual reality navigation problem, and conduct a user study that demonstrates how active haptic guidance creates a safer and more immersive experience for users.

Redirected Walking in Static and Dynamic Scenes Using Visibility Polygons

Jun 21, 2021

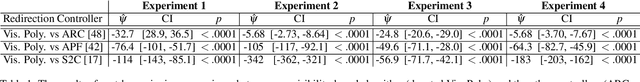

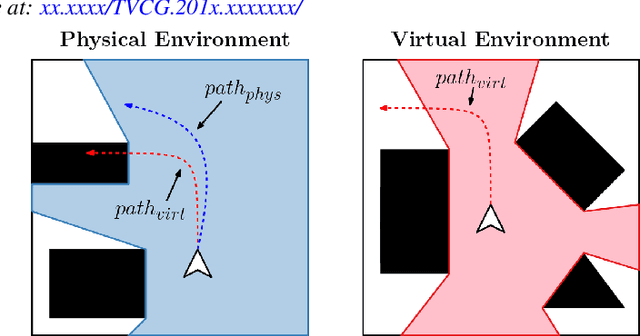

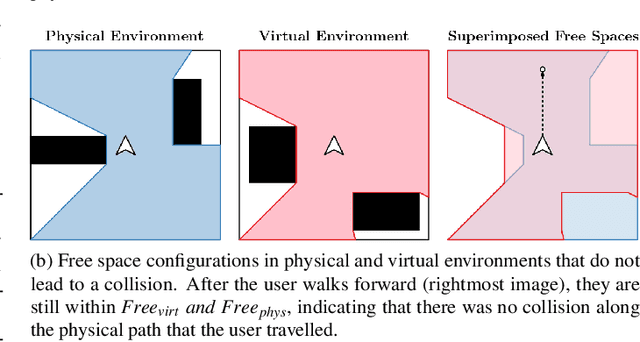

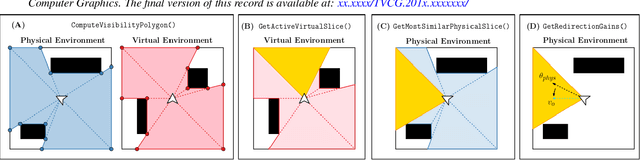

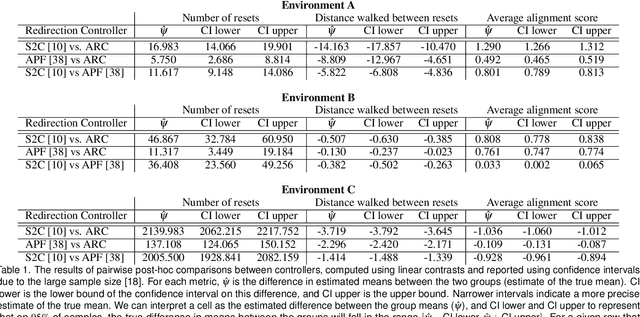

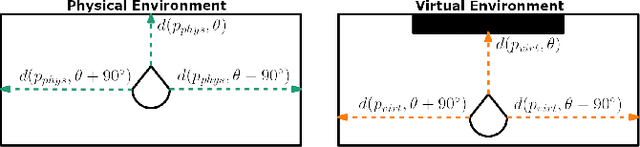

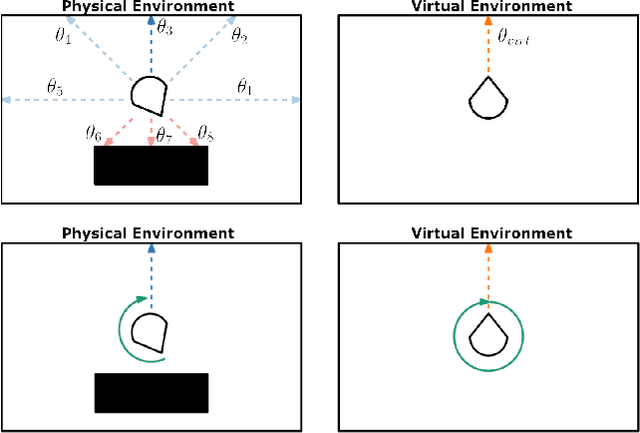

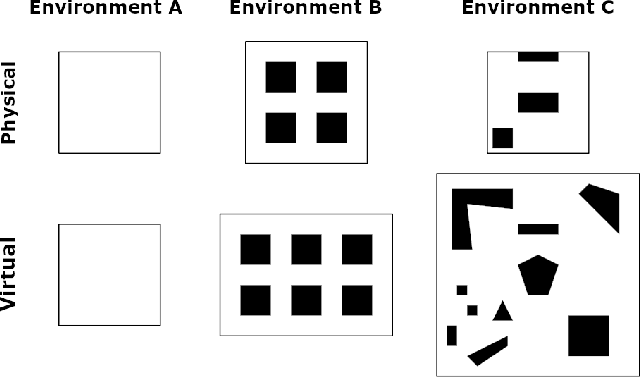

Abstract: We present a new approach for redirected walking in static and dynamic scenes that uses techniques from robot motion planning to compute the redirection gains that steer the user on collision-free paths in the physical space. Our first contribution is a mathematical framework for redirected walking using concepts from motion planning and configuration spaces. This framework highlights various geometric and perceptual constraints that tend to make collision-free redirected walking difficult. We use our framework to propose an efficient solution to the redirection problem that uses the notion of visibility polygons to compute the free spaces in the physical environment and the virtual environment. The visibility polygon provides a concise representation of the entire space that is visible, and therefore walkable, to the user from their position within an environment. Using this representation of walkable space, we apply redirected walking to steer the user to regions of the visibility polygon in the physical environment that closely match the region that the user occupies in the visibility polygon in the virtual environment. We show that our algorithm is able to steer the user along paths that result in significantly fewer resets than existing state-of-the-art algorithms in both static and dynamic scenes.
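
To make the notion of a visibility polygon concrete, the following is a minimal sketch that approximates one by casting evenly spaced rays from the user's position and keeping the nearest wall hit along each ray. It is an illustration only, not the paper's algorithm: an exact visibility polygon is usually computed from the scene's vertices rather than from sampled rays, and the function names, the `EPS` tolerance, and the example room are all assumptions made here.

```python
import math

EPS = 1e-9  # tolerance so rays that graze a wall endpoint still count as hits


def ray_segment_distance(origin, angle, segment):
    """Distance along the ray from `origin` at `angle` to `segment`, or None."""
    (x1, y1), (x2, y2) = segment
    ox, oy = origin
    dx, dy = math.cos(angle), math.sin(angle)
    ex, ey = x2 - x1, y2 - y1
    denom = dx * ey - dy * ex
    if abs(denom) < 1e-12:
        return None  # ray is parallel to the segment
    # Solve origin + t * (dx, dy) == (x1, y1) + u * (ex, ey) for t and u.
    t = ((x1 - ox) * ey - (y1 - oy) * ex) / denom
    u = ((x1 - ox) * dy - (y1 - oy) * dx) / denom
    if t >= 0 and -EPS <= u <= 1 + EPS:
        return t
    return None


def sampled_visibility_polygon(origin, walls, num_rays=360):
    """Approximate the region visible from `origin` as a list of boundary points."""
    ox, oy = origin
    polygon = []
    for i in range(num_rays):
        angle = 2 * math.pi * i / num_rays
        hits = [
            d
            for d in (ray_segment_distance(origin, angle, w) for w in walls)
            if d is not None
        ]
        if not hits:
            continue  # ray escapes; this sketch assumes a bounded room
        nearest = min(hits)
        polygon.append((ox + nearest * math.cos(angle), oy + nearest * math.sin(angle)))
    return polygon


# Hypothetical 4 x 4 room, with and without an interior obstacle wall.
room = [((0, 0), (4, 0)), ((4, 0), (4, 4)), ((4, 4), (0, 4)), ((0, 4), (0, 0))]
obstacle = ((3.0, 1.0), (3.0, 3.0))
open_view = sampled_visibility_polygon((2.0, 2.0), room, num_rays=8)
blocked_view = sampled_visibility_polygon((2.0, 2.0), room + [obstacle], num_rays=8)
```

In this example the first ray points along the positive x-axis, so adding the obstacle pulls the first boundary point in from the room wall to the obstacle. This shrinks the walkable region visible from the user's position.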

ARC: Alignment-based Redirection Controller for Redirected Walking in Complex Environments

Jan 13, 2021

Abstract: We present a novel redirected walking controller based on alignment that allows the user to explore large and complex virtual environments, while minimizing the number of collisions with obstacles in the physical environment. Our alignment-based redirection controller, ARC, steers the user such that their proximity to obstacles in the physical environment matches the proximity to obstacles in the virtual environment as closely as possible. To quantify a controller's performance in complex environments, we introduce a new metric, Complexity Ratio (CR), to measure the relative environment complexity and characterize the difference in navigational complexity between the physical and virtual environments. Through extensive simulation-based experiments, we show that ARC significantly outperforms current state-of-the-art controllers in its ability to steer the user on a collision-free path. We also show through quantitative and qualitative measures of performance that our controller is robust in complex environments with many obstacles. Our method is applicable to arbitrary environments and operates without any user input or parameter tweaking, aside from the layout of the environments. We have implemented our algorithm on the Oculus Quest head-mounted display and evaluated its performance in environments with varying complexity. Our project website is available at https://gamma.umd.edu/arc/.
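
The alignment idea, matching the user's proximity to obstacles in the physical and virtual environments, can be illustrated with a small sketch. It assumes obstacles are given as 2D points and that proximity means the distance to the nearest one. The abstract does not give the controller's actual formulation, so the function names and the mismatch measure below are hypothetical.

```python
import math


def nearest_obstacle_distance(position, obstacles):
    """Distance from a 2D position to the closest obstacle point."""
    return min(math.dist(position, obstacle) for obstacle in obstacles)


def proximity_mismatch(phys_pos, phys_obstacles, virt_pos, virt_obstacles):
    """Absolute difference between physical and virtual obstacle proximity.

    Zero means the two environments are aligned under this simplified,
    assumed measure; larger values mean the user is closer to an obstacle
    in one environment than in the other.
    """
    d_phys = nearest_obstacle_distance(phys_pos, phys_obstacles)
    d_virt = nearest_obstacle_distance(virt_pos, virt_obstacles)
    return abs(d_phys - d_virt)


# Hypothetical layouts: the physical room is tighter than the virtual one.
physical_obstacles = [(0.0, 3.0), (4.0, 0.0)]
virtual_obstacles = [(0.0, 5.0), (10.0, 0.0)]
mismatch = proximity_mismatch(
    (0.0, 0.0), physical_obstacles, (0.0, 0.0), virtual_obstacles
)
```

A controller built on this kind of measure would prefer steering decisions that reduce the mismatch over time.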

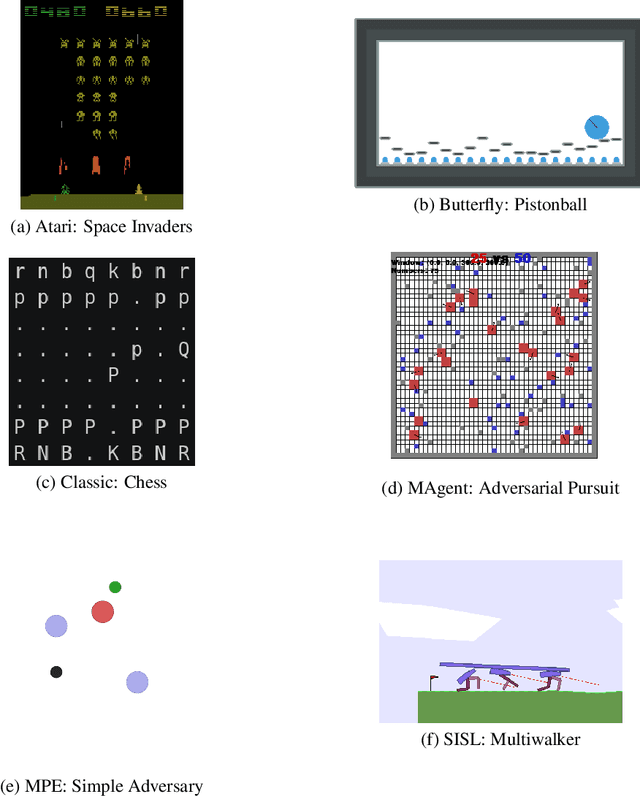

PettingZoo: Gym for Multi-Agent Reinforcement Learning

Sep 30, 2020

Abstract: OpenAI's Gym library contains a large, diverse set of environments that are useful benchmarks in reinforcement learning, under a single elegant Python API (with tools to develop new compliant environments). The introduction of this library has proven a watershed moment for the reinforcement learning community, because it created an accessible set of benchmark environments that everyone could use (including wrappers around important existing libraries), and because a standardized API let RL methods and environments from anywhere be trivially exchanged. This paper similarly introduces PettingZoo, a library containing a diverse set of multi-agent environments under a single elegant Python API, with tools to easily make new compliant environments.
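
The benefit of a standardized API can be sketched with a toy example. The code below is not PettingZoo's or Gym's actual API: the `CountingGame` class, its methods, and the policy functions are hypothetical names invented here. It only illustrates how a shared environment interface lets any policy be swapped in without changing the episode loop.

```python
import random


class CountingGame:
    """Toy two-agent, turn-based environment (hypothetical, for illustration).

    Agents alternately add 1 or 2 to a running total; whoever reaches
    the target first receives a reward of 1.
    """

    def __init__(self, target=5):
        self.agents = ["player_0", "player_1"]
        self.target = target
        self.reset()

    def reset(self):
        self.total = 0
        self.turn = 0
        self.done = False
        self.rewards = {agent: 0 for agent in self.agents}

    def current_agent(self):
        return self.agents[self.turn % len(self.agents)]

    def observe(self):
        return self.total

    def step(self, action):
        agent = self.current_agent()
        self.total += action
        if self.total >= self.target:
            self.rewards[agent] = 1
            self.done = True
        self.turn += 1


def random_policy(observation, rng):
    return rng.choice([1, 2])


def always_one_policy(observation, rng):
    return 1


def run_episode(env, policies, seed=0):
    """Generic loop: works for any env and policies that follow the interface."""
    rng = random.Random(seed)
    env.reset()
    while not env.done:
        agent = env.current_agent()
        env.step(policies[agent](env.observe(), rng))
    return dict(env.rewards)


rewards = run_episode(
    CountingGame(target=5),
    {"player_0": always_one_policy, "player_1": always_one_policy},
)
```

Because `run_episode` depends only on the interface, replacing `always_one_policy` with `random_policy`, or `CountingGame` with another environment exposing the same methods, requires no change to the loop.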
