Justin K. Terry

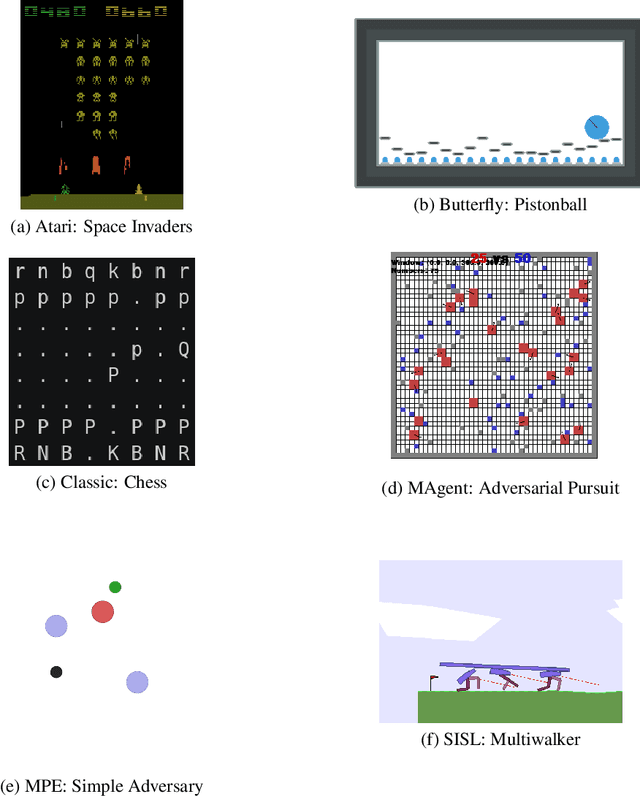

PettingZoo: Gym for Multi-Agent Reinforcement Learning

Sep 30, 2020

Abstract: OpenAI's Gym library contains a large, diverse set of environments that are useful benchmarks in reinforcement learning, under a single elegant Python API (with tools to develop new compliant environments). The introduction of this library has proven a watershed moment for the reinforcement learning community, because it created an accessible set of benchmark environments that everyone could use (including wrappers for important existing libraries), and because a standardized API let RL methods and environments from anywhere be trivially exchanged. This paper similarly introduces PettingZoo, a library of diverse multi-agent environments under a single elegant Python API, with tools to easily make new compliant environments.
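The point about a standardized API can be illustrated with a minimal sketch. It assumes a hypothetical dictionary-based, simultaneous-action interface; `MultiAgentEnv`, `CountingEnv`, and `run_episode` are illustrative names, not PettingZoo's actual API. Any environment exposing the same `reset`/`step` signatures can be driven by the same loop, so learning code and environments can be swapped independently.

```python
from typing import Any, Callable, Dict, List, Protocol, Tuple


class MultiAgentEnv(Protocol):
    """Hypothetical minimal multi-agent interface (not PettingZoo's actual API)."""

    agents: List[str]

    def reset(self) -> Dict[str, Any]: ...

    def step(self, actions: Dict[str, Any]) -> Tuple[Dict[str, Any], Dict[str, float], bool]: ...


class CountingEnv:
    """Toy env: every agent observes the step count; reward equals the agent's action."""

    def __init__(self, agents: List[str], horizon: int = 3) -> None:
        self.agents = list(agents)
        self.horizon = horizon
        self.t = 0

    def reset(self) -> Dict[str, Any]:
        self.t = 0
        return {a: self.t for a in self.agents}

    def step(self, actions: Dict[str, Any]) -> Tuple[Dict[str, Any], Dict[str, float], bool]:
        self.t += 1
        obs = {a: self.t for a in self.agents}
        rewards = {a: float(actions[a]) for a in self.agents}
        done = self.t >= self.horizon  # episode ends after `horizon` steps
        return obs, rewards, done


def run_episode(env: MultiAgentEnv, policy: Callable[[str, Any], Any]) -> Dict[str, float]:
    """Generic loop: works with any env that follows the interface above."""
    obs = env.reset()
    totals = {a: 0.0 for a in env.agents}
    done = False
    while not done:
        # Each agent picks an action from its own observation.
        actions = {a: policy(a, obs[a]) for a in env.agents}
        obs, rewards, done = env.step(actions)
        for a, r in rewards.items():
            totals[a] += r
    return totals
```

For example, `run_episode(CountingEnv(["a", "b"]), lambda agent, o: 1)` returns `{"a": 3.0, "b": 3.0}`, and the same `run_episode` would drive any other environment written against the same interface.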

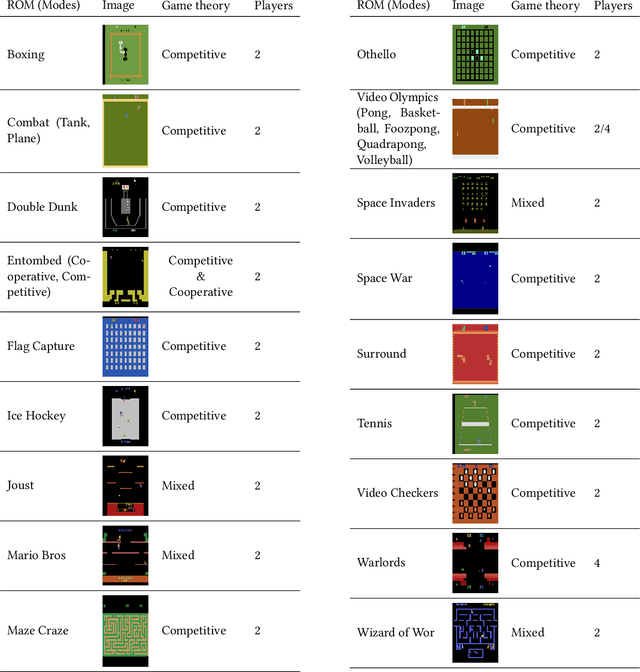

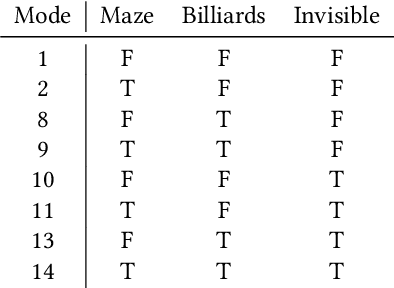

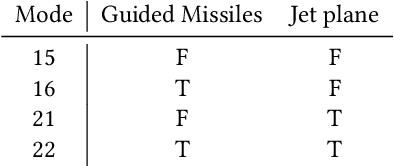

Multiplayer Support for the Arcade Learning Environment

Sep 20, 2020

Abstract: The Arcade Learning Environment ("ALE") is a widely used library in the reinforcement learning community that allows easy programmatic interfacing with Atari 2600 games via the Stella emulator. We introduce a publicly available extension to the ALE that adds support for multiplayer games and game modes. This interface is additionally integrated with PettingZoo, allowing simple, Gym-like interaction with these games in Python.

SuperSuit: Simple Microwrappers for Reinforcement Learning Environments

Aug 17, 2020

Abstract: In reinforcement learning, wrappers are universally used to transform the information that passes between a model and an environment. Despite their ubiquity, no library exists with reasonable implementations of all popular preprocessing methods. This leads to unnecessary bugs, code inefficiencies, and wasted developer time. Accordingly, we introduce SuperSuit, a Python library that includes all popular wrappers, as well as wrappers that can easily apply lambda functions to the observations, actions, or rewards. It is compatible with the standard Gym environment specification, as well as the PettingZoo specification for multi-agent environments. The library is available at https://github.com/PettingZoo-Team/SuperSuit and can be installed via pip.
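The idea of a wrapper that applies lambda functions to observations, actions, and rewards can be sketched in a few lines. This is a minimal illustration under stated assumptions: `ToyEnv` and `LambdaWrapper` are hypothetical names with a simplified `(obs, reward, done)` step signature, not SuperSuit's actual functions or the Gym API.

```python
from typing import Any, Callable, Tuple


class ToyEnv:
    """Hypothetical single-agent env: observation is the step index, reward is the action."""

    def __init__(self) -> None:
        self.t = 0

    def reset(self) -> int:
        self.t = 0
        return self.t

    def step(self, action: float) -> Tuple[int, float, bool]:
        self.t += 1
        return self.t, float(action), self.t >= 3  # episode ends after 3 steps


class LambdaWrapper:
    """Hypothetical wrapper: applies user-supplied functions to obs, actions, and rewards."""

    def __init__(
        self,
        env: Any,
        obs_fn: Callable[[Any], Any] = lambda o: o,
        action_fn: Callable[[Any], Any] = lambda a: a,
        reward_fn: Callable[[float], float] = lambda r: r,
    ) -> None:
        self.env = env
        self.obs_fn = obs_fn
        self.action_fn = action_fn
        self.reward_fn = reward_fn

    def reset(self) -> Any:
        # Transform the initial observation on the way out.
        return self.obs_fn(self.env.reset())

    def step(self, action: Any) -> Tuple[Any, float, bool]:
        # Transform the action on the way in, then obs and reward on the way out.
        obs, reward, done = self.env.step(self.action_fn(action))
        return self.obs_fn(obs), self.reward_fn(reward), done
```

Because a wrapped environment exposes the same `reset`/`step` methods as the original, wrappers stack: `LambdaWrapper(LambdaWrapper(ToyEnv(), obs_fn=lambda o: o + 1), obs_fn=lambda o: o * 2)` applies the inner function first, so its `reset()` returns `2`.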

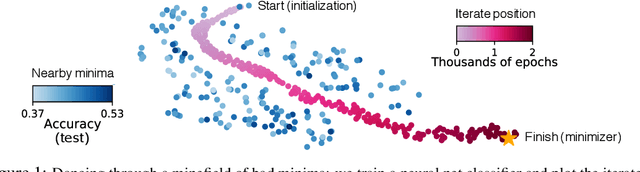

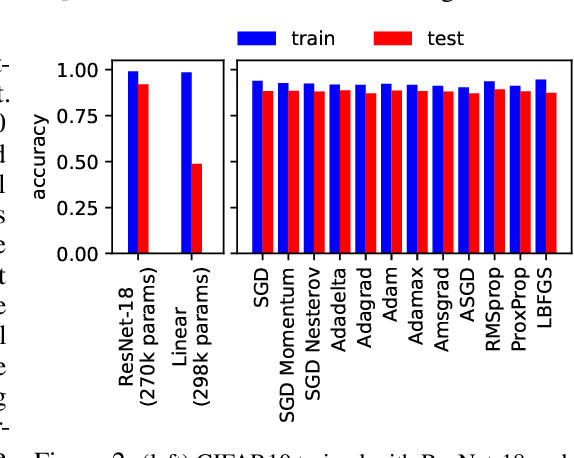

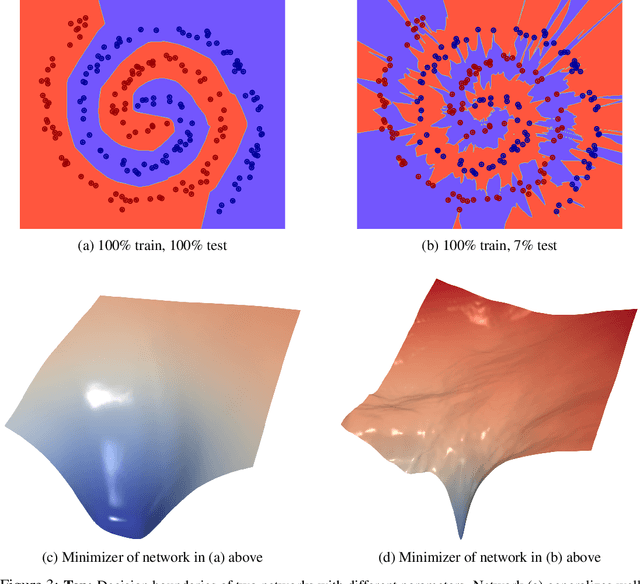

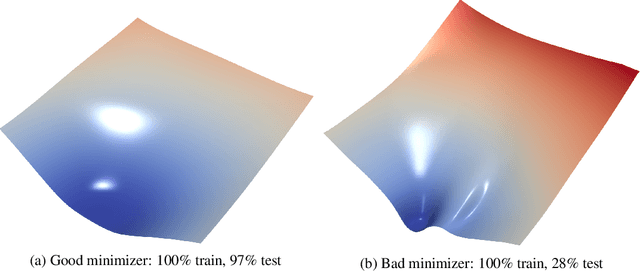

Understanding Generalization through Visualizations

Jul 16, 2019

Abstract: The power of neural networks lies in their ability to generalize to unseen data, yet the underlying reasons for this phenomenon remain elusive. Numerous rigorous attempts have been made to explain generalization, but available bounds are still quite loose, and analysis does not always lead to true understanding. The goal of this work is to make generalization more intuitive. Using visualization methods, we discuss the mystery of generalization, the geometry of loss landscapes, and how the curse (or, rather, the blessing) of dimensionality causes optimizers to settle into minima that generalize well.
