Natawut Monaikul

Enhancing Table Representations with LLM-powered Synthetic Data Generation

Nov 04, 2024

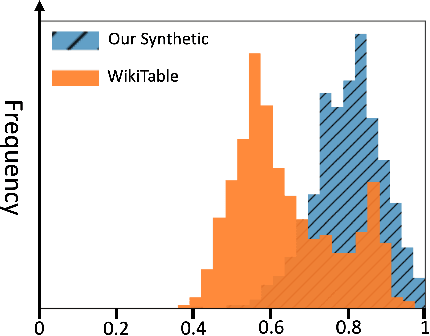

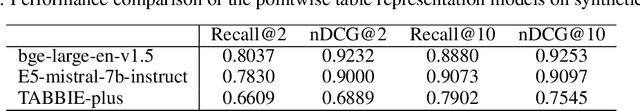

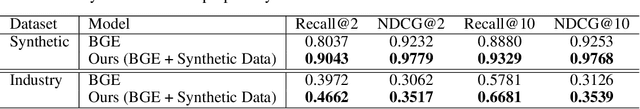

Abstract: In the era of data-driven decision-making, accurate table-level representations and efficient table recommendation systems are becoming increasingly crucial for improving table management, discovery, and analysis. However, existing approaches to tabular data representation often face limitations, primarily due to their focus on cell-level tasks and the lack of high-quality training data. To address these challenges, we first formulate a clear definition of table similarity in the context of data transformation activities within data-driven enterprises. This definition serves as the foundation for synthetic data generation, which requires a well-defined data generation process. Building on this, we propose a novel synthetic data generation pipeline that harnesses the code generation and data manipulation capabilities of Large Language Models (LLMs) to create a large-scale synthetic dataset tailored for table-level representation learning. Through manual validation and performance comparisons on the table recommendation task, we demonstrate that the synthetic data generated by our pipeline aligns with our proposed definition of table similarity and significantly enhances table representations, leading to improved recommendation performance.

An End-to-End Human Simulator for Task-Oriented Multimodal Human-Robot Collaboration

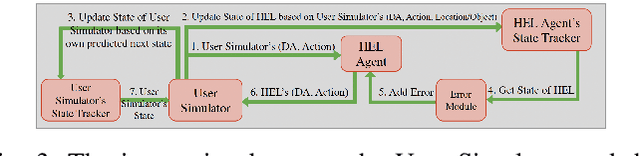

Apr 02, 2023

Abstract: This paper proposes a neural network-based user simulator that can provide a multimodal interactive environment for training Reinforcement Learning (RL) agents in collaborative tasks involving multiple modes of communication. The simulator is trained on the existing ELDERLY-AT-HOME corpus and accommodates multiple modalities such as language, pointing gestures, and haptic-ostensive actions. The paper also presents a novel multimodal data augmentation approach, which addresses the challenge of using a limited dataset due to the expensive and time-consuming nature of collecting human demonstrations. Overall, the study highlights the potential for using RL and multimodal user simulators in developing and improving domestic assistive robots.

Multimodal Reinforcement Learning for Robots Collaborating with Humans

Mar 13, 2023

Abstract: Robot assistants for older adults and people with disabilities need to interact with their users in collaborative tasks. The core component of these systems is an interaction manager whose job is to observe and assess the task, and to infer the state of the human and their intent, in order to choose the best course of action for the robot. Due to the sparseness of the data in this domain, the policy for such multimodal systems is often crafted by hand; as the complexity of interactions grows, this process does not scale. In this paper, we propose a reinforcement learning (RL) approach to learn the robot policy. In contrast to dialog systems, our agent is trained with a simulator developed using human data and can deal with multiple modalities such as language and physical actions. We conducted a human study to evaluate the performance of the system in interaction with a user. Our system shows promising preliminary results when used by a real user.

Evaluating Multimodal Interaction of Robots Assisting Older Adults

Dec 20, 2022

Abstract: We outline our work on evaluating robots that assist older adults by engaging with them through multiple modalities that include physical interaction. Our thesis is that to increase the effectiveness of assistive robots: 1) robots need to understand and effect multimodal actions, and 2) robots should not only react to the human but also take the initiative and lead the task when necessary. We start by briefly introducing our proposed framework for multimodal interaction and then describe two different experiments with actual robots. In the first experiment, a Baxter robot helps a human find and locate an object using the Multimodal Interaction Manager (MIM) framework. In the second experiment, a NAO robot is used in the same task; however, the roles of the robot and the human are reversed. We discuss the evaluation methods that were used in these experiments, including the different metrics employed to characterize the performance of the robot in each case. We conclude by providing our perspective on the challenges and opportunities for the evaluation of assistive robots for older adults in realistic settings.
