Namyoon Lee

Fundamental Limits of CSI Compression in FDD Massive MIMO

Mar 15, 2026

Abstract:Channel state information (CSI) feedback in frequency-division duplex (FDD) massive multiple-input multiple-output (MIMO) systems is fundamentally limited by the high dimensionality of wideband channels. In this paper, we model the stacked wideband CSI vector as a Gaussian-mixture source with a latent geometry state that represents different propagation environments. Each component corresponds to a locally stationary regime characterized by a correlated proper complex Gaussian distribution with its own covariance matrix. This representation captures the multimodal nature of practical CSI datasets while preserving the analytical tractability of Gaussian models. Motivated by this structure, we propose Gaussian-mixture transform coding (GMTC), a practical CSI feedback architecture that combines state inference with state-adaptive transform coding (TC). The mixture parameters are learned offline from channel samples and stored as a shared statistical dictionary at both the user equipment (UE) and the base station. For each CSI realization, the UE identifies the most likely geometry state, encodes the corresponding label using a lossless source code, and compresses the CSI using the Karhunen-Loève transform matched to that state. We further characterize the fundamental limits of CSI compression under this model by deriving analytical converse and achievability bounds on the rate-distortion (RD) function. A key structural result is that the optimal bit allocation across all mixture components is governed by a single global reverse-waterfilling level. Simulations on the COST2100 dataset show that GMTC significantly improves the RD tradeoff relative to neural transform coding approaches while requiring substantially smaller model memory and lower inference complexity. These results indicate that near-optimal CSI compression can be achieved through state-adaptive TC without relying on large neural encoders.
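To make the "single global reverse-waterfilling level" concrete, the following is a minimal sketch, not the paper's derivation. It assumes the geometry state is known, each component is a proper complex Gaussian described by the eigenvalues of its covariance matrix, and distortion is mean squared error averaged over components. The function names, the bisection search, and the toy numbers are all illustrative assumptions.

```python
import math

def global_water_level(weights, eigenvalues, target_distortion, iters=100):
    """Bisect for one level theta shared by all components such that
    sum_k w_k * sum_i min(theta, lam_ki) equals the target distortion.
    (Hypothetical helper; assumes target_distortion > 0.)"""
    def distortion(theta):
        return sum(w * sum(min(theta, lam) for lam in lams)
                   for w, lams in zip(weights, eigenvalues))

    lo, hi = 0.0, max(max(lams) for lams in eigenvalues)
    if target_distortion >= distortion(hi):
        return hi  # every eigenmode is dropped; the rate is zero
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if distortion(mid) < target_distortion:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)

def rate_bits(weights, eigenvalues, theta):
    """Average rate in bits: log2(lam / theta) per active complex eigenmode
    (no factor 1/2 because the components are proper complex Gaussian)."""
    return sum(w * sum(max(0.0, math.log2(lam / theta)) for lam in lams)
               for w, lams in zip(weights, eigenvalues))

# Toy example: two equally likely states with two eigenmodes each.
weights = [0.5, 0.5]
eigenvalues = [[4.0, 1.0], [2.0, 0.5]]
theta = global_water_level(weights, eigenvalues, target_distortion=1.5)
rate = rate_bits(weights, eigenvalues, theta)
```

In this toy case the weakest eigenmode (0.5) falls below the common level and receives no bits, while the other three modes share the same distortion level regardless of which state they belong to. A practical scheme would also spend bits on the state label, which this sketch ignores.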

Scalable and Convergent Generalized Power Iteration Precoding for Massive MIMO Systems

Mar 04, 2026

Abstract:In massive multiple-input multiple-output (MIMO) systems, achieving high spectral efficiency (SE) often requires advanced precoding algorithms whose complexity scales rapidly with the number of antennas, limiting practical deployment. In this paper, we develop a scalable and computationally efficient generalized power iteration precoding (GPIP) framework for massive MIMO systems under both perfect and imperfect channel state information at the transmitter (CSIT). By exploiting the low-dimensional subspace property of optimal precoders, we reformulate the high-dimensional beamforming problem into a lower-dimensional weight optimization that scales with the number of users rather than antennas. We further extend this framework to the imperfect CSIT scenario by showing that stationary solutions reside in a combined subspace spanned by the estimated channel and error covariance matrices, enabling a robust design via low-rank approximation. To reduce computational cost, we leverage the Sherman-Morrison formula to simplify matrix inversions. Moreover, interpreting the GPIP update as a projected preconditioned gradient ascent method, we establish convergence guarantees and develop a stable and monotonic algorithm using a backtracking line search. Numerical results demonstrate that the proposed methods achieve higher SE than state-of-the-art linear precoders with significantly lower complexity and faster convergence, highlighting their suitability for large-scale MIMO systems.
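For readers unfamiliar with the Sherman-Morrison formula mentioned above, here is a minimal sketch of the generic identity (A + u v^T)^{-1} = A^{-1} - (A^{-1} u v^T A^{-1}) / (1 + v^T A^{-1} u). It is not the GPIP algorithm itself, and the abstract does not say which matrices the formula is applied to. The function name is hypothetical, and the code assumes real-valued inputs stored as nested lists; a complex-valued version would use the conjugate transpose of v.

```python
def sherman_morrison(a_inv, u, v):
    """Return (A + u v^T)^{-1} given A^{-1}, using O(n^2) operations
    instead of a fresh O(n^3) inversion. Real-valued inputs assumed."""
    n = len(u)
    # A^{-1} u (column vector) and v^T A^{-1} (row vector)
    a_inv_u = [sum(a_inv[i][j] * u[j] for j in range(n)) for i in range(n)]
    vt_a_inv = [sum(v[i] * a_inv[i][j] for i in range(n)) for j in range(n)]
    denom = 1.0 + sum(v[i] * a_inv_u[i] for i in range(n))
    if abs(denom) < 1e-12:
        raise ValueError("A + u v^T is singular or nearly singular")
    return [[a_inv[i][j] - a_inv_u[i] * vt_a_inv[j] / denom
             for j in range(n)] for i in range(n)]

# Toy example: A = I, so A + u v^T = diag(2, 1).
identity = [[1.0, 0.0], [0.0, 1.0]]
updated_inv = sherman_morrison(identity, [1.0, 0.0], [1.0, 0.0])
```

The saving comes from reusing a known inverse whenever the matrix changes by a rank-one term, which is the usual reason the identity appears in iterative precoding updates.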

Multipoint Code-Weight Sphere Decoding: Parallel Near-ML Decoding for Short-Blocklength Codes

Feb 09, 2026

Abstract:Ultra-reliable low-latency communications (URLLC) operate with short packets, where finite-blocklength effects make near-maximum-likelihood (near-ML) decoding desirable but often too costly. This paper proposes a two-stage near-ML decoding framework that applies to any linear block code. In the first stage, we run a low-complexity decoder to produce a candidate codeword and verify it with a cyclic redundancy check. When this stage succeeds, we terminate immediately. When it fails, we invoke a second-stage decoder, termed multipoint code-weight sphere decoding (MP-WSD). The central idea behind MP-WSD is to concentrate the ML search where it matters. We pre-compute a set of low-weight codewords and use them to generate structured local perturbations of the current estimate. Starting from the first-stage output, MP-WSD iteratively explores a small Euclidean sphere of candidate codewords formed by adding selected low-weight codewords, tightening the search region as better candidates are found. This design keeps the average complexity low: at high signal-to-noise ratio, the first stage succeeds with high probability and the second stage is rarely activated; when it is activated, the search remains localized. Simulation results show that the proposed decoder attains near-ML performance for short-blocklength, low-rate codes while maintaining low decoding latency.

Hierarchical Subcode Ensemble Decoding of Polar Codes

Feb 09, 2026

Abstract:Subcode-ensemble decoders improve iterative decoding by running multiple decoders in parallel over carefully chosen subcodes, increasing the likelihood that at least one decoder avoids the dominant trapping structures. Achieving strong diversity gains, however, requires constructing many subcodes that satisfy a linear covering property, yet existing approaches lack a systematic way to scale the ensemble size while preserving this property. This paper introduces hierarchical subcode ensemble decoding (HSCED), a new ensemble decoding framework that expands the number of constituent decoders while still guaranteeing linear covering. The key idea is to recursively generate subcode parity constraints in a hierarchical structure so that coverage is maintained at every level, enabling large ensembles with controlled complexity. To demonstrate its effectiveness, we apply HSCED to belief propagation (BP) decoding of polar codes, where dense parity-check matrices induce severe stopping-set effects that limit conventional BP. Simulations confirm that HSCED delivers significant block-error-rate improvements over standard BP and conventional subcode-ensemble decoding under the same decoding-latency constraint.

The MIMO-ME-MS Channel: Analysis and Algorithm for Secure MIMO Integrated Sensing and Communications

Dec 24, 2025

Abstract:This paper studies precoder design for secure MIMO integrated sensing and communications (ISAC) by introducing the MIMO-ME-MS channel, where a multi-antenna transmitter serves a legitimate multi-antenna receiver in the presence of a multi-antenna eavesdropper while simultaneously enabling sensing via a multi-antenna sensing receiver. Using sensing mutual information as the sensing metric, we formulate a nonconvex weighted objective that jointly captures secure communication (via secrecy rate) and sensing performance. A high-SNR analysis based on subspace decomposition characterizes the maximum achievable weighted degrees of freedom and reveals that a quasi-optimal precoder must span a "useful subspace," highlighting why straightforward extensions of classical wiretap/ISAC precoders can be suboptimal in this tripartite setting. Motivated by these insights, we develop a practical two-stage iterative algorithm that alternates between sequential basis construction and power allocation via a difference-of-convex program. Numerical results show that the proposed approach captures the desirable precoding structure predicted by the analysis and yields substantial gains in the MIMO-ME-MS channel.

Code-Weight Sphere Decoding

Aug 28, 2025

Abstract:Ultra-reliable low-latency communications (URLLC) demand high-performance error-correcting codes and decoders in the finite blocklength regime. This letter introduces a novel two-stage near-maximum likelihood (near-ML) decoding framework applicable to any linear block code. Our approach first employs a low-complexity initial decoder. If this initial stage fails a cyclic redundancy check, it triggers a second stage: the proposed code-weight sphere decoding (WSD). WSD iteratively refines the codeword estimate by exploring a localized sphere of candidates constructed from pre-computed low-weight codewords. This strategy adaptively minimizes computational overhead at high signal-to-noise ratios while achieving near-ML performance, especially for low-rate codes. Extensive simulations demonstrate that our two-stage decoder provides an excellent trade-off between decoding reliability and complexity, establishing it as a promising solution for next-generation URLLC systems.

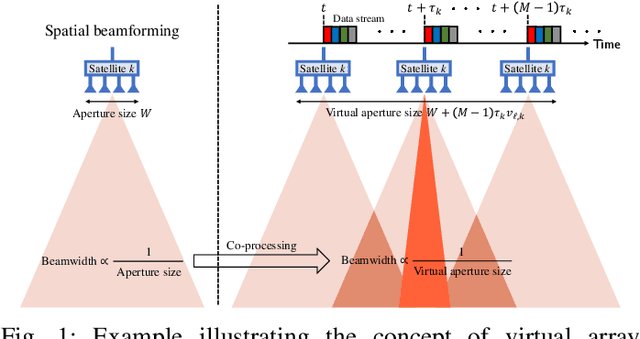

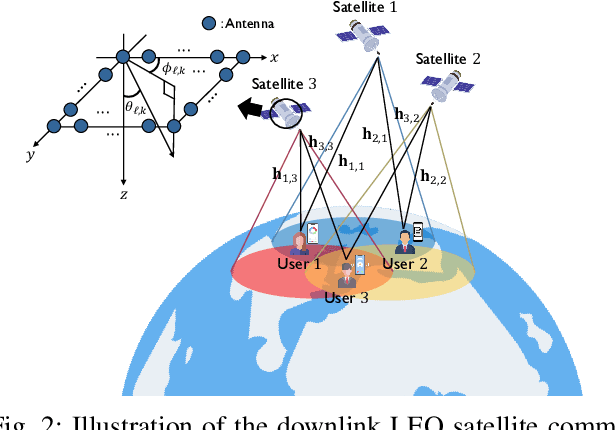

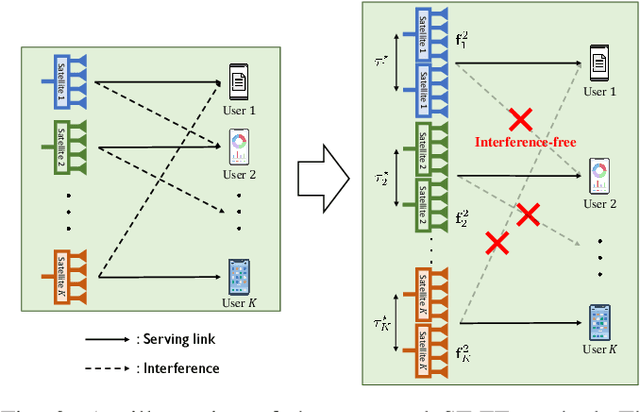

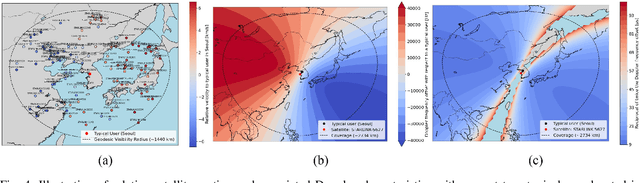

Space-Time Beamforming for LEO Satellite Communications

May 12, 2025

Abstract:Inter-beam interference poses a significant challenge in low Earth orbit (LEO) satellite communications due to dense satellite constellations. To address this issue, we introduce space-time beamforming, a novel paradigm that leverages the space-time channel vector, uniquely determined by the angle of arrival (AoA) and relative Doppler shift, to optimize beamforming between a moving satellite transmitter and a ground station user. We propose two space-time beamforming techniques: space-time zero-forcing (ST-ZF) and space-time signal-to-leakage-plus-noise ratio (ST-SLNR) maximization. In a partially connected interference channel, ST-ZF achieves a 3 dB SNR gain over the conventional interference avoidance method using maximum ratio transmission beamforming. Moreover, in general interference networks, ST-SLNR beamforming significantly enhances sum spectral efficiency compared to conventional interference management approaches. These results demonstrate the effectiveness of space-time beamforming in improving spectral efficiency and interference mitigation for next-generation LEO satellite networks.

Rate-Matching Deep Polar Codes via Polar Coded Extension

May 11, 2025

Abstract:Deep polar codes are pre-transformed polar codes that employ a multi-layered polar kernel transformation strategy to enhance code performance in short blocklength regimes. However, like conventional polar codes, their block length is constrained to powers of two, as the final transformation layer uses a conventional polar kernel matrix. This paper introduces a novel rate-matching technique for deep polar codes using code extension, particularly effective when the desired code length slightly exceeds a power of two. The key idea is to exploit the layered structure of deep polar codes by concatenating polar codewords generated at each transformation layer. Based on this structure, we also develop an efficient decoding algorithm leveraging soft-output successive cancellation list decoding and provide comprehensive error probability analysis supporting our code design algorithms. Additionally, we propose a computationally efficient greedy algorithm for multi-layer configurations. Extensive simulations confirm that our approach delivers substantial coding gains over conventional rate-matching methods, especially in medium to high code-rate regimes.

Robust Deep Joint Source Channel Coding for Task-Oriented Semantic Communications

Mar 17, 2025

Abstract:Semantic communications based on deep joint source-channel coding (JSCC) aim to improve communication efficiency by transmitting only task-relevant information. However, ensuring robustness to the stochasticity of communication channels remains a key challenge in learning-based JSCC. In this paper, we propose a novel regularization technique for learning-based JSCC to enhance robustness against channel noise. The proposed method utilizes the Kullback-Leibler (KL) divergence as a regularizer term in the training loss, measuring the discrepancy between two posterior distributions: one under noisy channel conditions (noisy posterior) and one for a noise-free system (noise-free posterior). Reducing this KL divergence mitigates the impact of channel noise on task performance by keeping the noisy posterior close to the noise-free posterior. We further show that the expectation of the KL divergence given the encoded representation can be analytically approximated using the Fisher information matrix and the covariance matrix of the channel noise. Notably, the proposed regularization is architecture-agnostic, making it broadly applicable to general semantic communication systems over noisy channels. Our experimental results validate that the proposed regularization consistently improves task performance across diverse semantic communication systems and channel conditions.
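The role of the Fisher information matrix can be illustrated with the standard second-order expansion of the KL divergence. This is a sketch under stated assumptions and not necessarily the exact expression derived in the paper: the encoded representation $z$ is real-valued, the channel noise $n$ is additive with zero mean and covariance $\Sigma$, and the perturbation is small enough that third-order terms can be neglected. The symbols $z$, $n$, $\Sigma$, $F(z)$, and $p(y \mid z)$ are notation introduced here for illustration.

```latex
% Second-order expansion of the KL divergence around the noise-free input z;
% the first-order term vanishes, leaving a quadratic form in the noise n.
D_{\mathrm{KL}}\!\left( p(y \mid z) \,\middle\|\, p(y \mid z + n) \right)
  \approx \tfrac{1}{2}\, n^{\top} F(z)\, n,
\qquad
F(z) = \mathbb{E}_{y \sim p(\cdot \mid z)}\!\left[
  \nabla_z \log p(y \mid z)\, \nabla_z \log p(y \mid z)^{\top} \right].

% Averaging over zero-mean noise with covariance \Sigma:
\mathbb{E}_{n}\!\left[ D_{\mathrm{KL}}\!\left( p(y \mid z) \,\middle\|\, p(y \mid z + n) \right) \right]
  \approx \tfrac{1}{2}\, \operatorname{tr}\!\left( F(z)\, \Sigma \right).
```

Under these assumptions the regularizer depends on the noise only through its covariance, which is consistent with the abstract's statement that the expectation can be approximated from the Fisher information matrix and the noise covariance matrix.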

Transformer-Based Nonlinear Transform Coding for Multi-Rate CSI Compression in MIMO-OFDM Systems

Feb 27, 2025

Abstract:We propose a novel approach for channel state information (CSI) compression in multiple-input multiple-output orthogonal frequency division multiplexing (MIMO-OFDM) systems, where the frequency-domain channel matrix is treated as a high-dimensional complex-valued image. Our method leverages transformer-based nonlinear transform coding (NTC), an advanced deep-learning-driven image compression technique that generates a highly compact binary representation of the CSI. Unlike conventional autoencoder-based CSI compression, NTC optimizes a nonlinear mapping to produce a latent vector while simultaneously estimating its probability distribution for efficient entropy coding. By exploiting the statistical independence of latent vector entries, we integrate a transformer-based deep neural network with a scalar nested-lattice uniform quantization scheme, enabling low-complexity, multi-rate CSI feedback that dynamically adapts to varying feedback channel conditions. The proposed multi-rate CSI compression scheme achieves state-of-the-art rate-distortion performance, outperforming existing techniques with the same number of neural network parameters. Simulation results further demonstrate that our approach provides a superior rate-distortion trade-off, requiring only 6% of the neural network parameters compared to existing methods, making it highly efficient for practical deployment.
