Moisés Santos

Exploring Transformer Placement in Variational Autoencoders for Tabular Data Generation

Jan 28, 2026Abstract:Tabular data remains a challenging domain for generative models. In particular, the standard Variational Autoencoder (VAE) architecture, typically composed of multilayer perceptrons, struggles to model relationships between features, especially when handling mixed data types. In contrast, Transformers, through their attention mechanism, are better suited for capturing complex feature interactions. In this paper, we empirically investigate the impact of integrating Transformers into different components of a VAE. We conduct experiments on 57 datasets from the OpenML CC18 suite and draw two main conclusions. First, results indicate that positioning Transformers to leverage latent and decoder representations leads to a trade-off between fidelity and diversity. Second, we observe a high similarity between consecutive blocks of a Transformer in all components. In particular, in the decoder, the relationship between the input and output of a Transformer is approximately linear.

Tabular data generation with tensor contraction layers and transformers

Dec 06, 2024

Abstract:Generative modeling for tabular data has recently gained significant attention in the Deep Learning domain. Its objective is to estimate the underlying distribution of the data. However, estimating the underlying distribution of tabular data has its unique challenges. Specifically, this data modality is composed of mixed types of features, making it a non-trivial task for a model to learn intra-relationships between them. One approach to address mixture is to embed each feature into a continuous matrix via tokenization, while a solution to capture intra-relationships between variables is via the transformer architecture. In this work, we empirically investigate the potential of using embedding representations on tabular data generation, utilizing tensor contraction layers and transformers to model the underlying distribution of tabular data within Variational Autoencoders. Specifically, we compare four architectural approaches: a baseline VAE model, two variants that focus on tensor contraction layers and transformers respectively, and a hybrid model that integrates both techniques. Our empirical study, conducted across multiple datasets from the OpenML CC18 suite, compares models over density estimation and Machine Learning efficiency metrics. The main takeaway from our results is that leveraging embedding representations with the help of tensor contraction layers improves density estimation metrics, albeit maintaining competitive performance in terms of machine learning efficiency.

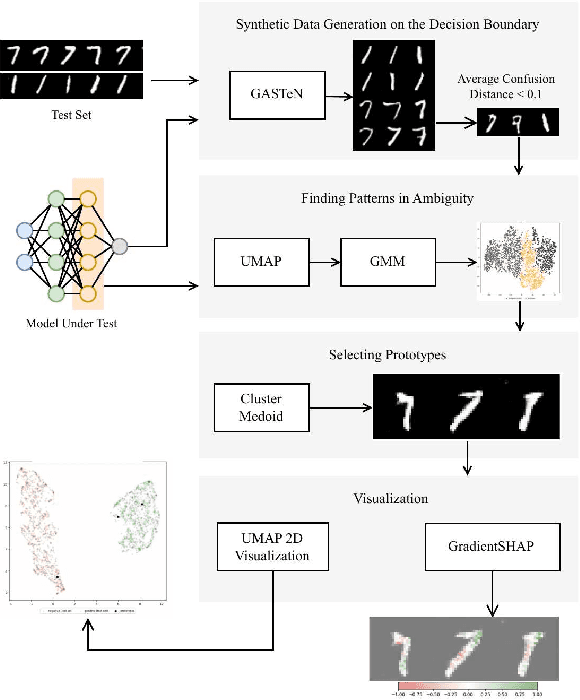

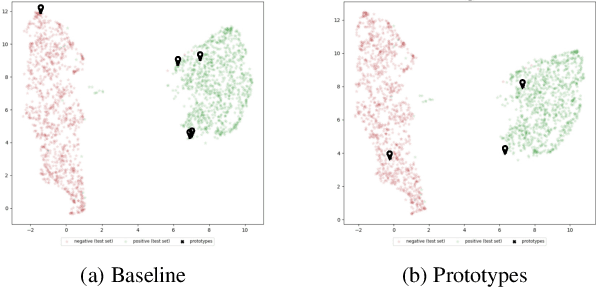

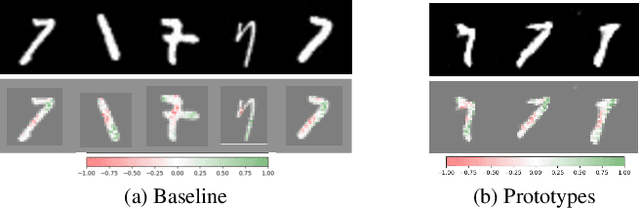

Finding Patterns in Ambiguity: Interpretable Stress Testing in the Decision~Boundary

Aug 12, 2024

Abstract:The increasing use of deep learning across various domains highlights the importance of understanding the decision-making processes of these black-box models. Recent research focusing on the decision boundaries of deep classifiers, relies on generated synthetic instances in areas of low confidence, uncovering samples that challenge both models and humans. We propose a novel approach to enhance the interpretability of deep binary classifiers by selecting representative samples from the decision boundary - prototypes - and applying post-model explanation algorithms. We evaluate the effectiveness of our approach through 2D visualizations and GradientSHAP analysis. Our experiments demonstrate the potential of the proposed method, revealing distinct and compact clusters and diverse prototypes that capture essential features that lead to low-confidence decisions. By offering a more aggregated view of deep classifiers' decision boundaries, our work contributes to the responsible development and deployment of reliable machine learning systems.

On-the-fly Data Augmentation for Forecasting with Deep Learning

Apr 25, 2024

Abstract:Deep learning approaches are increasingly used to tackle forecasting tasks. A key factor in the successful application of these methods is a large enough training sample size, which is not always available. In these scenarios, synthetic data generation techniques are usually applied to augment the dataset. Data augmentation is typically applied before fitting a model. However, these approaches create a single augmented dataset, potentially limiting their effectiveness. This work introduces OnDAT (On-the-fly Data Augmentation for Time series) to address this issue by applying data augmentation during training and validation. Contrary to traditional methods that create a single, static augmented dataset beforehand, OnDAT performs augmentation on-the-fly. By generating a new augmented dataset on each iteration, the model is exposed to a constantly changing augmented data variations. We hypothesize this process enables a better exploration of the data space, which reduces the potential for overfitting and improves forecasting performance. We validated the proposed approach using a state-of-the-art deep learning forecasting method and 8 benchmark datasets containing a total of 75797 time series. The experiments suggest that OnDAT leads to better forecasting performance than a strategy that applies data augmentation before training as well as a strategy that does not involve data augmentation. The method and experiments are publicly available.

tsMorph: generation of semi-synthetic time series to understand algorithm performance

Dec 03, 2023

Abstract:Time series forecasting is a subject of significant scientific and industrial importance. Despite the widespread utilization of forecasting methods, there is a dearth of research aimed at comprehending the conditions under which these methods yield favorable or unfavorable performances. Empirical studies, although common, encounter challenges due to the limited availability of datasets, impeding the extraction of reliable insights. To address this, we present tsMorph, a straightforward approach for generating semi-synthetic time series through dataset morphing. tsMorph operates by creating a sequence of datasets derived from two original datasets. These newly generated datasets exhibit a progressive departure from the characteristics of one dataset and a convergence toward the attributes of the other. This method provides a valuable alternative for obtaining substantial datasets. In this paper, we demonstrate the utility of tsMorph by assessing the performance of the Long Short-Term Memory Network forecasting algorithm. The time series under examination are sourced from the NN5 Competition. The findings reveal compelling insights. Notably, the performance of the Long Short-Term Memory Network improves proportionally with the frequency of the time series. These experiments affirm that tsMorph serves as an effective tool for gaining an understanding of forecasting algorithm behaviors, offering a pathway to overcome the limitations posed by empirical studies and enabling more extensive and reliable experimentation.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge