Mir Feroskhan

Learning Adaptive Cross-Embodiment Visuomotor Policy with Contrastive Prompt Orchestration

Feb 01, 2026

Abstract: Learning adaptive visuomotor policies for embodied agents remains a formidable challenge, particularly when facing cross-embodiment variations such as diverse sensor configurations and dynamic properties. Conventional learning approaches often struggle to separate task-relevant features from domain-specific variations (e.g., lighting, field-of-view, and rotation), leading to poor sample efficiency and catastrophic failure in unseen environments. To bridge this gap, we propose ContrAstive Prompt Orchestration (CAPO), a novel approach for learning visuomotor policies that integrates contrastive prompt learning and adaptive prompt orchestration. For prompt learning, we devise a hybrid contrastive learning strategy that integrates visual, temporal action, and text objectives to establish a pool of learnable prompts, where each prompt induces a visual representation encapsulating fine-grained domain factors. Based on these learned prompts, we introduce an adaptive prompt orchestration mechanism that dynamically aggregates these prompts conditioned on current observations. This enables the agent to adaptively construct optimal state representations by identifying dominant domain factors instantaneously. Consequently, the policy optimization is effectively shielded from irrelevant interference, preventing the common issue of overfitting to source domains. Extensive experiments demonstrate that CAPO significantly outperforms state-of-the-art baselines in sample efficiency and asymptotic performance. Crucially, it exhibits superior zero-shot adaptation across unseen target domains characterized by drastic environmental (e.g., illumination) and physical shifts (e.g., field-of-view and rotation), validating its effectiveness as a viable solution for cross-embodiment visuomotor policy adaptation.

Grounded Vision-Language Navigation for UAVs with Open-Vocabulary Goal Understanding

Jun 12, 2025

Abstract: Vision-and-language navigation (VLN) is a long-standing challenge in autonomous robotics, aiming to empower agents with the ability to follow human instructions while navigating complex environments. Two key bottlenecks remain in this field: generalization to out-of-distribution environments and reliance on fixed discrete action spaces. To address these challenges, we propose Vision-Language Fly (VLFly), a framework tailored for Unmanned Aerial Vehicles (UAVs) to execute language-guided flight. Without requiring localization or active ranging sensors, VLFly outputs continuous velocity commands purely from egocentric observations captured by an onboard monocular camera. VLFly integrates three modules: an instruction encoder based on a large language model (LLM) that reformulates high-level language into structured prompts, a goal retriever powered by a vision-language model (VLM) that matches these prompts to goal images via vision-language similarity, and a waypoint planner that generates executable trajectories for real-time UAV control. VLFly is evaluated across diverse simulation environments without additional fine-tuning and consistently outperforms all baselines. Moreover, real-world VLN tasks in indoor and outdoor environments under direct and indirect instructions demonstrate that VLFly achieves robust open-vocabulary goal understanding and generalized navigation capabilities, even in the presence of abstract language input.
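The goal-retrieval step described in this abstract, matching a prompt to goal images by vision-language similarity, can be illustrated with a minimal sketch. It assumes the prompt and each candidate goal image have already been embedded into a shared vector space; the function names, the toy embeddings, and the choice of cosine similarity are illustrative assumptions, not details taken from VLFly.

```python
import math

def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve_goal(prompt_embedding, goal_embeddings):
    """Return (name, score) of the goal image most similar to the prompt.

    goal_embeddings is a hypothetical dict mapping a goal-image name to its
    embedding; in practice both embeddings would come from a vision-language model.
    """
    scores = {
        name: cosine_similarity(prompt_embedding, emb)
        for name, emb in goal_embeddings.items()
    }
    best = max(scores, key=scores.get)
    return best, scores[best]

# Toy 2-D embeddings standing in for real model outputs (assumption).
goals = {"chair": [0.9, 0.1], "door": [0.0, 1.0]}
best_goal, best_score = retrieve_goal([1.0, 0.0], goals)
```

The retrieved goal would then be handed to a downstream planner; how VLFly does this is not specified in the abstract.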

FM-Planner: Foundation Model Guided Path Planning for Autonomous Drone Navigation

May 27, 2025

Abstract: Path planning is a critical component in autonomous drone operations, enabling safe and efficient navigation through complex environments. Recent advances in foundation models, particularly large language models (LLMs) and vision-language models (VLMs), have opened new opportunities for enhanced perception and intelligent decision-making in robotics. However, their practical applicability and effectiveness in global path planning remain relatively unexplored. This paper proposes foundation model-guided path planners (FM-Planner) and presents a comprehensive benchmarking study and practical validation for drone path planning. Specifically, we first systematically evaluate eight representative LLM and VLM approaches using standardized simulation scenarios. To enable effective real-time navigation, we then design an integrated LLM-Vision planner that combines semantic reasoning with visual perception. Furthermore, we deploy and validate the proposed path planner through real-world experiments under multiple configurations. Our findings provide valuable insights into the strengths, limitations, and feasibility of deploying foundation models in real-world drone applications and provide practical implementations for autonomous flight. Project site: https://github.com/NTU-ICG/FM-Planner.

SaViD: Spectravista Aesthetic Vision Integration for Robust and Discerning 3D Object Detection in Challenging Environments

Mar 26, 2025

Abstract: The fusion of LiDAR and camera sensors has demonstrated significant effectiveness in achieving accurate detection for short-range tasks in autonomous driving. However, this fusion approach can face challenges in long-range detection scenarios due to the disparity between sparse LiDAR data and high-resolution camera data. Moreover, sensor corruption introduces complexities that affect the ability to maintain robustness, despite the growing adoption of sensor fusion in this domain. We present SaViD, a novel framework comprising a three-stage fusion alignment mechanism designed to address long-range detection challenges in the presence of natural corruption. The SaViD framework consists of three key elements: the Global Memory Attention Network (GMAN), which enhances the extraction of image features by offering a deeper understanding of global patterns; the Attentional Sparse Memory Network (ASMN), which enhances the integration of LiDAR and image features; and the KNNnectivity Graph Fusion (KGF), which enables the entire fusion of spatial information. SaViD achieves superior performance on the long-range detection Argoverse-2 (AV2) dataset with a performance improvement of 9.87% in AP value and an improvement of 2.39% in mAPH for L2 difficulties on the Waymo Open dataset (WOD). Comprehensive experiments are carried out to showcase its robustness against 14 natural sensor corruptions. SaViD exhibits a robust performance improvement of 31.43% for AV2 and 16.13% for WOD in RCE value compared to other existing fusion-based methods while considering all the corruptions for both datasets. Our code is available at https://github.com/sanjay-810/SAVID.

Learning Resilient Formation Control of Drones with Graph Attention Network

Sep 03, 2024

Abstract: The rapid advancement of drone technology has significantly impacted various sectors, including search and rescue, environmental surveillance, and industrial inspection. Multidrone systems offer notable advantages such as enhanced efficiency, scalability, and redundancy over single-drone operations. Despite these benefits, ensuring resilient formation control in dynamic and adversarial environments, such as under communication loss or cyberattacks, remains a significant challenge. Classical approaches to resilient formation control, while effective in certain scenarios, often struggle with complex modeling and the curse of dimensionality, particularly as the number of agents increases. This paper proposes a novel, learning-based formation control for enhancing the adaptability and resilience of multidrone formations using graph attention networks (GATs). By leveraging GAT's dynamic capabilities to extract internode relationships based on the attention mechanism, this GAT-based formation controller significantly improves the robustness of drone formations against various threats, such as Denial of Service (DoS) attacks. Our approach not only improves formation performance in normal conditions but also ensures the resilience of multidrone systems in variable and adversarial environments. Extensive simulation results demonstrate the superior performance of our method over baseline formation controllers. Furthermore, the physical experiments validate the effectiveness of the trained control policy in real-world flights.

Clustering-based Learning for UAV Tracking and Pose Estimation

May 27, 2024

Abstract: UAV tracking and pose estimation play an important role in various UAV-related missions, such as formation control and anti-UAV measures. Accurately detecting and tracking UAVs in a 3D space remains a particularly challenging problem, as it requires extracting sparse features of micro UAVs from different flight environments and continuously matching correspondences, especially during agile flight. Generally, cameras and LiDARs are the two main types of sensors used to capture UAV trajectories in flight. However, both sensors have limitations in UAV classification and pose estimation. This technical report briefly introduces the method proposed by our team "NTU-ICG" for the CVPR 2024 UG2+ Challenge Track 5. This work develops a clustering-based learning detection approach, CL-Det, for UAV tracking and pose estimation using two types of LiDARs, namely Livox Avia and LiDAR 360. We combine the information from the two data sources to locate drones in 3D. We first align the timestamps of Livox Avia data and LiDAR 360 data and then separate the point cloud of objects of interest (OOIs) from the environment. The point cloud of OOIs is clustered using the DBSCAN method, with the midpoint of the largest cluster assumed to be the UAV position. Furthermore, we utilize historical estimations to fill in missing data. The proposed method shows competitive pose estimation performance and ranks 5th on the final leaderboard of the CVPR 2024 UG2+ Challenge.
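The clustering step in this abstract (DBSCAN over the object-of-interest points, largest cluster taken as the UAV, gaps filled from earlier estimates) can be sketched roughly as follows. This is not the CL-Det implementation: the minimal pure-Python DBSCAN, the `eps` and `min_samples` values, reading "midpoint" as the cluster centroid, and filling gaps by carrying the last valid estimate forward are all assumptions made for illustration.

```python
import math

def dbscan(points, eps, min_samples):
    """Minimal DBSCAN. Returns one label per point; -1 marks noise."""
    n = len(points)
    labels = [None] * n  # None means "not yet visited"

    def neighbors(i):
        # Indices of all points within eps of point i (including i itself).
        return [j for j in range(n) if math.dist(points[i], points[j]) <= eps]

    cluster = -1
    for i in range(n):
        if labels[i] is not None:
            continue
        seeds = neighbors(i)
        if len(seeds) < min_samples:
            labels[i] = -1  # provisional noise; may become a border point later
            continue
        cluster += 1
        labels[i] = cluster
        queue = [j for j in seeds if j != i]
        while queue:
            j = queue.pop()
            if labels[j] == -1:
                labels[j] = cluster  # noise reachable from a core point: border
            if labels[j] is not None:
                continue
            labels[j] = cluster
            reachable = neighbors(j)
            if len(reachable) >= min_samples:  # j is a core point: keep expanding
                queue.extend(reachable)
    return labels

def estimate_uav_position(points, eps=0.5, min_samples=3):
    """Centroid of the largest DBSCAN cluster, or None if every point is noise."""
    labels = dbscan(points, eps, min_samples)
    clustered = [label for label in labels if label != -1]
    if not clustered:
        return None
    largest = max(set(clustered), key=clustered.count)
    members = [p for p, label in zip(points, labels) if label == largest]
    return tuple(sum(axis) / len(members) for axis in zip(*members))

def fill_missing(estimates):
    """Replace None entries with the most recent valid estimate (assumed strategy)."""
    filled, last = [], None
    for estimate in estimates:
        if estimate is not None:
            last = estimate
        filled.append(last)
    return filled

# Toy frame: four nearby returns plus one distant outlier.
frame = [(0.0, 0.0, 0.0), (0.1, 0.0, 0.0), (0.0, 0.1, 0.0), (0.1, 0.1, 0.0), (5.0, 5.0, 5.0)]
position = estimate_uav_position(frame)
track = fill_missing([None, (1.0, 1.0, 1.0), None])
```

The neighbor search here is quadratic in the number of points, which is acceptable for a toy frame; a real point cloud would need a spatial index.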

AYDIV: Adaptable Yielding 3D Object Detection via Integrated Contextual Vision Transformer

Feb 12, 2024

Abstract: Combining LiDAR and camera data has shown potential in enhancing short-distance object detection in autonomous driving systems. Yet, the fusion encounters difficulties with extended distance detection due to the contrast between LiDAR's sparse data and the dense resolution of cameras. Besides, discrepancies in the two data representations further complicate fusion methods. We introduce AYDIV, a novel framework integrating a tri-phase alignment process specifically designed to enhance long-distance detection even amidst data discrepancies. AYDIV consists of the Global Contextual Fusion Alignment Transformer (GCFAT), which improves the extraction of camera features and provides a deeper understanding of large-scale patterns; the Sparse Fused Feature Attention (SFFA), which fine-tunes the fusion of LiDAR and camera details; and the Volumetric Grid Attention (VGA) for a comprehensive spatial data fusion. AYDIV's performance on the Waymo Open Dataset (WOD) with an improvement of 1.24% in mAPH value (L2 difficulty) and the Argoverse2 Dataset with a performance improvement of 7.40% in AP value demonstrates its efficacy in comparison to other existing fusion-based methods. Our code is publicly available at https://github.com/sanjay-810/AYDIV2

Vision-based Learning for Drones: A Survey

Dec 08, 2023

Abstract: Drones as advanced cyber-physical systems are undergoing a transformative shift with the advent of vision-based learning, a field that is rapidly gaining prominence due to its profound impact on drone autonomy and functionality. Unlike existing task-specific surveys, this review offers a comprehensive overview of vision-based learning in drones, emphasizing its pivotal role in enhancing their operational capabilities. We start by elucidating the fundamental principles of vision-based learning, highlighting how it significantly improves drones' visual perception and decision-making processes. We then categorize vision-based control methods into indirect, semi-direct, and end-to-end approaches from the perception-control perspective. We further explore various applications of vision-based drones with learning capabilities, ranging from single-agent systems to more complex multi-agent and heterogeneous system scenarios, and underscore the challenges and innovations characterizing each area. Finally, we explore open questions and potential solutions, paving the way for ongoing research and development in this dynamic and rapidly evolving field. With growing large language models (LLMs) and embodied intelligence, vision-based learning for drones provides a promising but challenging road towards artificial general intelligence (AGI) in the 3D physical world.

AMS-DRL: Learning Multi-Pursuit Evasion for Safe Targeted Navigation of Drones

Apr 07, 2023

Abstract: Safe navigation of drones in the presence of adversarial physical attacks from multiple pursuers is a challenging task. This paper proposes a novel approach, asynchronous multi-stage deep reinforcement learning (AMS-DRL), to train an adversarial neural network that can learn from the actions of multiple pursuers and adapt quickly to their behavior, enabling the drone to avoid attacks and reach its target. Our approach guarantees convergence by ensuring a Nash equilibrium among agents, based on game-theoretic analysis. We evaluate our method in extensive simulations and show that it outperforms baselines with higher navigation success rates. We also analyze how parameters such as the relative maximum speed affect navigation performance. Furthermore, we have conducted physical experiments and validated the effectiveness of the trained policies in real-time flights. A success rate heatmap is introduced to elucidate how spatial geometry influences navigation outcomes. Project website: https://github.com/NTU-UAVG/AMS-DRL-for-Pursuit-Evasion.

Collaborative Target Search with a Visual Drone Swarm: An Adaptive Curriculum Embedded Multi-stage Reinforcement Learning Approach

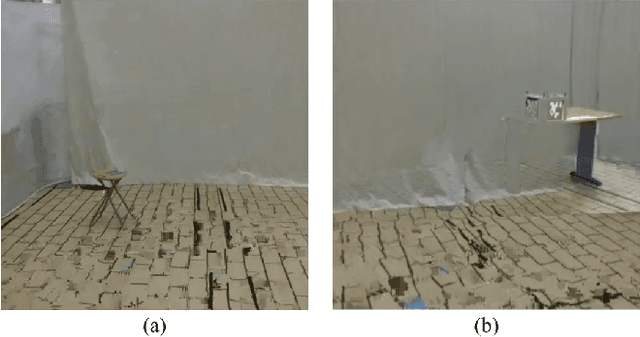

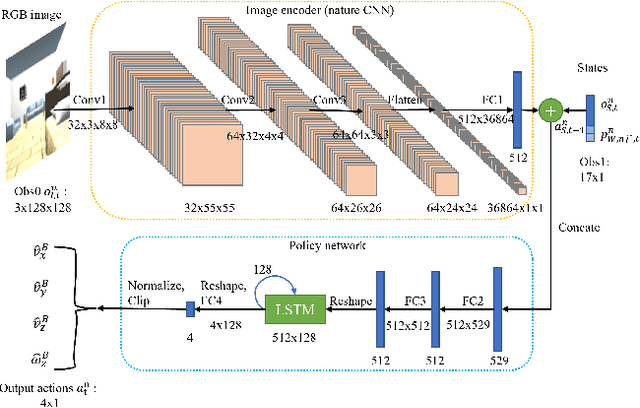

Apr 27, 2022

Abstract: Equipping drones with target search capabilities is desirable for applications in disaster management scenarios and smart warehouse delivery systems. Instead of deploying a single drone, an intelligent drone swarm that can collaborate with one another in maneuvering among obstacles will be more effective in accomplishing the target search in a shorter amount of time. In this work, we propose a data-efficient reinforcement learning-based approach, Adaptive Curriculum Embedded Multi-Stage Learning (ACEMSL), to address the challenges of carrying out a collaborative target search with a visual drone swarm, namely the 3D sparse reward space exploration and the collaborative behavior requirement. Specifically, we develop an adaptive embedded curriculum, where the task difficulty level can be adaptively adjusted according to the success rate achieved in training. Meanwhile, with multi-stage learning, ACEMSL allows data-efficient training and individual-team reward allocation for the collaborative drone swarm. The effectiveness and generalization capability of our approach are validated using simulations and actual flight tests.
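The adaptive curriculum idea in this abstract, adjusting task difficulty from the success rate achieved in training, can be sketched as a small scheduler. The class name, the sliding-window size, and the promotion and demotion thresholds below are hypothetical choices for illustration; the abstract does not state how ACEMSL sets them.

```python
from collections import deque

class AdaptiveCurriculum:
    """Raise or lower a difficulty level based on the recent episode success rate."""

    def __init__(self, max_level=5, window=20, promote_at=0.8, demote_at=0.3):
        # All four parameters are illustrative assumptions, not values from the paper.
        self.max_level = max_level
        self.promote_at = promote_at
        self.demote_at = demote_at
        self.level = 0
        self.results = deque(maxlen=window)  # most recent episode outcomes

    def record(self, success):
        """Log one episode outcome and return the (possibly updated) level."""
        self.results.append(bool(success))
        if len(self.results) == self.results.maxlen:
            rate = sum(self.results) / len(self.results)
            if rate >= self.promote_at and self.level < self.max_level:
                self.level += 1
                self.results.clear()  # start a fresh window at the new level
            elif rate <= self.demote_at and self.level > 0:
                self.level -= 1
                self.results.clear()
        return self.level

# Four straight successes promote the task; four straight failures demote it again.
curriculum = AdaptiveCurriculum(max_level=2, window=4)
after_successes = [curriculum.record(True) for _ in range(4)][-1]
after_failures = [curriculum.record(False) for _ in range(4)][-1]
```

Clearing the window after each level change avoids judging the new difficulty by outcomes collected at the old one, which is one reasonable design choice among several.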
