Mingran Sun

Multi-Modal Intelligent Channel Modeling: From Fine-tuned LLMs to Pre-trained Foundation Models

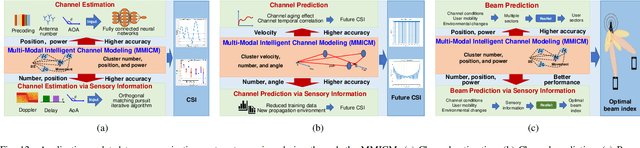

Mar 11, 2026

Abstract:To meet the evolving demands of sixth-generation (6G) wireless channel modeling, such as precise prediction, extension, and system participation capabilities, multi-modal intelligent channel modeling (MMICM) has been proposed based on Synesthesia of Machines (SoM), which explores the mapping relationship between multi-modal sensing in the physical environment and channel characteristics in electromagnetic space. Furthermore, to integrate heterogeneous sensing, reason across scales, and generalize to complex air-space-ground-sea communication environments, two new paradigms of MMICM are explored: fine-tuned large language models (LLMs) for Channel Modeling (LLM4CM) and the Wireless Channel Foundation Model (WiCo). LLM4CM leverages pre-trained LLMs on channel representations for cross-modal alignment and lightweight adaptation, enabling flexible channel modeling for 6G multi-band and multi-scenario communication systems. WiCo, which is pre-trained on physically valid channel realizations and their associated environmental and modal observations, embeds electromagnetic equations for physical interpretability and uses parameterized adapters for scalability. This article details the architectures and features of LLM4CM and WiCo, laying a foundation for artificial intelligence (AI)-native 6G wireless communication systems. Then, we conduct a comparative analysis of the two emerging paradigms, focusing on their distinct characteristics, relative advantages, inherent limitations, and performance attributes. Finally, we discuss future research directions.

WiCo-PG: Wireless Channel Foundation Model for Pathloss Map Generation via Synesthesia of Machines

Nov 19, 2025

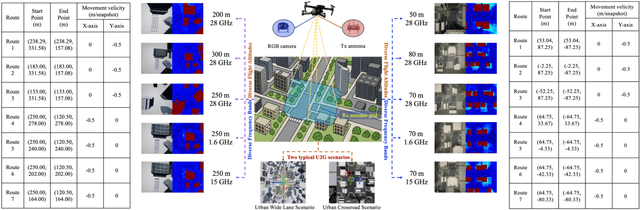

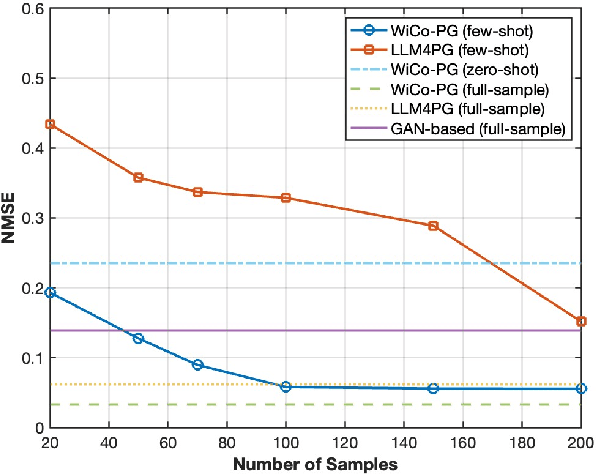

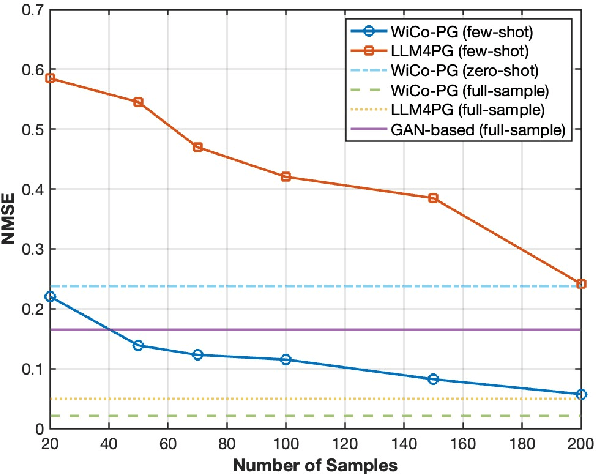

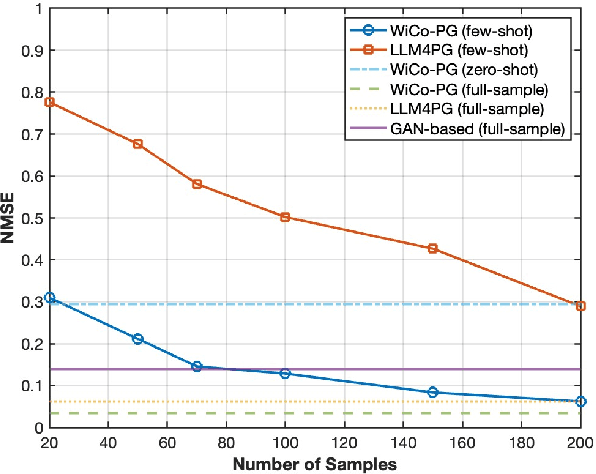

Abstract:A wireless channel foundation model for pathloss map generation (WiCo-PG) via Synesthesia of Machines (SoM) is developed for the first time. Considering sixth-generation (6G) uncrewed aerial vehicle (UAV)-to-ground (U2G) scenarios, a new multi-modal sensing-communication dataset is constructed for WiCo-PG pre-training, including multiple U2G scenarios, diverse flight altitudes, and diverse frequency bands. Based on the constructed dataset, the proposed WiCo-PG enables cross-modal pathloss map generation by leveraging RGB images from different scenarios and flight altitudes. In WiCo-PG, a novel network architecture based on dual vector quantized generative adversarial networks (VQGANs) and a Transformer is proposed for cross-modal pathloss map generation. Furthermore, a novel frequency-guided shared-routed mixture of experts (S-R MoE) architecture is designed for cross-modal pathloss map generation. Simulation results demonstrate that, through pre-training, the proposed WiCo-PG achieves improved pathloss map generation accuracy with a normalized mean squared error (NMSE) of 0.012, outperforming the large language model (LLM)-based scheme, i.e., LLM4PG, and the conventional deep learning-based scheme by more than 6.98 dB. Owing to its enhanced generality, the proposed WiCo-PG further outperforms LLM4PG by at least 1.37 dB using 2.7% of the samples in few-shot generalization.
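To make the reported figures easier to read, the following is a minimal sketch of how an NMSE value and a dB gap relate. It assumes the common definition of NMSE as error energy divided by reference energy, and it assumes the dB gaps quoted in the abstract are differences between NMSE values expressed in dB; the abstract confirms neither. The function names `nmse`, `to_db`, and `db_gap_to_ratio` are hypothetical and are not taken from the paper.

```python
import math


def nmse(predicted, reference):
    """Assumed definition: sum((p - r)^2) / sum(r^2) over all map pixels."""
    if len(predicted) != len(reference):
        raise ValueError("predicted and reference must have the same length")
    error_energy = sum((p - r) ** 2 for p, r in zip(predicted, reference))
    reference_energy = sum(r ** 2 for r in reference)
    if reference_energy == 0:
        raise ValueError("reference has zero energy; NMSE is undefined")
    return error_energy / reference_energy


def to_db(ratio):
    """Convert a linear power ratio (such as an NMSE value) to decibels."""
    return 10.0 * math.log10(ratio)


def db_gap_to_ratio(gap_db):
    """Convert a dB difference back to a linear ratio between two NMSE values."""
    return 10.0 ** (gap_db / 10.0)


if __name__ == "__main__":
    # NMSE value quoted in the abstract, expressed in dB.
    print(f"NMSE 0.012 = {to_db(0.012):.2f} dB")
    # Linear factor implied by the quoted 6.98 dB gap (under the assumption above).
    print(f"6.98 dB gap = factor {db_gap_to_ratio(6.98):.2f}")
```

Under these assumptions, an NMSE of 0.012 is about -19.2 dB, and a 6.98 dB gap corresponds to the baseline NMSE being roughly five times larger in linear terms.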

SynthSoM: A synthetic intelligent multi-modal sensing-communication dataset for Synesthesia of Machines (SoM)

Jan 13, 2025

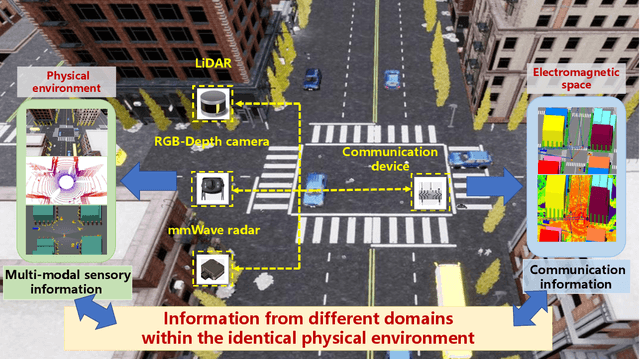

Abstract:Given the importance of datasets for sensing-communication integration research, a novel simulation platform for constructing communication and multi-modal sensory datasets is developed. The developed platform integrates three high-precision software tools, i.e., AirSim, WaveFarer, and Wireless InSite, and further achieves their in-depth integration and precise alignment. Based on the developed platform, a new synthetic intelligent multi-modal sensing-communication dataset for Synesthesia of Machines (SoM), named SynthSoM, is proposed. The SynthSoM dataset contains various air-ground multi-link cooperative scenarios with comprehensive conditions, including multiple weather conditions, times of the day, intelligent agent densities, frequency bands, and antenna types. The SynthSoM dataset encompasses multiple data modalities, including radio-frequency (RF) channel large-scale and small-scale fading data, RF millimeter wave (mmWave) radar sensory data, and non-RF sensory data, e.g., RGB images, depth maps, and light detection and ranging (LiDAR) point clouds. The quality of the SynthSoM dataset is validated through statistics-based qualitative inspection and machine learning (ML)-based evaluation metrics, using real-world measurements. The SynthSoM dataset is open-sourced and provides consistent data for cross-comparing SoM-related algorithms.

Multi-Modal Intelligent Channel Modeling: A New Modeling Paradigm via Synesthesia of Machines

Nov 06, 2024

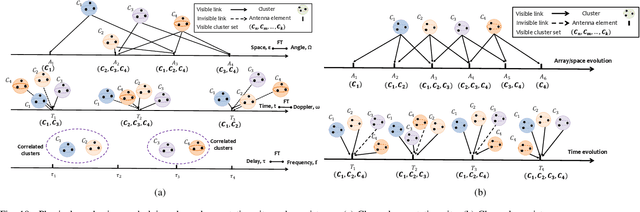

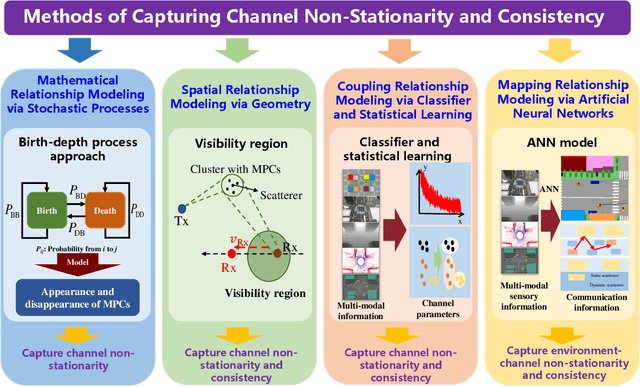

Abstract:In the future sixth-generation (6G) era, to support accurate localization sensing and efficient communication link establishment for intelligent agents, a comprehensive understanding of the surrounding environment and proper channel modeling are indispensable. The existing method, which solely exploits radio frequency (RF) communication information, struggles to achieve accurate channel modeling. Fortunately, multi-modal devices are deployed on intelligent agents to obtain environmental features, which could further assist in channel modeling. Currently, some research efforts have been devoted to utilizing multi-modal information to facilitate channel modeling, but a comprehensive review is still lacking. To fill this gap, we embark on an initial endeavor with the goal of reviewing multi-modal intelligent channel modeling (MMICM) via Synesthesia of Machines (SoM). Compared to channel modeling approaches that solely utilize RF communication information, the utilization of multi-modal information can provide a more in-depth understanding of the propagation environment around the transceiver, thus facilitating more accurate channel modeling. First, this paper introduces existing channel modeling approaches from the perspective of the channel modeling evolution. Then, we elaborate on and investigate recent advances in capturing typical channel characteristics and features, i.e., channel non-stationarity and consistency, by characterizing the mathematical, spatial, coupling, and mapping relationships. In addition, applications that can be supported by MMICM are summarized and analyzed. To corroborate the superiority of MMICM via SoM, we present simulation results and analysis. Finally, some open issues and potential directions for MMICM are outlined from the perspectives of measurements, modeling, and applications.

A LiDAR-Aided Channel Model for Vehicular Intelligent Sensing-Communication Integration

Mar 21, 2024

Abstract:In this paper, a novel channel modeling approach, named light detection and ranging (LiDAR)-aided geometry-based stochastic modeling (LA-GBSM), is developed. Based on the developed LA-GBSM approach, a new millimeter wave (mmWave) channel model for sixth-generation (6G) vehicular intelligent sensing-communication integration is proposed, which can support the design of intelligent transportation systems (ITSs). The proposed LA-GBSM is accurately parameterized under high, medium, and low vehicular traffic density (VTD) conditions via a sensing-communication simulation dataset with LiDAR point clouds and scatterer information for the first time. Specifically, by detecting dynamic vehicles and static buildings/trees through LiDAR point clouds via machine learning, scatterers are divided into static and dynamic scatterers. Furthermore, statistical distributions of parameters, e.g., distance, angle, number, and power, related to static and dynamic scatterers are quantified under high, medium, and low VTD conditions. To mimic channel non-stationarity and consistency, based on the quantified statistical distributions, a new visibility region (VR)-based algorithm that accounts for newly generated static/dynamic scatterers is developed. Key channel statistics are derived and simulated. By comparing simulation results and ray-tracing (RT)-based results, the utility of the proposed LA-GBSM is verified.

M$^3$SC: A Generic Dataset for Mixed Multi-Modal Sensing and Communication Integration

Jun 25, 2023

Abstract:The sixth-generation (6G) mobile communication system is witnessing a new paradigm shift, i.e., the integrated sensing-communication system. A comprehensive dataset is a prerequisite for 6G integrated sensing-communication research. This paper develops a novel simulation dataset, named M3SC, for mixed multi-modal (MMM) sensing-communication integration, and the generation framework of the M3SC dataset is further given. To obtain multi-modal sensory data in physical space and communication data in electromagnetic space, we utilize AirSim and WaveFarer to collect multi-modal sensory data and exploit Wireless InSite to collect communication data. Furthermore, the in-depth integration and precise alignment of AirSim, WaveFarer, and Wireless InSite are achieved. The M3SC dataset covers various weather conditions, various frequency bands, and different times of the day. Currently, the M3SC dataset contains 1500 snapshots, including 80 RGB images, 160 depth maps, 80 LiDAR point clouds, 256 sets of mmWave waveforms with 8 radar point clouds, and 72 channel impulse response (CIR) matrices per snapshot, thus totaling 120,000 RGB images, 240,000 depth maps, 120,000 LiDAR point clouds, 384,000 sets of mmWave waveforms with 12,000 radar point clouds, and 108,000 CIR matrices. The data processing results present the multi-modal sensory information and the statistical properties of the communication channel. Finally, the MMM sensing-communication applications that can be supported by the M3SC dataset are discussed.
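The dataset totals quoted above follow from multiplying each per-snapshot count by the 1500 snapshots. The short sketch below reproduces that arithmetic; the dictionary keys are illustrative labels chosen here and are not file or field names from the M3SC dataset.

```python
# Number of snapshots stated in the abstract.
SNAPSHOTS = 1500

# Per-snapshot counts stated in the abstract (keys are illustrative labels).
PER_SNAPSHOT = {
    "rgb_images": 80,
    "depth_maps": 160,
    "lidar_point_clouds": 80,
    "mmwave_waveform_sets": 256,
    "radar_point_clouds": 8,
    "cir_matrices": 72,
}

# Dataset-wide totals: per-snapshot count times number of snapshots.
totals = {name: count * SNAPSHOTS for name, count in PER_SNAPSHOT.items()}

if __name__ == "__main__":
    for name, total in totals.items():
        print(f"{name}: {total:,}")
```

The computed totals match the figures in the abstract: 120,000 RGB images, 240,000 depth maps, 120,000 LiDAR point clouds, 384,000 sets of mmWave waveforms, 12,000 radar point clouds, and 108,000 CIR matrices.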
