Mikhail Terekhov

Adaptive Attacks on Trusted Monitors Subvert AI Control Protocols

Oct 10, 2025

Abstract:AI control protocols serve as a defense mechanism to stop untrusted LLM agents from causing harm in autonomous settings. Prior work treats this as a security problem, stress testing with exploits that use the deployment context to subtly complete harmful side tasks, such as backdoor insertion. In practice, most AI control protocols are fundamentally based on LLM monitors, which can become a central point of failure. We study adaptive attacks by an untrusted model that knows the protocol and the monitor model, which is plausible if the untrusted model was trained with a later knowledge cutoff or can search for this information autonomously. We instantiate a simple adaptive attack vector by which the attacker embeds publicly known or zero-shot prompt injections in the model outputs. Using this tactic, frontier models consistently evade diverse monitors and complete malicious tasks on two main AI control benchmarks. The attack works universally against current protocols that rely on a monitor. Furthermore, the recent Defer-to-Resample protocol even backfires, as its resampling amplifies the prompt injection and effectively reframes it as a best-of-$n$ attack. In general, adaptive attacks on monitor models represent a major blind spot in current control protocols and should become a standard component of evaluations for future AI control mechanisms.

Control Tax: The Price of Keeping AI in Check

Jun 05, 2025

Abstract:The rapid integration of agentic AI into high-stakes real-world applications requires robust oversight mechanisms. The emerging field of AI Control (AIC) aims to provide such an oversight mechanism, but practical adoption depends heavily on implementation overhead. To study this problem better, we introduce the notion of Control Tax -- the operational and financial cost of integrating control measures into AI pipelines. Our work makes three key contributions to the field of AIC: (1) we introduce a theoretical framework that quantifies the Control Tax and maps classifier performance to safety assurances; (2) we conduct comprehensive evaluations of state-of-the-art language models in adversarial settings, where attacker models insert subtle backdoors into code while monitoring models attempt to detect these vulnerabilities; and (3) we provide empirical financial cost estimates for control protocols and develop optimized monitoring strategies that balance safety and cost-effectiveness while accounting for practical constraints like auditing budgets. Our framework enables practitioners to make informed decisions by systematically connecting safety guarantees with their costs, advancing AIC through principled economic feasibility assessment across different deployment contexts.

Regret-Optimized Portfolio Enhancement through Deep Reinforcement Learning and Future Looking Rewards

Feb 04, 2025

Abstract:This paper introduces a novel agent-based approach for enhancing existing portfolio strategies using Proximal Policy Optimization (PPO). Rather than focusing solely on traditional portfolio construction, our approach aims to improve an already high-performing strategy through dynamic rebalancing driven by PPO and Oracle agents. Our target is to enhance the traditional 60/40 benchmark (60% stocks, 40% bonds) by employing the Regret-based Sharpe reward function. To address the impact of transaction fee frictions and prevent signal loss, we develop a transaction cost scheduler. We introduce a future-looking reward function and employ synthetic data training through a circular block bootstrap method to facilitate the learning of generalizable allocation strategies. We focus on two key evaluation measures: return and maximum drawdown. Given the high stochasticity of financial markets, we train 20 independent agents each period and evaluate their average performance against the benchmark. Our method not only enhances the performance of the existing portfolio strategy through strategic rebalancing but also demonstrates strong results compared to other baselines.
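The circular block bootstrap mentioned in the abstract above can be illustrated with a minimal sketch. It is not the authors' implementation: the function name `circular_block_bootstrap`, the fixed block length, and the toy input are assumptions made for illustration only. The idea is to resample a time series in contiguous blocks, wrapping around the end of the series, so that short-range temporal dependence is preserved in the synthetic data.

```python
import random


def circular_block_bootstrap(series, block_len, rng=None):
    """Return a resampled series of the same length as `series`.

    Blocks of `block_len` consecutive points are drawn with a uniformly
    random start index; indices wrap around the end of the series
    (hence "circular"). Hypothetical helper, for illustration only.
    """
    if block_len < 1:
        raise ValueError("block_len must be at least 1")
    rng = rng or random.Random()
    n = len(series)
    out = []
    while len(out) < n:
        start = rng.randrange(n)
        # Take block_len consecutive points, wrapping modulo n.
        out.extend(series[(start + k) % n] for k in range(block_len))
    # The last block may overshoot; trim to the original length.
    return out[:n]


# Toy usage: resample ten time steps in blocks of three.
sample = circular_block_bootstrap(list(range(10)), block_len=3, rng=random.Random(0))
```

Each call produces one synthetic path; repeating it yields many training paths from a single historical series.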

Contextual Bandit Optimization with Pre-Trained Neural Networks

Jan 09, 2025

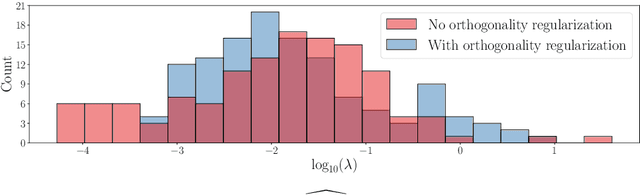

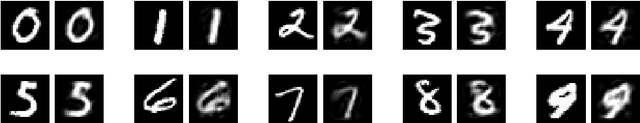

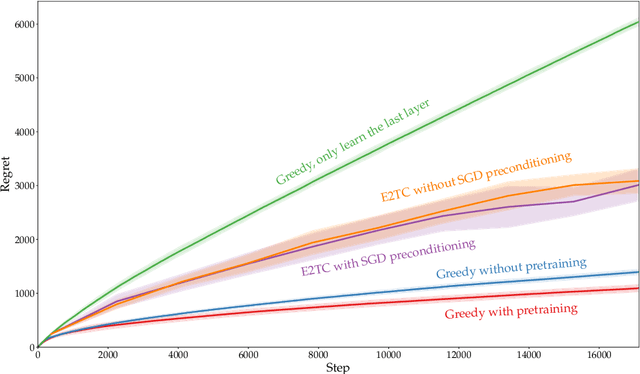

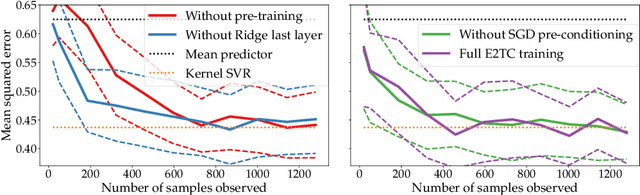

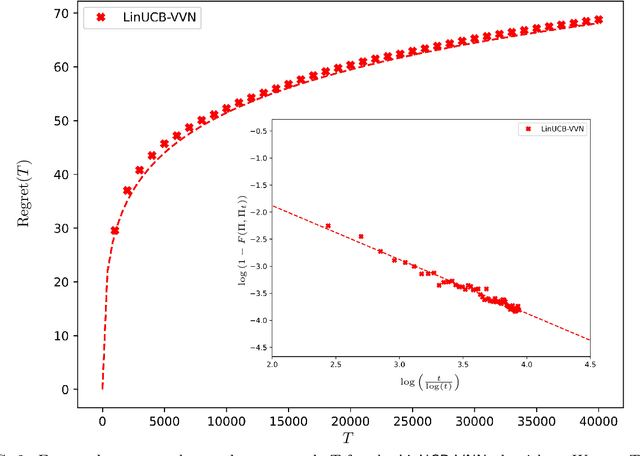

Abstract:Bandit optimization is a difficult problem, especially if the reward model is high-dimensional. When rewards are modeled by neural networks, sublinear regret has only been shown under strong assumptions, usually when the network is extremely wide. In this thesis, we investigate how pre-training can help us in the regime of smaller models. We consider a stochastic contextual bandit with the rewards modeled by a multi-layer neural network. The last layer is a linear predictor, and the layers before it are a black box neural architecture, which we call a representation network. We model pre-training as an initial guess of the weights of the representation network provided to the learner. To leverage the pre-trained weights, we introduce a novel algorithm we call Explore Twice then Commit (E2TC). During its two stages of exploration, the algorithm first estimates the last layer's weights using Ridge regression, and then runs Stochastic Gradient Descent jointly on all the weights. For a locally convex loss function, we provide conditions on the pre-trained weights under which the algorithm can learn efficiently. Under these conditions, we show sublinear regret of E2TC when the dimension of the last layer and number of actions $K$ are much smaller than the horizon $T$. In the weak training regime, when only the last layer is learned, the problem reduces to a misspecified linear bandit. We introduce a measure of misspecification $\epsilon_0$ for this bandit and use it to provide bounds $O(\epsilon_0\sqrt{d}KT+(KT)^{4 /5})$ or $\tilde{O}(\epsilon_0\sqrt{d}KT+d^{1 /3}(KT)^{2 /3})$ on the regret, depending on regularization strength. The first of these bounds has a dimension-independent sublinear term, made possible by the stochasticity of contexts. We also run experiments to evaluate the regret of E2TC and sample complexity of its exploration in practice.

Unpacking SDXL Turbo: Interpreting Text-to-Image Models with Sparse Autoencoders

Oct 28, 2024

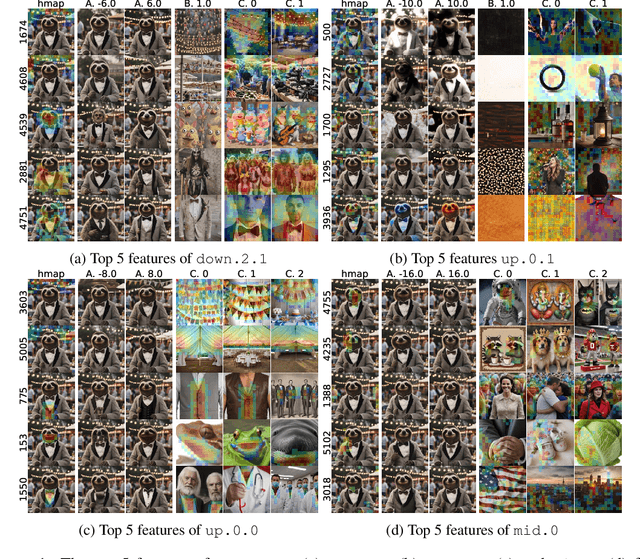

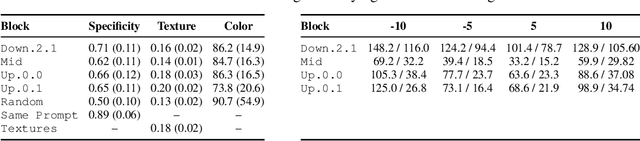

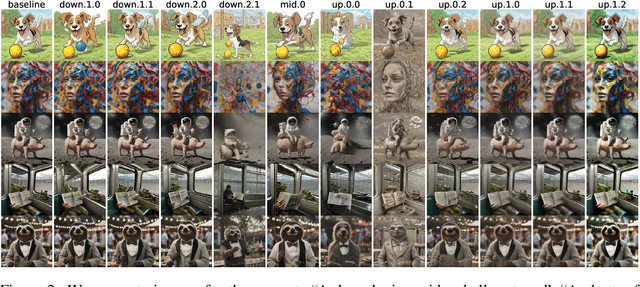

Abstract:Sparse autoencoders (SAEs) have become a core ingredient in the reverse engineering of large language models (LLMs). For LLMs, they have been shown to decompose intermediate representations that often are not interpretable directly into sparse sums of interpretable features, facilitating better control and subsequent analysis. However, similar analyses and approaches have been lacking for text-to-image models. We investigate the possibility of using SAEs to learn interpretable features for few-step text-to-image diffusion models, such as SDXL Turbo. To this end, we train SAEs on the updates performed by transformer blocks within SDXL Turbo's denoising U-net. We find that their learned features are interpretable, causally influence the generation process, and reveal specialization among the blocks. In particular, we find one block that deals mainly with image composition, one that is mainly responsible for adding local details, and one for color, illumination, and style. Therefore, our work is an important first step towards better understanding the internals of generative text-to-image models like SDXL Turbo and showcases the potential of features learned by SAEs for the visual domain. Code is available at https://github.com/surkovv/sdxl-unbox

In Search for Architectures and Loss Functions in Multi-Objective Reinforcement Learning

Jul 23, 2024

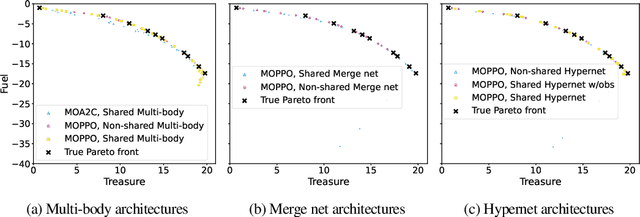

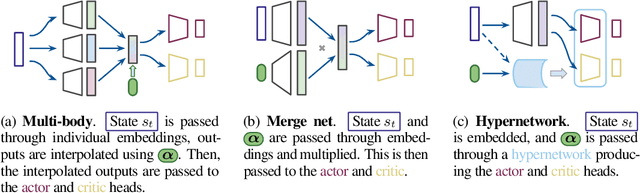

Abstract:Multi-objective reinforcement learning (MORL) is essential for addressing the intricacies of real-world RL problems, which often require trade-offs between multiple utility functions. However, MORL is challenging due to unstable learning dynamics with deep learning-based function approximators. The research path most taken has been to explore different value-based loss functions for MORL to overcome this issue. Our work empirically explores model-free policy learning loss functions and the impact of different architectural choices. We introduce two different approaches: Multi-objective Proximal Policy Optimization (MOPPO), which extends PPO to MORL, and Multi-objective Advantage Actor Critic (MOA2C), which acts as a simple baseline in our ablations. Our proposed approach is straightforward to implement, requiring only small modifications at the level of the function approximator. We conduct comprehensive evaluations on the MORL Deep Sea Treasure, Minecart, and Reacher environments and show that MOPPO effectively captures the Pareto front. Our extensive ablation studies and empirical analyses reveal the impact of different architectural choices, underscoring the robustness and versatility of MOPPO compared to popular MORL approaches like Pareto Conditioned Networks (PCN) and Envelope Q-learning in terms of MORL metrics, including hypervolume and expected utility.

Learning pure quantum states (almost) without regret

Jun 26, 2024

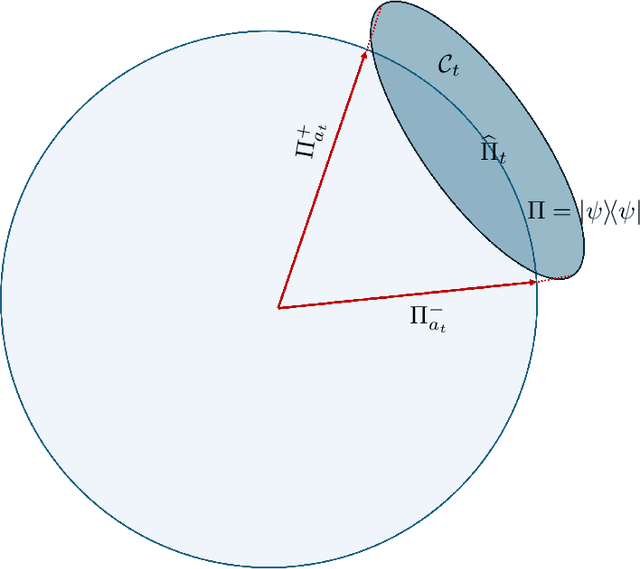

Abstract:We initiate the study of quantum state tomography with minimal regret. A learner has sequential oracle access to an unknown pure quantum state, and in each round selects a pure probe state. Regret is incurred if the unknown state is measured orthogonal to this probe, and the learner's goal is to minimise the expected cumulative regret over $T$ rounds. The challenge is to find a balance between the most informative measurements and measurements incurring minimal regret. We show that the cumulative regret scales as $\Theta(\operatorname{polylog} T)$ using a new tomography algorithm based on a median of means least squares estimator. This algorithm employs measurements biased towards the unknown state and produces online estimates that are optimal (up to logarithmic terms) in the number of observed samples.
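The median-of-means idea that the abstract above builds on can be sketched for the simplest scalar case. This is not the paper's least squares tomography estimator, only the generic robust-mean building block: the function name `median_of_means` and the toy data are assumptions for illustration. The samples are split into equal groups, each group is averaged, and the median of the group means is returned, which limits the influence of a few extreme observations.

```python
import statistics


def median_of_means(samples, num_groups):
    """Scalar median-of-means estimate of the mean of `samples`.

    The samples are cut into `num_groups` consecutive groups of equal
    size (leftover samples at the end are ignored), and the median of
    the per-group means is returned. Hypothetical helper for
    illustration; callers with ordered data should shuffle first.
    """
    if not 1 <= num_groups <= len(samples):
        raise ValueError("num_groups must be between 1 and len(samples)")
    size = len(samples) // num_groups
    means = [
        statistics.fmean(samples[i * size:(i + 1) * size])
        for i in range(num_groups)
    ]
    return statistics.median(means)


# Toy usage: a single outlier corrupts only one of the three group means.
estimate = median_of_means([1, 1, 1, 1, 1, 1000], num_groups=3)
```

In this toy input the plain average is pulled far from 1 by the outlier, while the median of the three group means is not.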
