Michel Hayoz

Online 3D reconstruction and dense tracking in endoscopic videos

Sep 09, 2024

Abstract: 3D scene reconstruction from stereo endoscopic video data is crucial for advancing surgical interventions. In this work, we present a framework for online, dense 3D scene reconstruction and tracking, aimed at enhancing surgical scene understanding and assisting interventions. Our method dynamically extends a canonical scene representation using Gaussian splatting, while modeling tissue deformations through a sparse set of control points. We introduce an efficient online fitting algorithm that optimizes the scene parameters, enabling consistent tracking and accurate reconstruction. Through experiments on the StereoMIS dataset, we demonstrate the effectiveness of our approach, which outperforms state-of-the-art tracking methods and achieves performance comparable to offline reconstruction techniques. Our work enables various downstream applications, thus contributing to advancing the capabilities of surgical assistance systems.

StofNet: Super-resolution Time of Flight Network

Aug 23, 2023

Abstract: Time of Flight (ToF) is a prevalent depth sensing technology in the fields of robotics, medical imaging, and non-destructive testing. Yet, ToF sensing faces challenges from complex ambient conditions, which make inverse modelling from the sparse temporal information intractable. This paper highlights the potential of modern super-resolution techniques to learn varying surroundings for reliable and accurate ToF detection. Unlike existing models, we tailor an architecture for sub-sample precise semi-global signal localization by combining super-resolution with an efficient residual contraction block to balance fine signal details against large-scale contextual information. We consolidate research on ToF by conducting a benchmark comparison against six state-of-the-art methods, for which we employ two publicly available datasets. This includes the release of our SToF-Chirp dataset captured by an airborne ultrasound transducer. Results showcase the superior performance of our proposed StofNet in terms of precision, reliability and model complexity. Our code is available at https://github.com/hahnec/stofnet.
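To illustrate what "sub-sample precise" localization means, the sketch below shows a classical baseline, not StofNet itself: it refines the discrete peak of a sampled echo by fitting a parabola through the peak and its two neighbours, then converts the arrival time into a distance. The function names, the sampling rate, and the speed of sound in air (343 m/s) are illustrative assumptions and do not come from the paper.

```python
def subsample_peak(signal):
    """Return the peak position in fractional samples (parabolic interpolation)."""
    # Index of the largest sample (first occurrence if there are ties).
    k = max(range(len(signal)), key=lambda i: signal[i])
    # At the borders there are not enough neighbours to fit a parabola.
    if k == 0 or k == len(signal) - 1:
        return float(k)
    y0, y1, y2 = signal[k - 1], signal[k], signal[k + 1]
    denom = y0 - 2.0 * y1 + y2
    if denom == 0:
        return float(k)  # flat neighbourhood, no refinement possible
    # Vertex of the parabola through the three points, relative to k.
    return k + 0.5 * (y0 - y2) / denom


def tof_distance(peak_index, sample_rate_hz, speed_m_s=343.0):
    """Convert a round-trip arrival time into a one-way distance in metres.

    speed_m_s defaults to an assumed speed of sound in air.
    """
    round_trip_s = peak_index / sample_rate_hz
    return speed_m_s * round_trip_s / 2.0


# Samples of a parabola whose true maximum lies at x = 2.3.
echo = [-(x - 2.3) ** 2 for x in range(5)]
peak = subsample_peak(echo)
```

A learned model such as the one described in the abstract replaces this hand-crafted refinement; the sketch only shows the kind of quantity being estimated.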

Learning How To Robustly Estimate Camera Pose in Endoscopic Videos

Apr 17, 2023

Abstract: Purpose: Surgical scene understanding plays a critical role in the technology stack of tomorrow's intervention-assisting systems in endoscopic surgeries. For this, tracking the endoscope pose is a key component, but it remains challenging due to illumination conditions, deforming tissues and the breathing motion of organs. Method: We propose a solution for stereo endoscopes that estimates depth and optical flow to minimize two geometric losses for camera pose estimation. Most importantly, we introduce two learned adaptive per-pixel weight mappings that balance contributions according to the input image content. To do so, we train a Deep Declarative Network to take advantage of the expressiveness of deep learning and the robustness of a novel geometry-based optimization approach. We validate our approach on the publicly available SCARED dataset and introduce a new in-vivo dataset, StereoMIS, which includes a wider spectrum of typically observed surgical settings. Results: Our method outperforms state-of-the-art methods on average and, more importantly, in difficult scenarios where tissue deformations and breathing motion are visible. We observed that our proposed weight mappings attenuate the contribution of pixels in ambiguous regions of the images, such as deforming tissues. Conclusion: We demonstrate the effectiveness of our solution to robustly estimate the camera pose in challenging endoscopic surgical scenes. Our contributions can be used to improve related tasks like simultaneous localization and mapping (SLAM) or 3D reconstruction, therefore advancing surgical scene understanding in minimally invasive surgery.
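The role of per-pixel weights in such an objective can be sketched as follows. This is a minimal illustration under stated assumptions, not the paper's formulation: the residuals are taken as given, the normalization by the weight sum is an assumption, and the names `weighted_loss` and `combined_loss` are hypothetical.

```python
def weighted_loss(residuals, weights):
    """Weighted mean of squared per-pixel residuals.

    residuals and weights are flat lists of equal length; a weight near 0
    suppresses a pixel, a weight near 1 keeps its full contribution.
    """
    total_weight = sum(weights)
    if total_weight == 0:
        return 0.0  # no pixel is trusted, so nothing contributes
    weighted_sq = sum(w * r * r for r, w in zip(residuals, weights))
    return weighted_sq / total_weight


def combined_loss(res_a, w_a, res_b, w_b):
    """Sum of two weighted geometric losses, each with its own weight map."""
    return weighted_loss(res_a, w_a) + weighted_loss(res_b, w_b)


# The third pixel has a large residual, e.g. because it lies on deforming tissue.
residuals = [1.0, 1.0, 10.0]
uniform = weighted_loss(residuals, [1.0, 1.0, 1.0])       # outlier dominates
down_weighted = weighted_loss(residuals, [1.0, 1.0, 0.0])  # outlier ignored
```

In the approach described in the abstract, the weights are predicted by a network from the image content rather than set by hand as in this example.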

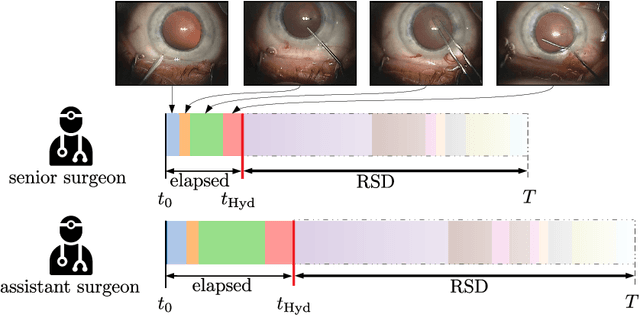

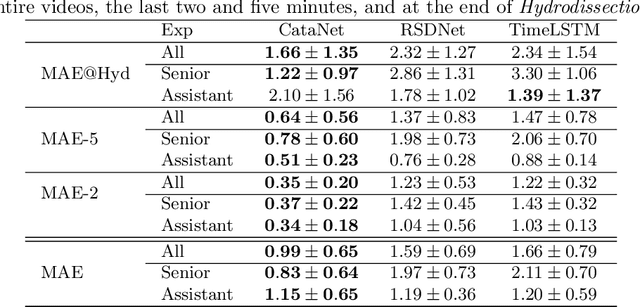

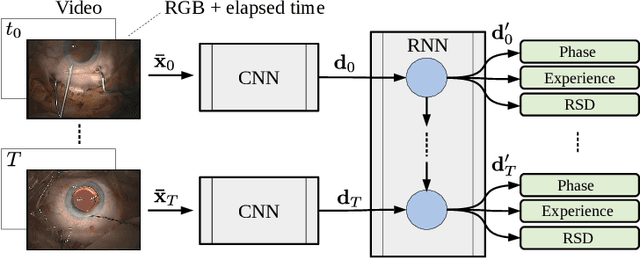

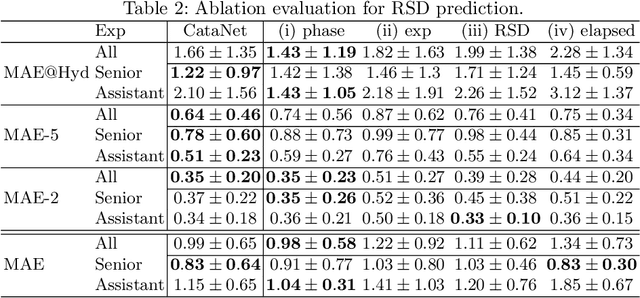

CataNet: Predicting remaining cataract surgery duration

Jun 21, 2021

Abstract: Cataract surgery is a sight-saving surgery that is performed over 10 million times each year around the world. With such a large demand, the ability to organize surgical wards and operating rooms efficiently is critical to delivering this therapy in routine clinical care. In this context, estimating the remaining surgical duration (RSD) during procedures is one way to help streamline patient throughput and workflows. To this end, we propose CataNet, a method for cataract surgeries that predicts in real time the RSD jointly with two influential elements: the surgeon's experience and the current phase of the surgery. We compare CataNet to state-of-the-art RSD estimation methods, showing that it outperforms them even when phase and experience are not considered. We investigate this improvement and show that a significant contributor is the way we integrate the elapsed time into CataNet's feature extractor.
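For readers unfamiliar with the task, the relationship between elapsed time and RSD can be sketched as below. The code only computes per-frame ground-truth values for a video of known length; it does not reproduce how CataNet feeds the elapsed time into its feature extractor. The function name `rsd_targets`, the frame rate, and the choice of minutes as the unit are illustrative assumptions.

```python
def rsd_targets(total_duration_s, fps=1.0):
    """Return (elapsed_min, remaining_min) for every sampled frame of a video.

    total_duration_s: full length of the surgery video in seconds.
    fps: sampling rate of the frames (assumed, not taken from the paper).
    """
    n_frames = int(total_duration_s * fps)
    targets = []
    for i in range(n_frames):
        elapsed_s = i / fps
        remaining_s = total_duration_s - elapsed_s  # RSD at this frame
        targets.append((elapsed_s / 60.0, remaining_s / 60.0))
    return targets


# A two-minute video sampled at one frame per second.
targets = rsd_targets(120, fps=1.0)
first_elapsed, first_remaining = targets[0]
mid_elapsed, mid_remaining = targets[60]
```

At inference time the total duration is unknown, which is why the remaining duration has to be predicted from the video and the elapsed time alone.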
